Invented by Jaime Scott, Avigdor Susana, Frank Mendez, Kord Inc

The Kord Inc invention works as follows

A method and system for streaming audio Stems, Metadata and related music content. The system includes a Playback device through which the user interacts with the streamed Stems. The system can also deliver Stems on demand. This platform can be used by any service or application wishing to stream Stems to end users, for example, DSPs (i.e., streaming music platforms), radio stations, music/audio/audio-visual applications, software developers, etc. (a Requesting Entity). Storage, encoding and processing of Stems can be done via server-side solutions that enable on-demand delivery of any combination of Stems in response to requests from the client-side Requesting Entity.

Background for Audio stem delivery and access solution

Most of the songs we listen to were recorded with multitrack technology, in which each instrument is recorded on a separate ‘track’. In digital recording, each instrument is recorded on its own software track. After all tracks have been recorded, they are usually combined, or “mixed”. Stereo is a two-channel format (left and right, for example) into which music can be mixed. Music can also be mixed into multiple-channel formats in addition to stereo. Audio-visual recordings, such as movies, videos and television, can be mixed down to three or more channels. Standard surround sound, for example, is a six-channel format.

Any number of discrete tracks recorded during the recording process can be combined to create a submix, which is called a ‘Stem’. A Vocal Stem, for example, could consist of three tracks: one lead vocal and two background vocals. A Drum Stem could be composed of six tracks: kick drum, snare, hi-hat, floor toms and two overhead microphones. Stems are often used to alter a recording’s orchestration and/or combine it with new elements or tracks in order to create new and different versions. This is commonly called a remix.

Stems are also used in film and television productions. Multiple tracks are mixed down to one Stem for Dialog, one for Music and one for Effects. This gives flexibility when creating a final mix, because the level and processing of each Stem can still be adjusted.

A mixing unit typically acquires audio/video stems or tracks from some type of recorded media. Recorded media can include analog tapes (often multi-track), digital audio files on a hard drive, digital audio files on removable discs (CD, DVD, etc.), digital audio files on removable media (flash drive, etc.), and digital files shared over a network and/or the internet. In each case the files were previously recorded, but before the user could interact with them (e.g., play back, mute, solo, mix or export), they had to be physically routed through the mixer and/or brought into the platform.

The music industry will continue to shift towards Stem-based work, increasing the need for a sharing and delivery system. Recently, many commercial ventures that could benefit greatly from this technology have been created. As these companies and the respective music rights holders (e.g., artists, songwriters, publishers, labels, production companies, etc.) continue to monetize these ‘pieces’, a “clearinghouse” will be needed to streamline the process and address security concerns as well as inefficiencies.

The system streams stems to the user from a remote server. The user can listen to the stems and interact with them using a playback unit. The stems can be accessed on demand, and they may even be streamed live.

The system can provide metadata for each stem. The system can provide stem metadata to a device that plays the stems. The metadata can include song information, musician and personnel information, song description, song statistics, recording or rights information.

The system can include receiving an HTTPS request from the playback device. The request can include at least one of user ID, title identification, device details, operating system, serial number of the device, manufacturer, model, IP address, geolocation, UUID or software version. The system can provide URLs for different stem streams based on user ID and title, and the URLs can be specific to the user ID. Each URL can be unique, carry a TTL (time to live), and/or be set to expire. The system can create stems from recordings made by the user. The system can interface with an external hardware device that operates the software controls of the playback device. The system can load stems into the memory of the playback device. To implement a buffering system, the system can load two stem sets into memory at once. The system can receive user input for controlling a stem, which triggers an API call to a playback engine. The system can provide a representation of the song’s progress. The system can receive instructions to remove, isolate, mute or solo at least one instrument. The system can also measure the decibel level of a stem.
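The source does not say how the decibel level of a stem is measured. As a minimal sketch, assuming decoded samples are available as floats in the range -1.0 to 1.0 and that an RMS level relative to full scale (dBFS) is acceptable, the measurement could look like this. The function name `rms_dbfs` is hypothetical.

```python
import math


def rms_dbfs(samples):
    """Return the RMS level of float samples (range -1.0..1.0) in dBFS.

    Assumption: RMS relative to full scale; the source does not name a method.
    Silence (or an empty block) is reported as negative infinity.
    """
    if not samples:
        return float("-inf")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0.0:
        return float("-inf")
    # 10*log10 of the mean square equals 20*log10 of the RMS amplitude.
    return 10.0 * math.log10(mean_square)


if __name__ == "__main__":
    half_scale_block = [0.5, -0.5, 0.5, -0.5]
    print(round(rms_dbfs(half_scale_block), 2))
```

A level meter would typically call such a function on short consecutive blocks of each stem rather than on the whole recording.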

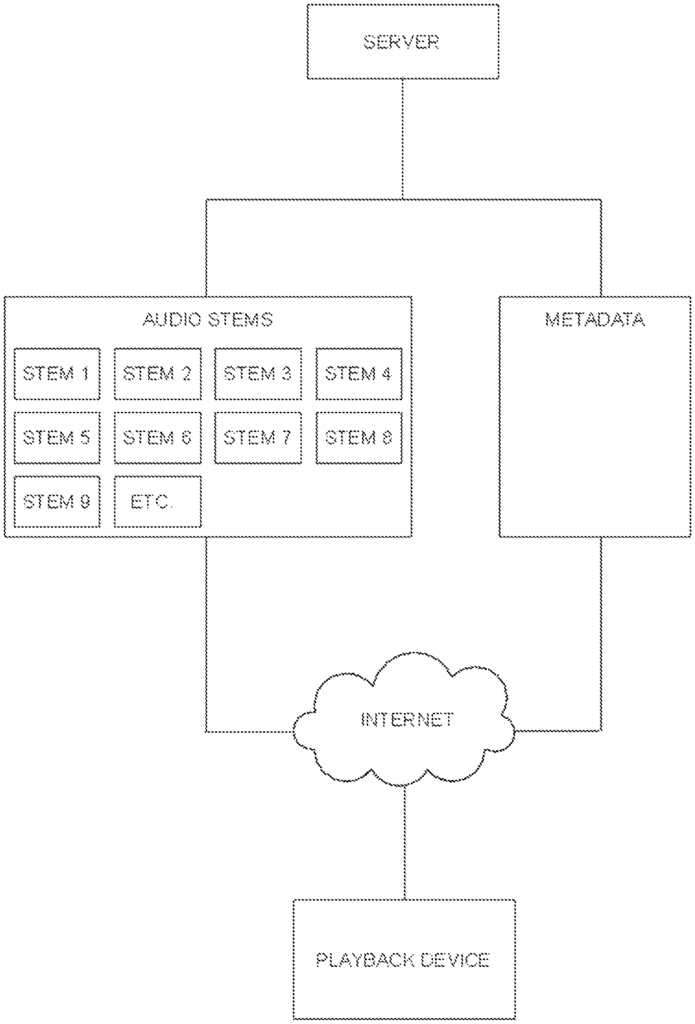

This disclosure includes a system for music instruction and entertainment. As shown in FIG. 1, the system can include various embodiments in which a Server streams audio stems, metadata, videos, and/or any other content to a Playback Device. The streaming content can also include images, lyrics, chords and musical notation. It may also contain information about the song, the instrument, the recording engineer, or the artist (metadata). Delivering content to the Playback Device through streaming allows the server to control copyrighted materials and to update or modify content if necessary or desired. Because the server streams the content, the client does not need to download the Stems onto the Playback Device. Streaming also keeps most of the resources on the server, reducing the resources needed on the client-side device.

More specifically, the Playback Device may be part of, for example, a personal computer, iOS device, Android device, smart TV, TV connected streaming device, smart radio, AppleTV, Apple HomePod devices, devices with Apple’s voice assistant (Siri), Google Nest devices, Google Nest Audio devices, devices with Google’s voice assistant, Amazon Fire devices, Amazon Echo devices, devices with Amazon’s voice assistant (Alexa), Roku devices, etc. The Playback Device may send an HTTPS request to an administrative server. In various embodiments, the request may include details about the user/requester such as, for example, user ID, device information, operating system details, device serial number, manufacturer, model, IP address, geolocation, UUID, software version, and/or user authentication request. Based on the User and Title ID sent in the request, the server may respond with data relating to the Title ID requested, including an array of dynamic, unique, and/or encoded URLs that correspond to different stem streams. Each URL is unique to the user/requester, may also contain a TTL (time to live), and is set to expire after use. For additional security, in various embodiments, the Playback Device and the User cannot access or use the URL by any other method or at any other time. The URL may not be traced to reveal the path where the secure content is stored.

When media is initially requested, in various embodiments, the associated metadata corresponding to that media is delivered to the playback device. The playback device may display the metadata and make it available for access. As set forth in FIG. 4, the server response may contain Metadata instructions which may include one or more of multiple streams of audio Stems, mapping for audio Stems, song lyrics, song musical notation (chord names, chord shapes, standard notation, tablature), song related videos, images and graphics, song information (release date, album, songwriter(s), record label, music publisher(s), etc.), musician information, personnel information, song description information, song statistics, recording information (instruments, equipment, recording studio, etc.), rights information, etc. In various embodiments, stem mapping tells a playback device how and where each stem should be used. For example, one Stem may be designated as the vocal Stem for a particular song, another as the keyboard 1 Stem, and a third as the bass guitar Stem. The playback device can then match each Stem with its corresponding association in the playback device interface.
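The text above describes per-user stem URLs that carry a TTL, but it does not specify how they are produced. One common way to build such URLs is to sign the user ID, title ID, stem name and expiry time with a server-side secret (HMAC). The sketch below illustrates that general technique only; the host `stems.example.com`, the query parameter names, the `SECRET` value and both function names are hypothetical. It does not cover the "expire after use" behavior, which would need server-side state.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlparse

SECRET = b"demo-secret"  # hypothetical server-side signing key


def make_stem_url(user_id, title_id, stem, ttl_seconds, now=None):
    """Build a per-user stem URL that stops validating after ttl_seconds."""
    now = int(time.time() if now is None else now)
    expires = now + ttl_seconds
    payload = f"{user_id}|{title_id}|{stem}|{expires}".encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    query = urlencode(
        {"u": user_id, "t": title_id, "s": stem, "exp": expires, "sig": signature}
    )
    return f"https://stems.example.com/stream?{query}"


def is_valid(url, now=None):
    """Check the signature and the expiry time of a URL from make_stem_url."""
    now = int(time.time() if now is None else now)
    params = {k: v[0] for k, v in parse_qs(urlparse(url).query).items()}
    try:
        expires = int(params["exp"])
        payload = f'{params["u"]}|{params["t"]}|{params["s"]}|{expires}'.encode()
        signature = params["sig"]
    except (KeyError, ValueError):
        return False
    expected = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the signature matched.
    return hmac.compare_digest(expected.encode(), signature.encode()) and now < expires


if __name__ == "__main__":
    url = make_stem_url("user-1", "title-9", "vocals", ttl_seconds=60)
    print(url, is_valid(url))
```

Because the user ID is part of the signed payload, a URL issued to one requester fails validation if any of its parameters are changed.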

Metadata are stored in a server database. Metadata can be entered manually or ingested automatically by secure web services. Manual entry can be done through a secure web interface. Automated entry can be done by web-service postings in a format such as XML. In different embodiments, metadata created by the user or metadata provided by a third party may be used. A third party could be a record company that owns the intellectual property and/or sound recording; record labels provide metadata matching their music. Some metadata, however, may be specific to the system host, for example written content about a piece, descriptions, etc.

In various embodiments of the system, a user may be able to record their performance using a musical device, microphone and/or any other audio device to create additional Stems that can be played alongside the existing Stems in a song or piece. As shown in the figures, in various embodiments direct recording may be done using the Playback Device’s existing capabilities and/or a separate external recording device designed to capture audio recordings with mobile phones or computers.

In various embodiments, a hardware device may be used to manipulate and interact with stems. This external hardware device lets the user operate the software controls of the Playback Device via hardware controls. The device is specifically designed to be used with the Stem streaming Playback Device described in this document. It can also connect external devices such as microphones and recording devices in order to record new tracks to be used with the software. As shown in the figures, the device may include controls and functionality such as, for example, faders to control discrete stem/track volume; mute and/or solo buttons; looping functionality; jump back and/or jump forward controls; LED level meters; input gain control(s); instrument/microphone input connections; line outputs for connections to amplifiers, tuners, P.A. systems, etc.; master volume output level control; playback control functions such as play, pause, seek forward or backward, and record; and/or USB connectivity to interface with the Playback Device.

In different embodiments, the Player Engine can receive and/or process metadata to be used on the client Playback Device via SSL (Secure Sockets Layer). The player parses the instructions sent via data files (e.g., XML), for example the song title, artist(s), album, album artwork, songwriters, release date, lyrics and chords, publisher or label, and/or similar.
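The source says metadata instructions may arrive as XML but does not give a schema. The sketch below assumes a small, hypothetical layout (a `title` root with a `song` element and a list of `stem` elements carrying `role` and `url` attributes) to show how a player might extract song information and a stem mapping with Python's standard `xml.etree.ElementTree` parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical metadata document; element and attribute names are assumptions.
SAMPLE = """<title id="t-001">
  <song name="Example Song" artist="Example Artist" album="Example Album"/>
  <stems>
    <stem role="vocals" url="https://stems.example.com/a"/>
    <stem role="bass" url="https://stems.example.com/b"/>
  </stems>
</title>"""


def parse_metadata(xml_text):
    """Return the title ID, song attributes, and a role -> URL stem mapping."""
    root = ET.fromstring(xml_text)
    song = root.find("song")
    song_info = dict(song.attrib) if song is not None else {}
    stem_map = {stem.get("role"): stem.get("url") for stem in root.iter("stem")}
    return {"title_id": root.get("id"), "song": song_info, "stem_map": stem_map}


if __name__ == "__main__":
    print(parse_metadata(SAMPLE))
```

The resulting `stem_map` is the piece a playback interface would use to attach each stream to its instrument control.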

The Stems are loaded into the memory of the Playback Device so that playback can begin from a synchronized start point, as shown in FIG. 5. In different embodiments, the Player Engine syncs multiple audio Stems for playback using the system clock of the playback device. Multiple streams may be sent by the server(s). A custom solution can be implemented, depending on the device’s capabilities, to ensure synchronized playback of the multiple stems in a song. The device can buffer part of the song or the entire song into multiple threads. Each thread is both a player and a data regulator for the stem it streams. Once a minimum buffer is reached across all threads, each thread caches its data and instructs the operating system to start playing at a specified system time in the future. This allows for synchronized playback. When the user or the playback device chooses to pause playback, the system goes through all threads in order and pauses each one; streaming stops at the next buffer satisfaction. Both play and pause are handled in a similar manner. The system acts the same way for actions such as forward seek and reverse seek: it goes through all threads sequentially and issues the command on each thread.
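The buffering-then-scheduled-start idea described above can be modeled in a few lines. This is a simplified sketch, not the patented implementation: real audio output is replaced by recording a timestamp, network chunks are replaced by dummy bytes, and the names `play_in_sync`, `min_buffer_chunks` and `lead_time` are hypothetical. A `threading.Barrier` stands in for "minimum buffer reached across all threads", and its action picks one shared start time in the future.

```python
import threading
import time


def play_in_sync(stem_names, min_buffer_chunks=3, lead_time=0.05):
    """Start one worker per stem and release them at a shared future time."""
    start_at = {}
    started = {}

    def pick_start_time():
        # Runs once, when every thread has reached its minimum buffer.
        start_at["t"] = time.monotonic() + lead_time

    barrier = threading.Barrier(len(stem_names), action=pick_start_time)

    def worker(name):
        buffered = []
        while len(buffered) < min_buffer_chunks:
            buffered.append(b"\x00" * 1024)  # stand-in for a streamed chunk
        barrier.wait()
        # Sleep until the agreed start time, re-checking the clock each pass.
        while (remaining := start_at["t"] - time.monotonic()) > 0:
            time.sleep(remaining)
        started[name] = time.monotonic()  # stand-in for "begin audio output"

    threads = [threading.Thread(target=worker, args=(n,)) for n in stem_names]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return start_at["t"], started


if __name__ == "__main__":
    scheduled, actual = play_in_sync(["vocals", "bass", "drums"])
    for name, t in actual.items():
        print(name, f"started {1000 * (t - scheduled):.2f} ms after the scheduled time")
```

Scheduling against a shared clock, rather than starting each player as soon as it is ready, is what keeps the stems aligned even when their buffers fill at different rates.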

In various embodiments, certain devices limit access to certain system resources such as device system clock and direct access to memory, so an alternative playback solution has been devised. These may include web browsers and/or systems that utilize web browser technology to render applications. Currently, most modern web browsers include two types of audio processing objects: HTML5 web player and Web Audio API. As set forth in FIG. 6, the system may incorporate a buffering solution, wherein two sets of Stems are loaded into memory simultaneously. The first set (Stem Set 1) is loaded into a continuous buffer to begin playback. Specifically, each stem in the set is buffered into its own web player. The system then instructs the multiple players to begin playback at the same time once all players have attained a minimum viable amount of buffering. Concurrently, an identical set of stems (Stem Set 2) is loaded to indirect-memory (e.g., blob or disk) in its entirety. The system then begins playback of Stem Set 2 on different web player threads in the background, at the highest playback speed available, at zero-volume. Once the players housing Stem Set 2 begin playback, this moves the stems from indirect-memory to direct-memory where they can now be controlled by the user. If the user interacts with the playback of the song by seeking forward or backward, the Player Engine switches over to Stem Set 2 for playback from direct-memory, as only stems in direct memory can be controlled by a user.
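The two-set buffering scheme above is essentially a small state machine: Stem Set 1 is the active set until the user seeks, and the player can only hand over to Stem Set 2 once that set has reached direct memory. The sketch below models just that switching logic in Python, independent of any browser API. The class and method names are hypothetical, and rejecting a seek before Stem Set 2 is ready is an assumption, since the source does not describe that case.

```python
from dataclasses import dataclass


@dataclass
class StemSet:
    name: str
    in_direct_memory: bool = False
    position: float = 0.0  # playback position in seconds


class DualBufferPlayer:
    """Tracks which of the two identical stem sets is driving playback."""

    def __init__(self):
        self.set1 = StemSet("Stem Set 1")  # continuous buffer, plays first
        self.set2 = StemSet("Stem Set 2")  # fully loaded, promoted in background
        self.active = self.set1

    def on_set2_background_playback_started(self):
        # Silent background playback moves Set 2 into direct memory.
        self.set2.in_direct_memory = True

    def tick(self, seconds):
        self.active.position += seconds

    def seek(self, position):
        """Switch to Stem Set 2 on seek; refuse if it is not controllable yet."""
        if not self.set2.in_direct_memory:
            return False  # assumption: seek is unavailable until Set 2 is ready
        self.set2.position = position
        self.active = self.set2
        return True


if __name__ == "__main__":
    player = DualBufferPlayer()
    player.tick(5.0)
    player.on_set2_background_playback_started()
    player.seek(30.0)
    print(player.active.name, player.active.position)
```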

The user can control the Stems they want to hear by using a user interface (UI) on their Playback Device. The UI allows a user to select icons that represent the instruments in a particular song. As shown in FIG. 2, these icons can cause the Playback Device to perform various actions, such as muting, altering, or soloing the instruments. The Playback Engine User Interface allows the user to control and interact with a song (a set of stems). An API layer may be used to connect the UI and the Playback Engine so that every input selected by the user triggers a call to the Playback Engine. These commands let the user perform functions such as muting, isolating or soloing instruments while song playback is underway.

Imagine, for example, listening to Hotel California in the app. If you’re a guitarist, you can mute, or remove, the guitar from the song. You can then play along to the song yourself, adding your guitar to the original tracks. The same works for other instruments, such as keyboards, vocals, bass or drums. It’s like karaoke but with ALL instruments. You can even mute multiple instruments to play along with friends. You can also isolate, or “solo”, an instrument to hear it clearly, which is ideal for musicians learning a vocal harmony, a guitar solo or a bassline. The system also lets you slow down sections to make them easier to hear and learn.
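The mute and solo behavior in this example comes down to choosing a gain for each stem. The sketch below assumes a common mixing-console convention that the source does not spell out: if any stem is soloed, only soloed stems are audible, and otherwise every stem that is not muted is audible. The function name `effective_gains` is hypothetical.

```python
def effective_gains(stems, muted=(), soloed=()):
    """Return a gain (0.0 or 1.0) per stem from the mute and solo selections.

    Assumption: solo takes priority over mute, as on a typical mixing console.
    """
    muted, soloed = set(muted), set(soloed)
    gains = {}
    for stem in stems:
        if soloed:
            gains[stem] = 1.0 if stem in soloed else 0.0
        else:
            gains[stem] = 0.0 if stem in muted else 1.0
    return gains


if __name__ == "__main__":
    song = ["vocals", "guitar", "bass", "drums"]
    # A guitarist removes the recorded guitar to play along.
    print(effective_gains(song, muted=["guitar"]))
    # A bassist isolates the bassline to learn it.
    print(effective_gains(song, soloed=["bass"]))
```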

The system may have one or more of the following features according to various embodiments. Users may be able to listen to streaming audio tracks as part of a music instruction platform that uses stems from master recordings to promote deep music learning. The system could offer sound recordings that have been curated and selected by musicologists. It may also enable end users to interact with the recordings, for example by looping sections of a recording or decreasing its speed. Users may be able to scroll lyrics, chord progressions and tablature, and use a metronome as a guide to their instruction. Additional features may include a user profile, playlisting and an instructional chat. Users may be able to search for tracks by artist, song title or genre. In addition to being an instructional application, the system lets end users dive deep into songs, not only by discovering and listening to tracks but also by engaging with metadata including articles, liner/session notes, artist and studio images, artist/producer/engineer interviews, analytical videos, instructional videos, fan content, etc. The system is a combination of DVD commentary, classic albums documentaries, fan club content and interactive listening, all in one.

In various embodiments, the system includes a streaming app that allows users to listen to songs and then isolate or remove the instruments they hear. Interactive chords and lyrics scroll in real time, creating a compelling and unique music instruction platform. Users can also view original video content associated with each song, album or artist. The system may also serve as a platform where musicians can share their performances with other members of the music community. The user can stream while playing stems: the system has a streaming platform that allows musicians to stream their channel while playing along with tracks, loops and beats. The user can use an interface to add or remove instruments in real time.

The system could include a representation of song progress. The visual background fills with color as a song is played, moving from the top to the bottom, creating a unique visual of time elapsed and remaining in the song. The player can calculate the time remaining by using metadata and the lengths of each stem stored in memory.
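As a small worked example of the calculation mentioned above, the sketch below derives the filled fraction of the background and the time remaining from the elapsed time and the stem lengths held in memory. Treating the longest stem as the song length is an assumption, and the function name `song_progress` is hypothetical.

```python
def song_progress(elapsed_seconds, stem_lengths):
    """Return (fraction_filled, seconds_remaining) for the progress visual.

    Assumption: the song is as long as its longest stem.
    """
    total = max(stem_lengths, default=0.0)
    if total <= 0:
        return (0.0, 0.0)
    # Clamp so seeking past either end never over- or under-fills the visual.
    elapsed = min(max(elapsed_seconds, 0.0), total)
    return (elapsed / total, total - elapsed)


if __name__ == "__main__":
    fraction, remaining = song_progress(60, [240, 238.5, 240])
    print(f"fill {fraction:.0%} of the background, {remaining:.0f} s remaining")
```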