Invented by Lichao Sun, Caiming Xiong, Jia Li, Richard Socher, Salesforce Inc

The Salesforce Inc invention works as follows

Approaches to private and interpretable machine learning systems include a system for processing a query. The system includes one or more teacher modules that each receive a query and generate a respective output, and one or more privacy sanitization modules that privacy-sanitize the output generated by each teacher module. It also includes a student module, which receives the query and the privacy-sanitized outputs of the teacher modules. Each teacher module is trained with a respective private data set, while the student module is trained with a public data set. In some embodiments, a model user is given a human-understandable interpretation of the output from the student module.

Background for Machine learning systems that protect data privacy

Demand for intelligent applications calls for the deployment of powerful machine learning systems. Most existing model training processes aim for better predictions in an environment where all participants belong to the same party. In an assembly-line, business-to-business project, however, participants come from different parties, such as data providers, model providers, and model users. This raises new concerns about trust. A new hospital, for example, may adopt machine learning techniques to help its doctors find better diagnostic and treatment options for incoming patients. Because of data and computation limitations, the new hospital might not be able to train its own machine learning model. A model provider can help the new hospital train a diagnostic model using knowledge from other hospitals. To protect patient privacy, however, the other hospitals will not allow their data to be shared with the new hospital.

It would be beneficial to have methods and systems for training and using machine learning systems that protect data privacy and respect the trust between cooperating parties.

In view of the need for a privacy-protected machine learning system, embodiments described in this document provide a private machine learning framework that distills and perturbs knowledge from multiple private data providers to enforce privacy, and that uses interpretable learning to give users understandable feedback.

As used in this document, the term “network” may refer to any hardware- or software-based framework, including any artificial intelligence network or system, neural network or system, and/or any training or learning models implemented on or with it.

As used in this document, the term “module” may refer to any hardware- or software-based framework that performs one or more functions. In some embodiments, a module may be implemented using one or more neural networks.

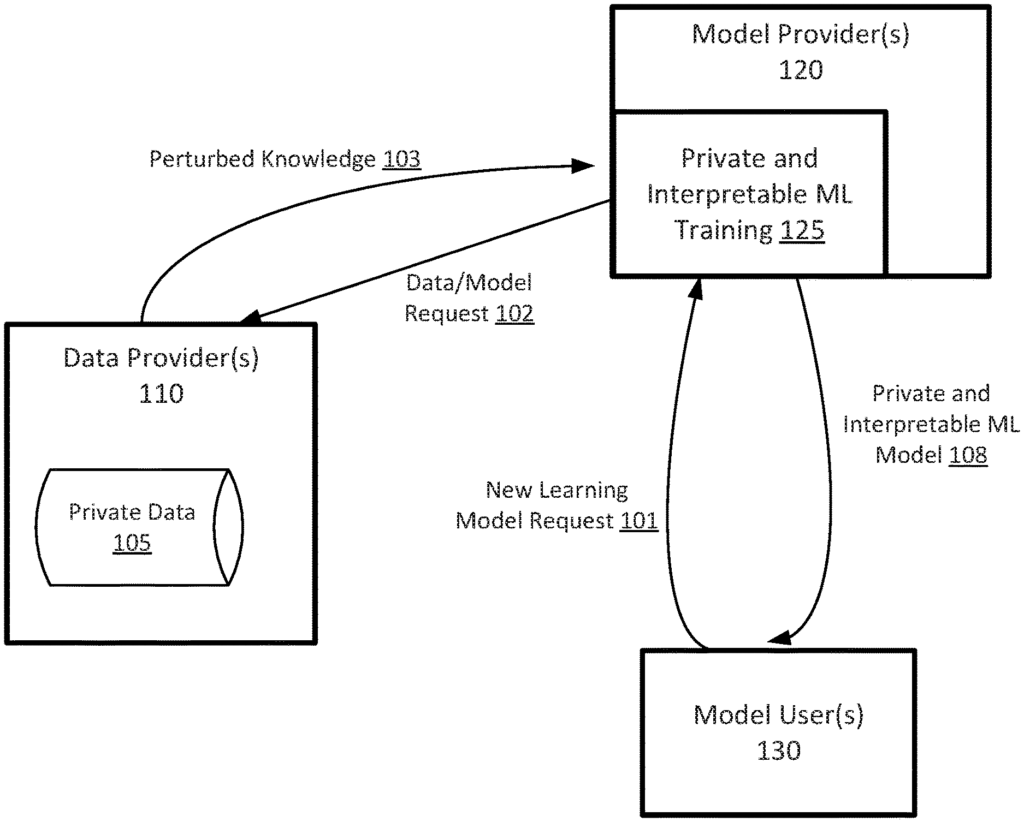

FIG. 1 is a simplified diagram illustrating the data transfers between parties in order to protect data privacy, according to certain embodiments. It shows the interactions among data providers 110 (e.g., one or more hospitals), a model provider 120, and a model user 130 (e.g., a new hospital). In some cases, the model user 130 may send the model provider 120 a request 101 for a learning model, such as a diagnostic model for a newly opened hospital. The model provider 120 can operate a private and interpretable machine learning training framework 125 that sends data or model training requests 102 to the data providers 110.

In certain examples, each data provider 110 has trained a teacher module using its own private data, and does not allow any information about the teacher module to pass to the model user 130. In this instance, public data are used to train the model user's student module so that its performance is comparable to that of the teacher modules. The teacher modules are fed queries based on unlabeled public data. The responses to these queries, which carry knowledge from the teacher modules, are not shared directly with the student module. Instead, only the perturbed knowledge 103 from the teacher modules is used to update the student module.

In some cases, if the data providers 110 do not trust the model provider 120, the data providers 110 can perturb the outputs of the teacher modules themselves and then send the perturbed outputs (e.g., perturbed knowledge 103) to the model provider 120. In other cases, if the model provider 120 is trusted by the data providers 110, the model provider 120 can receive the knowledge of the teacher modules from the data providers 110 and then perturb it. In either scenario, the private and interpretable machine learning training framework 125 trains the student module using the perturbed knowledge from the teacher modules. The model user 130 can then receive the resulting private and interpretable module 108.
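The two trust scenarios differ only in which party applies the perturbation. The following is a minimal sketch of that branching, not the framework's actual implementation; the function names, the boolean trust flag, and the use of simple Gaussian noise as the perturbation are all assumptions made for illustration.

```python
import random
from typing import Callable, Dict, List

Vector = List[float]


def add_gaussian_noise(values: Vector, sigma: float, rng: random.Random) -> Vector:
    """Hypothetical perturbation: add zero-mean Gaussian noise to each value."""
    return [v + rng.gauss(0.0, sigma) for v in values]


def deliver_teacher_knowledge(
    teacher_output: Vector,
    provider_trusts_model_provider: bool,
    perturb: Callable[[Vector], Vector],
) -> Dict[str, object]:
    """Return what leaves the data provider and what reaches the student."""
    if provider_trusts_model_provider:
        # Trusted case: raw knowledge is sent; the model provider perturbs it.
        sent = teacher_output
        perturbed_by = "model provider"
        for_student = perturb(sent)
    else:
        # Untrusted case: the data provider perturbs before anything leaves.
        sent = perturb(teacher_output)
        perturbed_by = "data provider"
        for_student = sent
    return {"sent": sent, "perturbed_by": perturbed_by, "for_student": for_student}


rng = random.Random(0)
noise = lambda v: add_gaussian_noise(v, sigma=0.1, rng=rng)
raw = [0.7, 0.2, 0.1]  # illustrative teacher output, not real data

untrusted = deliver_teacher_knowledge(raw, False, noise)
trusted = deliver_teacher_knowledge(raw, True, noise)
```

In both branches the student only ever sees the perturbed values; the difference is whether the raw teacher output crosses the boundary between data provider and model provider.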

The private and interpretable machine learning training framework 125 honors trust concerns between multiple parties by, for example, protecting the data privacy of the data providers 110 from the model users 130. To do so, the framework 125 uses private knowledge transfer, which is based on knowledge distillation combined with perturbation (see, e.g., perturbed knowledge 103).

The private and interpretable machine learning training framework 125 obtains the perturbed knowledge 103 through batch-by-batch queries, which reduces the number of queries sent to the teacher modules. Instead of a loss per query, the teacher modules generate a batch loss over a batch of sample queries. This further reduces the chance that the private knowledge of the teacher modules can be recovered at the student module.
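The difference between a per-query loss and a batch loss can be sketched as follows. This is an illustrative example only: the text does not specify the loss function, so the squared-error loss, the function names, and the sample values are all assumptions.

```python
from typing import List

Vector = List[float]


def sample_loss(student_probs: Vector, teacher_probs: Vector) -> float:
    """Squared-error distance between two probability vectors (an assumed loss)."""
    return sum((s - t) ** 2 for s, t in zip(student_probs, teacher_probs))


def batch_loss(student_batch: List[Vector], teacher_batch: List[Vector]) -> float:
    """Average the per-sample losses so that only one scalar leaves the teacher."""
    losses = [sample_loss(s, t) for s, t in zip(student_batch, teacher_batch)]
    return sum(losses) / len(losses)


# Illustrative outputs for a batch of three public samples, two classes each.
student_batch = [[0.5, 0.5], [0.9, 0.1], [0.2, 0.8]]
teacher_batch = [[0.6, 0.4], [0.8, 0.2], [0.2, 0.8]]

loss = batch_loss(student_batch, teacher_batch)
values_released_per_query = len(student_batch)  # one loss value per sample
values_released_per_batch = 1                   # one loss value for the whole batch
```

Releasing a single averaged value per batch, rather than one value per sample, gives the student side fewer teacher-derived signals from which private information could be inferred.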

FIG. 2 is a simplified diagram of a computing device 200 that implements the private and interpretable machine learning training framework 125, according to certain embodiments. As shown in FIG. 2, computing device 200 includes a processor 210 and a memory 220. Processor 210 controls the operation of computing device 200. Although computing device 200 is shown with only one processor 210, it is understood that processor 210 can represent one or more central processing units. Computing device 200 can be implemented in a number of ways, including as a standalone subsystem, as a board added to a computer, or as a virtual machine.

Memory 220 can be used to store the software executed by computing device 200 and/or the data structures used in the operation of computing device 200. Memory 220 can include one or more machine-readable media. Machine-readable media can include floppy disks, flexible disks, hard disks, magnetic tape, other magnetic media, CD-ROMs, other optical media, paper tape, punch cards, and other physical media with patterns of holes. Other forms include RAM, PROM, EPROM, FLASH-EPROM, other memory chips or cartridges, and any other medium that a computer or processor is able to read.

Processor 210 and/or memory 220 can be arranged in any suitable physical arrangement. In certain embodiments, processor 210 and/or memory 220 can be implemented on the same board, in the same package (e.g., system-in-package), on the same chip (e.g., system-on-chip), etc. In some embodiments, processor 210 and/or memory 220 can include virtualized and/or containerized computing resources. In accordance with these embodiments, processor 210 and/or memory 220 can be located at one or more cloud computing facilities and/or data centers.

Computing device 200 also includes a communication interface 235 that can receive data from and transmit data to one or more other computing devices, such as the data providers 110. Data may be sent to or received from the teacher modules 230 at the data providers 110 through the communication interface 235. In some examples, each teacher module 230 can be used to receive a query and generate a result. In some cases, each teacher module 230 can also handle the iterative training and/or evaluation of that module. In some examples, one or more of the teacher modules 230 can include a machine learning structure such as one or more neural networks, deep convolutional networks, and/or similar structures.

Memory 220 contains a student module 240 that can be used to implement the machine learning systems and models and/or any of the methods described in this document. In some examples, the student module 240 can be trained in part using perturbed knowledge 103 provided by one or more of the teacher modules 230. In some examples, the student module 240 may also handle its own iterative training and/or evaluation, as described below. Student module 240 can include machine learning structures such as one or more neural networks, deep convolutional networks, and/or similar structures.

In certain examples, memory 220 may include tangible, non-transitory machine-readable media that contain executable code. When run by one or more processors (e.g., processor 210), the code may cause the one or more processors to perform the methods described herein. In some examples, the teacher modules 230 and/or the student module 240 can be implemented with hardware, software, or a combination of the two. As shown, computing device 200 receives a batch of input training data 250 (e.g., from a publicly available dataset) and produces a private and interpretable model 108, for example for model user 130.

As discussed above and as further highlighted here, FIG. 2 is only an example and should not be used to unduly limit the scope of the claims. A person of ordinary skill in the art would recognize many variations, alternatives, and modifications. In some embodiments, one or more of the teacher modules 230 can be located in one or more computing devices separate from computing device 200. In some cases, the separate computing devices can be consistent with computing device 200. In some cases, each teacher module 230 can be located in its own separate computing device.

FIG. 3A is a simplified diagram illustrating a knowledge distillation structure for transferring private knowledge from the teacher modules 230a-n to the student module 240, with random noise that is consistent with differential privacy. Each teacher module 230a-n is trained on its own private data source 303a-n.
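One common way to add noise in a manner compatible with differential privacy is to bound the magnitude of each released value and then add calibrated Gaussian noise. The sketch below illustrates that general clip-then-noise pattern only; this passage does not state which mechanism the framework uses, so the clipping step, the parameter names `max_norm` and `sigma`, and the sample values are all assumptions, and no specific privacy guarantee is claimed for them.

```python
import math
import random
from typing import List


def clip_l2(values: List[float], max_norm: float) -> List[float]:
    """Scale the vector down so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in values))
    if norm <= max_norm or norm == 0.0:
        return list(values)
    return [v * max_norm / norm for v in values]


def perturb(values: List[float], max_norm: float, sigma: float,
            rng: random.Random) -> List[float]:
    """Clip, then add zero-mean Gaussian noise with std dev sigma * max_norm."""
    clipped = clip_l2(values, max_norm)
    return [v + rng.gauss(0.0, sigma * max_norm) for v in clipped]


rng = random.Random(42)
teacher_output = [3.0, 4.0]  # illustrative values; L2 norm is 5.0

clipped = clip_l2(teacher_output, max_norm=1.0)
noisy = perturb(teacher_output, max_norm=1.0, sigma=0.5, rng=rng)
```

Clipping bounds how much any single teacher output can influence what is released, and the noise scale is tied to that bound; larger values of the assumed `sigma` trade accuracy of the transferred knowledge for stronger masking.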

To use the teacher modules to train the student module, a batch $x_p$ of public data samples is sent to each teacher module 230a-n, and each teacher module generates its own output. Let $c_i^t$ be the output of the last hidden layer of the $i$-th teacher module. The output $c_i^t$ is then sent to the respective classification module 310a-n. In some cases, each classification module 310a-n generates a softmax probability according to Equation (1):

$$P_i^t = \mathrm{softmax}(c_i^t / \tau) \qquad \text{Eq. (1)}$$

where $P_i^t$ is a vector of probabilities corresponding to the different classes and $\tau$ is the temperature parameter. If $\tau > 1$, the probabilities of classes whose normal values are near zero are increased. The relationship between classes can thus be embodied in the classification output, for example, as the softened probability $P_i^t$.
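The effect of the temperature parameter in Equation (1) can be seen in a short numerical sketch. The function name and the sample logits below are illustrative assumptions; only the temperature-scaled softmax itself comes from the text.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], tau: float) -> List[float]:
    """Compute softmax(logits / tau), with max-subtraction for numerical stability."""
    if tau <= 0:
        raise ValueError("tau must be positive")
    scaled = [c / tau for c in logits]
    largest = max(scaled)
    exps = [math.exp(s - largest) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Illustrative last-hidden-layer output of one teacher for a single sample.
c_i = [4.0, 1.0, 0.0]

sharp = softmax_with_temperature(c_i, tau=1.0)  # ordinary softmax
soft = softmax_with_temperature(c_i, tau=4.0)   # softened probabilities
```

With the higher temperature, the least likely class receives a noticeably larger share of the probability mass and the most likely class a smaller one, which is how the softened output exposes relationships between classes.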

In some cases, the model provider may aggregate the classification outputs $P_i^t$ of all classification modules 310a-n into an aggregated classification output according to Equation (2).