Invented by Qi Sun, Anjul Patney, Omer Shapira, Morgan McGuire, Aaron Eliot Lefohn, David Patrick Luebke, Nvidia Corp

The Nvidia Corp invention works as follows

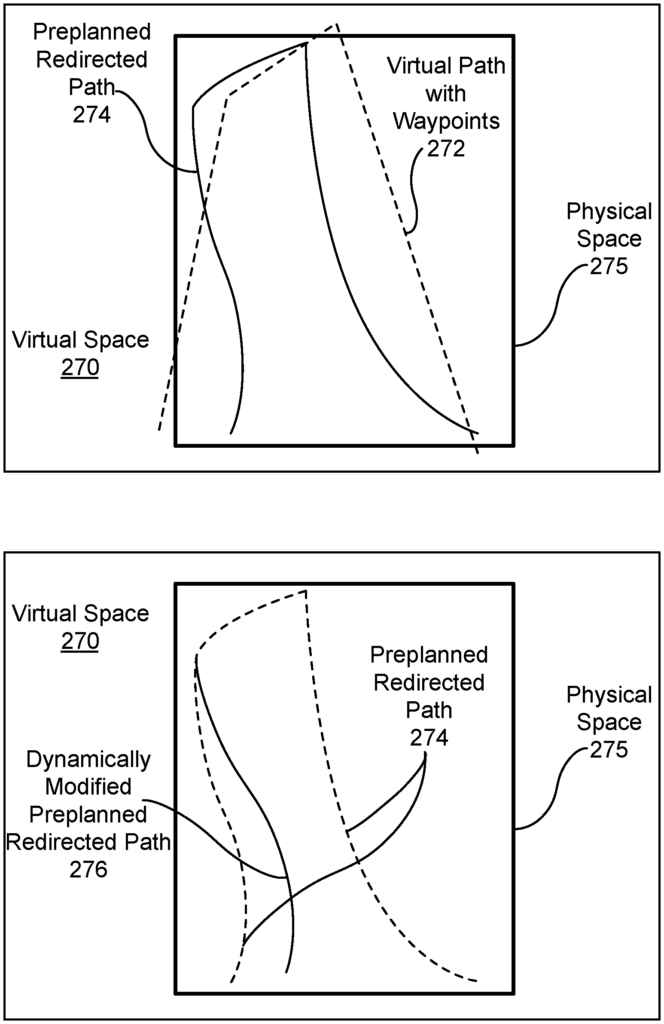

A method, computer-readable medium, or system is disclosed for computing a path for a user who moves through a physical environment while viewing a virtual environment using a virtual reality system. The path that the user will physically follow through the virtual environment is calculated based on waypoints and at least one physical characteristic. Position data is then received for the user indicating whether, and by how much, the user’s current path has diverged from the planned path.

Background for Path Planning for Virtual Reality Locomotion

Virtual reality head-mounted displays are available that support room-scale position tracking for a more natural experience. However, physical spaces are usually smaller than virtual spaces. Virtual reality (VR) therefore faces a major challenge in embedding large virtual spaces within small, irregular physical spaces that may be shared by multiple users. The ideal solution would be to create the illusion of infinite walking within the virtual space while using only a finite physical area. Treadmills and other physical devices can address the infinite-walking problem, but they are unsuitable for most applications: they are bulky and expensive, and they may compromise the user’s balance. Also, the acceleration and deceleration of natural walking are not felt when using these devices, which can be uncomfortable.

A simple solution to the problem of a small physical space is to reset the virtual orientation when the user reaches the physical limits or obstacles of the room. Unfortunately, in large virtual environments the viewpoint must be reset frequently, which disrupts the user experience and reduces its quality. An alternative to simply resetting the virtual orientation is to redirect the user so that they avoid physical boundaries and obstacles. Redirected walking techniques can enhance immersion and the visual-vestibular comfort of VR navigation, but their use can be limited by the size, shape, and content of the physical environment. The goal of redirection, then, is to manipulate the virtual space in a way that minimizes the number of times the user hits a room boundary or an object such as furniture.

A first technique for redirecting a user increases rotation/transformation gains when the user rotates and/or moves their head, so that the head rotation visually perceived by the user is slightly different from the actual rotation of their head. However, the amount of redirection that can be achieved this way without negatively affecting the user experience is limited. A second technique warps the scene geometry so that the user’s movements are guided by modified, re-rendered images. For example, the second technique may make a straight corridor appear curved to prevent the user from walking into an obstacle or boundary. However, the distortion caused by warping makes this technique unsuitable for scenes with open spaces. There is a need to address these issues and/or others associated with the prior art.
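The rotation-gain idea behind the first technique can be illustrated with a minimal sketch. The function name `apply_rotation_gain` and the gain values used below are hypothetical and chosen only for illustration; they are not taken from the patent.

```python
def apply_rotation_gain(virtual_yaw_deg, physical_yaw_delta_deg, gain):
    """Advance the virtual camera yaw by a scaled copy of the physical head turn.

    With gain == 1.0 the virtual and physical rotations match; a gain slightly
    above or below 1.0 makes the perceived rotation differ from the real one.
    The result is wrapped into the range [0, 360).
    """
    return (virtual_yaw_deg + gain * physical_yaw_delta_deg) % 360.0


# Hypothetical example: the head turns 10 degrees, the scene turns 11 degrees.
new_yaw = apply_rotation_gain(0.0, 10.0, gain=1.1)
```

Over many head turns the small mismatch accumulates, which is how the user’s physical heading is gradually steered.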

A method, computer-readable medium, or system is disclosed for computing a path for a user who moves through a physical environment while viewing a virtual environment. The path that the user will physically follow through the virtual environment is calculated based on waypoints and at least one physical characteristic. Position data for the user is then used to determine whether, and by how much, the user’s current path has diverged from the planned path.
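One way to picture the divergence check is as a point-to-path distance. The sketch below assumes the planned path is a 2D polyline through the waypoints, which the text does not specify; the function names are hypothetical.

```python
import math


def closest_point_on_segment(p, a, b):
    """Return the point on segment a-b that is closest to p (2D tuples)."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:
        return a  # degenerate segment: both endpoints coincide
    # Project p onto the segment and clamp the parameter to [0, 1].
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return (a[0] + t * dx, a[1] + t * dy)


def path_divergence(position, waypoints):
    """Shortest distance from the user's position to the polyline path."""
    if len(waypoints) < 2:
        raise ValueError("a path needs at least two waypoints")
    best = math.inf
    for a, b in zip(waypoints, waypoints[1:]):
        cx, cy = closest_point_on_segment(position, a, b)
        best = min(best, math.hypot(position[0] - cx, position[1] - cy))
    return best


# Hypothetical path through three waypoints, with the user standing off it.
divergence = path_divergence((1.0, 1.0), [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0)])
```

A planner could compare this value against a tolerance to decide whether the path needs to be recomputed; that policy is an assumption, not something stated in the source.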

Redirected locomotion allows for realistic virtual reality experiences even in a physical environment that is smaller than the virtual one or that contains obstacles. The technique described here detects the natural visual suppression that occurs when the user’s eyes move rapidly relative to their head. Once visual suppression is detected, the user’s path can be redirected in a number of ways. The redirection technique modifies the user’s virtual camera to reduce the chances of leaving the physical space or hitting a physical obstacle (e.g., furniture). Minor changes to the virtual camera are usually imperceptible during visual suppression events, so modifying the virtual camera’s position allows for richer experiences without the user noticing. The user’s path through the virtual environment is redirected to keep the user within the physical environment. Conventionally, the user’s path is redirected only when the user’s head rotates, and visual suppression events are not taken into consideration. In contrast, as described in the following paragraphs, the virtual camera can be reoriented not only when the user’s head rotates, but also during visual suppression events, when the user’s eyes move rapidly relative to the head.

FIG. 1A shows, according to an embodiment, a flowchart of a method 100 for locomotive redirection during temporary visual suppression. Although the method 100 is described in the context of a processing device, it can also be implemented by custom circuitry or by a combination of the two. The method 100 can be performed by a CPU, a GPU, or any other processor capable of detecting visual suppression events. Persons of ordinary skill in the art will also understand that any system that performs the method 100 falls within the scope and spirit of embodiments of the invention.

A visual suppression event begins before a saccade (a rapid movement of the eye) and continues for an additional time after the saccade ends, during which the human visual system temporarily loses its visual sensitivity. The eye movement during a saccade can last longer than a VR frame (10-20 ms). Because of this temporary blindness, small changes in the orientation of the virtual scene are not noticeable during saccades, which can be exploited for redirected walking. Saccades are one of many behaviors that trigger temporary suppression of perception. Others include masking by patterns, in which the presence of certain patterns suppresses our ability to visually process a scene (for example, a zebra’s stripes make it difficult to tell individuals apart from the group); flash suppression, in which an image flashed to one eye suppresses an image shown to the other; tactile saccades, in which our ability to perceive surfaces by touch is suppressed by motion; and blinking, with visual suppression before and after the blink. Gaze tracking or other techniques (e.g., electroencephalographic recording) may be used to detect a visual suppression event. In one embodiment, an eye-tracked head-mounted display device is configured to track the gaze of a virtual reality user in order to identify a visual suppression event.
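As a rough illustration of gaze-based detection, the sketch below flags a saccade when the angular velocity of the head-relative gaze direction exceeds a threshold. The 180 degrees-per-second threshold, the sampling interval, and the function names are assumptions made for this example; the source does not give specific values.

```python
import math

# Assumed threshold; real systems tune this per eye tracker.
SACCADE_VELOCITY_THRESHOLD_DEG_S = 180.0


def angle_between_deg(u, v):
    """Angle in degrees between two non-zero 3D gaze direction vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    cos_angle = max(-1.0, min(1.0, dot / norm))  # guard against rounding
    return math.degrees(math.acos(cos_angle))


def is_saccade(prev_gaze, curr_gaze, dt_seconds,
               threshold=SACCADE_VELOCITY_THRESHOLD_DEG_S):
    """True if the gaze moved faster than the threshold between two samples.

    Both gaze vectors are expressed relative to the head, so head rotation
    alone does not trigger detection.
    """
    if dt_seconds <= 0.0:
        raise ValueError("dt_seconds must be positive")
    velocity_deg_s = angle_between_deg(prev_gaze, curr_gaze) / dt_seconds
    return velocity_deg_s > threshold


# Hypothetical samples 1/120 s apart: a 2-degree jump is about 240 deg/s.
two_deg = math.radians(2.0)
fast = is_saccade((0.0, 0.0, 1.0),
                  (math.sin(two_deg), 0.0, math.cos(two_deg)),
                  dt_seconds=1.0 / 120.0)
```

Because suppression extends beyond the saccade itself, a fuller implementation would likely keep the event flagged for a short time after the velocity drops; that extension is omitted here.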

At step 120, the orientation of the virtual scene relative to the user is modified during the visual suppression event to direct the user to physically move along a path through the virtual environment that corresponds to the virtual scene. The orientation of the scene (translation or rotation) can be changed to direct the user along a path that avoids obstacles in the physical world and leads the user towards virtual waypoints. To redirect the user’s walking direction, in one embodiment, the virtual scene is rotated based on the user’s position within the virtual space during a visual suppression event. In one embodiment, redirection can be used to avoid both static and dynamic obstacles. Compared to conventional redirection, the visual and vestibular experience is retained in a wider range of virtual and real spaces.
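The rotation step can be sketched as a small, clamped rotation of scene points about the user’s current position, applied only while suppression is active. The per-frame cap of 0.5 degrees, the 2D point representation, and the function names are assumptions for illustration; how the desired angle is chosen by the path planner is outside this sketch.

```python
import math

# Assumed cap on how far the scene may rotate per frame during suppression.
MAX_ROTATION_PER_FRAME_DEG = 0.5


def rotate_about(point, pivot, angle_deg):
    """Rotate a 2D point counter-clockwise about a pivot by angle_deg."""
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    x, y = point[0] - pivot[0], point[1] - pivot[1]
    return (pivot[0] + cos_a * x - sin_a * y,
            pivot[1] + sin_a * x + cos_a * y)


def redirect_scene(scene_points, user_pos, desired_deg, suppressed,
                   max_deg=MAX_ROTATION_PER_FRAME_DEG):
    """Rotate the scene about the user, but only during visual suppression.

    Returns the (possibly rotated) points and the angle actually applied.
    """
    if not suppressed:
        return list(scene_points), 0.0
    applied = max(-max_deg, min(max_deg, desired_deg))
    rotated = [rotate_about(p, user_pos, applied) for p in scene_points]
    return rotated, applied


# Hypothetical frame: the planner wants 10 degrees, only 0.5 is applied.
points, applied = redirect_scene([(2.0, 1.0)], (1.0, 1.0), 10.0, suppressed=True)
```

Rotating about the user’s own position keeps the user’s virtual location fixed while changing the direction they must physically walk to reach a virtual waypoint.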

At step 130, the virtual scene is displayed on the display device according to the modified orientation. If visual suppression events do not occur as often as needed to redirect the user, a subtle gaze direction event can be added to the virtual scene to encourage saccades. The gaze direction event creates a visual distraction in the periphery to encourage a saccade. In an embodiment, the gaze direction event is performed at display time and does not affect the rendering or content of the virtual scene.

More information will now be provided about the various optional architectures and features with which the framework may be implemented, depending on the wishes of the user. Please note that the following information is presented for illustration only and should not be construed as limiting in any way. Any of the features listed below may optionally be included, with or without the exclusion of other features described.

FIG. 1B shows, according to an embodiment, a user’s path and an obstacle. A path 132 intersects two waypoints (134 and 136). Each visual suppression event (144 and 146) provides an opportunity to redirect the user’s walking direction. During the visual suppression events 144 and 146, the virtual scene may be rotated based on the user’s current position in virtual space. The rotation can direct the user to follow the path 132 towards the waypoint 136 while ensuring that the user does not encounter an obstacle 142. The obstacle 142 can be in a fixed position or it may move (i.e., it may be dynamic).

When opportunities to redirect the user are not frequent enough, a visual suppression event, such as the visual suppression events 144 and 146, can be induced. To induce a visual suppression event, subtle gaze direction (SGD), which directs the viewer’s gaze towards a specific target, can be used. SGD events can direct the user’s attention to peripheral areas without changing the overall perception of the scene. SGD events can be generated dynamically and subtly to increase the frequency of visual suppression events, which provides more opportunities to rotate the virtual environment without being noticed. In contrast to traditional subtle gaze direction techniques, which alter the virtual environment in virtual space, the SGD events can be generated in image space: one or more pixels in the rendered frame of a scene of the virtual environment are modified. Introducing the SGD in image space reduces the latency between when the SGD is initiated and when it is visible to the user.

In one embodiment, pixels in the user’s peripheral vision are temporally modulated to create an SGD event. A content-aware method prioritizes the placement of stimuli in high-contrast regions to improve the effectiveness of the modulation. Searching for pixels with high local contrast in every frame is computationally expensive. In one embodiment, the contrast of the rendered image is therefore computed on a downsampled version of the frame. In one embodiment, the downsampled image is obtained by generating “multum in parvo” texture maps (i.e., MIPMAPs) for the current frame. The SGD stimulus is created by modulating luminance in a Gaussian-shaped region surrounding the high-contrast region, which includes one or more pixels. TABLE 1 shows an example algorithm for searching for the high-contrast region in the downsampled rendered frame.
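The search described above can be approximated with the following minimal sketch. It is not the algorithm of TABLE 1, which is not reproduced here. It assumes a grayscale luminance image stored as a list of rows with even dimensions, measures local contrast as the maximum minus the minimum over a 3x3 neighborhood, and treats any pixel farther than `min_distance` from the gaze point as peripheral. All of these choices, and all function names, are assumptions made for illustration.

```python
import math


def downsample(image):
    """Halve a luminance image by averaging 2x2 blocks (one MIP level)."""
    h, w = len(image) // 2, len(image[0]) // 2
    return [[(image[2 * y][2 * x] + image[2 * y][2 * x + 1] +
              image[2 * y + 1][2 * x] + image[2 * y + 1][2 * x + 1]) / 4.0
             for x in range(w)] for y in range(h)]


def local_contrast(image, x, y):
    """Max minus min luminance in the 3x3 neighborhood, clipped at borders."""
    h, w = len(image), len(image[0])
    values = [image[j][i]
              for j in range(max(0, y - 1), min(h, y + 2))
              for i in range(max(0, x - 1), min(w, x + 2))]
    return max(values) - min(values)


def find_stimulus_location(image, gaze, min_distance, levels=1):
    """Find the highest-contrast peripheral pixel of the downsampled frame.

    Returns its center in full-resolution coordinates, or None if every
    coarse pixel lies within min_distance of the gaze point.
    """
    small = image
    for _ in range(levels):
        small = downsample(small)
    scale = 2 ** levels
    best, best_xy = -1.0, None
    for y, row in enumerate(small):
        for x in range(len(row)):
            fx, fy = (x + 0.5) * scale, (y + 0.5) * scale
            if math.hypot(fx - gaze[0], fy - gaze[1]) < min_distance:
                continue  # too close to the fovea to count as peripheral
            contrast = local_contrast(small, x, y)
            if contrast > best:
                best, best_xy = contrast, (fx, fy)
    return best_xy


def gaussian_weight(px, py, center, sigma):
    """Weight of pixel (px, py) in a Gaussian-shaped stimulus region."""
    d_sq = (px - center[0]) ** 2 + (py - center[1]) ** 2
    return math.exp(-d_sq / (2.0 * sigma * sigma))
```

The weight returned by `gaussian_weight` could scale a time-varying luminance offset so that the modulation fades smoothly away from the chosen location. Searching the coarse image trades positional accuracy for a large reduction in per-frame work.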

FIG. 1C is a diagram of a user’s path in a virtual space 170 and a physical environment 160, according to an embodiment. The user’s physical path 165 is restricted to the physical environment, or physical space, 160. The user, however, perceives that they are traveling along a virtual path in the virtual space, or virtual environment, 170. In one embodiment, the method provides a dynamic solution to the infinite-walking problem and is effective in physical areas as small as 12.25 m². The 12.25 m² area is the same as the recommended consumer HMD room-scale installation limits. Redirecting the user both during visual suppression events caused by eye movements and during head rotations allows for more aggressive redirection, because there are more opportunities to redirect.

FIG. 1D is a block diagram of a virtual reality system 150, according to an embodiment. The efficacy of redirection during visual suppression events depends on several factors, including the frequency and duration of visual suppression events, the perceptual tolerance of image displacement during visual suppression events, and the eye-tracking-to-display latency of the system 150.
