Invented by Quinn Hawkins, Pulin Thakkar, Kapil Sharma, Avronil Bhattacharjee, Adam Eversole, Bo Qin, Microsoft Technology Licensing LLC

The Microsoft Technology Licensing LLC invention works as follows

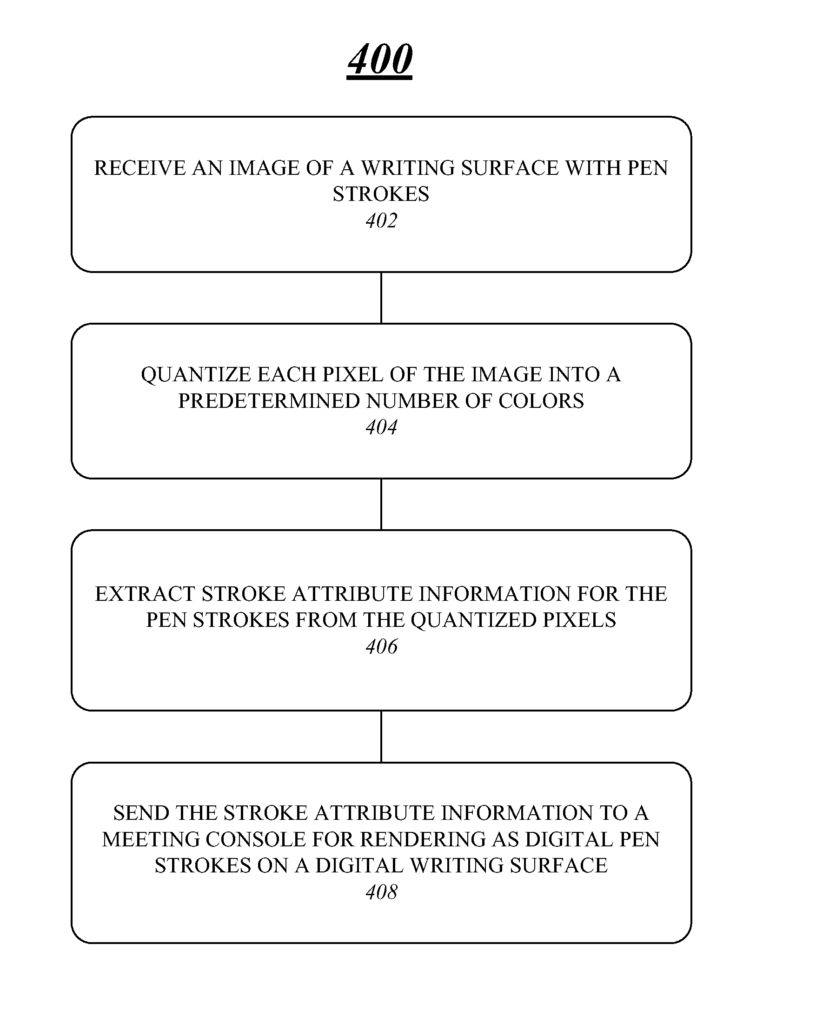

Techniques for managing a whiteboard during multimedia conference events are described. An apparatus may include a whiteboard manager component to manage whiteboard recording and image processing operations for a multimedia conference event. The whiteboard manager component may include an image quantizer module operative to receive an image with pen strokes and to quantize each pixel into one of a predetermined number of colors. It may also include an attribute extractor module communicatively coupled to the image quantizer module and operative to extract stroke attributes for the pen strokes from the quantized pixels. Other embodiments are described and claimed.
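The abstract does not say how the image quantizer assigns colors. As a minimal sketch, assuming nearest-palette-color quantization in RGB space and a hypothetical five-color palette (`PALETTE`, `nearest_color`, and `quantize` are illustrative names, not part of the described system), the quantization step might look like this:

```python
# Hypothetical palette: the source specifies only "a predetermined number of colors";
# these five typical whiteboard and marker colors are an assumption.
PALETTE = {
    "white": (255, 255, 255),
    "black": (0, 0, 0),
    "red": (220, 30, 30),
    "green": (30, 160, 60),
    "blue": (30, 60, 200),
}


def nearest_color(pixel):
    """Return the palette name closest to an (r, g, b) pixel by squared Euclidean distance."""
    r, g, b = pixel
    return min(
        PALETTE,
        key=lambda name: (PALETTE[name][0] - r) ** 2
        + (PALETTE[name][1] - g) ** 2
        + (PALETTE[name][2] - b) ** 2,
    )


def quantize(image):
    """Map every pixel of a row-major RGB image to the name of its palette color."""
    return [[nearest_color(px) for px in row] for row in image]


# Usage: a 2x2 image of off-white board, dark ink, red ink, and blue ink.
print(quantize([[(250, 248, 245), (10, 12, 8)], [(200, 40, 35), (40, 70, 190)]]))
```

Reducing the image to a handful of labels in this way is what makes the later stroke-extraction step simple, since each pixel is either board background or one of a few ink colors.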

Background for Techniques for managing a whiteboard during multimedia conferences

A multimedia conference system allows multiple participants to communicate and share different types of media content in a real-time collaborative meeting over a network. The system may display the different types of media using various graphical user interface (GUI) windows or views. For example, one GUI view might include video images of participants, another might include presentation slides, and yet another might include text messages. In this manner, geographically dispersed participants can interact and exchange information in a virtual environment similar to a physical meeting where everyone is in one room.

In some cases, participants in a multimedia conference event may gather in a meeting room. A whiteboard or other writing surface may be used for notes, diagrams, and other non-permanent markings. Due to limitations of input devices such as video cameras, however, it may be difficult for remote viewers to see the whiteboard and any writing on it. A common solution is an interactive or electronic whiteboard that converts markings made on the writing surface into digital information. An interactive whiteboard, however, may be expensive due to its hardware and software requirements, and may be complex to use because of the configuration needed to set it up and operate it. Another alternative is a pen designed specifically for whiteboards, but it has similar limitations. It is with respect to these and other considerations that the present improvements are needed.

Various embodiments may be generally directed to multimedia conference systems. Some embodiments may be particularly directed to techniques for managing recordings of a multimedia conference event. A multimedia conference event may involve multiple participants, some of whom may gather in a conference room while others participate remotely.

In one embodiment, an apparatus may include a whiteboard manager component that manages whiteboard recording, image processing, and reproduction operations for a multimedia conference event. Among other elements, the whiteboard manager component may include an image quantizer module operative to receive an image of a writing surface with pen strokes and to quantize each pixel into one of a predetermined number of colors. The whiteboard manager component may also include an attribute extractor module communicatively coupled to the image quantizer module and operative to extract stroke attribute information for the pen strokes from the quantized pixels. The whiteboard manager component may further include a whiteboard module communicatively coupled to the attribute extractor module and operative to send the stroke attribute information to a meeting console for rendering as digital pen strokes on a digital writing surface. Other embodiments are described and claimed.
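The summary leaves the exact stroke attributes open. The sketch below assumes that a stroke is a 4-connected region of same-colored, non-background quantized pixels, and that its attributes are its color, bounding box, and pixel coordinates. The background label, function names, and the JSON message format sent to the meeting console are all hypothetical.

```python
import json
from collections import deque

BACKGROUND = "white"  # assumption: the board surface quantizes to "white"


def extract_strokes(quantized):
    """Group 4-connected, same-colored, non-background pixels into stroke records."""
    height = len(quantized)
    width = len(quantized[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    strokes = []
    for y in range(height):
        for x in range(width):
            color = quantized[y][x]
            if color == BACKGROUND or seen[y][x]:
                continue
            # Breadth-first flood fill collects every pixel of one connected stroke.
            points = []
            queue = deque([(x, y)])
            seen[y][x] = True
            while queue:
                cx, cy = queue.popleft()
                points.append((cx, cy))
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    if 0 <= nx < width and 0 <= ny < height and not seen[ny][nx]:
                        if quantized[ny][nx] == color:
                            seen[ny][nx] = True
                            queue.append((nx, ny))
            xs = [p[0] for p in points]
            ys = [p[1] for p in points]
            strokes.append({
                "color": color,
                "bbox": [min(xs), min(ys), max(xs), max(ys)],  # [x0, y0, x1, y1]
                "points": sorted(points),
            })
    return strokes


def to_message(strokes):
    """Serialize stroke attributes as compact JSON (hypothetical wire format)."""
    return json.dumps({"type": "stroke_attributes", "strokes": strokes}, separators=(",", ":"))


grid = [
    ["black", "black", "white"],
    ["white", "white", "red"],
    ["white", "white", "red"],
]
print(to_message(extract_strokes(grid)))
```

A receiving meeting console could redraw each stroke from its color and point list; a fuller vectorization step would likely fit curves to the points rather than ship every pixel.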

This Summary is provided to introduce a selection of concepts in a simplified form that are further described below in the Detailed Description. This Summary is not intended to identify key features or essential features of the claimed subject matter, nor is it intended to be used to limit the scope of the claimed subject matter.

Various embodiments may include physical or logical structures arranged to perform certain operations, functions, or services. The structures may be physical, logical, or a combination of both, and may be implemented using hardware elements, software elements, or a combination of both. Descriptions of embodiments with reference to particular hardware or software elements are meant as examples and not limitations. Whether an embodiment is implemented using hardware or software elements may vary in accordance with a number of external factors, such as desired computational rates, power levels, heat tolerances, processing cycle budget, memory resources, data bus speeds, and other performance or design constraints. The physical or logical structures may also have corresponding connections to communicate information between the structures in the form of electronic signals or messages. These connections may be wired or wireless, as appropriate for the information or the particular structure. Any reference to “one embodiment” or “an embodiment” means that a particular feature, structure, or characteristic described in connection with the embodiment is included in at least one embodiment. The appearances of the phrase “in one embodiment” in various places in the specification do not necessarily all refer to the same embodiment.

A multimedia conference system may include, among other network elements, a multimedia conference server or other processing device arranged to provide web conferencing services. For example, a multimedia conference server may include a server component that controls and mixes different types of media content for a meeting and collaboration event, such as a web conference. A meeting and collaboration event may refer to any multimedia conference event that offers various types of multimedia content in a live or real-time online environment, and is sometimes referred to herein more generally as a “multimedia event” or “multimedia conference event.”

In one embodiment, a multimedia conference system can include one or multiple computing devices that are implemented as meeting consoles. The multimedia conference server can be connected to each meeting console to allow it to take part in a multi-media event. The multimedia conference server may receive different types of information from various meeting consoles during the event. It then distributes that information to all or some of the meeting consoles taking part in the event. This allows geographically dispersed participants to interact and exchange information in a virtual environment that is similar to an actual meeting where everyone is present.

To facilitate collaboration during a multimedia conference event, a whiteboard or other writing surface may be used for notes, diagrams, and other non-permanent markings. Due to limitations of input devices such as video cameras, however, it may be difficult for remote viewers to see the whiteboard and any writing on it. Conventional solutions, such as electronic pens and interactive whiteboards, can be prohibitively expensive due to their hardware and software requirements.

An alternative to electronic pens and interactive whiteboards is to use a video camera to capture and filter images of the whiteboard and any markings made on it. For example, a Real-Time Whiteboard Capture System (RTWCS) uses a camera to capture pen strokes on a whiteboard in real time, without requiring any modification to the whiteboard or pens. The RTWCS analyzes the video sequence in real time, classifies pixels as pen strokes, whiteboard background, or foreground objects (e.g., people in front of the whiteboard), and extracts newly written pen strokes. The images are then enhanced for clarity and sent to a device for display to remote viewers. Although an RTWCS offers many advantages, it may consume scarce communication bandwidth because the media content is transmitted in the form of whiteboard images.
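The classification actually performed by an RTWCS is more involved than what is shown here. The code below is a deliberately simplified per-pixel sketch that only illustrates the three-way labeling. It assumes a known whiteboard background color and two hypothetical thresholds: pixels that change between consecutive frames are treated as foreground (e.g., a moving person), stable pixels close to the board color as background, and the remaining stable pixels as pen strokes.

```python
def color_distance(a, b):
    """Euclidean distance between two RGB triples."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def classify_pixels(prev_frame, frame, background, bg_thresh=40.0, motion_thresh=30.0):
    """Label each pixel of `frame` as "foreground", "background", or "stroke".

    bg_thresh and motion_thresh are illustrative values, not taken from RTWCS.
    """
    labels = []
    for prev_row, row in zip(prev_frame, frame):
        out = []
        for prev_px, px in zip(prev_row, row):
            if color_distance(prev_px, px) > motion_thresh:
                out.append("foreground")  # changed since last frame: likely a moving object
            elif color_distance(px, background) <= bg_thresh:
                out.append("background")  # stable and close to the estimated board color
            else:
                out.append("stroke")  # stable and distinct from the board: treated as ink
        labels.append(out)
    return labels


board = (240, 240, 235)  # assumed estimate of the whiteboard color
prev = [[(240, 240, 235), (20, 20, 20), (240, 240, 235)]]
curr = [[(238, 239, 236), (22, 20, 19), (90, 80, 70)]]
print(classify_pixels(prev, curr, board))
```

Per-pixel frame differencing is fragile under camera noise and lighting changes, which is one reason practical systems typically classify over larger regions and multiple frames.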

To solve these and other problems, embodiments may implement various enhanced whiteboard management techniques. Some embodiments use an RTWCS to capture whiteboard images in real time. The embodiments may then use a vectorization technique to analyze the images and to identify and extract relevant attribute information about the whiteboard and the pen strokes on it. The attribute information may then be communicated to a remote device, which converts it into digital representations of the whiteboard and the pen strokes. This approach is less costly because communicating the attribute information requires less bandwidth than communicating images. A remote user may also edit and manipulate the digital representations of the whiteboard and pen strokes, enabling interactive whiteboard sessions between a remote user viewing the digital representations and a local participant writing on the whiteboard. It may also be beneficial to record the multimedia conference event so that the comments of remote viewers are preserved. In this manner, a lower-cost whiteboard solution can be used for a multimedia conference event.
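To make the bandwidth argument concrete, the arithmetic below compares one uncompressed frame with a compact binary encoding of stroke attributes. The frame size (1280 x 960, 24-bit RGB), the stroke count and length (50 strokes of 100 points each), and the byte layout used by the hypothetical `encode_stroke` are all assumptions chosen for illustration. Real systems would typically compress the images, so the actual savings would be smaller than this raw comparison suggests.

```python
import struct


def raw_image_bytes(width, height, bytes_per_pixel=3):
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def encode_stroke(color_index, points):
    """Pack one stroke (hypothetical layout, little-endian, no alignment):
    1-byte color index, 1 pad byte, 2-byte point count,
    then each (x, y) as two unsigned 16-bit integers."""
    header = struct.pack("<BxH", color_index, len(points))
    flat = [coord for point in points for coord in point]
    return header + struct.pack("<%dH" % len(flat), *flat)


stroke = encode_stroke(1, [(i, i) for i in range(100)])
stroke_payload = 50 * len(stroke)
image_payload = raw_image_bytes(1280, 960)
print(len(stroke), stroke_payload, image_payload)
```

Under these assumptions each stroke packs into 404 bytes, so the 50 strokes total 20,200 bytes, compared with 3,686,400 bytes for a single raw frame.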

FIG. 1 illustrates a block diagram of a multimedia conference system 100. Multimedia conference system 100 may represent a general system architecture suitable for implementing various embodiments. Multimedia conference system 100 may include multiple elements. An element may be any physical or logical structure arranged to perform certain operations. Each element may be implemented as hardware, software, or any combination of the two, as desired for a given set of design parameters or performance constraints. Examples of hardware elements include semiconductor devices, chips, microchips, chip sets, processors, and microprocessors. Examples of software include software modules, routines, subroutines, functions, methods, and interfaces. Although multimedia conference system 100 as shown in FIG. 1 has a limited number of elements in a certain topology, it may include more or fewer elements in alternative topologies as desired for a given implementation. The embodiments are not limited in this context.

In various embodiments, multimedia conference system 100 may be part of a wired communications system, a wireless communications system, or a combination of both. For example, multimedia conference system 100 may include one or more elements arranged to communicate information over one or more types of wired communications links. Examples of wired communications links include a wire, cable, printed circuit board, Ethernet connection, peer-to-peer (P2P) connection, backplane, switch fabric, semiconductor material, twisted-pair wire, coaxial cable, fiber optic connection, and so forth. Multimedia conference system 100 may also include one or more elements arranged to communicate information over one or more types of wireless communications links. Examples of wireless communications links include a radio channel, infrared channel, radio-frequency (RF) channel, Wireless Fidelity (WiFi) channel, a portion of the RF spectrum, and so forth.

In various embodiments, multimedia conference system 100 may be arranged to communicate, manage, or process different types of information, such as media information and control information. Media information may include any data representing content meant for a user, such as voice, video, audio, image, textual, numerical, application, alphanumeric, or graphical information. Media information may also be referred to as “media content.” Control information may refer to any data representing commands, instructions, or control words meant for an automated system. For example, control information may be used to route media information through a system, to establish a connection between devices, or to instruct a device to process media information in a predetermined manner.
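The distinction between media information and control information can be pictured as two message types that a system element handles differently. This is a sketch only: the class names, fields, example commands, and the `handle` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class MediaInfo:
    """Content meant for a user, e.g. voice, video, image, or text."""
    kind: str
    payload: bytes


@dataclass
class ControlInfo:
    """A command meant for an automated system (hypothetical commands such as "connect")."""
    command: str
    target: str


Message = Union[MediaInfo, ControlInfo]


def handle(message: Message) -> str:
    """Describe how an element would treat the message: act on control, forward media."""
    if isinstance(message, ControlInfo):
        return f"control:{message.command}->{message.target}"
    return f"media:{message.kind}:{len(message.payload)}B"


print(handle(MediaInfo("video", b"\x00" * 10)))
print(handle(ControlInfo("connect", "console-2")))
```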

In various embodiments, multimedia conference system 100 may include a multimedia conference server 130. Multimedia conference server 130 may be any logical or physical entity arranged to establish, manage, or control a multimedia conference call between meeting consoles 110-1-m over a network 120. Network 120 may be a packet-switched network, a circuit-switched network, or a combination of both. In various embodiments, multimedia conference server 130 may comprise or be implemented as any processing or computing device, such as a computer, a server, a server array or server farm, a workstation, a minicomputer, or a mainframe computer. Multimedia conference server 130 may comprise or implement a computing architecture suitable for communicating and processing multimedia information. In one embodiment, for example, multimedia conference server 130 may be implemented using a computing architecture as described with reference to FIG. 5. Examples of multimedia conference server 130 include, without limitation, a Microsoft Office Communications Server, a Microsoft Office Live Meeting server, and so forth.

The specific implementation of multimedia conference server 130 may vary depending on the communications protocols or standards in use. In one example, multimedia conference server 130 may be implemented in accordance with the Session Initiation Protocol (SIP) series of standards and/or variants, developed by the Internet Engineering Task Force (IETF) Multiparty Multimedia Session Control (MMUSIC) Working Group. SIP is a proposed standard for initiating and terminating interactive user sessions that involve multimedia elements such as video, voice, instant messaging, online games, and virtual reality. In another example, multimedia conference server 130 may be implemented in accordance with the International Telecommunication Union (ITU) H.323 series of standards and/or variants. The H.323 standard defines a multipoint control unit (MCU) to coordinate conference call operations. The MCU includes a multipoint controller (MC) that handles H.245 signaling, and one or more multipoint processors (MPs) to mix and process the data streams. Both SIP and H.323 are essentially signaling protocols for Voice over Internet Protocol (VoIP) or Voice over Packet (VoP) multimedia conference call operations. Other signaling protocols may be implemented for multimedia conference server 130 and still fall within the scope of the embodiments.
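For illustration, a minimal SIP INVITE request of the kind a meeting console might send to initiate a session is sketched below, following the request-line and mandatory-header layout of RFC 3261. The addresses, port, and helper name `build_invite` are hypothetical. A real INVITE would normally carry an SDP body describing the offered media; that body is omitted here, hence `Content-Length: 0`.

```python
import uuid


def build_invite(caller, callee, host, port=5060):
    """Return a minimal SIP INVITE request (no SDP body) as a CRLF-delimited string."""
    branch = "z9hG4bK" + uuid.uuid4().hex[:16]  # RFC 3261 branch IDs start with this magic cookie
    tag = uuid.uuid4().hex[:8]  # From-tag identifying the caller's side of the dialog
    call_id = uuid.uuid4().hex + "@" + host  # globally unique call identifier
    lines = [
        f"INVITE sip:{callee} SIP/2.0",
        f"Via: SIP/2.0/UDP {host}:{port};branch={branch}",
        "Max-Forwards: 70",
        f"To: <sip:{callee}>",
        f"From: <sip:{caller}>;tag={tag}",
        f"Call-ID: {call_id}",
        "CSeq: 1 INVITE",
        f"Contact: <sip:{caller.split('@')[0]}@{host}:{port}>",
        "Content-Length: 0",
    ]
    # Headers end with an empty line (CRLF CRLF) before the (empty) message body.
    return "\r\n".join(lines) + "\r\n\r\n"


print(build_invite("alice@example.com", "conference@example.com", "10.0.0.5"))
```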

Regardless of the specific communications protocols and standards used in a given implementation, multimedia conference server 130 typically includes two types of MCUs. The first is an AV MCU 134, used to process and distribute AV signals among the meeting consoles 110-1-m. The second is a data MCU, used to process and distribute data signals among the meeting consoles 110-1-m.
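The split between the two MCUs can be pictured as a dispatch on stream type. This sketch assumes that audio and video streams go to the AV MCU and everything else (for example, whiteboard stroke attributes) goes to the data MCU, and that a stream is forwarded to every console except its sender. The function names and console identifiers are hypothetical.

```python
AV_TYPES = frozenset({"audio", "video"})


def select_mcu(stream_type):
    """Pick the MCU responsible for a stream (assumed rule: audio/video vs. everything else)."""
    return "AV MCU" if stream_type in AV_TYPES else "data MCU"


def dispatch(sender, stream_type, consoles):
    """Return the handling MCU and the consoles that should receive the stream."""
    return select_mcu(stream_type), [c for c in consoles if c != sender]


consoles = ["110-1", "110-2", "110-3"]
print(dispatch("110-1", "video", consoles))
print(dispatch("110-2", "whiteboard_strokes", consoles))
```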

In general operation, multimedia conference system 100 may be used for multimedia conference calls. Multimedia conference calls typically involve communicating voice, video, and/or data information between multiple endpoints. For example, a public or private packet network 120 may be used for audio conferencing calls, video conferencing calls, audio/video conferencing calls, collaborative document sharing, and so forth. Packet network 120 may also be connected to the Public Switched Telephone Network (PSTN) via one or more VoIP gateways that convert between circuit-switched information and packet information.

To establish a multimedia conference call over packet network 120, each meeting console 110-1-m may connect to multimedia conference server 130 using various types of wired or wireless communications links operating at varying connection speeds, such as a high-bandwidth intranet connection over a local area network (LAN), or a digital subscriber line (DSL) or cable modem connection.

In various embodiments, multimedia conference server 130 may establish, manage, and control a multimedia conference call between meeting consoles 110-1-m. In some embodiments, the multimedia conference call may be a live web-based conference call using a web conferencing application that provides full collaboration capabilities. Multimedia conference server 130 operates as a central server that controls and distributes media information during the conference. It receives media information from the various meeting consoles 110-1-m, performs mixing operations for the different types of media information, and forwards the media information to some or all of the participants. One or more of the meeting consoles 110-1-m may join a conference by connecting to multimedia conference server 130, which may use various admission control techniques to authenticate and add meeting consoles 110-1-m in a secure and controlled manner.
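The text does not specify the admission control or mixing techniques. The sketch below assumes token-based admission against a hypothetical set of pre-issued tokens, and a common audio "mix-minus" approach in which each listener receives the clipped sum of every other admitted participant's 16-bit PCM samples, so no one hears their own voice echoed back. The class and method names are illustrative only.

```python
INT16_MIN, INT16_MAX = -32768, 32767


class ConferenceServer:
    """Toy central server: admission control plus per-listener audio mixing."""

    def __init__(self, valid_tokens):
        self.valid_tokens = set(valid_tokens)  # hypothetical pre-issued credentials
        self.participants = set()

    def admit(self, console_id, token):
        """Add a console to the conference only if it presents a recognized token."""
        if token not in self.valid_tokens:
            return False
        self.participants.add(console_id)
        return True

    def mix_for(self, listener, frames):
        """Mix-minus: sum admitted participants' samples except the listener's own,
        clipping each summed sample to the signed 16-bit range."""
        sources = [s for cid, s in frames.items() if cid in self.participants and cid != listener]
        if not sources:
            return []
        length = min(len(s) for s in sources)
        return [max(INT16_MIN, min(INT16_MAX, sum(s[i] for s in sources))) for i in range(length)]


server = ConferenceServer({"t1", "t2", "t3"})
server.admit("110-1", "t1")
server.admit("110-2", "t2")
server.admit("110-3", "t3")
frames = {"110-1": [100, 30000], "110-2": [200, 10000], "110-9": [5, 5]}  # 110-9 not admitted
print(server.mix_for("110-1", frames))
print(server.mix_for("110-3", frames))
```

Clipping keeps the mixed output within the sample format; production mixers more often scale or apply automatic gain control to avoid the distortion that hard clipping introduces.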