Invented by Jeffrey Roger Stafford, Michael Taylor, Sony Interactive Entertainment Inc

The Sony Interactive Entertainment Inc invention works as follows

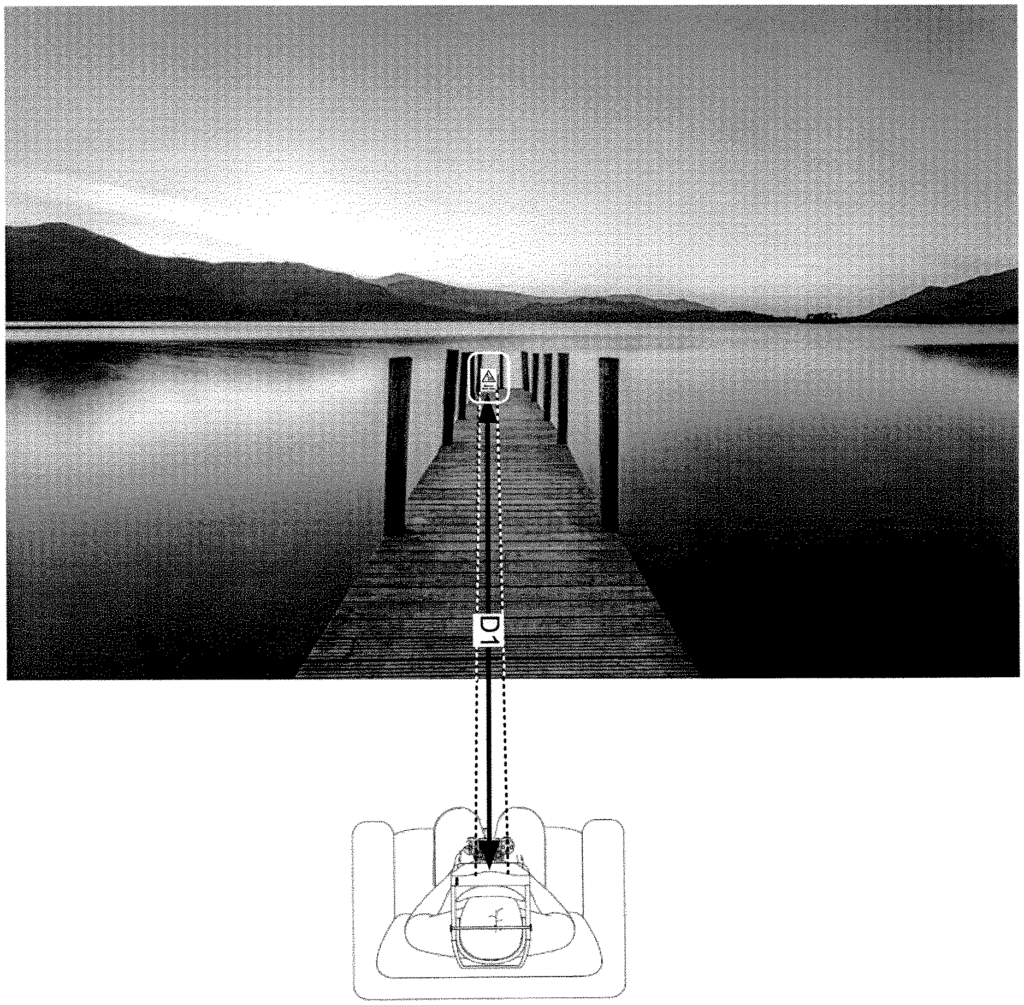

The methods and systems for presenting objects on the screen of a head-mounted display (HMD) include receiving an image of a real-world environment in close proximity to a user wearing the HMD. A processor in the HMD receives the image from one or more forward-facing cameras, and the HMD renders it on the screen. One or more gaze-detecting cameras on the HMD are directed at the wearer’s eye or eyes to detect the gaze direction. The images captured by the forward-facing cameras are analyzed to identify an object in the real-world environment that aligns with the user’s gaze direction. The image of the object is rendered at a first virtual distance, which causes the object to appear out of focus when shown to the user. A signal is generated to adjust the zoom factor of the lens of the one or more forward-facing cameras so as to bring the object into focus. The zoom factor adjustment causes the image of the object to appear on the screen of the HMD at a second virtual distance, at which the object is discernible to the user.

Background for HMD Transitions for Focusing on Specific Content in Virtual Reality Environments

Description of Related Art

The video game and computing industries have undergone many changes in recent years. As computing power has increased, developers of interactive applications such as video games have created application software that takes advantage of it. To this end, video game developers have been building games that incorporate sophisticated operations to increase the interaction between the user and the gaming system, producing a more realistic game experience.

Gesture input generally refers to having an electronic device such as a computer system, video game console, or smart appliance respond to a gesture made by the player and captured by the electronic device. Wireless game controllers are one way to provide gesture input for a more interactive experience. The gaming system tracks the movement of the wireless game controllers to determine the gesture input provided by a player and then uses this input to affect the state of the game.

A head-mounted display is another way to achieve a more immersive experience. The player wears a head-mounted display, which can be programmed to display various graphics on the display screen, including a view of an imaginary scene. The graphics on the screen can cover the entire field of vision of a player. Head-mounted displays can create a more immersive visual experience for the player, by blocking out the real-world environment.

To enhance the immersive experience of the player, the HMD can be configured to render a game scene generated by a computer or computing device, real-world images, or a mixture of the two. However, the player may not be able to discern all of the details or objects in the real-world images that are displayed on the HMD display screen.

Embodiments of the invention arise in this context.

Embodiments of the present invention describe methods, systems, and computer-readable media for presenting objects from a real-world environment to a user by bringing them into focus on the screen of an HMD. In some implementations, the HMD receives images of a real-world environment or a virtual reality (VR) scene and renders them on its screen. While the images are rendered, information from one or more sensors in the HMD is used to detect the user’s gaze direction. Once the gaze direction is detected, the object the user is looking at is identified in the images of the real-world environment, and a signal is generated to adjust the zoom factor of one or more forward-facing cameras on the HMD. The zoom factor adjustment brings the image of the real-world environment that includes the object into focus on the HMD screen. In some implementations, the HMD takes the vision characteristics of the user’s eyes into account when determining the zoom factor for the lens. In some implementations, the sensors determine whether the user has gazed at the object for at least a threshold period of time before the signal to adjust the zoom factor is generated. In some implementations, the signal that carries instructions for adjusting the zoom factor also includes information for adjusting the optical settings of the lens to allow optical zooming. In some implementations, the signal also includes instructions for digitally zooming the image. Depending on the type of images being rendered, the signal may include instructions or information for optical zoom or for digital zoom.
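Digital zoom can be pictured as cropping the captured frame around the point of interest and scaling that crop back up to the screen size. The sketch below is a minimal illustration under that assumption; the function name `digital_zoom_crop` and its parameters are hypothetical and do not come from the patent.

```python
def digital_zoom_crop(width, height, center_x, center_y, zoom_factor):
    """Return (left, top, crop_w, crop_h) of the frame region to enlarge.

    Hypothetical helper: the crop covers 1/zoom_factor of the frame in each
    dimension, is centered on the gaze point, and is clamped to the frame.
    """
    if zoom_factor < 1.0:
        raise ValueError("zoom_factor must be >= 1.0")
    crop_w = width / zoom_factor
    crop_h = height / zoom_factor
    # Clamp so the crop rectangle never extends past the frame edges.
    left = min(max(center_x - crop_w / 2, 0.0), width - crop_w)
    top = min(max(center_y - crop_h / 2, 0.0), height - crop_h)
    return left, top, crop_w, crop_h
```

Optical zoom, by contrast, would change the lens itself, so no pixels are discarded; the crop-based approach trades resolution for magnification.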

The implementations allow a user to discern specific objects or points in images captured of a real-world environment. The embodiments can be extended to provide an enhanced view of a VR scene on the screen of the HMD by bringing an object in the VR scene into focus. In some embodiments, the generated signal takes the user’s vision characteristics into account when adjusting the zoom factor. Enhancing or augmenting the VR scene or the real-world scene gives the user a more immersive experience.

The images from the VR scene are usually pre-recorded images or videos. In some embodiments, when images from a VR scene are rendered on the HMD, historical inputs from other users who have viewed those images may be taken into account when generating the signal to adjust the zoom factor. For example, historical inputs from users can be correlated with content from the VR scene to determine which zoom factor settings caused dizziness or motion sickness. This information can be used to refine the zoom factor adjustment for the lens of the one or more forward-facing cameras when rendering the VR content, so that the user does not experience motion sickness, nausea, etc., when the images from the VR scene are presented on the HMD. In some embodiments, users (e.g., thrill seekers) may have the option to override the refinement and view the VR content at a specific zoom factor setting.
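One way to picture this refinement is to keep, for each zoom setting, a count of discomfort reports from earlier viewers and to cap the requested zoom at the highest setting whose report rate stays under a tolerance. The sketch below assumes that data layout; the name `refine_zoom`, the `history` mapping, and the 10% default tolerance are all hypothetical.

```python
def refine_zoom(requested, history, max_discomfort_rate=0.1, override=False):
    """Pick a zoom factor informed by other users' historical inputs.

    history maps zoom factor -> (discomfort_reports, total_views).
    This layout is an assumption made for illustration only.
    """
    if override:
        # The user chose to bypass the refinement.
        return requested
    safe = [
        zoom
        for zoom, (reports, views) in history.items()
        if zoom <= requested and views > 0 and reports / views <= max_discomfort_rate
    ]
    # Fall back to no magnification when no setting qualifies.
    return max(safe, default=1.0)
```

The `override` flag corresponds to the option that lets a user view the content at a specific zoom factor regardless of the refinement.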

The embodiments of this invention allow the HMD to act as virtual binoculars by allowing specific portions of an image of a VR scene or a real-world environment to be zoomed in through adjustment of the zoom factor of the image-capturing devices. Inputs from the HMD’s controllers and sensors can be used to dynamically activate or deactivate particular settings of the lens of the one or more forward-facing cameras so that the user can see the content clearly. Alternatively, specific features or portions of the image can be adjusted to bring an object that contains those features or portions into focus. In some implementations, the HMD is used to virtually teleport the user from one location in the VR scene or real-world environment to another. This teleportation enhances the user’s VR or augmented reality (AR) experience. For example, the HMD may bring a particular area or portion of the VR scene into focus to give the impression that the user has been teleported close to it.

In one embodiment, a method for presenting an object from a real-world environment on the screen of a head-mounted display (HMD) is disclosed. The method involves receiving an image of the real-world environment in close proximity to a user wearing the HMD. A processor in the HMD receives the image from one or more forward-facing cameras, and the HMD renders it on the screen. One or more gaze-detecting cameras on the HMD are directed at the wearer’s eye or eyes to detect the gaze direction. The images captured by the forward-facing cameras are analyzed to identify an object in the real-world environment that correlates with the user’s gaze direction. The image of the object is rendered at a first virtual distance, which causes the object to appear out of focus when shown to the user. A signal is generated to adjust the zoom factor of the lens of the one or more forward-facing cameras so as to bring the object into focus. The zoom factor adjustment causes the image of the object to appear on the screen of the HMD at a second virtual distance, at which the object is discernible to the user.
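The gaze-matching step can be sketched as choosing the detected object whose direction from the viewer deviates least from the gaze direction. The example below assumes that the gaze direction and the object positions are non-zero 3D vectors in a shared, viewer-centered coordinate frame. The function names, the 5-degree tolerance, and the idea of expressing the zoom factor as the ratio of the first to the second virtual distance are illustrative assumptions, not details stated in the patent.

```python
import math

def angle_between(a, b):
    """Angle in radians between two non-zero 3D vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / norm)))

def select_gazed_object(gaze_dir, objects, max_angle_deg=5.0):
    """Return the name of the object best aligned with the gaze, or None.

    objects is a list of (name, position) pairs, with positions given
    relative to the viewer. The tolerance is a hypothetical parameter.
    """
    best_name, best_angle = None, math.radians(max_angle_deg)
    for name, position in objects:
        angle = angle_between(gaze_dir, position)
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name

def zoom_factor_for(first_distance, second_distance):
    """Assumed relation: magnification needed to make an object rendered at
    first_distance appear as if it were at second_distance."""
    return first_distance / second_distance
```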

In some implementations, a signal is generated when the user’s gaze is directed at the object for a preset period of time.
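A dwell check of this kind can be sketched as a small state machine that restarts a timer whenever the gazed object changes. The class name `DwellDetector` and the 1.5-second default are hypothetical; the patent only says that the period is preset.

```python
class DwellDetector:
    """Reports when the gaze has rested on one object long enough."""

    def __init__(self, threshold_s=1.5):
        self.threshold_s = threshold_s
        self._target = None
        self._since = None

    def update(self, target, now_s):
        """Feed the currently gazed object (or None) and a timestamp.

        Returns True once the same object has been gazed at for at least
        threshold_s seconds, which is when the zoom signal would be sent.
        """
        if target != self._target:
            # Gaze moved to a different object: restart the timer.
            self._target = target
            self._since = now_s
            return False
        return target is not None and now_s - self._since >= self.threshold_s
```

Calling `update` once per gaze sample keeps the logic independent of the sampling rate, because only timestamps are compared.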

In some implementations, the object is brought into focus for a predefined period of time. After that period expires, the HMD resumes rendering the images of the real-world environment.

In some implementations, the zoom factor is adjusted to take into account the vision characteristics of the eyes of the user wearing the HMD.

In some implementations, the signal for adjusting the zoom factor includes a signal that adjusts the aperture setting of the lens in the one or more forward-facing cameras, changing the depth at which the image of the object is captured by those cameras.
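The effect of an aperture change on the range of distances that appear acceptably sharp can be illustrated with the standard thin-lens depth-of-field approximation. The sketch below is general optics rather than anything specified in the patent; the function name and the 0.03 mm circle of confusion are assumptions.

```python
import math

def depth_of_field(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Return (near_limit_mm, far_limit_mm) of acceptable sharpness.

    Uses the hyperfocal-distance approximation. coc_mm is the circle of
    confusion; 0.03 mm is a commonly quoted value, assumed here.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        # Everything beyond the near limit is acceptably sharp.
        far = math.inf
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far
```

A larger f-number (smaller aperture) widens the sharp range, while a smaller f-number narrows it around the subject distance.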

In some implementations, the signal for adjusting the zoom factor includes a signal that adjusts the focal length of the lens of the one or more forward-facing cameras when capturing images of the object in the real-world environment. The focal length adjustment causes zooming in on the object.
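For an object much farther away than the focal length, the size of its image on the sensor is approximately proportional to the focal length, so a desired zoom factor maps to a proportional focal length change. The sketch below illustrates that relation along with the resulting narrowing of the field of view; the function names and the sensor width parameter are hypothetical.

```python
import math

def focal_length_for_zoom(current_focal_mm, zoom_factor):
    """Focal length that magnifies a distant object by zoom_factor
    (approximation valid when the object is far beyond the focal length)."""
    return current_focal_mm * zoom_factor

def horizontal_fov_deg(focal_mm, sensor_width_mm):
    """Horizontal field of view of a simple pinhole-style camera model."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_mm)))
```

Doubling the focal length doubles the apparent size of a distant object and narrows the field of view, which is the optical counterpart of the binocular-like behavior described in this document.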

In some implementations, the signal for adjusting the zoom factor includes a signal that controls the zooming speed, where the speed is set based on the type of images captured of the real-world environment or based on the user.
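Speed control of this kind can be sketched as a rate limiter that moves the current zoom factor toward the target by a bounded amount on each frame. The function name `step_zoom` and its parameters are hypothetical; the rate argument is where a content-dependent or user-dependent speed would be supplied.

```python
def step_zoom(current, target, max_rate_per_s, dt_s):
    """Advance the zoom factor toward target without exceeding the rate.

    max_rate_per_s is the largest allowed change in zoom factor per second;
    dt_s is the time elapsed since the previous update.
    """
    max_step = max_rate_per_s * dt_s
    delta = target - current
    if abs(delta) <= max_step:
        # Close enough to finish in this step.
        return target
    return current + max_step if delta > 0 else current - max_step
```

A lower rate could be chosen for fast-moving content or for users who are prone to discomfort, and a higher rate for static scenes; those choices are illustrative and not prescribed by the source.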

In some implementations, the signal for adjusting the zoom factor also includes a signal for adjusting the brightness of the HMD’s screen.

In some implementations, images captured by the HMD’s forward-facing cameras are used to construct a three-dimensional model of the real-world environment. The model is constructed by tracking multiple points across images captured by more than one camera and correlating those points to a three-dimensional space.
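Relating a tracked point to three-dimensional space can be illustrated with the textbook triangulation for a rectified stereo pair, in which depth equals focal length times baseline divided by disparity. The sketch below assumes two horizontally offset, rectified cameras with identical intrinsics; the function name and parameters are hypothetical, and the patent does not state which reconstruction method is used.

```python
def triangulate(u_left, v_left, u_right, focal_px, baseline_m, cx, cy):
    """Recover (x, y, z) in meters for a point seen by both cameras.

    u/v are pixel coordinates, focal_px is the focal length in pixels,
    baseline_m is the distance between the cameras, and (cx, cy) is the
    principal point. Coordinates are relative to the left camera.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("point must have positive disparity")
    z = focal_px * baseline_m / disparity
    x = (u_left - cx) * z / focal_px
    y = (v_left - cy) * z / focal_px
    return x, y, z
```

Repeating this for many tracked points yields a point cloud from which a model of the scene could be built.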

In some implementations, the object is identified by highlighting, in the images, the object that matches the gaze direction and then receiving confirmation of the object from the user wearing the HMD.

In some implementations, a method for rendering an object on the screen of an HMD is disclosed. The method involves receiving images of a virtual reality (VR) scene for rendering on the HMD screen. The HMD detects the selection of an object from the rendered images of the VR scene. The selected object is rendered at a first virtual distance, which makes it appear out of focus to the user wearing the HMD. An area in the VR scene adjacent to the object is identified. This area defines where the user can move freely in relation to the object while viewing it. A signal is generated to virtually teleport the user to the area near the selected object. The virtual teleportation causes the object to appear on the HMD screen at a second virtual distance, at which the object is discernible to the user.
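The teleportation step can be pictured as moving the virtual viewpoint along the line between the user and the object until it sits at the second virtual distance. The sketch below shows only that geometric step; the function name is hypothetical, and it does not model the identification of the free-movement area described above.

```python
import math

def teleport_position(user_pos, object_pos, second_distance):
    """Return a viewpoint second_distance away from the object, on the
    straight line from the object back toward the user's current position."""
    offset = [u - o for u, o in zip(user_pos, object_pos)]
    length = math.sqrt(sum(c * c for c in offset))
    if length == 0:
        raise ValueError("user and object positions coincide")
    scale = second_distance / length
    return tuple(o + c * scale for o, c in zip(object_pos, offset))
```

A fuller implementation would presumably also check that the resulting position lies inside the identified area before moving the viewpoint.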