AI patent claims can be powerful, but they can also fail fast if they sound too broad, too vague, or too much like a basic idea dressed up with tech words. That is where many founders get stuck. They built something real. It works. It solves a hard problem. But when the patent claim does not clearly show how the system works in a real, technical way, it can get hit with an “abstract idea” rejection. This article will show how to avoid that mistake from the start. We will walk through how to frame AI inventions so they read like real engineering, not empty theory, and how to write claims that are tied to actual system steps, model behavior, data flow, and measurable results.

Why AI Patent Claims Get Rejected as “Abstract Ideas”

AI patents often get rejected as “abstract ideas” because the invention is described at a very high level while the real technical work stays hidden in the background. For a business, this is a costly mistake.

You may have built something hard, useful, and valuable, but if the patent reads like a general concept instead of a real machine process, the examiner may treat it like a broad idea rather than a protectable invention.

That gap between what your team built and what the patent actually says is where trouble starts.

A strong AI patent section needs to do more than explain that your product uses a model, scores inputs, makes predictions, or improves decisions.

It needs to show where the technical work lives, why the system is not just mental logic moved onto a computer, and how the claimed steps create a real-world technical result. The goal is not to sound smarter. The goal is to sound real.

The Problem Usually Starts With Overly Broad Framing

Many AI inventions are first described in business language because that is how founders pitch products to investors, customers, and partners. That same language is often what causes problems in patent claims.

When a patent says the system “analyzes data,” “detects patterns,” “recommends an action,” or “optimizes a result,” that may sound impressive, but it does not always sound like a technical invention.

It can sound like a basic thought process done on a normal computer. Examiners often look for this exact issue. If the claim feels like it could be done by a person with enough time, rules, and judgment, it becomes easier to call it abstract.

Business Value Is Not the Same as Patent Value

A feature can be very important to your company and still be poorly framed for patent protection.

A founder may know the system saves money, improves conversion, lowers risk, or speeds up workflow. Those are good business outcomes. But they do not automatically show a technical invention.

For patent purposes, the better question is this: what exactly is the machine doing that is new in the way it handles data, model execution, system control, timing, memory use, feature generation, output structure, or feedback flow? That is the level where abstract idea risk starts to drop.

AI Claims Often Sound Like Results Without Mechanics

A common reason for rejection is that the claim focuses on what the system achieves, but not how it gets there. This is especially common in AI because teams are excited about outcomes and often skip over the plumbing.

If your claim says the AI “predicts user behavior” or “classifies a document,” the examiner may ask what makes that more than a standard computer step. The claim needs to show technical mechanics.

That may include how training inputs are prepared, how features are selected or transformed, how the model is triggered, how outputs are calibrated, how confidence thresholds control downstream operations, or how the architecture solves a system-level issue like speed, noise, or resource limits.

Examiners Are Looking for Technical Anchors

The more your claim is tied to real system behavior, the harder it is to dismiss as abstract. That is why businesses should think in terms of anchors.

A technical anchor is a concrete part of the invention that proves the claim is not just a broad idea. It might be a specific way of generating embeddings from streaming data.

It might be a method for routing inputs across specialized models based on device conditions. It might be a feedback loop that updates thresholds using live production error signals.

It might be a pipeline that reduces false positives by combining structured and unstructured data at a specific stage.

These anchors matter because they show that your invention lives inside system design, not just business logic.
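To make one of these anchors concrete, here is a minimal Python sketch of a feedback loop that moves a decision threshold in response to production error signals. It is an illustration only. The class name `AdaptiveThreshold`, the step size, and the floor and ceiling values are all assumptions made for this example, not details from any real system.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveThreshold:
    """Hypothetical decision threshold nudged by live error feedback."""

    value: float = 0.5     # assumed starting threshold
    step: float = 0.02     # assumed adjustment per error signal
    floor: float = 0.05    # assumed lower bound
    ceiling: float = 0.95  # assumed upper bound

    def record_error(self, kind: str) -> float:
        """Adjust the threshold after a confirmed production error."""
        if kind == "false_positive":
            # The system fired too easily, so raise the bar.
            self.value = min(self.ceiling, self.value + self.step)
        elif kind == "false_negative":
            # The system missed a real case, so lower the bar.
            self.value = max(self.floor, self.value - self.step)
        else:
            raise ValueError(f"unknown error kind: {kind}")
        return self.value

    def fires(self, score: float) -> bool:
        """Return True when a model score meets the current threshold."""
        return score >= self.value
```

A description written at this level names the signal that triggers the update, the direction of the change, and the bounds on it. Those are the kinds of details that tie a claim to system behavior.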

The Risk Gets Worse When the Claim Reads Like Automation of Human Judgment

Many AI products are built to help people decide something faster. That can be useful, but it can also create patent risk if the claim looks like it only automates thinking.

If the invention is framed as “receive information, evaluate it, decide something, and present a result,” the examiner may see it as a human decision process on a computer.

That is one of the fastest paths to an abstract idea rejection.

Businesses need to be careful here, especially in areas like finance, legal tools, hiring, support, health workflow, and sales scoring, where the product naturally overlaps with tasks humans already do.

The safer path is to show that the system does not just imitate judgment. It changes how a machine operates, how data is processed, how resources are allocated, how timing is controlled, or how technical performance improves.

The Word “AI” Does Not Fix a Weak Claim

A surprising number of filings lean too hard on the fact that the invention uses artificial intelligence.

That label alone does not make the claim stronger. In some cases, it can even make the writing weaker because the claim starts to rely on buzzwords instead of clear technical facts.

Saying that a neural network, language model, or classifier is involved is not enough. The examiner will still ask what the invention actually is. Is the new part in training? In inference? In feature handling? In model orchestration? In hardware-aware deployment? In output correction? In monitoring and adaptation? Businesses should train themselves to answer this with precision before drafting begins.

A Lot of Rejections Come From Claims That Are Too Generic Across Industries

Some applicants try to write claims broad enough to cover every use case, every customer segment, and every possible implementation. That feels strategic at first, but it often backfires.

A claim that could apply equally to lending, ad targeting, logistics, fraud review, insurance, and content ranking may look flexible from a business view. From a patent view, it may look untethered and abstract.

Broad protection is important, but breadth without technical detail is fragile. A stronger business move is to claim the real technical engine clearly, then expand coverage through layered claim drafting built around that core.

What Patent Examiners Need to See in an AI Invention

Patent examiners do not need a startup pitch. They do not need a product demo in words. They need to see a real invention. That means your AI patent cannot stop at saying the system uses machine learning to make a prediction, sort data, or improve a decision.

The examiner is trying to figure out whether your invention is a real technical solution with clear moving parts, or just a broad idea placed on a computer.

If you want a stronger patent, you need to show the structure, the flow, the problem, and the technical fix in a way that feels grounded and specific.

Examiners Need to See a Real Technical Problem

The first thing an examiner often looks for is whether the invention solves a real technical issue instead of a general business problem. This matters more than many founders think.

If your patent says the system helps companies make better choices, save money, reduce churn, or improve user experience, that may sound useful, but it does not show technical depth on its own.

A stronger AI invention explains the system problem underneath the business result. Maybe your model had trouble with noisy inputs. Maybe your platform had to process data under strict speed limits.

Maybe inference had to happen on a small device with limited memory. Maybe training data came in mixed formats and needed a special way to be normalized before the model could use it.

These are the kinds of details that help the examiner see the invention as a technical one.

The Problem Should Be Tied to System Behavior

It is not enough to say there was a challenge. The challenge should affect how the machine operates.

A good patent section shows that the issue lives inside data handling, model execution, processing limits, system reliability, output quality, or another concrete part of the technical stack.

This is where many businesses can sharpen their draft. Instead of saying the system improves recommendations, explain that the invention reduces repeated scoring operations by pre-processing event streams in a new way before ranking occurs.

That changes how the system functions, and that is what examiners want to see.
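As a rough illustration of that kind of description, the Python sketch below collapses repeated events for the same item before ranking, so the scoring function runs once per item instead of once per event. The function names `collapse_events` and `rank_items`, and the idea of summing event weights, are assumptions chosen for the example. A real system would use its own merge rule.

```python
from typing import Callable, Dict, Iterable, List, Tuple


def collapse_events(events: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Merge repeated events for the same item into one accumulated signal."""
    merged: Dict[str, float] = {}
    for item_id, weight in events:
        merged[item_id] = merged.get(item_id, 0.0) + weight
    return merged


def rank_items(
    events: Iterable[Tuple[str, float]],
    score_fn: Callable[[float], float],
) -> List[Tuple[str, float]]:
    """Score each distinct item once, then sort from highest to lowest score."""
    merged = collapse_events(events)
    scored = [(item_id, score_fn(signal)) for item_id, signal in merged.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

With five events that touch three items, the scoring function is called three times rather than five. That measurable change in how the machine works is what the patent text should surface.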

Examiners Need to See the Technical Solution, Not Just the Outcome

A common mistake in AI patent writing is focusing too much on what the invention achieves and not enough on how it gets there. The examiner is not there to guess the hidden engineering.

If the patent only talks about better results, faster insights, or smarter automation, it leaves too much unsaid.

Your application needs to show the actual technical path. What enters the system? What gets transformed? What model or process is applied? What happens to the output? What downstream component reacts? The more clearly this is explained, the easier it becomes to show that the invention is more than a broad concept.

They Want to See the “How” in Plain Terms

This does not mean you need to drown the patent in hard-to-read theory. In fact, clear and simple explanation is often much stronger. An examiner wants to understand what your system does at each important stage.

If your invention uses multiple models, explain how the system decides which one to use. If it creates features from incoming signals, explain how those features are formed and why that helps.

If it updates thresholds over time, explain what signals trigger that update. Those details matter because they turn abstract claims into concrete invention language.

Examiners Need to See a Clear Connection Between Input and Output

One strong sign of a real AI invention is a clear chain between the data coming in and the technical result coming out. When that chain is vague, the application starts to look thin.

When that chain is clear, the invention feels more real.

An examiner should be able to follow the movement of the system. The input is received in a certain format. It is cleaned, grouped, compressed, or transformed in a specific way.

A model processes it under certain rules. The output is then used to trigger or control another operation. This kind of story gives shape to the invention.

Data Handling Is Often Where the Real Invention Lives

Many founders assume the most important part is the model itself. Sometimes that is true, but often the real novelty sits elsewhere.

It may be in how the data is prepared, how edge cases are filtered, how time-based signals are aligned, or how outputs are merged with system constraints before action is taken.

This is a big opportunity for businesses. If you can show that the technical value comes from how your system handles difficult inputs or complex processing conditions, you give the examiner something concrete to hold onto. That makes the application stronger.

Examiners Need to See That the AI Is Part of a System, Not Floating by Itself

A patent gets stronger when the AI is presented as one part of a larger technical system. Examiners often become skeptical when the model feels detached from any real machine process.

A claim that simply says a model analyzes data and produces a result can look too general. A claim that shows the model driving a technical system looks more grounded.

Your draft should show where the AI sits inside the product. Does it control a workflow engine? Does it guide data routing? Does it affect resource allocation? Does it trigger fallback logic? Does it change how sensors are activated or how outputs are generated? These details show that the invention is not just about thinking. It is about operating.

System Consequences Matter

A useful way to think about this is simple. After the model produces an output, what changes in the machine or platform because of that output? That answer is often one of the most important parts of the patent.

If the output causes a downstream service to run differently, that matters. If it reduces server load by choosing a lighter path for certain inputs, that matters.

If it changes timing, memory usage, scheduling, or device behavior, that matters even more. Examiners look for these technical consequences because they help prove the invention is rooted in actual computing.
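The sketch below shows one way such a consequence might look in code: a cheap model handles an input when it is confident enough, and the expensive model runs only otherwise. This is a hedged Python illustration. The `route` function, the `Model` type, and the 0.8 confidence cutoff are all hypothetical choices for this example.

```python
from typing import Callable, List, Tuple

# Assumed model interface: takes features, returns (label, confidence).
Model = Callable[[List[float]], Tuple[str, float]]


def route(
    features: List[float],
    light_model: Model,
    heavy_model: Model,
    min_confidence: float = 0.8,  # assumed cutoff for trusting the light path
) -> Tuple[str, str]:
    """Return (label, path_used), invoking the heavy model only when needed."""
    label, confidence = light_model(features)
    if confidence >= min_confidence:
        # The light path was confident enough, so the heavy model never runs.
        return label, "light"
    label, _ = heavy_model(features)
    return label, "heavy"
```

Here the model output does more than produce a label. It decides which compute path executes, which is a system consequence an examiner can follow.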

Examiners Need to See More Than Generic AI Language

Words like model, classifier, neural network, prediction engine, and intelligent analysis may sound useful, but they do not carry much weight on their own. Examiners see those terms all the time.

What matters is what makes your implementation different and real.

That is why your patent should not lean on labels. It should lean on specifics. What type of data is being processed? How is it transformed? When is the model invoked? What conditions shape the execution path? How are outputs evaluated? What system reacts to those outputs? These are the kinds of details that separate a serious AI filing from a weak one.

Naming a Model Is Not the Same as Explaining an Invention

A company may say its invention uses a large language model, a vision network, or a reinforcement system. That may be true, but the examiner still needs to know what the invention actually is.

The new part may not be the model family. It may be how the model is applied in a constrained environment, how the prompts are structured for a machine task, how confidence is measured before action, or how outputs are corrected with a second layer before system use.

The takeaway is simple. The brand of AI does not carry the patent. The technical implementation does.

Examiners Need Support in the Specification, Not Just in the Claims

A strong claim needs a strong foundation. If the specification is vague, the claims may not carry enough force. Examiners read the whole application to understand what the inventors actually built.

If the written description is full of broad statements and light on technical detail, it becomes harder to argue that the claims are tied to a concrete invention.

That means the body of the application should explain the architecture in practical language. It should describe the relevant inputs, transformation stages, model behavior, control logic, and system outcomes.

It should also explain the problem the invention solves and why older approaches struggled. This supporting detail gives the claim real depth.

The Description Should Match the Real Product

One of the best ways to improve patent quality is to make sure the draft reflects how the system truly works.

Businesses sometimes let patent language drift into a generic version of the product because they want the filing to sound broad. But when the language moves too far from the actual build, the invention can lose clarity.

A better approach is to start with the real architecture and then shape broader protection around it.

That keeps the filing honest, useful, and easier to defend. It also reduces the risk of empty wording that invites an abstract idea rejection.

Examiners Need to See Why the Claimed Steps Are Not Mental Steps

This point is critical for AI inventions. If the claimed process looks too much like what a person could do by reading information, thinking about it, and making a judgment, the examiner may see it as abstract.

The fact that a computer performs the steps does not always solve that issue.

Your draft should make clear that the invention is not just a digital version of a human thought process.

It should show machine-scale processing, structured transformations, system-controlled execution, and technical actions that do not make sense as ordinary mental work. The more the invention looks like a real computing process, the better.

Avoid Framing the Invention Like a Human Task

This is especially important in AI products used in finance, legal work, customer support, hiring, or operations. These areas often involve decisions that people also make. That creates risk if the patent is written too loosely.

A safer strategy is to center the claim on the technical mechanisms that let the platform operate in a new way.

That may include model gating, event sequencing, confidence-based routing, memory-aware inference, signal compression, or post-processing tied to system actions. These details move the invention away from human judgment and into technical territory.

Examiners Need Evidence of Precision

An AI patent should feel precise. That does not mean it needs narrow language everywhere. It means the invention should not feel hand-wavy. Examiners notice when key parts are missing.

If the patent leaves open too many basic questions, the invention can seem generic.

Precision comes from naming the important components and showing how they work together. It comes from describing the conditions that matter.

It comes from explaining why the order of operations matters or why a certain transformation happens before the model runs. These details signal that the inventors understood the engineering and captured it properly.

Precision Creates Business Value Too

This is not just about pleasing the examiner. Precision also helps your company. A clear patent is easier to explain to investors, easier to use in diligence, and more useful when thinking about competitors.

It gives shape to your moat. It turns invention into an asset that others can understand and respect.

That is why the drafting process should not be rushed or treated like a formality. A good AI patent is not built from surface claims. It is built from real system detail presented in a way that maps to legal protection.

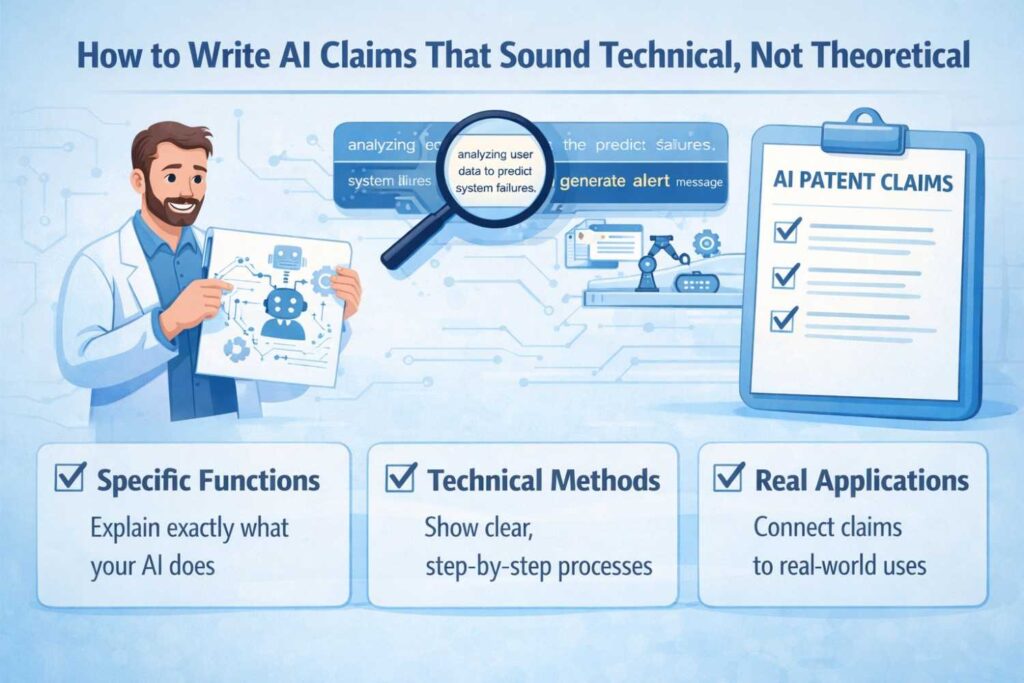

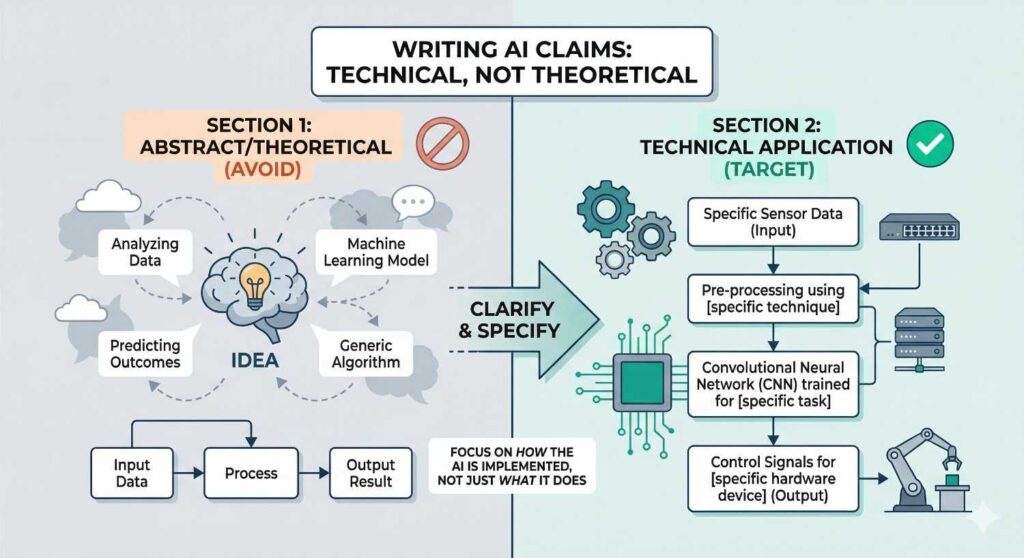

How to Write AI Claims That Sound Technical, Not Theoretical

Writing AI patent claims is where many strong inventions start to lose power. The team built something real. The system works. The product solves a hard problem.

But when the claim is written in soft, general, high-level language, the invention can start to sound like a theory instead of a machine-based solution. That is the gap you need to close.

A strong AI claim should make the reader feel that the invention lives inside an actual system. It should sound like engineering, not a pitch deck.

It should point to what the machine receives, what it does, how the parts interact, and what technical result follows. When a claim sounds technical, it becomes easier to defend, harder to dismiss, and more valuable for the business.

Start With the Real System, Not the Big Idea

The fastest way to make a claim sound theoretical is to begin with the broad concept behind the product. That usually leads to language that feels vague, inflated, and hard to pin down.

Claims written that way often talk about improving decisions, analyzing behavior, generating recommendations, or optimizing outcomes.

Those phrases may describe business value, but they rarely describe the technical invention clearly enough.

The stronger move is to begin with the real system your team actually built. That means looking at the architecture, the data path, the model flow, the control logic, and the downstream action.

When you start there, the claim naturally becomes more concrete because it grows out of technical facts instead of broad goals.

Use Words That Show Machine Activity

Claims sound technical when the language points to operations a system actually performs. That is very different from language that simply describes a result.

A claim that says a system “improves classification” feels far less grounded than one that says the system receives event data, generates a compressed feature representation, applies a trained model to that representation, compares an output score against a dynamic threshold, and triggers a downstream system action based on the comparison.

The difference is not style alone. It is substance. Technical claims show movement inside the system.

They make clear that something structured is happening. This is one of the easiest ways to shift your claim away from the theoretical and toward the practical.

Strong Verbs Make a Big Difference

Many weak claims rely on soft verbs like determine, evaluate, identify, or analyze without explaining how those actions take place. Those words are not always bad, but they become weak when they stand alone.

They need support from real system behavior.

A stronger claim uses verbs tied to actual operations. It may say the system transforms, partitions, routes, encodes, calibrates, aggregates, filters, aligns, updates, or triggers.

These words make the invention sound more like computing and less like abstract thinking. They also help connect the claim to engineering work the team can explain and support.

Tie Every Major Step to a Specific Technical Role

A claim becomes stronger when each step has a clear job inside the system. This is where many AI claims become too airy.

They mention receiving data, applying a model, and outputting a result, but the steps feel disconnected. The examiner reads them as generic motion instead of invention.

Each step in the claim should do meaningful technical work. A preprocessing step should not be there just to sound complete. It should solve something. A threshold step should not be decorative.

It should control a meaningful system response. A routing step should show why different inputs are handled differently. When each step has a role, the claim starts to feel purposeful and engineered.

Show the Shape of the Data Flow

One of the best ways to make an AI claim sound technical is to make the data flow visible. Data should not appear and disappear like magic. The claim should help the reader follow what comes in, what changes, and where the result goes.

This does not mean adding endless detail. It means giving enough structure to show that the invention has a real process.

If the invention depends on transforming raw input into a specific intermediate form before model execution, that matters.

If the output is not simply displayed but instead drives another part of the system, that matters too. Technical claims often feel strong because they reveal this flow without getting lost in noise.

Intermediate Data Can Be Where the Invention Lives

Businesses often focus too much on the input and final output. But in many AI systems, the real invention sits in the middle.

It may be in how features are generated, how signals are aligned, how noisy records are filtered, or how multiple model outputs are reconciled before action.

When that middle layer is important, the claim should reflect it. This can make the difference between a claim that reads like a generic AI pipeline and one that sounds like a real technical method.

The more your claim points to the stage where the hard engineering happens, the better it tends to hold up.

Avoid Claim Language That Sounds Like Human Thought

AI claims often get weaker when they sound like a person could do the same task by reading information and making a judgment. That kind of framing makes the invention feel theoretical, even if the real product is not.

This problem shows up when the claim is written around ideas like reviewing inputs, deciding a category, scoring a condition, or recommending a next step without grounding those actions in machine operations.

The safer path is to write the claim around the technical mechanics that no normal human process would perform in the same way. Focus on transformations, execution rules, model invocation conditions, system triggers, and operational consequences.

A Simple Way to Turn Real AI Systems Into Strong Patent Claims

Many AI patent claims fail for one simple reason. They do not capture the real invention. They circle around the product, the outcome, or the promise, but they never lock onto the technical core that makes the system work. That is why founders often feel frustrated.

They know their team solved something hard. They know the product is not just a vague idea. But when the patent is drafted, the claim can still come out sounding broad, soft, and easy to challenge.

The good news is that there is a simpler way to do this. You do not need to start with legal language. You do not need to force your invention into abstract patent phrases.

The better path is to begin with the actual system your team built and then turn that system into claim language piece by piece. When you do that, the patent starts to reflect the real engineering instead of a watered-down version of it.

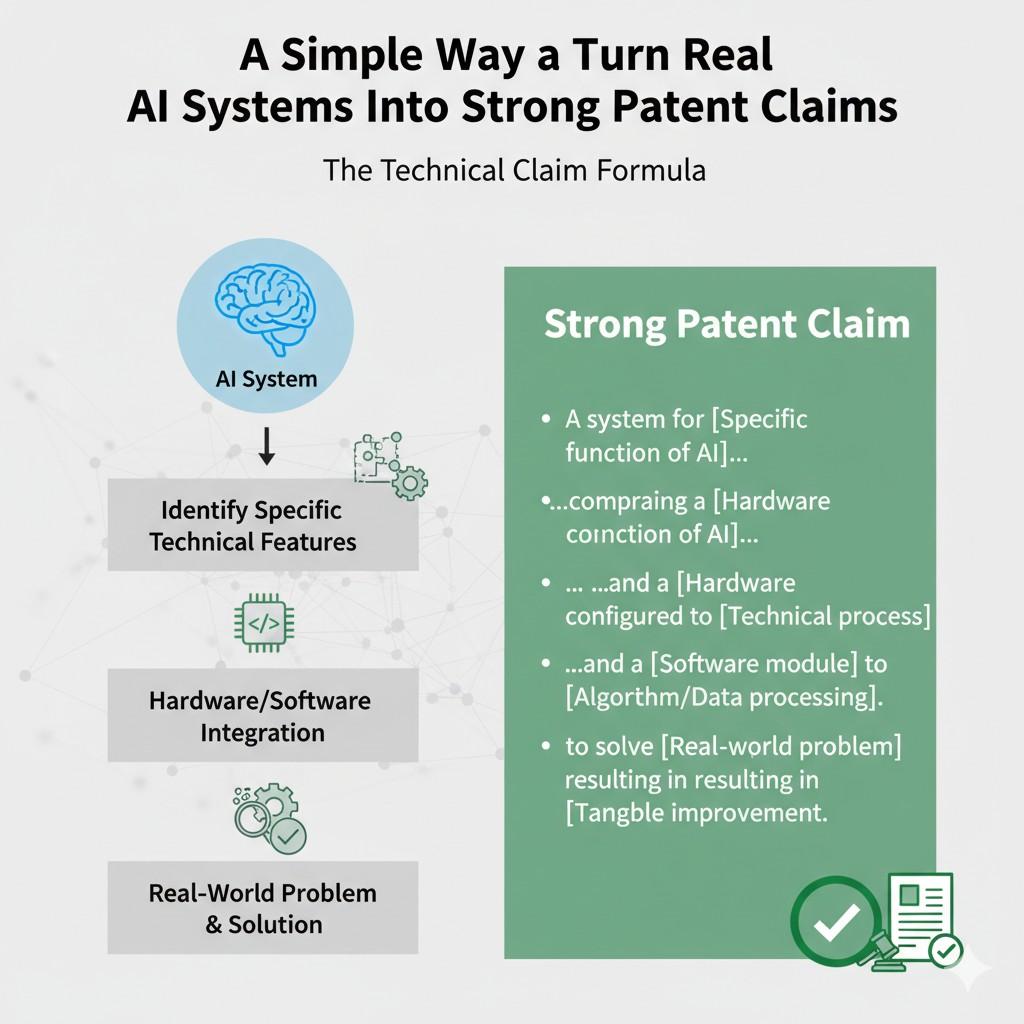

Start by Finding the Part That Actually Creates the Edge

Every useful AI product has many layers. There is the user-facing feature. There is the business promise. There is the model. There is the data pipeline. There is the control flow.

There are fallback rules, thresholds, triggers, and downstream effects. But not all of these pieces carry the same patent weight.

If you want strong claims, you need to isolate the part that gives your system its real edge. That is the part a competitor would struggle to copy without doing meaningful engineering work.

It may be how the system prepares messy data before inference. It may be how the model is invoked only under certain conditions. It may be how output confidence drives later machine actions.

It may be how several signals are combined in a way that keeps performance stable under hard production limits.

That hidden technical layer is often where the strongest patent claim begins.

Most Founders Start Too High Up

Founders naturally talk about what the product does. They say the system predicts failures, summarizes documents, routes support tickets, detects fraud, or improves ranking.

That is normal. It is how products are explained in the real world. But that is not the best place to start a claim.

A stronger approach is to step below the feature and ask what the machine is doing under the hood. What raw input enters the system? What gets transformed? What is generated before the model runs? What happens after the output is produced? What system changes because of that output? That is where the invention starts to become claimable in a serious way.

The Surface Feature Is Usually Not Enough

This is an important shift for businesses. The product feature may matter for sales, but the patent usually needs to protect the mechanism underneath.

If the claim only follows the feature story, it may end up sounding broad and theoretical. If it follows the technical mechanism, it becomes much harder to dismiss.

That is why strong patents often feel more specific than startup messaging. They are not trying to win a demo day. They are trying to protect the engine.

Turn the System Into a Clear Technical Story

A simple way to build strong AI claims is to tell the invention as a machine story. That story should move in a clean line. The system receives something. It transforms or structures it.

It applies logic or model behavior. It generates an output. That output changes another part of the system.

This sounds basic, but it is powerful. It forces the invention into a form that feels real. It shows technical flow. It gives the claim shape. Most importantly, it helps avoid vague language that reads like a goal instead of a method.

The best patent claims often come from teams that can explain their invention in this plain, structured way before any formal drafting begins.

The Simpler the Explanation, the Stronger the Drafting Foundation

Many people think patent-worthy inventions need to be described in dense, complex language. In practice, clear and simple explanation is usually a better starting point.

If an engineer can explain the system step by step in a direct way, it becomes much easier to draft a claim that sounds technical.

For example, compare two descriptions. One says the system converts unstructured event logs into fixed-length vectors, scores those vectors with a trained model, compares each score to an adaptive threshold, and triggers a response queue when the score exceeds the threshold. The other says the platform uses AI to identify important events.

The first version gives you real material to claim. The second one does not.
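To show how directly a step-by-step description maps onto a real system, here is a small Python sketch of that flow. Everything specific in it is an assumption for illustration: the vector length of 8, the hashing approach to vectorizing log text, the weighted sum standing in for a trained model, the threshold rule (mean of recent scores plus a margin), and the names `vectorize`, `score`, and `Pipeline`.

```python
import hashlib
from collections import deque
from typing import Deque, List, Tuple

VECTOR_SIZE = 8  # assumed fixed vector length


def vectorize(log_line: str, size: int = VECTOR_SIZE) -> List[float]:
    """Hash each token of a log line into one of `size` buckets."""
    vector = [0.0] * size
    for token in log_line.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        vector[digest[0] % size] += 1.0
    return vector


def score(vector: List[float], weights: List[float]) -> float:
    """Stand-in for a trained model: a plain weighted sum."""
    return sum(v * w for v, w in zip(vector, weights))


class Pipeline:
    """Hypothetical flow: vectorize, score, compare, enqueue."""

    def __init__(self, weights: List[float], margin: float = 1.0, window: int = 50):
        self.weights = weights
        self.margin = margin
        self.recent: Deque[float] = deque(maxlen=window)  # recent scores
        self.response_queue: Deque[Tuple[str, float]] = deque()

    def threshold(self) -> float:
        """Adaptive threshold: mean of recent scores plus a fixed margin."""
        if not self.recent:
            return self.margin
        return sum(self.recent) / len(self.recent) + self.margin

    def process(self, log_line: str) -> bool:
        """Score one log line and enqueue it if it exceeds the threshold."""
        value = score(vectorize(log_line), self.weights)
        limit = self.threshold()  # computed before this score joins the window
        fired = value > limit
        if fired:
            self.response_queue.append((log_line, value))
        self.recent.append(value)
        return fired
```

Each method corresponds to one step in the first description, which is why that description is easier to turn into claim language. The second description offers nothing comparable to point to.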

A Strong Claim Usually Starts Before the Model

Many businesses assume the patentable part of an AI system must be the model itself. That is not always true. In many cases, the stronger invention sits before the model even runs.

It may be in how signals are cleaned, grouped, compressed, or aligned. It may be in how data from different sources is normalized into a form the system can use. It may be in how the invention reduces noise, removes duplicates, or builds richer feature sets from weak inputs.

These steps often carry real novelty because they affect whether the model works in production at all.

Data Preparation Can Be the Secret Asset

This matters a lot for startups. A team may think the magic is in the final prediction, when the real advantage is the data preparation layer that makes the prediction reliable.

If that is the part competitors would struggle to replicate, that is often the part the patent should emphasize.

Strong claims do not always sit on the most obvious layer. They sit on the layer where technical leverage lives.

A Strong Claim Usually Ends After the Model Too

Just as the invention may begin before the model runs, it often continues after the output is created. This is another place where many patent drafts lose strength.

They stop at the prediction. They say the system generates a score, a label, a recommendation, or a classification. Then the claim ends.

That can make the invention feel incomplete. A stronger claim often shows what happens next. Does the output change routing? Does it trigger a workflow? Does it select a processing path? Does it update a threshold? Does it cause a second model to activate? Does it alter system behavior in a measurable way? These downstream effects help prove that the invention lives in a real technical environment.

Wrapping It Up

AI patent claims do not fail because AI is too new or too hard to explain. They usually fail because the invention is described in a way that sounds broad, vague, or detached from the real system. That is the key lesson. If you want stronger protection, you cannot rely on high-level product language or generic AI terms. You need to show the actual technical work your system performs, the real problem it solves, and the concrete way it changes machine behavior.
