Most founders think the hard part is building the experiment engine. It is not. The hard part is protecting it in a way that still matters two years from now, after the dashboard changes, the UI gets rebuilt, and the team ships five new versions. That is where claim drafting matters.

If you run an A/B testing or experiment platform, your real invention usually is not “showing version A to one user and version B to another.” That idea is too broad, too old, and too easy to copy in a slightly different form.

The real value often lives deeper in the system. It may be in how traffic gets assigned, how results stay valid when users switch devices, how rollout risk is controlled, how metrics are cleaned before analysis, or how the platform connects decision logic with real product actions. That is where smart patent claims can create real protection.

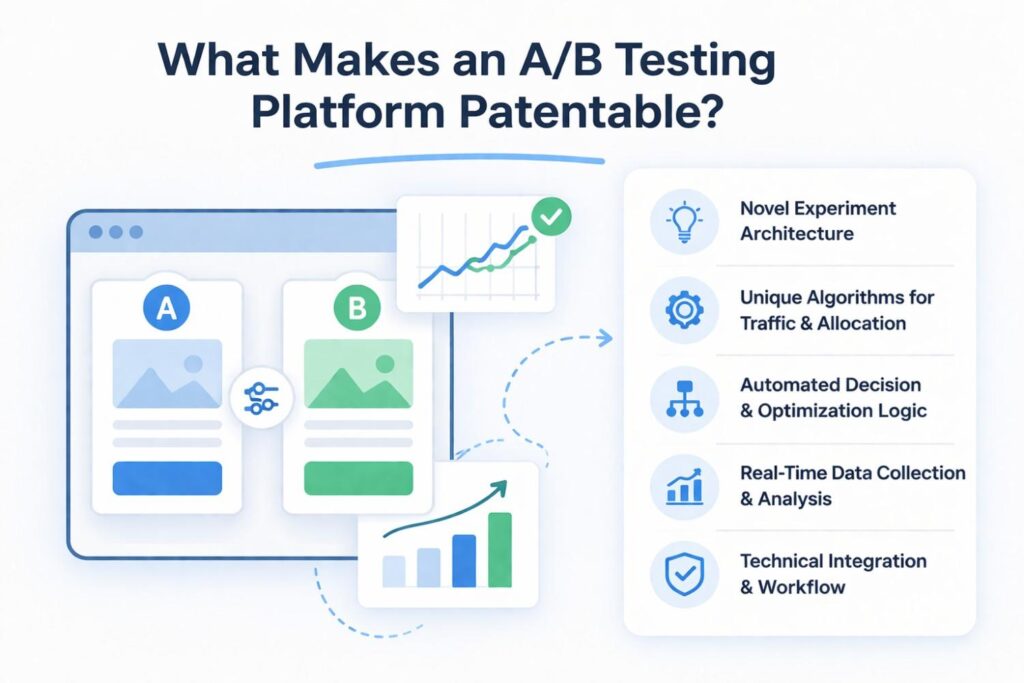

What Makes an A/B Testing Platform Patentable

Not every experiment platform feature is worth patenting. That is the first thing a business should understand before spending time, money, or energy on a filing.

A lot of teams assume that if they built something useful, it must be patentable.

That is not how this works. A feature can be useful, loved by customers, and hard to build, yet still be too general to protect in a meaningful way.

What matters is not whether your platform runs tests. Many tools do that. What matters is whether your system solves a real technical problem in a concrete way.

A strong patent story usually starts when the platform does more than split users into groups and compare outcomes.

It starts when the system improves how experiments are set up, delivered, measured, corrected, scaled, or acted on inside a live product environment.

For businesses, this matters because the goal is not just to get a patent. The goal is to protect a business advantage.

That means you need to identify the part of your platform that gives you leverage in the market, makes your product harder to copy, or helps customers trust your results more than they trust other tools.

Patentable Value Starts Where Generic Testing Ends

The most important shift for a company is this: stop thinking about the patent as protection for “A/B testing” and start thinking about it as protection for the special engine inside your testing platform.

The broad idea of showing one version to one user and another version to another user has been around for too long. That idea alone is not where your edge lives.

Your edge may live in how your platform handles unstable traffic, how it avoids sample pollution, how it assigns users when identity is incomplete, how it reacts when a metric becomes unreliable, or how it controls rollout based on confidence and business risk at the same time.

These are not cosmetic details. These are often the places where the real invention sits.

A smart business should ask one simple question: if a competitor copied our product tomorrow, what part would they need to copy for customers to get the same result?

The answer often points toward patentable subject matter far better than a product roadmap or a feature list.

A Useful Product Feature Is Not Always a Patentable Invention

This is where many teams go wrong. They confuse customer value with patent value. Customer value is important, but patent value comes from how the result is achieved.

If the claim only says that the platform helps teams run experiments faster, that is too soft.

If the claim explains a specific system for reducing setup mistakes through a rules-driven dependency model that automatically validates variant conflicts before deployment, that starts to sound more like a protectable invention.

The business lesson here is simple. You should not ask, “What feature do customers love?” You should ask, “What technical method makes that feature possible?” That method is often what deserves protection.

The Best Patent Targets Usually Solve a Messy Real-World Problem

Patentable ideas often come from pain. Not fake pain from a pitch deck, but real operational pain that engineering teams had to work through.

A platform becomes much more interesting from a patent angle when it solves problems that happen in live systems under real conditions.

That may include issues like noisy data from bots, contamination when users move between devices, conflicting experiments running at the same time, poor metric quality caused by delayed events, or fairness issues when traffic allocation changes during a live run.

These are real system-level challenges. When your platform solves them through a defined technical process, you may have something valuable to protect.

For a business, this is good news. It means patentable material is often hiding inside the work your team already did to make the product stable, trusted, and scalable.

You are not inventing a patent story from thin air. You are uncovering the hard part of what you already built.

Patentability Often Lives in the System Design, Not the User Interface

Founders often point first to the dashboard, the experiment builder, or the reporting screen. Those parts matter for product adoption, but they are not always where the strongest claims come from.

Screens change fast. Menus move. Labels get rewritten. A patent tied too closely to a visible interface can become narrow very quickly.

What tends to last longer is the system underneath.

That includes how the platform receives event data, how it stores experiment states, how it resolves assignment decisions, how it computes significance, how it filters bad samples, and how it triggers actions based on measured outcomes.

Those parts are harder for competitors to redesign around because they sit closer to the core mechanics of the platform.

A strong business strategy is to treat the interface as evidence, not as the invention itself. The real focus should be on the technical workflow behind the interface.

A Patentable Platform Usually Does Something More Than Report Results

A basic reporting engine is rarely enough. The stronger inventions often involve decision-making or system control. For example, an experiment platform may not just display test outcomes.

It may automatically adjust traffic allocation based on incoming performance signals, stop unsafe rollouts before damage spreads, or switch from one measurement model to another when data quality drops below a threshold.

That kind of behavior matters because it moves the invention from passive observation to active platform control. The system is not just watching the product. It is managing the product based on experiment intelligence.

For businesses, this creates a strong framing tool. If your platform influences what happens next in the product stack, not just what users see in a chart, that is worth examining closely for patent potential.

Technical Specificity Creates Stronger Patent Positioning

It is tempting to describe the platform in broad business language because that sounds bigger. In patent drafting, that instinct can backfire. Broad words without technical detail often sound vague.

A vague idea is easier to reject and easier for competitors to avoid.

A better path is to describe the exact mechanics that make the platform work. How is a user assigned when identity data is partial? How does the system detect overlap between experiments?

How does it decide whether data should be excluded? How does it preserve validity when events arrive late? How does it manage rollout decisions across services with different latency and risk profiles?

This level of detail does not weaken your business story. It strengthens it. It shows that the company did not just have an idea. It built a working solution to a hard technical problem.

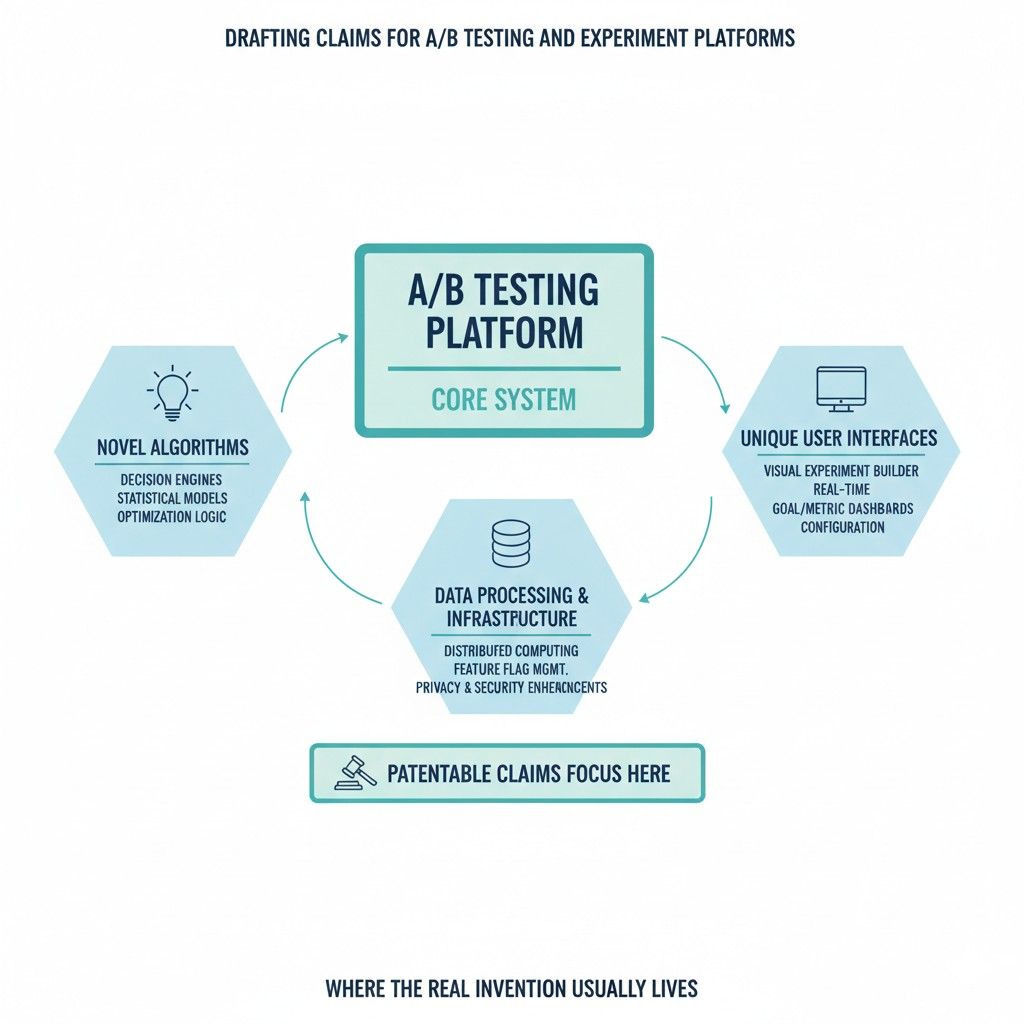

Where the Real Invention Usually Lives

Most A/B testing founders look at the wrong layer when they think about patents. They look at the test itself. They look at the dashboard. They look at the report.

But that is usually not where the strongest invention lives. The real invention is often buried deeper in the system, inside the logic that makes the platform useful, reliable, and hard to replace.

This matters for business because patents should protect what gives your product real leverage. A clean UI can be copied. A nicer graph can be copied.

Even a workflow can be copied if the core system under it is easy to rebuild. What is harder to copy is the hidden engine that keeps experiments accurate, safe, fast, and trusted in live production settings.

That is where businesses should focus when they want protection that actually means something.

The invention is rarely the idea of running a test

The idea of comparing two versions is not the breakthrough. That basic concept has been around for a long time.

A business does not gain much by trying to wrap a patent around the simple fact that one group sees version A and another group sees version B. That is too thin. It does not reflect the hard work your team did.

The stronger move is to ask what your system had to do in order to make that test work in the real world. Maybe the platform had to assign users in a stable way across channels.

Maybe it had to stop teams from corrupting results with overlapping experiments. Maybe it had to detect when event timing broke the analysis. Those are not small details. Those are the places where real engineering value lives.

The invention often lives in the part customers do not see

This is the first shift many businesses need to make. Patent value often hides in the invisible layer, not the visible one.

Users may love the interface, but customers stay because the system works. The hidden plumbing often carries more long-term value than the screen they click through.

That hidden layer may include the assignment engine, the metric processing pipeline, the conflict detection logic, the rollout guardrails, the identity resolution system, or the model that decides when a result is stable enough to act on.

These pieces may never appear on a homepage, yet they are often the hardest parts to build and the hardest for a rival to copy cleanly.

The strongest claim targets are usually tied to system behavior

A strong patent story is not about what the platform says. It is about what the platform does. That difference matters. A report can say anything. A system behavior is harder to fake.

If your platform changes traffic allocation based on incoming signals, that is system behavior. If it holds back rollout when confidence is weak but risk is high, that is system behavior.

If it preserves user assignment when identity data changes midstream, that is system behavior. These are the kinds of actions that tend to support stronger claims because they show a technical process, not just an outcome.

The real invention may sit in how the platform handles uncertainty

Experiment platforms live in uncertainty. Data is incomplete. Users move across devices. Events arrive late. Metrics drift. Traffic changes. Some teams treat these as annoying edge problems.

In patent work, these messy conditions are often where the best invention material sits.

A system that performs well only when data is clean is not very impressive. A system that still gives usable results when the environment becomes messy is much more interesting.

If your platform has a special way to manage weak signals, delayed events, identity gaps, or noisy conversion paths, that may be where the invention really lives.

The invention can live in the safeguards, not just the test engine

Many teams focus on the part that launches a test. That matters, but the stronger invention may be in the safeguards around that launch.

Businesses often gain more value from a platform that prevents bad decisions than from one that simply measures outcomes.

A safeguard may be a rule engine that blocks a rollout when key metrics fall outside safe ranges. It may be a detection layer that identifies sample pollution before analysis begins.

It may be a system that pauses experiment exposure when traffic becomes abnormal. It may be a workflow that reroutes test execution when a service dependency fails.

These kinds of protections can be patentable because they solve hard technical problems in live systems.
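To make the first safeguard concrete, here is a minimal sketch of a rule check that blocks a rollout when a key metric falls outside a safe range. The names `Guardrail`, `evaluate_guardrails`, and `rollout_decision`, and the example metrics, are hypothetical and only illustrate the idea. A real platform would likely add time windows, statistical tests, and alerting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrail:
    """A safe range for one monitored metric (hypothetical structure)."""
    metric: str
    min_value: float
    max_value: float


def evaluate_guardrails(metrics: dict[str, float], guardrails: list[Guardrail]) -> list[str]:
    """Return a description of every guardrail that is violated."""
    violations = []
    for rule in guardrails:
        value = metrics.get(rule.metric)
        if value is None:
            # Assumption: a missing metric is treated as unsafe, not ignored.
            violations.append(f"{rule.metric}: missing")
        elif not (rule.min_value <= value <= rule.max_value):
            violations.append(
                f"{rule.metric}: {value} outside [{rule.min_value}, {rule.max_value}]"
            )
    return violations


def rollout_decision(metrics: dict[str, float], guardrails: list[Guardrail]) -> str:
    """Block the rollout if any guardrail is violated, otherwise proceed."""
    return "block" if evaluate_guardrails(metrics, guardrails) else "proceed"


rules = [
    Guardrail("error_rate", 0.0, 0.02),
    Guardrail("p95_latency_ms", 0.0, 800.0),
]
healthy = {"error_rate": 0.01, "p95_latency_ms": 420.0}
degraded = {"error_rate": 0.05, "p95_latency_ms": 420.0}
```

The point for drafting is that the sketch names inputs (metric readings), a condition (a range check), and a system action (block or proceed). That is the shape a claim can follow.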

The real invention is often the bridge between analysis and action

A lot of platforms stop at reporting. The stronger ones go further. They turn experiment results into controlled product action.

That bridge from analysis to action is often where the business value becomes real and where the invention becomes more defensible.

If your platform does more than show numbers, pay attention. A system that automatically changes exposure, adjusts allocation, updates feature flags, or triggers rollout decisions based on performance signals is doing something more powerful than a normal experiment dashboard.

It is operating the product based on experiment intelligence. That operating logic can become a very strong patent target.
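As a rough illustration of that bridge, the sketch below turns two signals into an exposure change. The ramp steps, the `min_confidence` default, and the function name `next_exposure` are assumptions made for this example, not a description of any particular product.

```python
# Hypothetical ramp schedule: share of traffic exposed at each stage.
RAMP_STEPS = [0.01, 0.05, 0.25, 0.5, 1.0]


def next_exposure(
    current: float,
    confidence: float,
    guardrails_ok: bool,
    min_confidence: float = 0.95,
) -> float:
    """Decide the next exposure level from experiment signals.

    - Guardrail failure: roll back to zero exposure.
    - Confidence below the bar: hold at the current level.
    - Otherwise: advance to the next step in the ramp schedule.
    """
    if not guardrails_ok:
        return 0.0
    if confidence < min_confidence:
        return current
    for step in RAMP_STEPS:
        if step > current:
            return step
    return current  # already at full exposure
```

For example, `next_exposure(0.05, 0.97, True)` moves exposure to the next step, while the same call with `guardrails_ok=False` rolls it back to zero. The analysis result directly changes what the product does next.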

The best patent material usually starts with a question from the field

One useful pattern shows up again and again. The most valuable invention areas often start with an ugly operational question from a customer or an internal team.

What happens when the same user appears under two identifiers? What happens when a test spans multiple services? What happens when event logging is delayed?

What happens when one team’s test affects another team’s metric?

Each of those questions points to a real system challenge. If your platform has a distinct answer, you may be looking at core invention material.

Businesses should collect these questions because they reveal exactly where the platform had to become smarter than a generic tool.

The invention may be buried in assignment logic

Assignment is one of the most common places where real invention hides. Many people outside the engineering team assume assignment is simple. It often is not.

The moment users switch devices, log in late, appear through multiple channels, or pass through more than one service, assignment becomes a hard systems problem.

If your platform includes a way to preserve consistency across changing identity states, manage assignment across distributed environments, or prevent contamination when users re-enter a test path, that is worth serious attention.

A business should not describe this as background plumbing. It should treat it as a candidate for core claim coverage.
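A small sketch can show why this is more than plumbing. The example below assumes a hash-based bucketing scheme and a simple alias table that links an anonymous identifier to an account once the user logs in. The class `StickyAssigner`, the function `bucket`, and the policy that an existing account assignment wins are all hypothetical choices for illustration.

```python
import hashlib


def bucket(experiment_id: str, unit_id: str, weights: dict[str, float]) -> str:
    """Map a unit to a variant deterministically by hashing experiment and unit id."""
    digest = hashlib.sha256(f"{experiment_id}:{unit_id}".encode()).digest()
    point = int.from_bytes(digest[:8], "big") / 2**64  # position in the unit interval
    cumulative = 0.0
    for variant, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return variant
    return list(weights)[-1]  # guard against floating-point rounding


class StickyAssigner:
    """Keeps a user's variant stable when an anonymous id is later tied to an account."""

    def __init__(self, experiment_id: str, weights: dict[str, float]) -> None:
        self.experiment_id = experiment_id
        self.weights = weights
        self._assigned: dict[str, str] = {}  # canonical id -> variant
        self._alias: dict[str, str] = {}     # anonymous id -> account id

    def assign(self, identifier: str) -> str:
        key = self._alias.get(identifier, identifier)
        if key not in self._assigned:
            self._assigned[key] = bucket(self.experiment_id, key, self.weights)
        return self._assigned[key]

    def link(self, anonymous_id: str, account_id: str) -> None:
        """Tie an anonymous id to an account.

        Assumption: if the account has no assignment yet, it inherits the
        anonymous one; if it already has one, the account's assignment wins.
        """
        if anonymous_id in self._assigned and account_id not in self._assigned:
            self._assigned[account_id] = self._assigned[anonymous_id]
        self._alias[anonymous_id] = account_id


assigner = StickyAssigner("checkout-test", {"control": 0.5, "treatment": 0.5})
before_login = assigner.assign("anon-1")
assigner.link("anon-1", "user-9")
after_login = assigner.assign("user-9")
```

Here `before_login` and `after_login` are the same variant, so the user does not switch treatments at login. The state change (an alias recorded, an assignment carried over) is the kind of concrete step a claim can describe.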

Why assignment logic matters commercially

From a business angle, assignment logic is not just a backend detail. It affects customer trust. If assignment breaks, results break. When results break, the platform loses credibility.

That means a technical solution in this area can support both product value and patent value at the same time.

The invention may sit in metric cleaning and result integrity

Many experiment platforms look similar on the surface because they all show lifts, trends, and conversions.

The real difference is often in how the numbers become reliable enough to use. That is where result integrity becomes a rich area for patent strategy.

A business may have built special logic to filter invalid sessions, correct event timing drift, treat missing data in a certain way, or preserve attribution when users move across environments.

Those methods may not look exciting in a product demo, but they can carry major weight in a patent application because they solve concrete technical problems that affect experiment validity.

The invention often appears where teams had to stop bad tests

A surprisingly strong signal is this: look for moments where the platform had to stop people from doing something harmful. That is often where the deepest product logic sits.

Good experiment platforms do not just help teams launch tests. They stop them from launching broken tests, unsafe tests, misleading tests, or conflicting tests.

If your system validates setup against dependency rules, checks metric conflict before launch, identifies audience overlap, or prevents exposure when sample quality drops below a threshold, those are not just product features.

They are examples of problem-solving logic that may support strong claim language.
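One of those checks, audience overlap combined with a shared metric, can be sketched in a few lines. The `Experiment` structure and the `find_conflicts` function are hypothetical, and the rule used here (flag a conflict only when both audience and metrics overlap) is an assumption chosen to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    """Minimal experiment description (hypothetical fields)."""
    name: str
    audience: frozenset[str]  # segment labels the experiment targets
    metrics: frozenset[str]   # metrics the experiment reads


def find_conflicts(
    candidate: Experiment, running: list[Experiment]
) -> list[tuple[str, list[str], list[str]]]:
    """List running experiments that share both audience and metrics with the candidate."""
    conflicts = []
    for other in running:
        shared_audience = candidate.audience & other.audience
        shared_metrics = candidate.metrics & other.metrics
        if shared_audience and shared_metrics:
            conflicts.append((other.name, sorted(shared_audience), sorted(shared_metrics)))
    return conflicts


pricing = Experiment("pricing-page", frozenset({"new_users"}), frozenset({"conversion"}))
onboarding = Experiment("onboarding-v2", frozenset({"new_users", "trial"}), frozenset({"conversion"}))
search = Experiment("search-rank", frozenset({"returning"}), frozenset({"conversion"}))

conflicts = find_conflicts(onboarding, [pricing, search])
```

In this example only `pricing-page` is flagged, because it targets the same segment and reads the same metric. A launch gate could refuse to start `onboarding-v2` until that conflict is resolved.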

The invention can live in coordination across experiments

As products scale, experiments stop being isolated events. Many are running at once. Different teams are involved. Shared metrics get touched from multiple directions.

One change in onboarding affects another change in pricing or retention. Coordination becomes hard, and this is where platforms can develop truly protectable value.

If your system can detect interference, sequence tests intelligently, isolate effects across related experiments, or adjust execution based on shared dependencies, that deserves a close look.

A patent in this area can be strategically useful because it protects the platform’s ability to operate well in larger, more chaotic product environments.

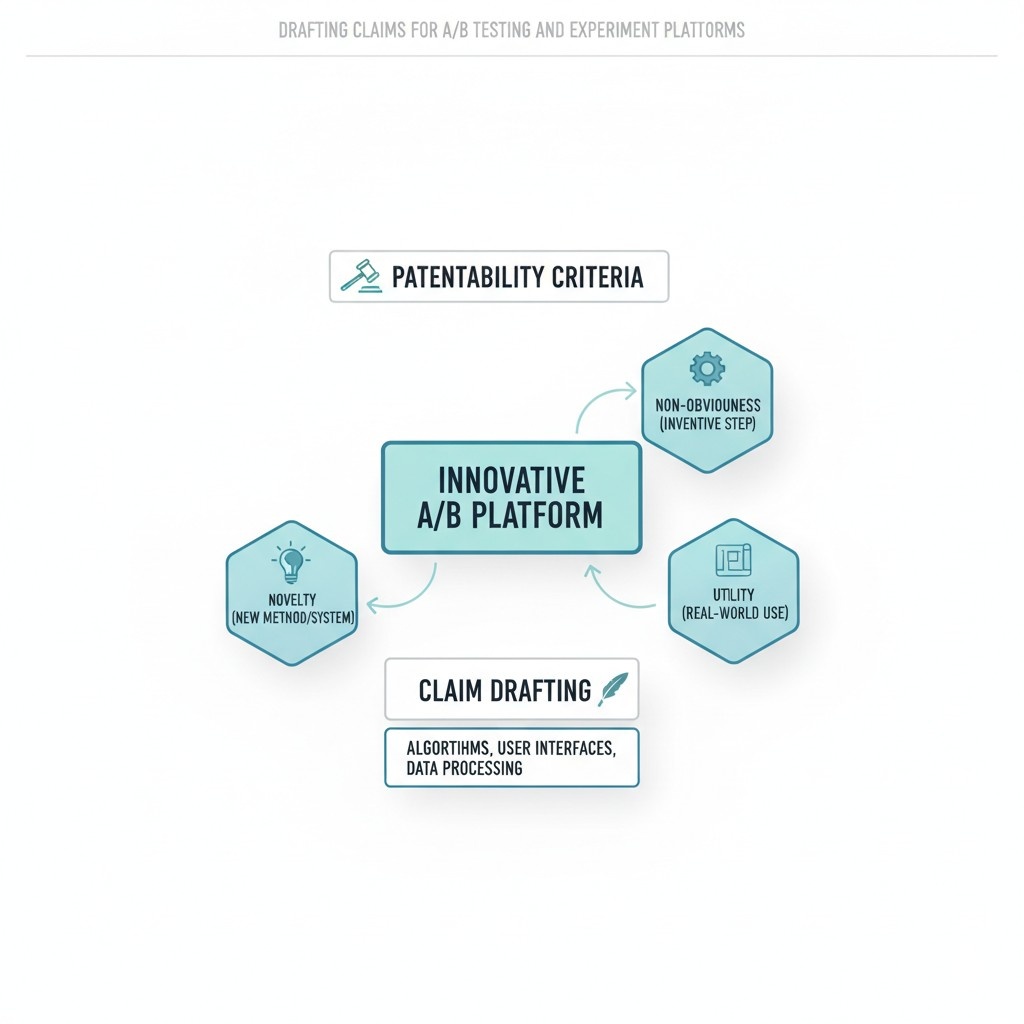

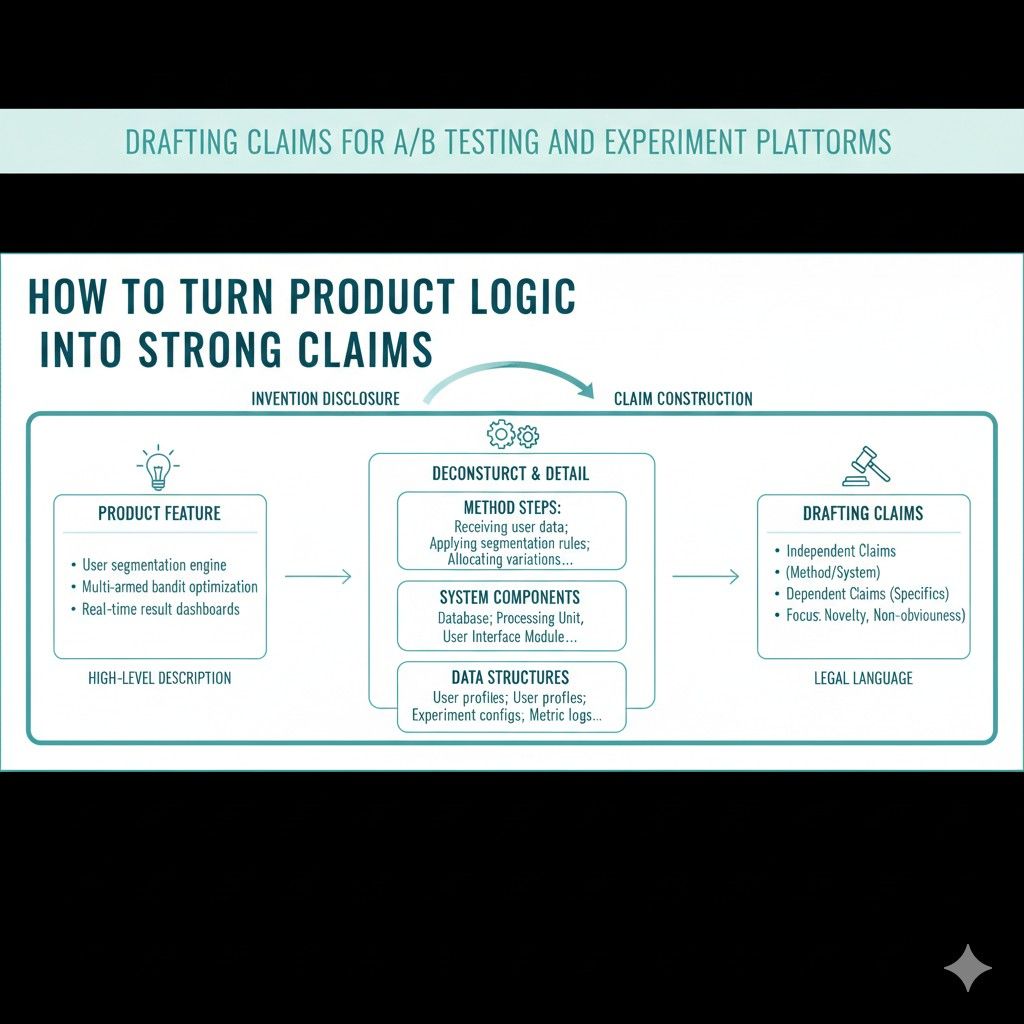

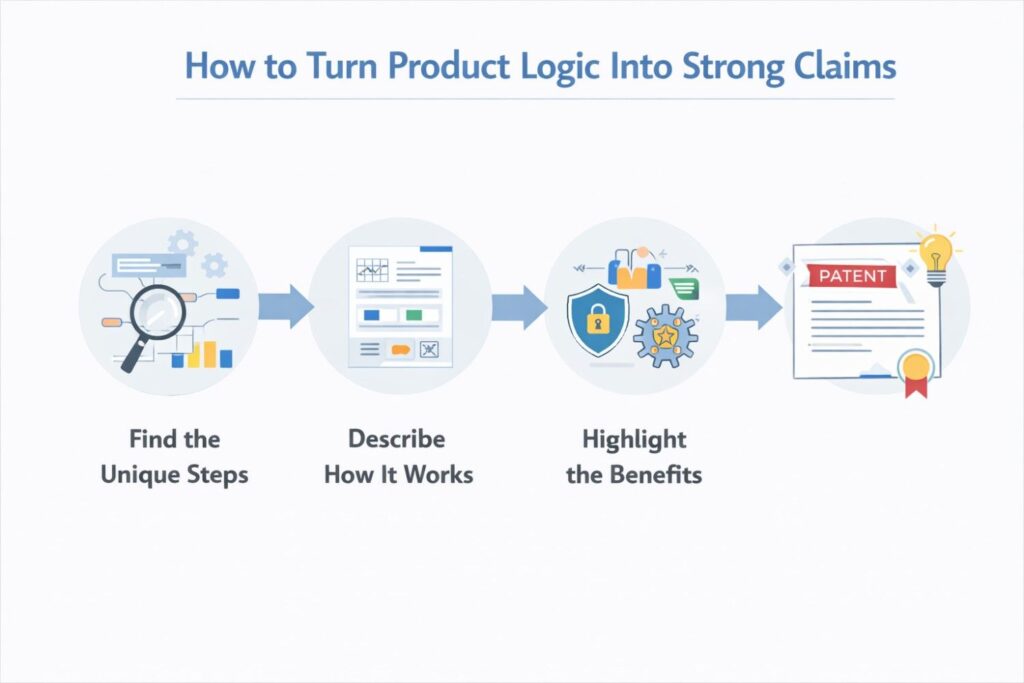

How to Turn Product Logic Into Strong Claims

A lot of teams know their product is smart, but they struggle to explain that smartness in a way that becomes real patent protection.

They talk about what the platform does for the user, but not how the system actually works under the hood.

That gap is where weak patent claims often begin. If the goal is to protect a serious business advantage, then product logic has to be translated into claim language with care.

For a business, this section matters because a patent does not protect your pitch. It does not protect your homepage wording. It does not protect a vague idea that your platform helps teams run better experiments.

What it can protect is the technical process your system performs to solve a real problem.

That means the company needs to take hidden logic, turn it into a clear system story, and then shape that story into claims that are broad enough to matter and specific enough to hold up.

Start with the system behavior, not the feature name

The first step is to stop using product labels as a shortcut. A feature name may help with sales, but it usually does very little for claim drafting.

Names like smart allocation, adaptive rollout, or unified experimentation may sound strong in a deck, yet they do not tell anyone what the system is actually doing.

The better move is to describe the behavior. What inputs does the platform receive? What conditions does it evaluate? What decision does it make? What action does it take next?

This is the raw material of a strong claim. When a business can explain the sequence clearly, claim drafting becomes much easier and much more useful.

Describe what changes inside the system

A claim becomes stronger when it describes a real change happening inside the platform.

That change might be a change in assignment state, traffic exposure, event treatment, metric qualification, identity mapping, or rollout status.

These are concrete things. They show that the invention is not just an idea floating in business language. It is a working process happening inside a technical environment.

This is where many teams miss a major opportunity. They say the system improves decision-making, but they never explain what the system actually changes to make that improvement happen.

Businesses should push deeper. They should write down the internal state transitions the platform performs because those transitions often become the backbone of good claims.

A claim should follow the logic path the platform actually uses

Strong claims usually come from the real decision path inside the product. That means the drafting should reflect the actual flow the system follows when it is running.

If the platform receives event data, resolves identity, checks experiment eligibility, assigns a treatment, monitors a metric threshold, and then adjusts exposure, that logic path matters. It tells the patent story in an operational way.

That operational framing helps the business in two ways. First, it creates claims that map to the real product, which makes the application more grounded and easier to support.

Second, it creates stronger business protection because the claims are tied to the engine competitors would likely need to copy.

Product logic should be broken into steps before drafting begins

Before claim language is drafted, the company should break the product logic into simple steps in plain words. This should happen before anyone tries to sound formal.

The goal is to capture the logic cleanly, not elegantly. A useful internal draft often sounds almost too simple at first. That is fine. Clarity matters more than polish at this stage.

For example, instead of saying the platform intelligently optimizes experiment delivery, the business should explain that the system receives traffic data for an active experiment, compares the traffic data against a stability rule, determines that the assigned group sizes have drifted beyond a threshold, and updates assignment weights to restore a target distribution.

That kind of statement is already much closer to claim-ready material.
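The same four steps can be written as a short sketch. The function name `rebalance_weights`, the `max_drift` default, and the rule for computing corrective weights are assumptions for illustration. It also assumes the target shares sum to one and that every counted variant appears in the target.

```python
def rebalance_weights(
    counts: dict[str, int],
    target: dict[str, float],
    next_batch: int,
    max_drift: float = 0.05,
) -> dict[str, float]:
    """Return assignment weights for the next batch of traffic.

    1. Receive traffic data (counts per variant).
    2. Compare observed shares against the target (the stability rule).
    3. If drift exceeds max_drift, compute corrective weights.
    4. Otherwise keep the target weights unchanged.
    """
    total = sum(counts.values())
    if total == 0:
        return dict(target)

    drift = max(abs(counts.get(v, 0) / total - share) for v, share in target.items())
    if drift <= max_drift:
        return dict(target)

    # How many more units each variant needs so that, after next_batch
    # assignments, cumulative shares match the target distribution.
    needed = {
        v: max(0.0, share * (total + next_batch) - counts.get(v, 0))
        for v, share in target.items()
    }
    scale = sum(needed.values())
    return {v: n / scale for v, n in needed.items()}


corrected = rebalance_weights({"A": 600, "B": 400}, {"A": 0.5, "B": 0.5}, next_batch=1000)
steady = rebalance_weights({"A": 510, "B": 490}, {"A": 0.5, "B": 0.5}, next_batch=1000)
```

With 600 and 400 units already assigned, the sketch shifts the next batch toward variant B. With 510 and 490, the drift is inside the threshold and the weights stay at the target.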

The claim should protect the mechanism, not just the result

This is one of the most important strategic points. A weak claim often focuses on the result.

A strong claim focuses on the mechanism that creates the result. Saying the platform improves experiment accuracy is not enough. Many people can say that. The question is how accuracy is improved.

Maybe the system filters delayed events using a timing window tied to event source reliability. Maybe it preserves treatment continuity by linking session identifiers to a later-resolved account identifier.

Maybe it detects overlapping tests by comparing exposure rules before assignment is finalized. These mechanisms are far more valuable to claim because they describe what the platform actually does to get the benefit.
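To show what a mechanism looks like once it is written down, here is a minimal sketch of the first example: filtering delayed events with a timing window that depends on the event source. The `Event` fields, the per-source windows, and the function `split_by_timeliness` are hypothetical, and the specific window values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """A tracked event with its source and two timestamps in seconds."""
    source: str
    occurred_at: float
    received_at: float


def split_by_timeliness(
    events: list[Event],
    max_delay_by_source: dict[str, float],
    default_max_delay: float = 60.0,
) -> tuple[list[Event], list[Event]]:
    """Separate events that arrived within their source's window from those that did not."""
    accepted, rejected = [], []
    for event in events:
        allowed = max_delay_by_source.get(event.source, default_max_delay)
        delay = event.received_at - event.occurred_at
        # Assumption: a negative delay (clock skew) is also treated as invalid.
        if 0 <= delay <= allowed:
            accepted.append(event)
        else:
            rejected.append(event)
    return accepted, rejected


# Placeholder windows: each source gets its own allowed delay.
windows = {"server": 30.0, "mobile_sdk": 600.0}
events = [
    Event("server", occurred_at=100.0, received_at=110.0),
    Event("server", occurred_at=100.0, received_at=200.0),
    Event("mobile_sdk", occurred_at=100.0, received_at=500.0),
]
accepted, rejected = split_by_timeliness(events, windows)
```

The second server event is excluded because it arrived after the server window, while the mobile event is kept because its source is given more time. The claim-worthy detail is the link between the source and the window, not the fact that events are filtered.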

Businesses should capture the reason the logic was created

Claim drafting gets much better when the business can explain why the logic exists. The reason usually points straight to the technical problem being solved.

That problem gives shape to the claims. It also helps prevent the invention from being described too broadly or too vaguely.

If the logic was created because users moved between anonymous and logged-in states during active experiments, that matters. If it was created because delayed events made metrics unreliable, that matters.

If it was created because concurrent tests were contaminating each other, that matters.

Businesses should document the pressure that forced the system design, because that pressure often reveals what the claim needs to capture.

Turn the hard part into the center of the claim

Many startups accidentally center claims around the easy part of the product because that part is easier to describe.

That is a mistake. The easy part is usually the part competitors can avoid or rebuild differently. The hard part is where the real moat sits.

If the difficult part was preserving stable treatment assignment across device changes, that is where attention should go.

If the difficult part was safely shifting rollout levels based on noisy live data, that should sit near the core of the claims.

The business should not hide the hardest engineering work in the background section. It should often be at the center of the drafting strategy.

A useful internal question

A very practical question for the product team is this: what part of this workflow took the longest to get right? The answer often reveals the mechanism that deserves claim focus.

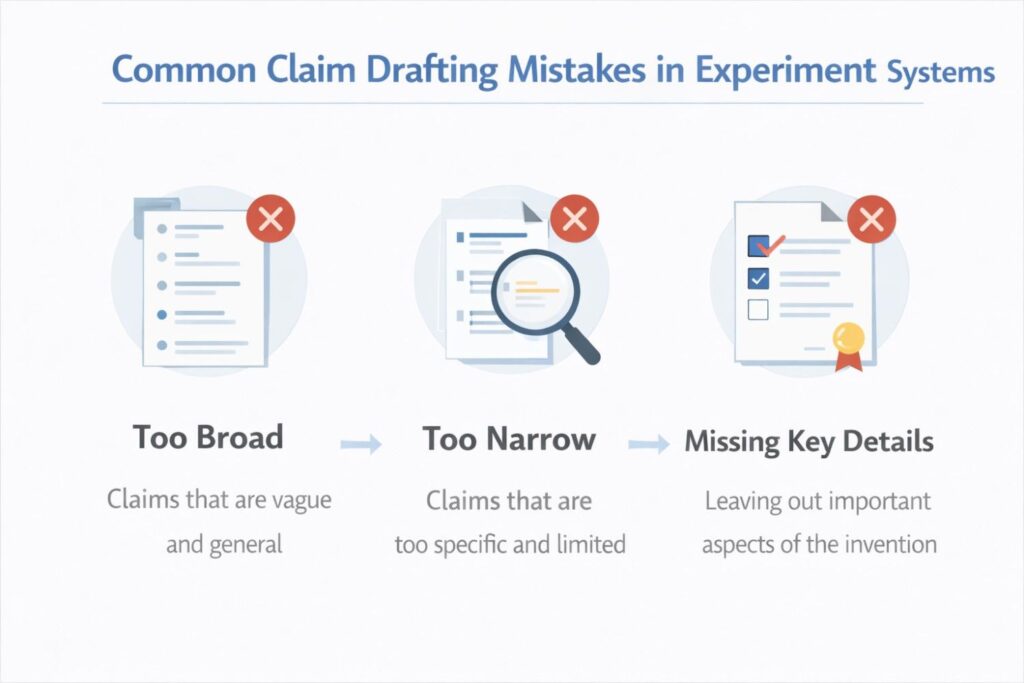

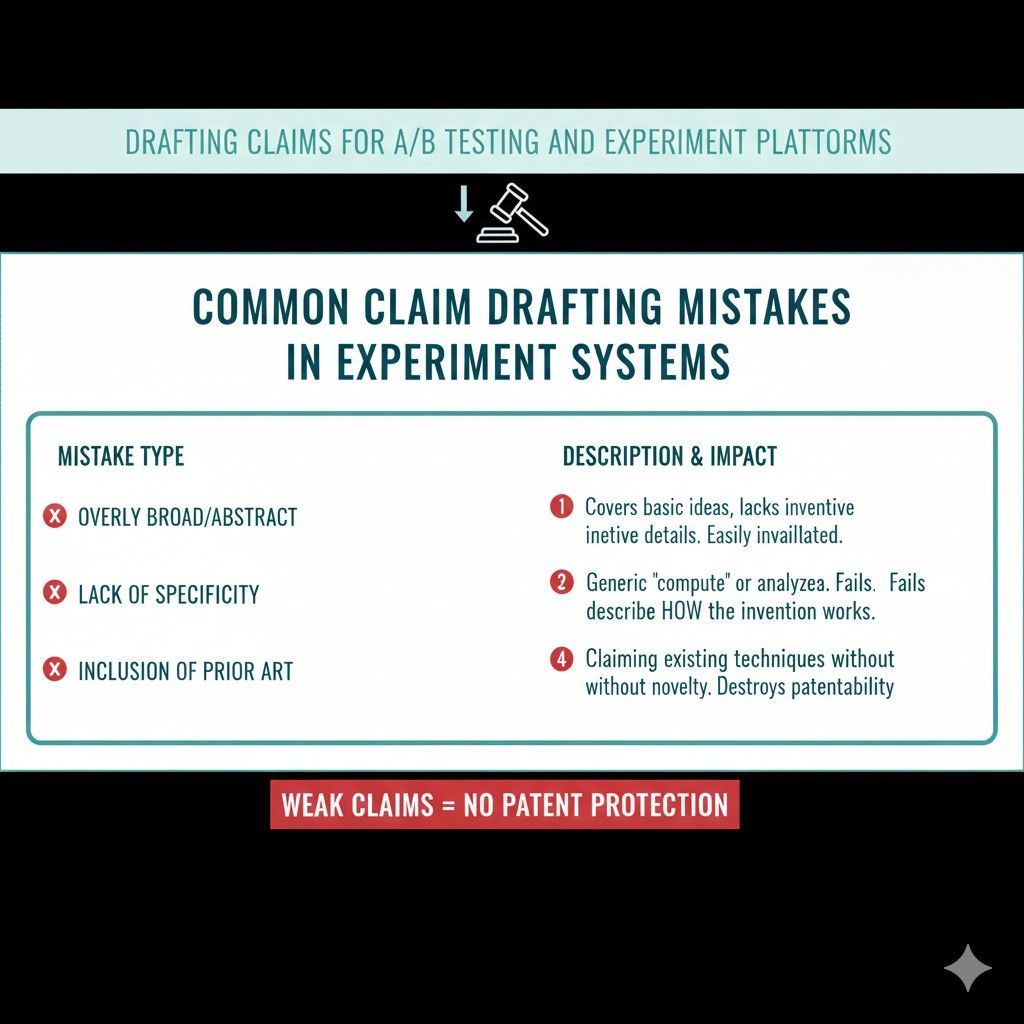

Common Claim Drafting Mistakes in Experiment Systems

Claim drafting often goes wrong in experiment systems because teams describe what looks impressive on the surface instead of what actually creates value underneath.

That mistake is easy to make. Experiment platforms have dashboards, reports, controls, alerts, and rollout views that feel important because users see them every day.

But strong patent claims do not come from what feels polished. They come from the technical logic that makes the system trustworthy, useful, and hard to copy.

For a business, this section matters because poor claim drafting does more than weaken legal protection. It can waste time, shrink future options, and leave the real product moat exposed.

A company may think it protected its core invention when it really protected a thin version that rivals can avoid with small changes. The good news is that these mistakes are common, visible, and fixable once you know where to look.

Claiming the idea of testing instead of the actual invention

This is one of the most common mistakes in the space. A company tries to claim the general idea of presenting different versions, collecting user responses, and picking a winner.

That sounds broad, but broad is not the same as strong. In most cases, that kind of claim lands on ground that is already crowded and easy to challenge.

The better path is to focus on the actual system logic that makes the platform different.

If the product preserves assignment through identity changes, filters corrupted events before analysis, or coordinates overlapping experiments across services, that is far more valuable than trying to own the basic act of running a test.

Businesses should remember that a claim becomes stronger when it protects the hard part of the product, not the oldest part of the category.

Writing claims around a dashboard instead of a technical process

Many experiment products have beautiful interfaces, and that creates a trap. Teams start describing screens, controls, and views as though those things are the invention.

In most cases, they are not. A dashboard may help the customer use the product, but it often does not reflect the technical engine that gives the platform its edge.

A stronger strategy is to treat the interface as proof that the system exists, not as the center of claim coverage.

The real question is what the platform is doing behind the scenes when the dashboard displays a result, blocks a rollout, detects a conflict, or changes an allocation.

When drafting gets stuck at the screen level, the patent usually ends up narrow and easy to work around.

Focusing on outcomes without explaining mechanisms

This mistake shows up in almost every weak draft. The claims say the platform improves experiment quality, increases result accuracy, or makes rollout safer, but they never explain how that happens.

That creates a serious weakness because results alone are not enough. Many systems can claim similar benefits using different methods.

The business needs to capture the mechanism. Maybe the platform applies a rule set to identify polluted samples before metric computation.

Maybe it links anonymous and authenticated identifiers to preserve treatment continuity.

Maybe it updates traffic allocation only when a stability check passes. Those kinds of details matter because they define the invention in a way that can actually be examined, supported, and enforced.

Using product marketing words in place of system language

This mistake feels harmless at first, but it weakens the whole application. Product teams naturally use phrases that sound good in demos.

They talk about smart rollout, adaptive allocation, trusted experimentation, or unified insights. Those phrases may help sell the product, but they usually do not explain the technical operation clearly enough for strong claims.

A better drafting approach translates those terms into system behavior.

Instead of saying the platform adaptively allocates traffic, the draft should explain what inputs are monitored, what conditions are checked, what threshold or rule is applied, and what adjustment is made when the condition is satisfied.

That shift from marketing language to system language often separates a soft draft from a serious one.

Drafting claims too close to one product release

Another common mistake is writing claims that mirror the current product version too closely. This often happens when the team uses the latest interface, naming conventions, and implementation details as the template for the whole application.

The result may fit the current release well, but it can become outdated fast and give competitors room to avoid infringement with small structural changes.

A better business move is to anchor the claims in the core technical logic that will likely remain valuable across versions. That means protecting the mechanism and the decision flow, while avoiding details that only reflect a temporary design choice.

The goal is not to float into vagueness. The goal is to protect the enduring engine inside the product rather than the outer shell of one moment in time.

Writing claims that are too abstract to survive review

Some teams hear that patents should be broad, so they strip out too much detail. They end up with claims that sound big but do not say enough.

This can be a serious problem in experiment systems because the field is full of generic activity like assigning users, gathering metrics, and comparing results.

If the claims stay at that level, they can appear thin, familiar, and unsupported.

The solution is not to become overly narrow. The solution is to include meaningful technical structure.

A claim can still be broad while identifying the actual data being used, the decision being made, and the system change that follows.

Businesses should think in terms of operational clarity. The draft should show what the platform really does, even when it is written to cover more than one implementation.

Writing claims that are too narrow to matter

The opposite mistake happens too. Some drafts get so tied to a specific implementation that they stop being strategically useful.

They might describe one exact threshold value, one precise event format, one interface sequence, or one code-level architecture. That can make the claim easier to support, but it can also make it easy for a competitor to step around it.

Strong drafting needs a better balance. The business should capture the invention at a level that protects the technical concept while still using dependent claims and examples to cover narrower versions.

This layered structure helps create protection with both reach and support. When every claim is locked to one exact implementation, the company may win a document but lose the moat.

Forgetting that edge cases often contain the strongest invention material

A lot of teams treat edge cases as cleanup details. They write the patent around the happy path because it seems simpler. In experiment systems, that often means leaving the strongest invention material on the table.

Real technical value frequently appears where the environment becomes messy.

That may include identity changes during an active test, delayed event arrival, partial metric data, conflicting experiments, traffic imbalance, or rollout decisions under service instability.

If the platform has special logic for these cases, it deserves serious claim attention. From a business view, edge-case handling is often what makes the system enterprise-ready and hard to replace. Ignoring it in the draft can leave a major gap.

Claiming the report instead of the decision engine

Experiment platforms often produce reports, and it is tempting to center the patent on the output. But reports are usually the visible end of the process, not the part that creates the advantage.

A chart or result summary may matter to the user, yet the more valuable invention often sits in the engine that generated the decision behind that report.

If the platform determines whether to widen exposure, stop a rollout, exclude a metric, or reassign traffic based on incoming signals, that is a richer technical target.

Businesses should be careful not to let the reporting layer overshadow the control logic. The control logic is often where the product becomes truly defensible.

Why this mistake hurts commercial protection

From a business angle, protecting the report alone may do very little to stop a rival. A competitor can generate a different style of report while copying the same decision logic underneath.

That is why the drafting focus should usually move closer to the system behavior that drives action, not just the presentation of results.

Leaving out the problem that forced the invention

A strong claim strategy usually starts with a clear technical problem. Weak drafts often skip that part or state it too vaguely.

They may say the invention improves testing performance, but they never explain what system-level failure, friction, or technical limit forced the new design.

That missing context matters. If the platform was built to solve identity instability, event timing drift, experiment interference, or rollout risk under noisy data, those specifics help shape the whole drafting approach.

They reveal why the mechanism exists and what the claims need to cover. Businesses should make sure the application tells the story of the problem in a concrete way because that story supports both clarity and depth.

Wrapping It Up

Patent claims for experiment platforms are only useful when they protect the part of the system that truly gives the business an edge. That is the big takeaway. The goal is not to describe A/B testing in a general way. The goal is to capture the engine inside the platform that makes decisions better, results more trusted, rollouts safer, and the product harder to copy.
