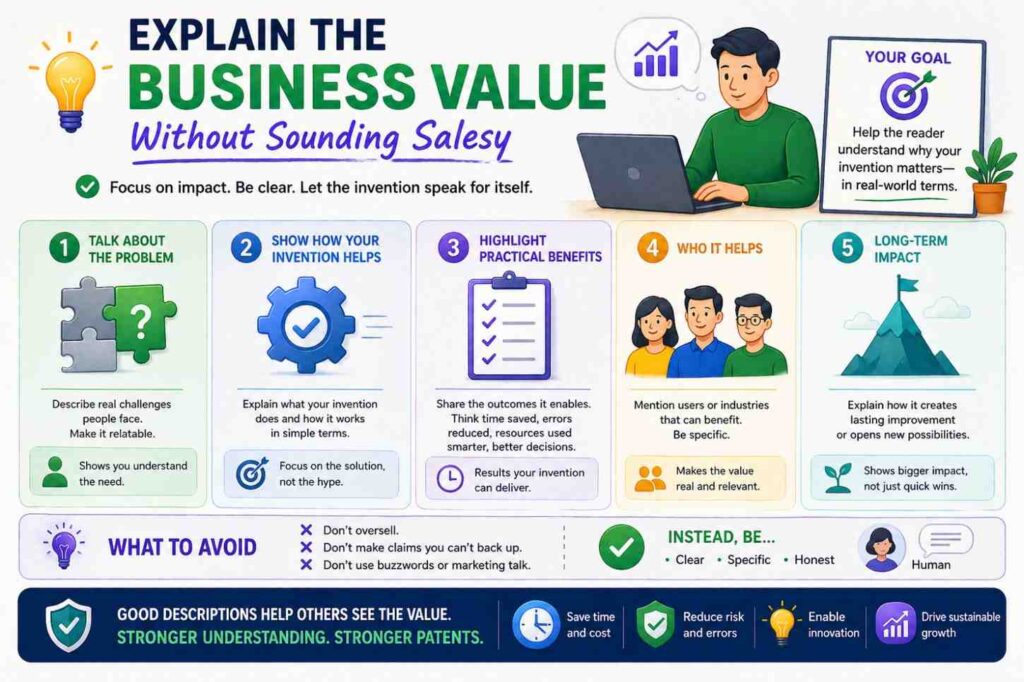

Security features can make a patent stronger when they are described well. They can show how an invention protects data, controls access, checks trust, stops misuse, or keeps a system safe.

But vague security words do not help much. A patent spec should explain what the security feature does, when it acts, what data it uses, and how the system changes because of it.

PowerPatent helps founders, engineers, and inventors turn technical systems into stronger patent filings with smart software and real patent attorney oversight. You can see how it works here: https://powerpatent.com/how-it-works

Why security features matter in patent specs

Security is not just a product add-on.

In many modern inventions, security is part of the system’s core value. A healthcare AI tool may need to hide patient data. A fintech platform may need to stop fraud. A cloud system may need to control who can see customer records. A robot may need to reject unsafe commands. A developer tool may need to protect source code. A device may need to prove that sensor data came from the right place.

Security can shape how the invention works.

That means security can also shape the patent.

But many patent specs describe security in a weak way. They say things like:

“The system may include security.”

“The data may be protected.”

“The user may be authenticated.”

“The system may use encryption.”

Those statements are not always wrong, but they are thin. They do not explain much. They may not support strong claims later. They may also sound like generic security language that could apply to almost any system.

A better patent spec explains the security feature as part of the technical workflow.

What is being protected?

Who or what is being checked?

What condition triggers the security step?

What happens if the check passes?

What happens if the check fails?

Does the security feature change access, processing, storage, routing, display, or control?

Does it create a record?

Does it update a trust score?

Does it select a safe mode?

Does it block an action?

Those details make security features useful in a patent.

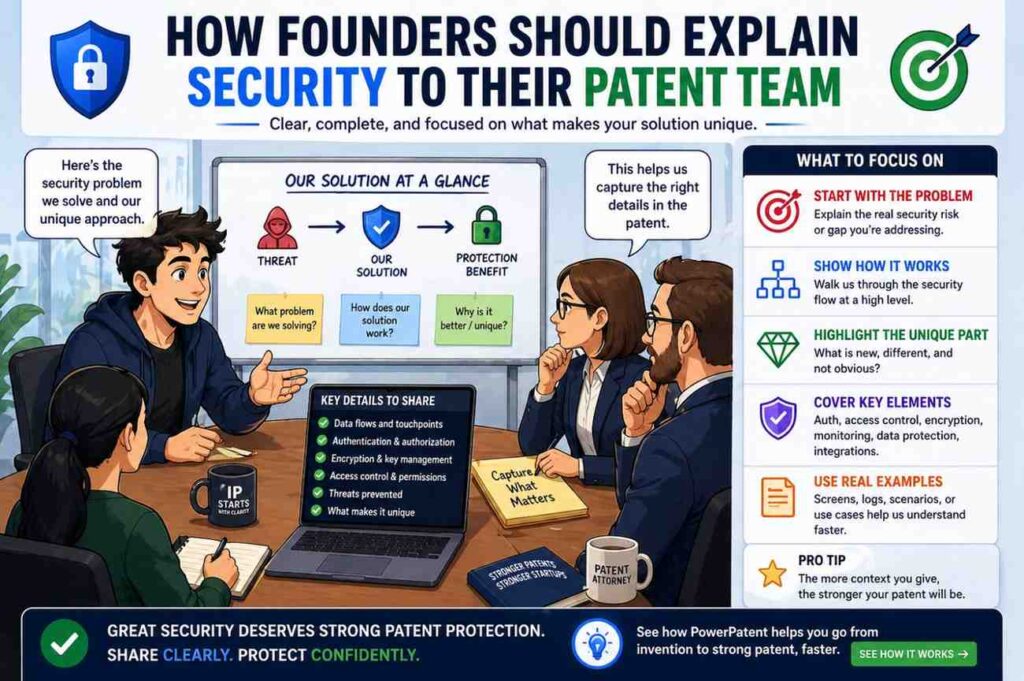

Start with the security problem

Before describing a security feature, explain the problem it solves.

Do not start with a buzzword. Start with the risk.

For example, instead of saying:

“The system uses encryption.”

You can say:

“In some examples, input data may include sensitive data. The system may encrypt the sensitive data before storing the sensitive data or sending the sensitive data to another device.”

That is already better.

It tells the reader what is protected and when encryption happens.

Instead of saying:

“The system uses authentication.”

Say:

“In some examples, the system may verify an identity of a user before allowing the user to view an output, change a setting, approve an action, or send data to another system.”

Now authentication has a role.

Instead of saying:

“The system detects tampering.”

Say:

“In some examples, the system may detect a change in device data that indicates tampering with a sensor, housing, firmware, or communication path. In response, the system may block a control action, generate an alert, or switch the device to a safe mode.”

That is strong.

Security language should not float above the invention. It should be tied to a real risk and a real system response.

Describe security as behavior, not decoration

A security feature should do something.

It should not just sit in the spec as a nice-sounding word.

A weak description says:

“The system includes access control.”

A stronger description says:

“The system may control access to input data, output data, review controls, settings, or stored records based on a user role, permission, device identifier, location, policy, or other access condition.”

Then it can go further:

“When the access condition is not satisfied, the system may hide the data, block the action, request additional verification, create an audit record, or send an alert.”

Now the security feature has behavior.

That is what a patent spec needs.

Think of each security feature as a decision point.

The system checks something.

The system decides something.

The system acts based on that decision.

That simple structure makes security descriptions clearer and stronger.
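To make the check, decide, act structure concrete, here is a minimal Python sketch. The role table, the function name, and the action strings are all made up for illustration. They are assumptions, not part of any real system.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission table, invented for this sketch.
ROLE_PERMISSIONS = {
    "viewer": {"view_output"},
    "approver": {"view_output", "approve_output"},
}


@dataclass
class Decision:
    allowed: bool
    action: str  # what the system does next


def check_access(role: str, requested: str) -> Decision:
    # Check: look up what the role permits (unknown roles permit nothing).
    permitted = ROLE_PERMISSIONS.get(role, set())
    # Decide and act: perform the request, or block it and record the attempt.
    if requested in permitted:
        return Decision(True, "perform_action")
    return Decision(False, "block_and_create_audit_record")


print(check_access("viewer", "approve_output"))
```

A spec does not need code like this. But if you can write the feature as a small function with a pass branch and a fail branch, you probably have enough detail to describe it well.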

Avoid generic security filler

Many specs include generic security paragraphs that could fit any product.

For example:

“The system may use encryption, authentication, authorization, firewalls, secure sockets layer, and other security methods.”

This may not hurt, but it usually does not do much.

It does not explain how security relates to the invention.

A stronger version is specific to the system:

“In some examples, the system may authenticate a device before accepting sensor data from the device. When the device is authenticated, the system may process the sensor data to generate an output. When the device is not authenticated, the system may reject the sensor data, route the sensor data for review, or mark a generated output as low confidence.”

This is much better.

It connects security to data trust and output generation.

For a fintech system:

“In some examples, the system may verify that a request is associated with an authorized account before generating a transaction approval output. If the request is not authorized, the system may block the transaction and store an audit record.”

For an AI system:

“In some examples, the system may check whether a user has permission to access selected context data before including the selected context data in a model input.”

For a robot:

“In some examples, the system may verify that a control command satisfies a safety rule before sending the control command to the robotic device.”

These examples are much more useful than a generic security list.

Identify what is being protected

Security features are clearer when the protected thing is named.

The protected thing may be data, access, model behavior, a device, a user account, a workflow, a command, a transaction, a file, a record, a key, a model, a sensor, a network link, or a physical asset.

For example:

“The system may protect patient data by masking selected fields before displaying the patient data to a user.”

“The system may protect a model by limiting access to model weights or model configuration data.”

“The system may protect a device by rejecting control commands that do not satisfy a safety rule.”

“The system may protect source code by checking user permission before sending code context to a model service.”

“The system may protect transaction data by verifying a signature associated with a request.”

This is plain and specific.

It tells the reader why the security feature matters.

A strong patent spec should not just say “security.” It should say what is protected and how.

Name the security trigger

Many security features happen only when something occurs.

That trigger should be described.

For example:

A user requests access.

A device sends data.

A model output is generated.

A control command is requested.

A file is uploaded.

A transaction is submitted.

A location changes.

A confidence score drops.

A device state changes.

A policy is updated.

A user role changes.

A network connection fails.

A sensor value looks strange.

A good spec may say:

“In response to receiving a request to view the output, the system may determine whether the user has permission to view the output.”

Or:

“When a device sends sensor data, the system may verify an identifier associated with the device.”

Or:

“Before sending a control signal, the system may determine whether the control signal satisfies a safety condition.”

This makes the security step part of the workflow.

It also supports method claims that depend on timing or conditions.

Explain what happens after the security check

A security feature is only weakly supported if the spec does not say what happens after the check.

For example, saying “the system authenticates the user” is incomplete.

What if authentication passes?

What if it fails?

A better description says:

“When authentication succeeds, the system may allow the user to access the output. When authentication fails, the system may deny access, request additional credentials, create an audit record, or send a security alert.”

This gives the patent more detail.

Another example:

“When the device signature is valid, the system may process the received device data. When the device signature is invalid, the system may reject the received device data or mark the received device data as untrusted.”

Another:

“When a command satisfies the safety rule, the system may send the command to the device. When the command does not satisfy the safety rule, the system may block the command and provide a warning through a user interface.”

This kind of pass/fail logic is very useful.

It turns security into a clear technical process.

Describe authentication carefully

Authentication means checking identity. It can apply to users, devices, services, applications, sensors, clients, servers, or external systems.

A patent spec should say who or what is authenticated.

For example:

“In some examples, the system may authenticate a user before allowing the user to access a user interface.”

That is a user authentication example.

“In some examples, the system may authenticate a device before accepting data from the device.”

That is device authentication.

“In some examples, the system may authenticate an external service before sending output data to the external service.”

That is service authentication.

Then describe how authentication may happen, but keep it flexible unless a specific method matters.

“The authentication may be based on a password, token, certificate, key, biometric value, device identifier, signed message, one-time code, session value, or other authentication factor.”

This gives room.

If one method is central, explain it in more detail.

For example:

“In some examples, the device may sign a message using a device key, and the system may verify the signed message before accepting data from the device.”

That is more specific and may support stronger claims.

Describe authorization separately from authentication

Authentication and authorization are related, but they are not the same.

Authentication asks: who are you?

Authorization asks: what are you allowed to do?

A user may be authenticated but still not allowed to view certain data or perform certain actions.

A patent spec should describe authorization if it matters.

For example:

“In some examples, after authenticating the user, the system may determine whether the user is authorized to view an output, change a setting, approve an action, download data, or send data to an external system.”

Then:

“The authorization may be based on a role, permission, policy, organization, data type, device, location, workflow state, or other access condition.”

This is useful.

It supports role-based workflows and enterprise security.

For example:

“A first user role may allow viewing an output. A second user role may allow approving the output. A third user role may allow changing a threshold or rule used to generate later outputs.”

That kind of detail can support claims around secure review and approval.

Describe role-based access

Role-based access is common in enterprise systems.

But saying “role-based access may be used” is thin.

A stronger description explains what roles affect.

For example:

“In some examples, the system may display different interface elements based on a user role. A first role may allow a user to view an output. A second role may allow the user to approve or reject the output. A third role may allow the user to change a system setting.”

Then explain system behavior:

“The system may enable or disable controls based on the user role.”

Or:

“The system may hide sensitive fields from a user who does not have a required role.”

Or:

“The system may create an audit record when a user with an approval role approves an output.”

This makes role-based access part of the invention flow.

Describe permission checks tied to data

A permission check is often strongest when tied to specific data.

For example:

“The system may determine whether a user has permission to access a first data field before displaying the first data field through a user interface.”

Or:

“The system may determine whether a model service has permission to receive selected context data before sending the selected context data to the model service.”

Or:

“The system may determine whether an external system has permission to receive an output before sending the output to the external system.”

These examples are concrete.

They can support claims around data privacy, AI context selection, secure APIs, and controlled workflows.

Describe data masking

Data masking is a useful security feature.

It means hiding or changing sensitive parts of data.

A weak description says:

“The system masks data.”

A stronger description says:

“In some examples, the system may mask one or more sensitive fields before displaying data to a user or sending the data to another system.”

Then give examples:

“The sensitive fields may include personal information, account information, health information, location information, source code, customer data, device identifiers, or other protected data.”

Then explain the result:

“The masked data may include a removed value, replaced value, shortened value, hashed value, tokenized value, generalized value, or summary value.”

This is much more useful.

If masking depends on a role or rule, say so:

“The system may select which fields to mask based on a user role, permission, policy, data type, output type, or destination.”

Now the security feature has real structure.
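One way to picture role-based masking is the short Python sketch below. The field names, roles, and masking rules are hypothetical and chosen only to show a "removed value" and a "shortened value" side by side.

```python
# Hypothetical policy: which fields each role may see unmasked.
VISIBLE_FIELDS = {
    "clinician": {"name", "diagnosis", "account_number"},
    "billing": {"name", "account_number"},
    "analyst": set(),
}


def mask_record(record: dict[str, str], role: str) -> dict[str, str]:
    """Return a copy of the record with fields masked for the given role."""
    visible = VISIBLE_FIELDS.get(role, set())  # unknown roles see nothing
    masked = {}
    for field, value in record.items():
        if field in visible:
            masked[field] = value
        elif field == "account_number":
            masked[field] = "****" + value[-4:]  # shortened value
        else:
            masked[field] = "[REDACTED]"  # removed value
    return masked


record = {"name": "Ada", "diagnosis": "flu", "account_number": "1234567890"}
print(mask_record(record, "analyst"))
```

Notice that the masking choice depends on both the role and the field. That is the kind of structure a spec can describe in words.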

Describe tokenization

Tokenization means replacing sensitive data with a token.

A patent spec may say:

“In some examples, the system may replace sensitive data with a token before storing or sending the data. The token may be used to refer to the sensitive data without exposing the sensitive data.”

Then:

“The system may store a mapping between the token and the sensitive data in a secure data store.”

Or:

“The system may allow only selected users or services to resolve the token to the sensitive data.”

This can be useful in payments, health, enterprise AI, and data processing systems.

Tie tokenization to the invention if possible.

For example:

“In some examples, tokenized data may be included in a model input while the underlying sensitive data is excluded from the model input.”

That is stronger for AI systems.
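Here is a rough Python sketch of that idea. The `TokenVault` class, the service names, and the prompt template are assumptions made up for this example. The in-memory dictionary only stands in for a secure data store.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a secure token-to-value store (hypothetical)."""

    def __init__(self, allowed_services: set[str]):
        self._token_to_value: dict[str, str] = {}
        self._allowed = allowed_services

    def tokenize(self, value: str) -> str:
        # Random token, so the token itself reveals nothing about the value.
        token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        return token

    def resolve(self, token: str, service: str) -> str:
        # Only selected services may turn a token back into the value.
        if service not in self._allowed:
            raise PermissionError(f"{service} may not resolve tokens")
        return self._token_to_value[token]


def build_model_input(template: str, patient_name: str, vault: TokenVault) -> str:
    # The model input carries the token, not the underlying sensitive data.
    return template.format(patient=vault.tokenize(patient_name))


vault = TokenVault(allowed_services={"billing"})
print(build_model_input("Summarize the visit for {patient}.", "Jane Roe", vault))
```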

Describe encryption by place and time

Encryption can happen in different places.

Data can be encrypted before storage.

Data can be encrypted during transfer.

Data can be encrypted on a device before being sent.

Data can be encrypted by a server before being stored.

Data can be encrypted per tenant, per user, per record, or per field.

A patent spec should say where and when encryption occurs.

For example:

“In some examples, the system may encrypt input data before storing the input data in a data store.”

Or:

“In some examples, a local device may encrypt sensor data before sending the sensor data to a remote server.”

Or:

“In some examples, the system may encrypt output data before providing the output data to an external system.”

If key management matters, describe it.

“The encryption may use a key associated with a user, device, organization, tenant, session, record, or data type.”

This supports more specific security claims.

Avoid just saying “encryption may be used.” Explain its role.

Describe key management when it matters

Keys can be central to security.

A system may generate keys, store keys, rotate keys, revoke keys, derive keys, assign keys to tenants, or use keys to sign and verify data.

If key handling is part of the invention, describe it.

For example:

“In some examples, the system may select an encryption key based on a tenant identifier associated with the data.”

Or:

“In some examples, the system may rotate an encryption key in response to a time condition, security event, user request, policy change, or detected risk.”

Or:

“In some examples, the system may revoke a key associated with a device when the device is marked as untrusted.”

Then explain what happens next:

“After revoking the key, the system may reject data signed by the device or prevent the device from receiving configuration updates.”

That is strong.

It shows how key management changes system behavior.

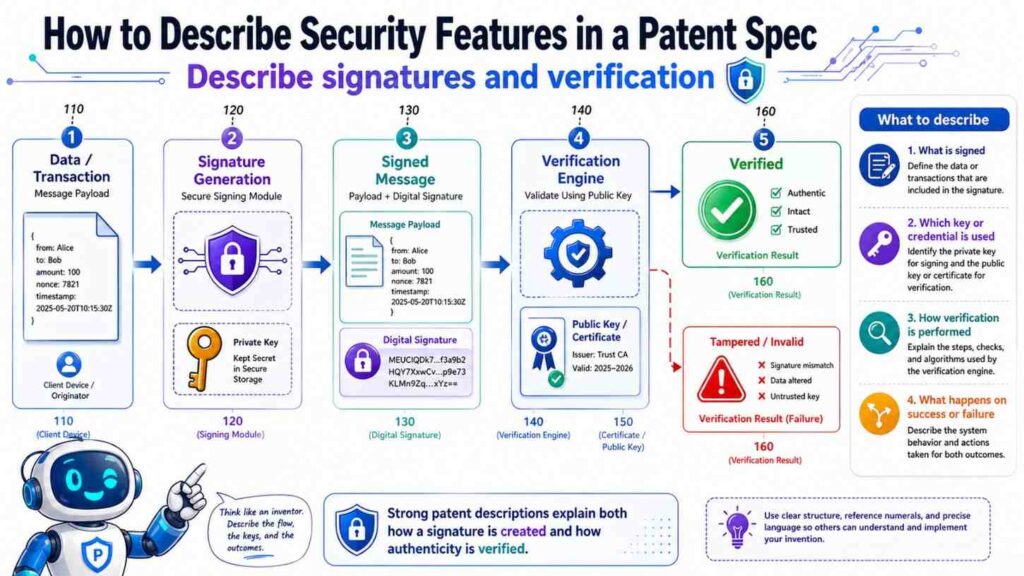

Describe signatures and verification

Digital signatures can help prove that data or commands came from a trusted source and were not changed.

A patent spec may say:

“In some examples, a device may generate a signature based on input data and a device key. The system may verify the signature before processing the input data.”

Then:

“When the signature is verified, the system may accept the input data. When the signature is not verified, the system may reject the input data, flag the input data, or route the input data for review.”

This is clear.

For control commands:

“In some examples, the system may sign a control command before sending the control command to a device. The device may verify the signature before performing the control command.”

This can be valuable for robotics, IoT, industrial systems, medical devices, and vehicles.
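The verify-then-accept flow can be sketched in a few lines of Python. One loud caveat: this sketch uses HMAC from the standard library, which is a shared-key message authentication code and not a true digital signature. A real system would more likely use an asymmetric signature scheme. The function names and return strings are made up.

```python
import hashlib
import hmac


def sign(message: bytes, device_key: bytes) -> str:
    # HMAC-SHA256 stands in for a signature here (shared key, an assumption).
    return hmac.new(device_key, message, hashlib.sha256).hexdigest()


def handle_device_data(message: bytes, signature: str, device_key: bytes) -> str:
    expected = sign(message, device_key)
    # compare_digest avoids leaking information through comparison timing.
    if hmac.compare_digest(expected, signature):
        return "accept"
    return "route_for_review"


key = b"example-device-key"
reading = b"temperature=21.5"
print(handle_device_data(reading, sign(reading, key), key))
print(handle_device_data(b"temperature=99.9", sign(reading, key), key))
```

The second call shows the fail branch: the data changed after signing, so the check does not pass and the data is routed for review.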

Describe secure communication

Networked systems send data between devices.

A patent spec may describe secure communication without being tied to one protocol.

For example:

“In some examples, data may be sent through a secure communication channel.”

Then:

“The secure communication channel may use encryption, authentication, certificates, keys, tokens, signed messages, or another security measure.”

If a specific flow matters:

“The client device may establish a secure session with the server before sending input data.”

Or:

“The device may verify a certificate associated with the server before sending sensor data.”

Or:

“The server may verify a token associated with the client device before accepting a request.”

This gives enough detail to be useful.

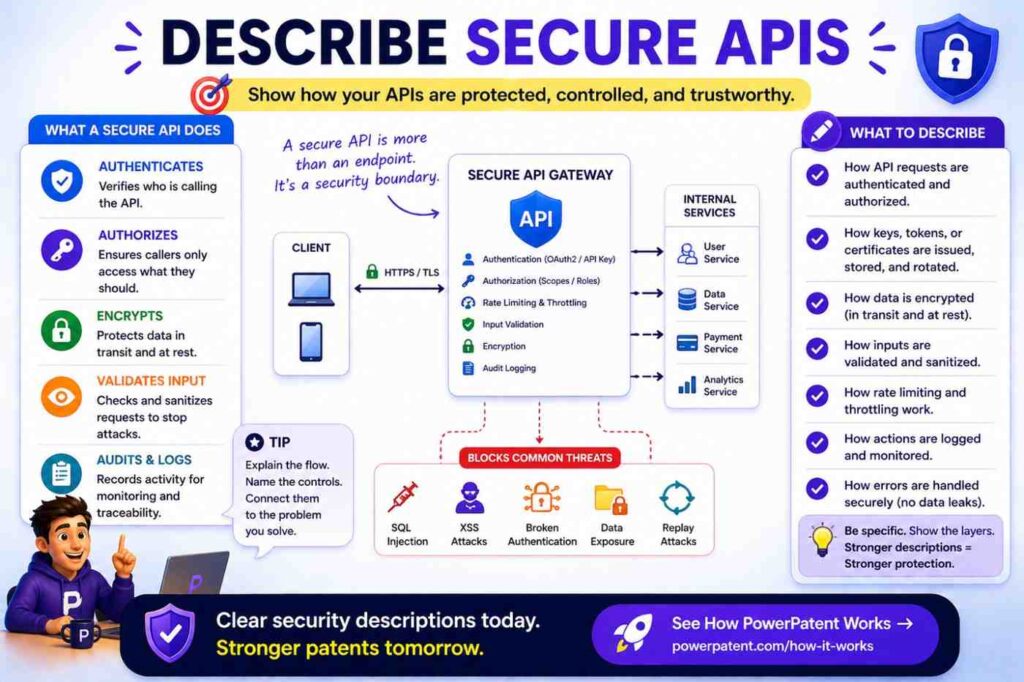

Describe secure APIs

Many security checks run at API boundaries.

A patent spec may say:

“In some examples, the system may receive an API request from a client or external system. The system may verify an access token, signature, credential, permission, or policy associated with the API request before processing the API request.”

Then:

“If the API request is verified, the system may process input data included in the API request and return an API response. If the API request is not verified, the system may reject the request, return an error, create an audit record, or send an alert.”

This is practical and patent-friendly.

If rate limiting matters:

“The system may limit a number of API requests based on a user, tenant, device, source address, risk score, or request type.”

Now you have support for abuse prevention too.

Describe rate limiting and abuse control

Rate limiting can prevent misuse, overload, scraping, fraud, or attacks.

A weak description says:

“The system may use rate limiting.”

A stronger description says:

“In some examples, the system may limit a number of requests accepted from a user, device, account, tenant, network address, API key, or external system during a time period.”

Then:

“The request limit may be based on a risk score, user role, account status, request type, system load, past behavior, or other factor.”

Then:

“When the request limit is exceeded, the system may reject a request, delay processing, require additional verification, lower a priority, or send an alert.”

This is useful.

It ties rate limiting to system behavior.
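As an illustration, a sliding-window limiter keyed by API key might look like the Python sketch below. The class name, the "accept" and "reject" strings, and the injectable clock are assumptions for this example only.

```python
import time
from collections import defaultdict, deque


class RateLimiter:
    """Allow at most `limit` requests per key within `window_seconds`."""

    def __init__(self, limit: int, window_seconds: float, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so the behavior is easy to test
        self._hits = defaultdict(deque)  # key -> timestamps of accepted requests

    def check(self, api_key: str) -> str:
        now = self.clock()
        hits = self._hits[api_key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return "reject"
        hits.append(now)
        return "accept"


limiter = RateLimiter(limit=2, window_seconds=60)
print([limiter.check("key-1") for _ in range(3)])
```

A spec could describe the same thing in words: a per-key count, a time period, and a defined action when the count is exceeded.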

Describe anomaly detection

Security systems often detect unusual activity.

But “anomaly detection” is vague unless explained.

A patent spec may say:

“In some examples, the system may detect an anomaly based on a difference between current activity and expected activity.”

Then explain activity:

“The activity may include login attempts, API requests, data access events, transaction events, device readings, model outputs, file changes, user actions, network traffic, or control commands.”

Then explain response:

“When an anomaly is detected, the system may block an action, require additional verification, lower a trust score, generate an alert, create an audit record, route the event for review, or change a processing mode.”

Now the feature is concrete.

If the anomaly detection itself is the invention, go deeper into how expected activity is generated, how scores are calculated, and how thresholds change.
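For instance, "a difference between current activity and expected activity" could be as simple as a z-score against recent history. The Python sketch below assumes that approach. The threshold of 3.0, the function names, and the response strings are illustrative choices, not a recommendation.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], current: float, threshold: float = 3.0) -> bool:
    """Flag `current` if it is far from the mean of `history` (z-score test)."""
    if len(history) < 2:
        return False  # not enough data to form an expectation
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current != mu
    return abs(current - mu) / sigma > threshold


def respond(history: list[float], current: float) -> str:
    # Tie detection to a system response, as the spec language does.
    if is_anomalous(history, current):
        return "require_additional_verification"
    return "allow"


logins_per_hour = [10, 12, 11, 9, 10, 11]
print(respond(logins_per_hour, 11))
print(respond(logins_per_hour, 40))
```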

Describe trust scores

Trust scores can be powerful in security patents.

A trust score may apply to a user, device, data source, request, output, model, service, or session.

A strong spec may say:

“In some examples, the system may generate a trust score associated with a device based on one or more trust factors.”

Then:

“The trust factors may include device identity, signature verification, past behavior, firmware version, location, network state, sensor consistency, error rate, or other factor.”

Then:

“The system may use the trust score to accept data, reject data, route data for review, select a processing path, limit access, or trigger an alert.”

This is excellent patent support.

It links security to decision-making.
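A simple way to picture this is a weighted score that selects a processing path. In the Python sketch below, the factor names, the point weights, and the thresholds are all invented for illustration.

```python
# Hypothetical trust factors and weights (points out of 100).
TRUST_WEIGHTS = {
    "signature_valid": 50,
    "firmware_current": 30,
    "known_location": 20,
}


def trust_score(factors: dict[str, bool]) -> int:
    # Add the weight of every factor that is satisfied.
    return sum(w for name, w in TRUST_WEIGHTS.items() if factors.get(name, False))


def select_path(score: int) -> str:
    # The score drives what the system does with the device's data.
    if score >= 80:
        return "accept_data"
    if score >= 50:
        return "route_for_review"
    return "reject_data"


device = {"signature_valid": True, "firmware_current": False, "known_location": False}
print(trust_score(device), select_path(trust_score(device)))
```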

Describe risk-based security

Risk-based security means the system changes security behavior based on risk.

For example:

“In some examples, the system may generate a risk score associated with a request. The system may select a security action based on the risk score.”

Then:

“The security action may include allowing the request, denying the request, requesting additional authentication, limiting data access, masking data, routing the request for review, creating an audit record, or sending an alert.”

This is strong.

It can apply to fraud systems, identity systems, cloud platforms, AI tools, device networks, and enterprise workflows.

If your invention includes adaptive security, describe the inputs to the risk score and the actions it controls.

Describe multi-factor authentication when relevant

Multi-factor authentication can be mentioned broadly.

For example:

“In some examples, the system may require more than one authentication factor before allowing access to a protected function.”

Then:

“The authentication factors may include a password, token, device proof, biometric value, one-time code, hardware key, location value, or other factor.”

Then explain trigger:

“The system may require the additional authentication factor when a risk score exceeds a threshold, when a user requests a high-risk action, when a device changes, or when access is requested from an unusual location.”

This is better than simply saying MFA may be used.

It ties MFA to risk and workflow.
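The risk-triggered flow can be sketched in Python as follows. The `Request` fields, the point values, and the two thresholds are hypothetical. They only show how a risk score can select between allowing, asking for another factor, and denying.

```python
from dataclasses import dataclass

# Hypothetical set of actions treated as high risk.
HIGH_RISK_ACTIONS = {"change_security_setting", "approve_transaction"}


@dataclass
class Request:
    action: str
    new_device: bool
    unusual_location: bool


def risk_score(req: Request) -> int:
    score = 0
    if req.new_device:
        score += 40
    if req.unusual_location:
        score += 40
    if req.action in HIGH_RISK_ACTIONS:
        score += 30
    return score


def security_action(req: Request, step_up_at: int = 50, deny_at: int = 100) -> str:
    score = risk_score(req)
    if score >= deny_at:
        return "deny"
    if score >= step_up_at:
        return "require_additional_factor"
    return "allow"


print(security_action(Request("approve_transaction", new_device=True, unusual_location=False)))
```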

Describe step-up authentication

Step-up authentication happens when the system asks for more verification in certain situations.

A patent spec may say:

“In some examples, the system may allow a first action after a first authentication step and require an additional authentication step before allowing a second action.”

Then:

“The second action may include viewing sensitive data, changing a security setting, approving a transaction, sending data to an external system, or issuing a control command.”

This is useful.

It supports claims around adaptive access.

Describe session security

Sessions can matter in cloud and web systems.

For example:

“In some examples, the system may create a session after authenticating a user. The system may associate the session with a session identifier, user identifier, device identifier, expiration time, permission, or risk score.”

Then:

“The system may end the session, reduce permissions, or require re-authentication based on inactivity, location change, device change, risk score, policy, or user action.”

This supports session control features.

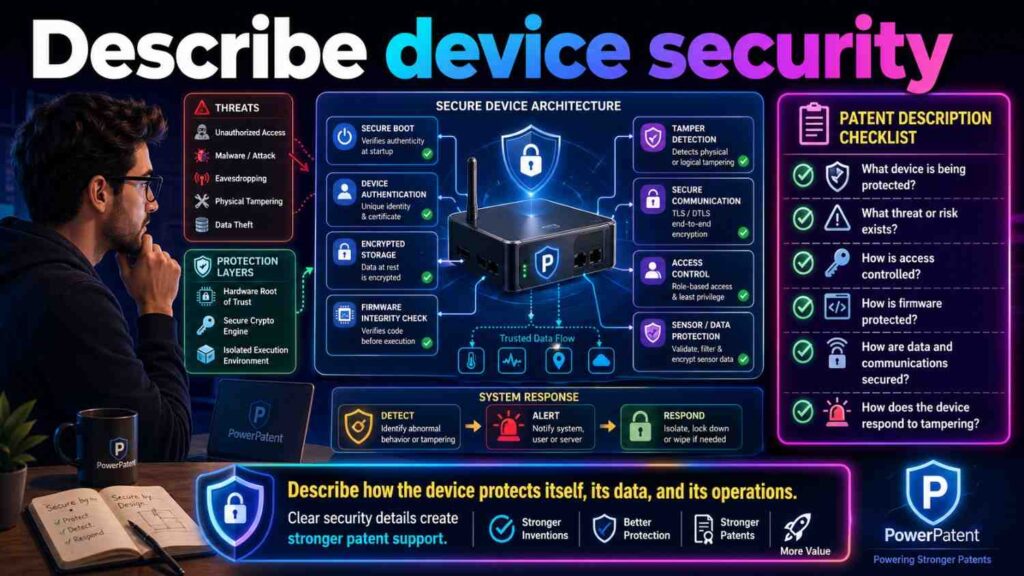

Describe device security

Devices, not just users, can be the subject of security checks.

A system may check device identity, firmware, sensor state, location, configuration, or tamper status.

For example:

“In some examples, the system may verify a device state before accepting data from the device or sending a command to the device.”

Then:

“The device state may include a firmware version, configuration value, sensor status, tamper indicator, location value, battery state, network state, or other device information.”

Then:

“When the device state does not satisfy a security condition, the system may reject data from the device, block a command, send an alert, request an update, or place the device in a restricted mode.”

This is very useful for IoT, robotics, medical devices, industrial systems, vehicles, and edge AI.

Describe firmware and software integrity

If a device or system checks software integrity, describe it.

For example:

“In some examples, the device may verify integrity of firmware or software before performing an operation.”

Then:

“The verification may include comparing a hash, checking a signature, verifying a version, checking a secure boot value, or comparing the firmware or software to an expected value.”

Then:

“If verification fails, the device may stop operation, enter a safe mode, reject commands, or send an alert.”

This gives strong support for device security.
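The "comparing a hash" option is easy to sketch. The Python below assumes the expected SHA-256 digest is already known from a trusted source. The function names and the two result strings are made up for the example.

```python
import hashlib
import hmac


def firmware_digest(image: bytes) -> str:
    return hashlib.sha256(image).hexdigest()


def verify_firmware(image: bytes, expected_digest: str) -> str:
    # Compare the computed hash with the expected value before operating.
    if hmac.compare_digest(firmware_digest(image), expected_digest):
        return "continue_operation"
    return "enter_safe_mode"


good_image = b"firmware v1.2"
expected = firmware_digest(good_image)  # assumed to come from a trusted source
print(verify_firmware(good_image, expected))
print(verify_firmware(b"firmware v1.2 (modified)", expected))
```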

Describe secure boot when relevant

Secure boot can be described simply.

“In some examples, a device may perform a secure boot process before running application software. During the secure boot process, the device may verify a signature or hash associated with firmware or software. If the verification succeeds, the device may continue operation. If the verification fails, the device may block operation, enter a safe mode, or send a security alert.”

That is clear.

If secure boot is only generic and not tied to the invention, do not overdo it.

Describe tamper detection

Tamper detection can be physical or digital.

Physical tampering may involve opening a housing, moving a sensor, cutting a cable, changing a seal, or altering a device.

Digital tampering may involve changed firmware, altered data, invalid signatures, unusual commands, or unexpected configuration changes.

A patent spec may say:

“In some examples, the system may detect tampering based on a tamper signal, sensor value, device state, housing state, firmware value, signature check, configuration change, or other tamper indicator.”

Then:

“In response to detecting tampering, the system may generate an alert, stop accepting data from the device, block a command, erase selected data, rotate a key, disable a function, or enter a safe mode.”

This is strong.

It connects detection to action.

Describe safe mode and restricted mode

Security features often change system mode.

For example:

“In some examples, the system may place a device, user account, service, or workflow in a restricted mode based on a security condition.”

Then:

“In restricted mode, the system may limit data access, block selected actions, require review, use local processing, disable external communication, reduce control permissions, or require additional verification.”

For physical systems:

“In safe mode, the device may stop motion, reduce power, ignore remote commands, use default settings, or perform another safety operation.”

This is useful for devices and control systems.

Describe command authorization

For systems that send commands, authorization is critical.

A patent spec may say:

“In some examples, before sending a command to a device, the system may determine whether the command is authorized.”

Then:

“The determination may be based on a user role, device state, command type, risk score, safety rule, location, time, policy, or prior approval.”

Then:

“If the command is authorized, the system may send the command. If the command is not authorized, the system may block the command, request review, or generate a warning.”

This is strong.

It supports safe control claims.

Describe approval workflows

Security often involves approval.

For example:

“In some examples, a requested action may require approval from one or more users before the action is performed.”

Then:

“The requested action may include data export, model update, rule change, device command, transaction approval, access grant, or deletion of data.”

Then:

“The system may provide the requested action to a review interface and receive an approval or rejection input.”

Then:

“The system may perform the requested action only after approval is received.”

This supports claims around secure workflows.

Describe dual control

Dual control means a protected action requires approval from at least two parties.

If relevant:

“In some examples, the system may require approvals from two or more users before performing a protected action.”

Then:

“The two or more users may have different roles, permissions, organizations, or approval levels.”

This is useful in finance, security, enterprise admin, industrial control, and medical workflows.
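A dual control check can be very small. This Python sketch makes two assumptions that are not in the text above: the requester cannot approve their own request, and the distinct-role requirement is optional. The function name and the sample users are invented.

```python
def may_perform(
    requester: str,
    approvals: list[tuple[str, str]],
    require_distinct_roles: bool = False,
) -> bool:
    """approvals is a list of (user_id, role) pairs."""
    # Assumption: ignore any approval the requester gave to their own request.
    independent = [(user, role) for user, role in approvals if user != requester]
    users = {user for user, _ in independent}
    roles = {role for _, role in independent}
    if len(users) < 2:
        return False  # fewer than two distinct approvers
    if require_distinct_roles:
        return len(roles) >= 2
    return True


approvals = [("bob", "admin"), ("carol", "security")]
print(may_perform("alice", approvals, require_distinct_roles=True))
```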

Describe audit records

Audit logs are one of the most important security features to describe well.

A weak description says:

“The system logs events.”

A stronger description says:

“In some examples, the system may create an audit record associated with a security event, user action, device action, data access event, model output, review result, command, or external system request.”

Then:

“The audit record may include a user identifier, device identifier, tenant identifier, time, action type, input data identifier, output identifier, permission value, model version, rule version, security result, location value, or other audit data.”

Then explain use:

“The audit record may be used to review a decision, detect misuse, explain an output, compare system behavior over time, or trigger a later security action.”

This is far more useful.

It supports claims around traceability and compliance.

Describe immutable or protected logs

Some systems protect logs from change.

A patent spec may say:

“In some examples, the system may store an audit record in a protected log. The protected log may prevent or detect later change to the audit record.”

Then:

“The protected log may use a hash chain, signature, append-only storage, access control, replication, trusted storage, or another protection technique.”

Then:

“If a change is detected, the system may generate an alert or mark the log as invalid.”

This can be valuable in security, finance, healthcare, AI governance, and industrial systems.
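To show what a hash chain does, here is a minimal Python sketch. Each record stores the hash of the record before it, so a later change to any record breaks the chain. The record layout and function names are assumptions for illustration. A real protected log would also need secure storage and access control.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append(log: list[dict], event: dict) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log: list[dict]) -> bool:
    """Return False if any record was changed after it was written."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append(log, {"user": "u1", "action": "view_output"})
append(log, {"user": "u2", "action": "approve_output"})
print(verify(log))
log[0]["event"]["action"] = "export_data"  # simulate tampering
print(verify(log))
```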

Describe alerting for security events

Security alerts should be tied to triggers and recipients.

For example:

“In some examples, the system may generate a security alert when a security condition is detected.”

Then:

“The security condition may include failed authentication, unauthorized access, unusual request volume, invalid signature, tamper detection, suspicious device state, policy violation, data export request, or blocked command.”

Then:

“The security alert may be provided to a user, administrator, security system, workflow system, device, or external service.”

Then:

“The security alert may include a risk score, event type, affected data, user identifier, device identifier, time, suggested action, or status.”

This gives strong support.

Describe incident response

Security features may trigger response steps.

For example:

“In some examples, the system may perform an incident response action when a security event is detected.”

Then:

“The incident response action may include blocking access, rotating a key, disabling a device, ending a session, requiring additional authentication, creating a ticket, notifying an administrator, preserving evidence, or changing a processing mode.”

This is useful.

It shows the security system does more than detect.

Describe data export controls

Data export can be sensitive.

A patent spec may say:

“In some examples, the system may control export of data based on a permission, policy, data type, destination, user role, or risk score.”

Then:

“Before exporting data, the system may mask selected fields, require approval, create an audit record, encrypt the exported data, or limit the export format.”

This supports secure data handling claims.

Describe deletion and retention security

Deletion can be part of security.

For example:

“In some examples, the system may delete, archive, anonymize, or retain data based on a retention rule.”

Then:

“The retention rule may be based on data type, user setting, tenant setting, policy, time period, event, or request.”

Then:

“The system may create an audit record when data is deleted, archived, or anonymized.”

This is useful for privacy and compliance features.

Describe anonymization

Anonymization removes or changes identity-related data.

A patent spec may say:

“In some examples, the system may anonymize data before using the data for model training, analytics, reporting, or sharing.”

Then:

“The anonymization may include removing identifiers, generalizing values, replacing values with tokens, aggregating records, adding noise, or applying another anonymization process.”

Then:

“The anonymized data may be used to generate a model, report, benchmark, or output without exposing the underlying identity data.”

This can support privacy-preserving AI claims.

Describe differential privacy only if relevant

Do not add technical privacy terms unless they fit.

If differential privacy is part of the invention, describe it plainly.

For example:

“In some examples, the system may add noise to data, a query result, or a model update before sharing the data, query result, or model update. The added noise may reduce the chance that information about a specific user or record can be inferred.”

This is simple and clear.

If the exact method matters, more detail is needed.
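
As one illustration of "more detail," here is a small sketch that adds Laplace noise to a count before sharing it. This is an assumed method, chosen only because it is common. The privacy parameter `epsilon`, the function names, and the assumption that one user changes the count by at most 1 are all choices made for this example.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Return a count query result with added noise.

    Assumes one user changes the count by at most 1 (sensitivity 1),
    so the noise scale is 1 / epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(7)  # seeded only so the sketch is repeatable
shared_value = noisy_count(true_count=120, epsilon=0.5, rng=rng)
```

A smaller `epsilon` adds more noise. If your invention depends on how the noise level is chosen, that choice is the kind of detail the spec should explain.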

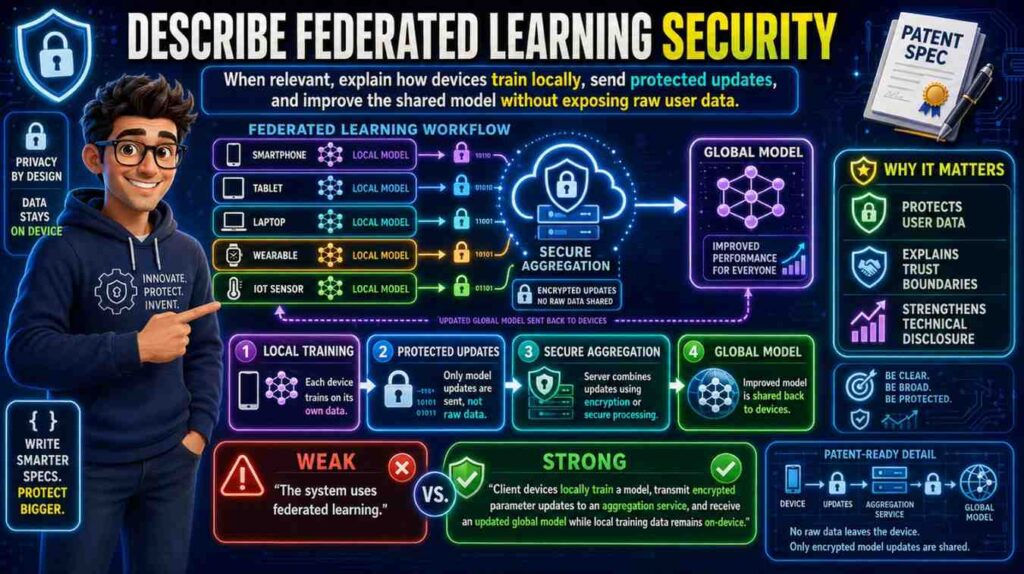

Describe federated learning security when relevant

Federated learning can involve local training and shared updates.

A patent spec may say:

“In some examples, a local device may update a local model based on local data and send a model update to a remote system without sending the local data.”

Then:

“The remote system may combine model updates from multiple local devices to update a global model.”

Security features may include:

“The system may encrypt, sign, mask, aggregate, or validate the model update before using the model update.”

This supports privacy-preserving training.
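
Here is a rough sketch of the "validate, then combine" step on the remote system. It assumes each model update is a plain list of numbers and that a simple average is used. The names `validate_update` and `aggregate`, and the `max_norm` limit, are hypothetical.

```python
import math


def validate_update(update: list, max_norm: float = 10.0) -> bool:
    """Reject updates with non-finite values or an unusually large norm."""
    if not all(math.isfinite(v) for v in update):
        return False
    return math.sqrt(sum(v * v for v in update)) <= max_norm


def aggregate(global_model: list, updates: list) -> list:
    """Average the valid updates and apply the average to the global model."""
    valid = [u for u in updates if validate_update(u)]
    if not valid:
        return list(global_model)  # nothing usable, keep the current model
    averaged = [sum(values) / len(valid) for values in zip(*valid)]
    return [w + d for w, d in zip(global_model, averaged)]


global_model = [0.0, 0.0]
updates = [[1.0, 2.0], [3.0, 4.0], [float("nan"), 0.0]]  # third update is invalid
new_model = aggregate(global_model, updates)
```

Only the updates reach the remote system in this sketch. The local data never leaves the device, which is the point the sample language is making.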

Describe secure model use

AI systems need security too.

A model may be protected from unauthorized access, bad inputs, prompt injection, data leakage, model theft, or unsafe outputs.

A patent spec may say:

“In some examples, the system may control access to a model, model input, model output, prompt template, context data, model weights, or model configuration.”

Then:

“The system may determine whether a user, device, or service is authorized before providing access.”

For prompt or context security:

“In some examples, the system may check whether context data is permitted for use in a model input before including the context data in the model input.”

This is especially important for AI tools that handle private company data.

Describe prompt injection defenses

For AI products, prompt injection and malicious input can matter.

A patent spec may say:

“In some examples, the system may analyze input data to detect an instruction or content that may cause a model to ignore a rule, disclose protected data, or perform an unauthorized action.”

Then:

“When such content is detected, the system may remove the content, modify the model input, lower a trust score, route the request for review, block the request, or generate a warning.”

This is clear.

It avoids jargon while explaining the security behavior.

Describe context access control for AI

AI systems often retrieve context data.

A security-aware spec may say:

“In some examples, the system may select context data for a model input based on a user request. Before including selected context data in the model input, the system may determine whether the user or model service is permitted to access the selected context data.”

Then:

“If access is permitted, the system may include the selected context data in the model input. If access is not permitted, the system may omit, mask, summarize, or replace the selected context data.”

This is strong and practical.

It supports claims around secure AI context selection.
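
The check-before-include flow can be sketched in a few lines. This is an assumed design, not a required one. The `ContextItem` type, the role names, and the `MASK` placeholder are invented for the example.

```python
from dataclasses import dataclass

MASK = "[context withheld]"  # hypothetical placeholder for omitted context


@dataclass
class ContextItem:
    doc_id: str
    text: str
    allowed_roles: frozenset


def build_model_input(request: str, user_role: str, selected: list) -> str:
    """Include each selected context item only if the user's role permits it."""
    parts = []
    for item in selected:
        if user_role in item.allowed_roles:
            parts.append(item.text)
        else:
            parts.append(MASK)  # replace instead of exposing the text
    return request + "\n\nContext:\n" + "\n".join(parts)


items = [
    ContextItem("d1", "Q3 revenue was flat.", frozenset({"finance", "admin"})),
    ContextItem("d2", "Team offsite is in May.", frozenset({"finance", "engineer", "admin"})),
]
prompt = build_model_input("Summarize recent updates.", "engineer", items)
```

The key point for the spec is the order of steps. The permission check happens before the context data reaches the model input.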

Describe output filtering

AI and automation systems may filter outputs.

For example:

“In some examples, the system may evaluate an output before providing the output to a user or external system.”

Then:

“The evaluation may detect protected data, unsafe instructions, policy violations, low confidence, unsupported statements, or other output conditions.”

Then:

“When an output condition is detected, the system may suppress the output, modify the output, request review, generate a warning, or provide a limited output.”

This describes security and safety in action.

Describe model output permissions

Some users may see full outputs. Others may see limited outputs.

For example:

“In some examples, the system may generate different output versions based on a user permission or destination. A first output version may include detailed supporting data, and a second output version may omit or mask at least part of the supporting data.”

This is useful for enterprise AI.

It supports role-based output generation.

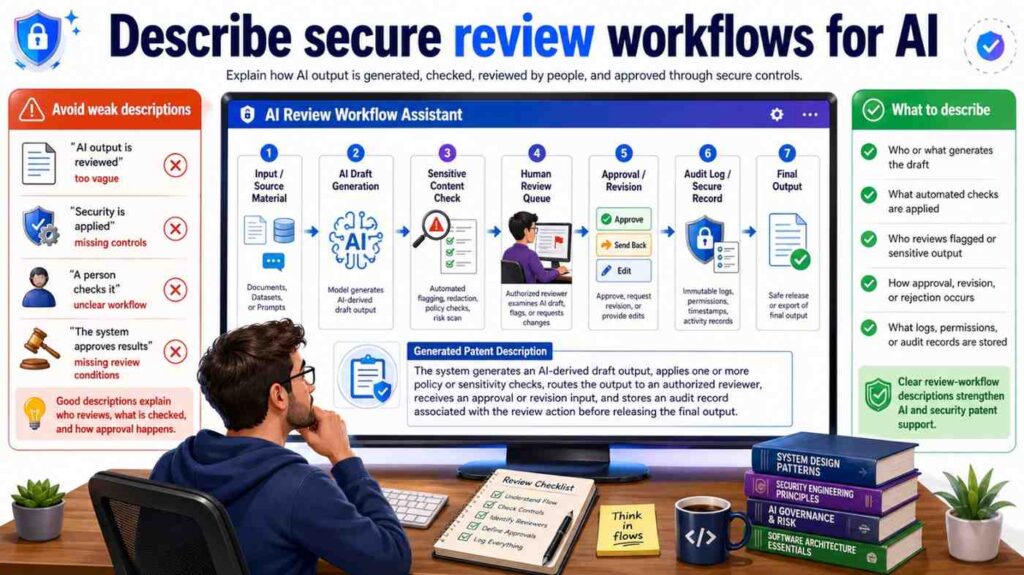

Describe secure review workflows for AI

AI review often involves security.

For example:

“In some examples, the system may route a model output for review when the model output includes protected data, low confidence, a policy conflict, or a high-risk action.”

Then:

“The review interface may present the model output and one or more security indicators. The system may receive a review result and provide, modify, suppress, or store the model output based on the review result.”

This connects AI security to human review.

Describe fraud detection security

For fintech or transaction systems, fraud detection may be central.

A patent spec may say:

“In some examples, the system may generate a fraud risk score associated with a transaction request.”

Then:

“The fraud risk score may be based on transaction data, account data, device data, location data, user behavior, network data, historical data, or other risk factors.”

Then:

“The system may approve, block, delay, route for review, request additional authentication, or generate an alert based on the fraud risk score.”

This is much stronger than saying “the system detects fraud.”
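
To make the score-then-action flow concrete, here is a simple sketch. The risk factors, weights, and thresholds are hypothetical. A real system might use a trained model instead of fixed weights.

```python
# Hypothetical risk factors and weights, for illustration only.
WEIGHTS = {
    "new_device": 0.30,
    "unusual_location": 0.25,
    "high_amount": 0.35,
    "odd_hour": 0.10,
}


def fraud_risk_score(factors: dict) -> float:
    """Sum the weights of the risk factors present in the transaction request."""
    return sum(weight for name, weight in WEIGHTS.items() if factors.get(name))


def decide(score: float, review_at: float = 0.4, block_at: float = 0.7) -> str:
    """Map a fraud risk score to an action."""
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "route_for_review"
    return "approve"


request_factors = {"new_device": True, "high_amount": True}
action = decide(fraud_risk_score(request_factors))
```

Here the request has two risk factors, so it is routed for review instead of being approved or blocked.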

Describe transaction security

Transactions can require special checks.

For example:

“In some examples, before approving a transaction, the system may verify a user identity, device identity, account permission, transaction amount, destination, location, time, risk score, or policy.”

Then:

“If the transaction does not satisfy a security condition, the system may block the transaction, request review, require additional authentication, or create an audit record.”

This supports secure transaction claims.

Describe source code security

Developer tools often handle source code.

A patent spec may say:

“In some examples, the system may control access to source code data based on a user permission, repository permission, organization policy, file type, branch, or project setting.”

Then:

“Before sending source code data to a model service, the system may mask secrets, remove sensitive files, check access permissions, or select only permitted code context.”

Then:

“The system may create an audit record identifying code portions included in a model input.”

This is strong for AI developer tools.

Describe secret detection

Source code and cloud systems often contain secrets.

A patent spec may say:

“In some examples, the system may detect a secret in input data before storing, displaying, or sending the input data.”

Then:

“The secret may include an API key, token, password, certificate, private key, connection string, or other credential.”

Then:

“When a secret is detected, the system may mask the secret, remove the secret, block the request, rotate the secret, notify a user, or create a security record.”

This is practical and valuable.
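
A short sketch of "detect, then mask" might look like this. The two patterns are illustrative assumptions and would miss many real secrets. Production tools use much larger pattern sets and other checks.

```python
import re

# Illustrative patterns only; a real detector would use many more.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),  # shape of an AWS access key ID
    re.compile(r"(?i)(password|token|api_key)\s*[=:]\s*\S+"),
]


def mask_secrets(text: str) -> tuple:
    """Replace detected secrets and return the masked text and a match count."""
    found = 0
    for pattern in SECRET_PATTERNS:
        text, count = pattern.subn("[REDACTED]", text)
        found += count
    return text, found


masked, secret_count = mask_secrets(
    "db password = hunter2 and key AKIAABCDEFGHIJKLMNOP"
)
```

When `secret_count` is greater than zero, the system could also block the request, notify a user, or create a security record, as the sample language describes.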

Describe medical data security

Healthcare systems often handle sensitive data.

A patent spec may say:

“In some examples, the system may restrict access to patient data based on a user role, care team assignment, patient permission, data type, policy, or other access condition.”

Then:

“The system may mask patient identifiers before providing data to a model service or external system.”

Then:

“The system may create an audit record when patient data is viewed, modified, exported, or used to generate an output.”

This ties security to medical workflows.

Describe industrial system security

Industrial systems may need to prevent unsafe commands.

A patent spec may say:

“In some examples, the system may verify a control command before sending the control command to an industrial device.”

Then:

“The verification may be based on a user role, device state, safety rule, operating mode, command type, risk score, or prior approval.”

Then:

“When verification fails, the system may block the command, alert an operator, or place the device in a safe mode.”

This is strong for industrial and robotics inventions.

Describe vehicle or robot command security

For vehicles and robots:

“In some examples, the system may authenticate a command source before accepting a control command.”

Then:

“The system may determine whether the control command is allowed based on vehicle state, robot state, environment data, safety zone, user permission, or risk condition.”

Then:

“If the control command is not allowed, the system may ignore the command, modify the command, request approval, or switch to a safe control mode.”

This is clear and useful.

Describe cloud security features

Cloud products may use many security steps.

A strong spec might describe:

Secure API requests.

Tenant isolation.

Role-based access.

Encrypted storage.

Encrypted transfer.

Audit logging.

Data masking.

Policy-based routing.

Incident response.

Key rotation.

But avoid listing them without context.

For example:

“In some examples, the system may select a data store based on a tenant identifier and may restrict access to the data store based on a permission associated with the tenant identifier.”

That is better than “the system uses cloud security.”

Describe tenant isolation

Tenant isolation is important for SaaS.

A patent spec may say:

“In some examples, the system may associate data with a tenant identifier. The system may use the tenant identifier to control access to the data, select configuration data, select a processing path, select an encryption key, select a model, or select a data store.”

Then:

“In some examples, data associated with different tenant identifiers may be stored in separate data stores or logically separated within a shared data store.”

This supports multi-tenant security claims.
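
Here is a minimal sketch of logical separation inside a shared data store. It assumes every row carries a tenant identifier and every read is filtered by the caller's tenant. The `TenantStore` class is hypothetical.

```python
class TenantStore:
    """A shared store where each row is tagged with a tenant identifier."""

    def __init__(self):
        self._rows = []

    def put(self, tenant_id: str, key: str, value: str) -> None:
        self._rows.append({"tenant_id": tenant_id, "key": key, "value": value})

    def get(self, caller_tenant_id: str, key: str):
        """Return a value only if the row belongs to the caller's tenant."""
        for row in self._rows:
            if row["tenant_id"] == caller_tenant_id and row["key"] == key:
                return row["value"]
        return None  # other tenants' rows are never visible


store = TenantStore()
store.put("tenant-a", "plan", "enterprise")
store.put("tenant-b", "plan", "starter")
```

Both tenants use the same key, but each one can read only its own row.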

Describe policy engines

A policy engine may decide whether an action is allowed.

A patent spec may say:

“In some examples, the system may apply one or more policies before allowing access to data or performing an action.”

Then:

“The policy may be based on user role, data type, device state, location, time, request type, risk score, tenant setting, or other condition.”

Then:

“The system may allow, deny, modify, delay, route, mask, or log the action based on the policy.”

This is strong.

It explains policy-based security without overcomplicating it.

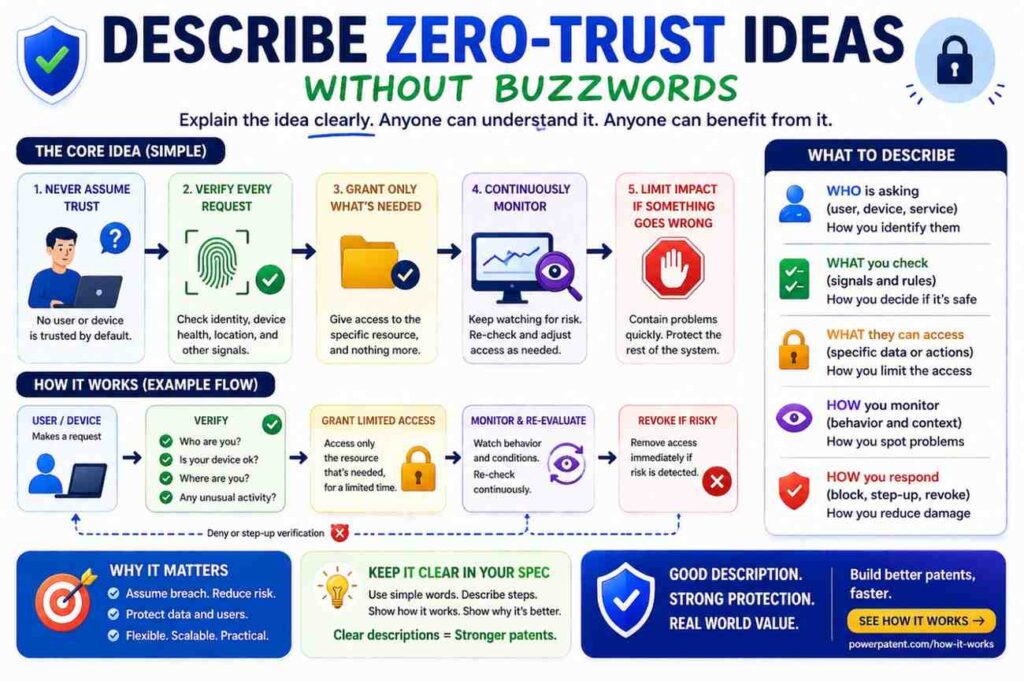

Describe zero-trust ideas without buzzwords

You do not need to say “zero trust” unless the term is useful.

Describe the behavior.

For example:

“In some examples, the system may verify a user, device, service, and request before allowing access to protected data, even when the request is received from a known network.”

That is clear.

Then:

“The verification may be repeated when the request type, location, device, risk score, or session state changes.”

This captures the idea in simple terms.

Describe least-privilege access

Again, describe the behavior.

“In some examples, the system may grant a user or service access only to selected data or functions needed to perform a requested operation.”

Then:

“The selected data or functions may be determined based on role, request type, workflow state, policy, or time.”

This supports least-privilege features without needing jargon.

Describe security state changes

A system may change state based on security events.

For example:

“The system may change an account from an active state to a restricted state based on a security condition.”

Or:

“The system may change a device from a trusted state to an untrusted state when a signature check fails.”

Or:

“The system may change a workflow from an automatic approval state to a review-required state when a risk score exceeds a threshold.”

These state changes are important.

They show how security affects the system.
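
The device example can be sketched as a small state change driven by a signature check. This assumes a shared-key HMAC signature, which is just one possible signature scheme. The key, message, and function names are made up.

```python
import hashlib
import hmac
from enum import Enum


class DeviceState(Enum):
    TRUSTED = "trusted"
    UNTRUSTED = "untrusted"


def sign(key: bytes, message: bytes) -> str:
    """Compute an HMAC-SHA256 signature for a message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def next_state(state: DeviceState, key: bytes, message: bytes, signature: str) -> DeviceState:
    """Move the device to the untrusted state when the signature check fails."""
    expected = sign(key, message)
    if not hmac.compare_digest(expected, signature):
        return DeviceState.UNTRUSTED
    return state


device_key = b"example-shared-key"  # hypothetical key for the sketch
reading = b"temp=21.5"
good_signature = sign(device_key, reading)
state_after_good = next_state(DeviceState.TRUSTED, device_key, reading, good_signature)
state_after_bad = next_state(DeviceState.TRUSTED, device_key, reading, "0" * 64)
```

In this sketch a device that is already untrusted stays untrusted even after a valid signature. Whether and how a device regains trust is a design choice worth describing in the spec.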

Describe security scoring over time

Some systems update risk or trust over time.

For example:

“In some examples, the system may update a trust score associated with a user, device, service, or data source based on later activity.”

Then:

“The later activity may include successful authentication, failed authentication, valid signatures, invalid signatures, unusual request volume, policy violations, review results, or confirmed security events.”

Then:

“The updated trust score may affect future access decisions or processing paths.”

This is strong.

It supports adaptive security.
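
As a rough sketch, a trust score could move up or down with each later event and then drive the access decision. The event names, score changes, and thresholds below are hypothetical values picked for the example.

```python
# Hypothetical score changes per event type.
EVENT_DELTAS = {
    "auth_success": 0.05,
    "valid_signature": 0.05,
    "auth_failure": -0.15,
    "invalid_signature": -0.30,
    "policy_violation": -0.25,
}


def update_trust(score: float, event: str) -> float:
    """Adjust the trust score for an event and keep it between 0 and 1."""
    return min(1.0, max(0.0, score + EVENT_DELTAS.get(event, 0.0)))


def access_path(score: float, allow_at: float = 0.7, step_up_at: float = 0.4) -> str:
    """Choose a processing path based on the current trust score."""
    if score >= allow_at:
        return "allow"
    if score >= step_up_at:
        return "require_additional_authentication"
    return "deny"


score = 0.8
for event in ["auth_failure", "invalid_signature"]:
    score = update_trust(score, event)
decision = access_path(score)
```

The same device starts with an "allow" decision and ends with a "deny" decision after two bad events. That change over time is what the sample language captures.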

Describe learning from security events

If a system learns from security events, explain it.

For example:

“In some examples, the system may store security events as feedback. The system may use the security events to update a rule, threshold, model, risk score, trust score, or processing path.”

Then:

“In one example, when a request is later confirmed as malicious, the system may update a model or rule used to evaluate similar requests.”

This supports AI security and fraud detection claims.

Describe false positives and review

Security systems often create false positives.

A review workflow can help.

For example:

“In some examples, when the system detects a security condition, the system may route the event for review instead of automatically blocking the event.”

Then:

“The review result may confirm the security condition, reject the security condition, update a status, update a model, change a threshold, or create an audit record.”

This is useful.

It supports human-in-the-loop security.

Describe security and confidence

Security outputs can include confidence.

For example:

“In some examples, the system may generate a confidence value associated with a security output.”

Then:

“The confidence value may indicate a level of certainty that a request, user, device, event, output, or data source is trusted or risky.”

Then:

“The system may use the confidence value to allow an action, block an action, request review, require additional authentication, or change a display.”

This connects security to decision logic.

Describe security in user interfaces

A user interface may show security status, warnings, access controls, approvals, or audit information.

For example:

“In some examples, the user interface may display a security indicator associated with an output, request, device, or user.”

Then:

“The security indicator may include a trust score, risk score, warning, status label, permission indicator, review status, or other security information.”

Then:

“The user interface may receive user input approving, rejecting, escalating, or commenting on a security event.”

This supports UI-security claims.

Describe security warnings

A warning is stronger when its trigger and action are clear.

For example:

“The user interface may display a warning when a user requests access to sensitive data from an untrusted device.”

Or:

“The user interface may display a warning when a command may cause a device to enter an unsafe state.”

Or:

“The user interface may display a warning when an AI output may include protected data.”

Then:

“The system may require confirmation or additional authorization before continuing.”

This ties warning to control.

Describe security dashboards carefully

If your product has a security dashboard, do not make the dashboard the whole invention unless it is.

A better description:

“In some examples, the system may provide security information through a user interface, such as a dashboard.”

Then:

“The security information may include events, risk scores, trust scores, alerts, affected users, affected devices, suggested actions, review status, audit records, or other security data.”

Then:

“In other examples, the security information may be provided through an API, report, message, notification, or external system.”

This keeps the scope flexible.

Describe security APIs

Security systems often expose APIs.

For example:

“The system may receive a security event through an API request.”

Or:

“The system may provide a risk score through an API response.”

Or:

“The system may send a security alert to an external system through a webhook.”

Then describe the data:

“The API response may include an event identifier, risk score, trust score, recommended action, status, or explanation.”

This supports integration-based security products.

Describe explanations for security decisions

Security decisions may need explanations.

For example:

“In some examples, the system may generate an explanation for a security decision.”

Then:

“The explanation may identify one or more factors that contributed to the security decision, such as device state, location, request type, failed authentication, policy match, unusual behavior, invalid signature, or prior event.”

Then:

“The explanation may be displayed to a user, stored in an audit record, or sent to an external system.”

This supports explainable security and AI security claims.

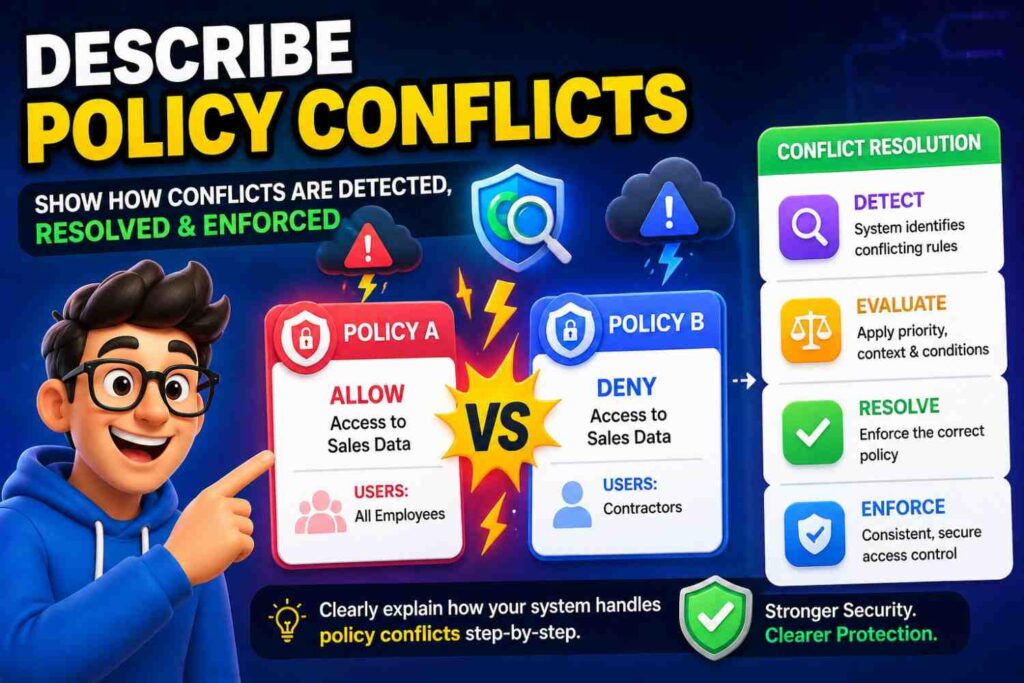

Describe policy conflicts

Sometimes policies conflict.

For example, one policy may allow access while another denies it.

A spec may say:

“In some examples, when multiple policies apply to a request, the system may resolve a policy conflict based on a priority rule, risk score, user role, data type, tenant setting, or other conflict rule.”

Then:

“The system may apply the selected policy to allow, deny, modify, or route the request.”

This can support advanced access control claims.
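
One way to picture a conflict rule is a priority number on each policy, with "deny" winning any tie. This is an assumed rule for illustration. The `Policy` type and the policy names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    name: str
    effect: str  # "allow" or "deny"
    priority: int  # higher number wins


def resolve(policies: list) -> str:
    """Pick the effect of the highest-priority policy; deny wins ties."""
    if not policies:
        return "deny"  # no matching policy, so default to deny
    top = max(p.priority for p in policies)
    winners = [p for p in policies if p.priority == top]
    return "deny" if any(p.effect == "deny" for p in winners) else "allow"


matching = [
    Policy("team-read-access", "allow", 1),
    Policy("legal-hold", "deny", 5),
]
result = resolve(matching)
```

A spec can describe the conflict rule at this level of detail and still leave room for other rules, such as risk score or tenant setting.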

Describe security for data sharing

Data sharing needs controls.

For example:

“In some examples, before sharing data with an external system, the system may determine whether the external system is authorized to receive the data.”

Then:

“The system may mask, encrypt, summarize, filter, or remove at least part of the data before sharing the data.”

Then:

“The system may store an audit record associated with the sharing event.”

This is strong.

It applies to many enterprise products.

Describe security for model training data

AI training data can include sensitive records.

A patent spec may say:

“In some examples, before using data for model training, the system may determine whether the data is permitted for training use.”

Then:

“The determination may be based on a user setting, tenant setting, data type, consent value, policy, or data source.”

Then:

“If the data is not permitted for training use, the system may exclude the data, mask the data, use a summary of the data, or store a record indicating exclusion.”

This is powerful for AI platforms.

Describe security for feedback data

Feedback may also be sensitive.

For example:

“In some examples, the system may store feedback data separately from input data or may control access to feedback data based on permission or policy.”

Then:

“The feedback data may be used to update a model, rule, threshold, or training record only when a training permission is satisfied.”

This supports secure learning systems.

Describe security for embeddings

Embeddings generated from sensitive data can sometimes reveal information about that data.

If relevant, describe protections.

For example:

“In some examples, the system may control access to embeddings generated from sensitive data.”

Then:

“The system may store embeddings in a protected data store, associate embeddings with a tenant identifier, encrypt embeddings, delete embeddings based on a retention rule, or restrict use of embeddings based on a policy.”

This can support AI security claims.

Describe security for logs

Logs may contain sensitive data.

A spec may say:

“In some examples, the system may remove, mask, or tokenize sensitive data before storing the data in a log.”

Then:

“The system may control access to logs based on a role, permission, tenant identifier, or policy.”

This is useful for cloud platforms and AI systems.

Describe security for admin settings

Admin settings are powerful.

A patent spec may say:

“In some examples, the system may require an authorization check before allowing a user to change a security setting, threshold, model, rule, data source, or integration.”

Then:

“The system may create an audit record when the setting is changed.”

Then:

“The changed setting may be applied to later requests, outputs, workflows, or access decisions.”

This supports secure configuration claims.

Describe security for integrations

External integrations can create risk.

For example:

“In some examples, before sending data to an external system, the system may verify an integration configuration associated with the external system.”

Then:

“The integration configuration may include an endpoint, credential, permission, data type, tenant identifier, or policy.”

Then:

“If the integration configuration is not valid, the system may block the transfer, request reconfiguration, or send an alert.”

This is strong for SaaS and API products.

Describe inbound data trust

A system may receive data from many sources. Not all sources are trusted.

A patent spec may say:

“In some examples, the system may assign a trust level to a data source.”

Then:

“The trust level may be based on source identity, authentication status, signature verification, past accuracy, review results, data quality, or policy.”

Then:

“The system may process data differently based on the trust level.”

For example:

“Data from a lower-trust source may be routed for review, given less weight, or excluded from model training.”

This is a strong security concept.

Describe data provenance

Provenance means where data came from and what happened to it.

A patent spec may say:

“In some examples, the system may store provenance data associated with input data.”

Then:

“The provenance data may identify a source, time, device, user, system, transformation, signature, model version, or processing step associated with the input data.”

Then:

“The system may use the provenance data to verify trust, generate an explanation, create an audit record, or determine whether the input data may be used.”

This is valuable for AI, data platforms, healthcare, finance, and security tools.

Describe chain of custody

For sensitive data or evidence, chain of custody may matter.

A spec may say:

“In some examples, the system may store a chain-of-custody record that identifies one or more users, devices, services, or systems that accessed or modified data.”

Then:

“The chain-of-custody record may include times, actions, signatures, hashes, locations, or status values.”

Then:

“The system may use the chain-of-custody record to verify integrity or support later review.”

This supports audit-heavy inventions.

Describe integrity checks

Integrity checks verify data has not changed unexpectedly.

For example:

“In some examples, the system may generate a first hash value associated with data and may later generate a second hash value associated with the data. The system may compare the first hash value to the second hash value to determine whether the data has changed.”

Then:

“When the hash values do not match, the system may mark the data as changed, reject the data, generate an alert, or route the data for review.”

This is clear and useful.
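
In code, the comparison is short. This sketch assumes SHA-256 as the hash function, and the function names are invented for the example.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Generate a hash value for the data."""
    return hashlib.sha256(data).hexdigest()


def check_integrity(data: bytes, stored_hash: str) -> str:
    """Compare a fresh hash of the data to the hash stored earlier."""
    if fingerprint(data) == stored_hash:
        return "unchanged"
    return "changed"  # caller may reject, alert, or route for review


original = b"dose=5mg"
stored_hash = fingerprint(original)
status_same = check_integrity(b"dose=5mg", stored_hash)
status_edited = check_integrity(b"dose=50mg", stored_hash)
```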

Describe version integrity

For AI and software systems:

“In some examples, the system may verify a model version, rule version, software version, or configuration version before using the model, rule, software, or configuration.”

Then:

“If the version is not approved, outdated, revoked, or inconsistent with a policy, the system may block use, request an update, or select another version.”

This supports safe deployment and secure updates.

Describe security updates

Security updates can matter.

For example:

“In some examples, the system may send a security update to a device or service in response to a detected vulnerability, policy change, key rotation, or security event.”

Then:

“The device or service may apply the security update before performing a protected operation.”

This is useful for connected devices and cloud systems.

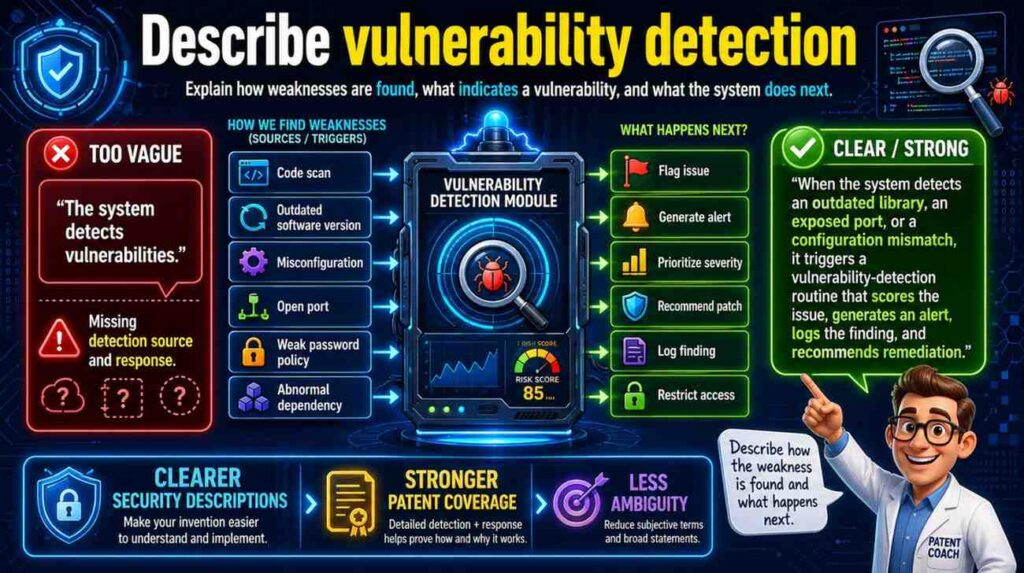

Describe vulnerability detection

If your system detects security weaknesses, describe how.

For example:

“In some examples, the system may analyze configuration data, code data, dependency data, network data, device data, or access data to detect a vulnerability.”

Then:

“The vulnerability may include an exposed secret, outdated dependency, weak permission, open network port, insecure configuration, untrusted device, or policy violation.”

Then:

“The system may generate a vulnerability output, rank vulnerabilities, suggest an action, create a task, or block a deployment.”

This is strong for cybersecurity tools.

Describe secure deployment

For developer tools and cloud systems:

“In some examples, before deploying software, a model, or a configuration, the system may perform a security check.”

Then:

“The security check may include scanning for secrets, verifying permissions, checking dependencies, validating signatures, checking policy rules, or reviewing a risk score.”

Then:

“If the security check fails, the system may block deployment, request approval, create a task, or generate a warning.”

This can support DevSecOps patent claims.

Describe secrets management

Secrets include keys, tokens, passwords, and certificates.

A spec may say:

“In some examples, the system may store a secret in a protected secret store and provide access to the secret only when an access condition is satisfied.”

Then:

“The access condition may include a user role, service identity, device identity, policy, time, request type, or approval.”

Then:

“The system may rotate, revoke, mask, or audit access to the secret.”

This supports secure cloud architecture.

Describe security for data pipelines

Data pipelines often move sensitive data.

A patent spec may say:

“In some examples, the system may apply a security operation at one or more stages of a data pipeline.”

Then:

“The security operation may include authentication, authorization, masking, encryption, validation, logging, policy checking, or anomaly detection.”

Then:

“The stage may include data ingestion, preprocessing, storage, model input generation, output generation, sharing, or deletion.”

This ties security to pipeline architecture.

Describe security at ingestion

Ingestion is when data enters the system.

For example:

“In some examples, the system may perform an ingestion security check before accepting input data.”

Then:

“The ingestion security check may verify source identity, message signature, data format, permission, data type, malware status, schema, or policy.”

Then:

“If the input data fails the ingestion security check, the system may reject the input data, quarantine the input data, route it for review, or store a security record.”

This is strong.

Describe quarantine

Quarantine means holding risky data or files apart.

A patent spec may say:

“In some examples, the system may place data in a quarantine state when the data satisfies a security condition.”

Then:

“The security condition may include invalid signature, malware detection, unexpected format, policy violation, unknown source, or suspicious content.”

Then:

“While in the quarantine state, the data may be blocked from use in processing, model training, output generation, or sharing until the data is approved or cleared.”

This is useful for data security and AI training pipelines.

Describe malware or unsafe content scanning

If relevant:

“In some examples, the system may scan an uploaded file, message, code portion, document, or other input for unsafe content.”

Then:

“When unsafe content is detected, the system may block the input, remove the unsafe content, quarantine the input, create an alert, or request review.”

This supports secure upload workflows.

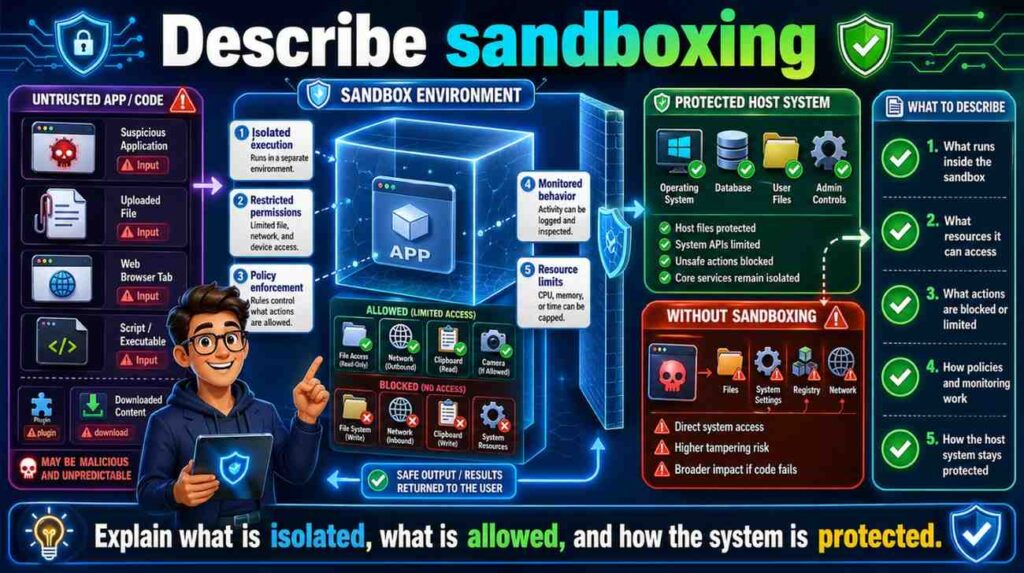

Describe sandboxing

Sandboxing can be useful for code, documents, models, or plugins.

A patent spec may say:

“In some examples, the system may execute code, open a file, test a model, or run a plugin in a restricted environment.”

Then:

“The restricted environment may limit network access, file access, memory access, system calls, data access, or execution time.”

Then:

“The system may allow or block later use based on a result from the restricted environment.”

This is strong for developer tools and security platforms.

Describe plugin security

Modern systems often use plugins or extensions.

A spec may say:

“In some examples, before allowing a plugin to access data or perform an action, the system may determine whether the plugin has permission for the data or action.”

Then:

“The system may limit plugin access based on a scope, user approval, tenant setting, policy, risk score, or plugin identity.”

This is useful for AI agents and app platforms.

Describe agent security

AI agents can take actions. That creates security risk.

A patent spec may say:

“In some examples, an agent may generate a proposed action based on input data. Before the proposed action is performed, the system may evaluate the proposed action against a policy, permission, risk score, user approval, or safety condition.”

Then:

“If the proposed action is allowed, the system may perform the action or send an action request. If the proposed action is not allowed, the system may block the action, request review, or generate a warning.”

This is very relevant for AI automation.

It keeps the description simple but powerful.
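
Here is a small sketch of the evaluate-before-perform step. The action names and the split between normal and high-risk actions are assumptions made for this example.

```python
# Hypothetical action names for illustration.
KNOWN_ACTIONS = {"read_record", "draft_email", "send_external", "delete_data"}
HIGH_RISK_ACTIONS = {"send_external", "delete_data"}


def evaluate_proposed_action(action: str, granted: set, human_approved: bool = False) -> str:
    """Decide what to do with an action proposed by an agent."""
    if action not in KNOWN_ACTIONS or action not in granted:
        return "block"
    if action in HIGH_RISK_ACTIONS and not human_approved:
        return "request_review"
    return "perform"


agent_permissions = {"read_record", "draft_email", "send_external"}
outcome_read = evaluate_proposed_action("read_record", agent_permissions)
outcome_send = evaluate_proposed_action("send_external", agent_permissions)
outcome_delete = evaluate_proposed_action("delete_data", agent_permissions)
```

The agent only proposes. A separate check decides whether the action runs, waits for review, or is blocked.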

Describe human approval for AI actions

For AI agents and automation:

“In some examples, the system may require human approval before performing a high-risk action generated by an AI agent.”

Then:

“The high-risk action may include sending data to an external system, changing a setting, deleting data, approving a transaction, issuing a command, or modifying a record.”

Then:

“The system may present the proposed action, supporting data, and risk information through a review interface.”

This supports safe AI workflows.

Describe data loss prevention

Data loss prevention means stopping sensitive data from leaving the system without permission.

A patent spec may say:

“In some examples, the system may detect protected data in an output, message, file, model input, model output, or API response before the data is sent to a destination.”

Then:

“When protected data is detected, the system may block the transfer, mask the protected data, request approval, route for review, or send a security alert.”

This is strong and broadly useful.

Describe location-based security

Location can affect access.

For example:

“In some examples, the system may determine a location associated with a user, device, request, or data. The system may allow, deny, or modify access based on the location.”

Then:

“The location may be used to select a processing region, apply a data residency rule, require additional authentication, or block a request.”

This supports location-aware security.

Describe time-based security

Time can also matter.

For example:

“In some examples, the system may allow access or actions only during a permitted time period.”

Or:

“The system may require re-authentication after a session time period expires.”

Or:

“The system may rotate a key after a time period.”

These details can support security claims when tied to the invention.

Describe security thresholds as flexible

Security thresholds are common.

A risk score above a threshold triggers review. A number of failed logins triggers lockout. A request rate over a threshold triggers rate limiting.

Avoid fixed numbers unless needed.

For example:

“The system may trigger a security action when a risk score satisfies a threshold.”

Then:

“The threshold may be fixed, user-defined, tenant-defined, learned, adjusted over time, or selected based on context.”

This keeps the spec flexible.
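
In code, a flexible threshold is simply a value that is looked up rather than hard-coded. The sketch below is one possible reading. It assumes a tenant-defined threshold overrides a default, and that "satisfies" means "greater than or equal to." The function names and the default value are hypothetical.

```python
DEFAULT_THRESHOLD = 0.8  # assumed default; a spec would not need to fix this number


def resolve_threshold(tenant_thresholds, tenant_id, default=DEFAULT_THRESHOLD):
    """Use the tenant-defined threshold when one exists, else the default."""
    return tenant_thresholds.get(tenant_id, default)


def should_trigger(risk_score, threshold):
    """Here a risk score 'satisfies' the threshold when it meets or exceeds it."""
    return risk_score >= threshold
```

Because the threshold is resolved at run time, the same security action can cover fixed, tenant-defined, or context-selected values.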

Describe adaptive thresholds

Adaptive thresholds can be valuable.

For example:

“In some examples, the system may adjust a security threshold based on past security events, user behavior, device behavior, system load, feedback, or a policy change.”

Then:

“The adjusted threshold may be used to trigger later authentication, review, blocking, or alerting.”

This supports adaptive security claims.
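
One simple way to picture an adaptive threshold is a small adjustment after each reviewed event. The sketch below is an assumption, not the only approach. It raises the threshold after a false positive, lowers it after a confirmed incident, and keeps it inside fixed bounds. The name `adjust_threshold`, the step size, and the bounds are hypothetical.

```python
def adjust_threshold(threshold, review_result, step=0.05, low=0.1, high=0.95):
    """Return an adjusted threshold based on feedback from a past security event."""
    if review_result == "false_positive":
        # The system was too strict, so require a higher score next time.
        threshold += step
    elif review_result == "confirmed":
        # A real incident was found, so trigger earlier next time.
        threshold -= step
    # Any other result leaves the threshold unchanged.
    # Keep the threshold inside the allowed range.
    return max(low, min(high, threshold))
```

The adjusted value can then be used for later authentication, review, blocking, or alerting decisions.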

Describe security rules

Rules are common.

A rule may say who can access what, when a command is allowed, when data must be masked, or when review is required.

A patent spec may say:

“In some examples, the system may apply a security rule to a request, output, data item, command, or user action.”

Then:

“The security rule may include a condition and an action.”

Then:

“When the condition is satisfied, the system may perform the action.”

This simple structure is strong.

Add examples:

“The condition may relate to user role, device state, data type, risk score, location, time, policy, or request type. The action may include allow, deny, mask, encrypt, route for review, alert, log, or require additional authentication.”
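
The condition-and-action structure maps directly onto code. The Python below is a minimal sketch under stated assumptions: a request is a plain dictionary, rules are checked in order, the first matching rule wins, and the default action is "allow." The `SecurityRule` and `apply_rules` names and the two example rules are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SecurityRule:
    name: str
    condition: Callable[[dict], bool]  # returns True when the rule applies
    action: str                        # e.g. "allow", "deny", "mask"


def apply_rules(rules, request, default_action="allow"):
    """Return the action of the first rule whose condition is satisfied."""
    for rule in rules:
        if rule.condition(request):
            return rule.action
    return default_action


# Hypothetical example rules.
EXAMPLE_RULES = [
    SecurityRule("deny-untrusted-device",
                 lambda r: not r.get("device_trusted", False), "deny"),
    SecurityRule("mask-health-data",
                 lambda r: r.get("data_type") == "health", "mask"),
]
```

With these example rules, a health-data request from a trusted device is masked, and any request from an untrusted device is denied.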

Describe security policies as configurable

If customers can configure security, say so.

For example:

“In some examples, an organization may configure a security policy that controls access, masking, review, export, retention, authentication, or alerting.”

Then:

“The system may apply the security policy to data, users, devices, requests, outputs, or workflows associated with the organization.”

This supports enterprise features.

Describe security inheritance

In complex systems, permissions may be inherited.

For example:

“In some examples, a permission associated with a project, folder, tenant, device group, or data source may apply to records within the project, folder, tenant, device group, or data source.”

If relevant, include it.

It can support access control claims.
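
Inheritance can be pictured as a lookup that walks upward until it finds a permission. The sketch below assumes each record or container has at most one parent and that the nearest permission wins. The `effective_permission` name and the dictionary layout are hypothetical.

```python
def effective_permission(resource, parents, permissions):
    """Walk from a resource up through its containers to find a permission.

    parents maps a resource to its container (record -> folder -> project).
    permissions maps a resource or container to a permission string.
    """
    current = resource
    seen = set()  # guards against accidental cycles in the parent map
    while current is not None and current not in seen:
        if current in permissions:
            return permissions[current]
        seen.add(current)
        current = parents.get(current)
    return None  # no permission found anywhere in the chain
```

For example, a record with no permission of its own receives the permission set on the project that contains its folder.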

Describe exceptions

Sometimes a system allows exceptions.

For example:

“In some examples, a user may request an exception to a security policy. The system may route the exception request for approval and apply the exception only after approval is received.”

This supports enterprise governance workflows.

Describe security and notifications together

Security actions often notify people.

For example:

“When the system blocks a request, the system may send a notification to an administrator.”

Or:

“When a device becomes untrusted, the system may notify a user associated with the device.”

Or:

“When protected data is detected in an output, the system may notify a reviewer.”

This connects security events to workflows.

Describe security and audit together

A strong pattern is:

Detect event.

Take action.

Store audit record.

For example:

“When the system detects an unauthorized request, the system may reject the request and store an audit record associated with the unauthorized request.”

This simple pattern gives the spec structure.

It is useful across many security features.
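
The detect, act, and record pattern can be sketched in a few lines. The Python below assumes a request is a dictionary with `user` and `resource` keys and that the audit log is a simple list. Those choices, and the `handle_request` name, are hypothetical. A real system may store audit records elsewhere.

```python
from datetime import datetime, timezone


def handle_request(request, authorized_users, audit_log):
    """Reject an unauthorized request and store an audit record for it."""
    # Detect event: the requesting user is not authorized.
    if request["user"] not in authorized_users:
        # Store audit record tied to the unauthorized request.
        audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": request["user"],
            "resource": request["resource"],
            "outcome": "rejected",
        })
        # Take action: reject the request.
        return False
    return True
```

The audit record is linked to the same request that was rejected, which mirrors the sample language above.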

Describe security and feedback together

Another strong pattern is:

Detect event.

Receive review.

Update rule or model.

For example:

“When a security event is routed for review, the system may receive a review result. The system may use the review result to update a security rule, risk model, threshold, or trust score.”

This is powerful for adaptive security and AI security.

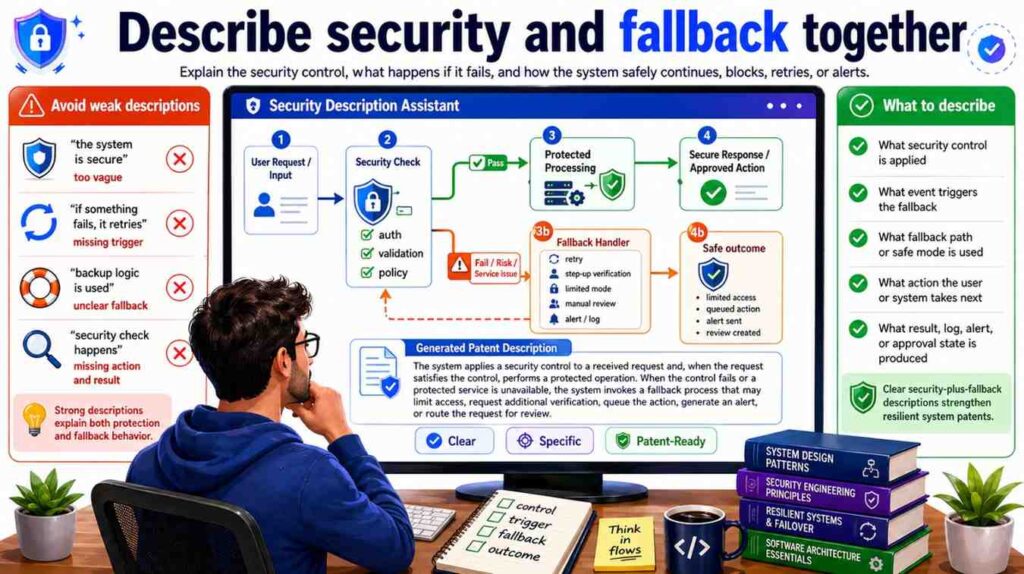

Describe security and fallback together

Security may trigger fallback.

For example:

“When a remote service is untrusted or unavailable, the system may process data locally.”

Or:

“When a device fails authentication, the system may use data from another device.”

Or:

“When an output fails a security check, the system may provide a limited output.”

These fallback paths can support strong claims.

Describe security and privacy together, but keep them distinct

Security and privacy are related but not the same.

Security often protects systems from unauthorized access or misuse.

Privacy often controls how personal or sensitive data is used, shown, stored, or shared.

A patent spec can include both.

For example:

“The system may authenticate a user before allowing access to patient data. The system may also mask selected patient fields based on a privacy policy before displaying the patient data.”

That is clear.

In this example, authentication is the security step. Masking is the privacy step.

Both matter.
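
The two steps stay distinct in code as well. The sketch below is an illustration under assumptions: authentication has already produced a true or false result, and the privacy policy is a set of field names to mask. The `view_patient_record` name and the `***` mask value are hypothetical.

```python
def view_patient_record(record, user_authenticated, masked_fields):
    """Apply a security check, then a separate privacy step, before display."""
    # Security step: no access at all without authentication.
    if not user_authenticated:
        raise PermissionError("authentication required")
    # Privacy step: mask the fields selected by the privacy policy.
    return {
        field: ("***" if field in masked_fields else value)
        for field, value in record.items()
    }
```

An authenticated user still sees only the fields the privacy policy allows.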

Avoid over-narrowing security tools

Do not lock security to one tool unless necessary.

Instead of saying:

“The system uses OAuth.”

Say:

“The system may use an authorization protocol or access token to control access.”

Then:

“In one example, the authorization protocol may include OAuth.”

Instead of saying:

“The system uses TLS.”

Say:

“The system may use a secure communication channel.”

Then include examples if needed.

Instead of saying:

“The system uses a YubiKey.”

Say:

“The system may use a hardware security key or other authentication factor.”

This keeps scope flexible.

Avoid saying “secure” without explaining how

Words like “secure,” “safe,” and “protected” are not enough by themselves.

Weak:

“The system securely stores data.”

Better:

“The system may encrypt data before storing the data and may limit access to the stored data based on a user role or permission.”

Weak:

“The system safely controls the device.”

Better:

“The system may verify a safety condition before sending a control command to the device.”

Weak:

“The system protects model outputs.”

Better:

“The system may evaluate a model output to detect protected data before providing the model output to a user.”

Simple but specific.

Avoid making security optional if it is core

Sometimes security is the invention.

If the main innovation is a new way to detect unauthorized access, verify device trust, protect AI context, or route sensitive data, then security may be central.

Do not hide the core security step as a casual optional feature.

For example, if your invention is a system that blocks AI from using restricted documents, then permission checking before context inclusion may be core. The spec should make that clear.

But if encryption is just standard protection in your product, it may be described as an example or optional feature.

The key is to know what is core.

PowerPatent helps founders identify the real inventive security features and separate them from ordinary implementation details. See how it works here: https://powerpatent.com/how-it-works

Avoid making security features required if they are not

The opposite mistake is making every security feature sound required.

A system may use encryption, MFA, audit logs, role-based access, and anomaly detection in one version. But not every version may need all of them.

If a feature is optional, use optional language.

“In some examples…”

“May…”

“Can…”

“Such as…”

“One or more…”

“Or another…”

For example:

“In some examples, the system may require additional authentication before performing a protected action.”

This is safer than:

“The system always requires additional authentication.”

Use “always” only if the requirement truly applies in every version.

Describe security features in layers

A good security description often has layers.

First, broad function.

Then specific examples.

Then optional variations.

Then system response.

For example:

“The system may control access to output data.”

That is broad.

“In some examples, access may be controlled based on a user role, permission, tenant identifier, device identifier, location, time, or policy.”

That gives examples.

“When access is allowed, the system may provide the output data. When access is not allowed, the system may hide the output data, provide a masked version of the output data, request additional authentication, or create an audit record.”

That gives the system response.

This layered style is clear and strong.

Describe security in the figures

If security is important, show it in figures.

A system diagram may show an authentication service, policy engine, audit log, secure data store, key manager, review interface, or security monitor.

A flowchart may show receiving a request, checking permission, allowing or denying access, and logging the result.

An AI pipeline may show context access control before model input generation.

A device diagram may show secure boot, tamper detection, or command verification.

A UI figure may show a security warning or approval flow.

Then describe the figures carefully.

“Figure 4 illustrates an example process for controlling access to output data based on a permission check.”

“Figure 5 illustrates an example process for validating a control command before sending the control command to a device.”

“Figure 6 illustrates an example process for checking access to context data before generating a model input.”

These figure descriptions help support the security story.

Describe security flowcharts with pass and fail paths

Security flowcharts should include both outcomes.

For example:

The system receives a request.

The system checks permission.

If permission is satisfied, the system allows access.

If permission is not satisfied, the system blocks access and logs the event.