Generative AI is moving fast. Founders are shipping new tools every week. Teams are building with prompts, models, agents, fine-tuning, retrieval, ranking, and multi-step workflows. Amid that speed, one big question keeps coming up: what part of this can you actually protect? That is where patent claims matter.

Why Patent Claims Matter More Than the Model Itself

Most businesses start by looking at the model. That makes sense. The model feels like the engine behind everything. It writes, classifies, predicts, summarizes, and generates.

But in most real products, the model is only one part of the full system. It is often not the part that gives a company its strongest edge.

The real value usually sits in how the business uses the model inside a practical product flow.

That is why patent claims often matter more than the model itself. A model can be swapped. A vendor can change. An API can get cheaper. A new foundation model can arrive and outperform the old one in a month.

But a well-designed workflow that solves a hard business problem in a repeatable way can stay valuable for a long time. The patent strategy should match that reality.

The model is often replaceable

When founders first think about protection, they often focus on the model because it looks like the most technical piece. But from a business view, that is not always where the moat lives.

Many companies use outside models. Even when they tune or adapt those models, the market may move so fast that today’s model advantage becomes tomorrow’s baseline.

That does not mean the product has no protectable value. It means the business should look one layer up.

The stronger question is not, “Which model do we use?” The stronger question is, “What does our system do with the model that others do not?”

The business edge usually lives in the system around the model

In many generative AI products, the winning move is not raw generation. It is the structure around generation.

It is the way the system collects context, breaks down tasks, controls inputs, ranks outputs, checks quality, and routes the result into a business action.

That is where strong claim thinking begins. A company that treats the model as one step in a broader solution often sees the invention more clearly.

This is a much better place to start if the goal is real protection that supports growth, funding, product leverage, and future enforcement.

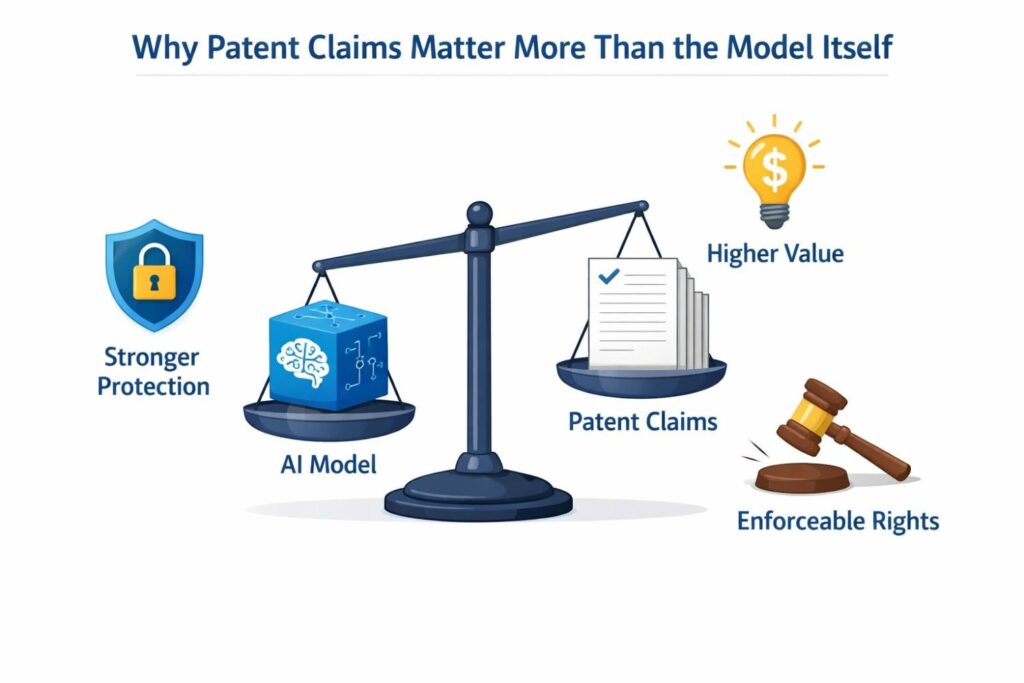

Patents should follow commercial value

A patent should not just describe something technical. It should protect what makes the business matter.

If a company saves customers hours of work, lowers error rates, improves trust, reduces review burden, or creates a new kind of result that users will pay for, those gains should shape the claims.

This is a key shift for AI teams.

Instead of writing around abstract intelligence, write around business impact created through a technical flow. That framing is more grounded, easier to explain, and often more durable as products evolve.

Why generic AI language weakens protection

Many teams describe their systems in broad terms. They say things like “using AI to generate content” or “using a language model to automate responses.”

The problem is simple. That kind of language is too broad in what it tries to cover and too thin on how the system works. It does not say what the invention actually does in a useful, specific way.

A stronger patent story explains how the system reaches a result and why that path matters. It captures the sequence, the controls, and the logic that make the result better than a plain model call.

Specificity creates business leverage

Specificity is not the enemy of value. It is often the source of value. A claim becomes more useful when it focuses on the company’s actual technical approach to a real business pain point.

That can mean the system uses a structured prompt template tied to user state.

It can mean output is tested against internal rules before delivery. It can mean multiple models are used in a staged process with fallback logic based on confidence or risk.

These details are not small. These details are often the product.
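To make the staged-model idea concrete, here is a minimal sketch of fallback logic driven by confidence. Everything in it is a hypothetical stand-in: the `Candidate` type, the two toy models, and the 0.8 threshold are assumptions for illustration, not a description of any real product or vendor API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    text: str
    confidence: float  # 0.0 to 1.0, as reported by a hypothetical scorer


# A "model" here is any callable that maps a prompt to a Candidate.
Model = Callable[[str], Candidate]


def run_staged(prompt: str, stages: List[Model], min_confidence: float) -> Optional[Candidate]:
    """Try each model in order; return the first candidate that clears the threshold."""
    for model in stages:
        candidate = model(prompt)
        if candidate.confidence >= min_confidence:
            return candidate
    return None  # nothing cleared the bar; the caller can escalate to review


# Stand-in models for illustration only.
def fast_model(prompt: str) -> Candidate:
    return Candidate(text=f"fast draft for: {prompt}", confidence=0.55)


def careful_model(prompt: str) -> Candidate:
    return Candidate(text=f"careful draft for: {prompt}", confidence=0.90)


result = run_staged("summarize the contract", [fast_model, careful_model], min_confidence=0.8)
```

In this sketch the fast model's draft falls below the threshold, so the system falls back to the careful model. The ordering of stages and the threshold that triggers the fallback are the kind of design choices worth writing down.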

Competitors can copy your use case faster than your full workflow

In fast AI markets, competitors do not need to copy your exact code to threaten your position. They only need to copy enough of your visible use case to confuse buyers.

That is why surface-level product ideas are not enough. A company needs protection around the hidden system design that makes the product work better in the real world.

When claims cover the actual workflow, they do more than describe a feature. They help draw a line around the way the company solves the problem. That can matter a great deal when the market becomes crowded.

A strong claim can outlast a model cycle

Models rise and fall quickly. APIs change pricing. Context windows grow. New providers appear.

If a patent depends too heavily on one model name or one narrow implementation choice, it may age badly. That is not a good result for a business trying to build long-term value.

A better claim strategy captures the function of the system in a flexible but still concrete way.

It protects the process, not just the current vendor or model release. This matters because investors, buyers, and partners care more about durable advantage than temporary tool choice.

The best patent angle may be in the workflow nobody sees

Some of the most valuable parts of an AI product are invisible to users. Users may only see a clean answer on the screen.

They do not see the pre-processing, retrieval, decomposition, guardrails, validation, human review triggers, or post-generation transformation steps underneath.

That hidden machinery is often where the company made the hardest design choices. It is also where claim opportunities often live.

Look at failure points, not just success points

A very useful way to spot patentable value is to study where a plain model call fails. Maybe it hallucinates. Maybe it misses a policy rule. Maybe it returns output in the wrong format. Maybe it sounds good but cannot be trusted in a regulated flow.

The fixes your team built to handle those failure points may be more important than the generation step itself.

This is a smart business exercise. Ask where customers would lose trust without your extra system layers. Then ask how your product prevents that failure in a technical way. That answer can lead to claim language with real bite.

Claims should capture decision logic

A lot of business value in generative AI comes from decisions made by the system before and after generation. The system may choose a prompt style based on account type.

It may select a retrieval source based on document class. It may send high-risk outputs to review while allowing low-risk outputs to pass automatically.

This kind of decision logic is often highly practical, and when it is claimed as a concrete technical method it is often a strong candidate for protection. It connects technical behavior to business goals like safety, speed, consistency, and cost control.
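The three decisions described above can be sketched as one small planning function. This is only an illustration: the account types, document classes, source names, and the 0.7 review threshold are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    account_type: str    # e.g. "enterprise" or "free" (hypothetical tiers)
    document_class: str  # e.g. "contract" or "memo" (hypothetical classes)
    risk_score: float    # 0.0 (low) to 1.0 (high)


@dataclass(frozen=True)
class Plan:
    prompt_style: str
    retrieval_source: str
    needs_human_review: bool


# Lookup tables and threshold are assumptions made up for this sketch.
PROMPT_STYLE_BY_ACCOUNT = {"enterprise": "formal_with_citations", "free": "concise"}
RETRIEVAL_BY_DOC_CLASS = {"contract": "clause_library", "memo": "internal_wiki"}
REVIEW_THRESHOLD = 0.7


def plan_request(req: Request) -> Plan:
    """Decide, before generation, how this request will be handled."""
    return Plan(
        prompt_style=PROMPT_STYLE_BY_ACCOUNT.get(req.account_type, "concise"),
        retrieval_source=RETRIEVAL_BY_DOC_CLASS.get(req.document_class, "general_index"),
        needs_human_review=req.risk_score >= REVIEW_THRESHOLD,
    )
```

For example, `plan_request(Request("enterprise", "contract", 0.9))` would select the formal prompt style, the clause library, and human review. Each line of that function is a decision point a team could describe in plain words when mapping its workflow.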

Practical advice for founders

A founder should not wait until the product feels “finished” to map this logic. Start now. Open the product design doc, the prompt manager, the evaluator settings, and the workflow orchestration diagram.

Then mark every step where the system makes a choice that improves performance or trust. Those steps often contain the strongest patent material.

Good claims can protect margins, not just features

Business leaders should think about patents as margin tools, not only legal tools. If your workflow lowers review time, reduces support cost, improves throughput, or makes onboarding faster, that is not just product polish.

That is economics. And if those economics come from a new technical workflow, that is exactly the kind of business value worth trying to protect.

This is especially important in AI markets where user-facing features can look similar from the outside.

Two products may both “generate reports” or “draft emails,” but one may do it with far better control, lower rework, and stronger accuracy. Claims should aim at that difference.

Focus on repeatable advantage

A useful question for any AI business is this: what part of our product creates repeatable value every single time a customer uses it? The answer is rarely just “the model.”

More often it is a repeatable pipeline that turns a general model into a reliable tool for a specific business outcome.

That repeatability matters because it is what customers pay for. They do not pay for vague intelligence. They pay for dependable results.

Your patent story should match how you sell

If your sales team wins deals by saying your product is safer, more accurate, better controlled, easier to audit, or faster to deploy, your patent strategy should listen carefully.

Sales language can reveal where the market sees true difference. Product language can explain how the system delivers that difference.

When both line up, the company gets a stronger position. The patent story becomes grounded in real market need instead of abstract technical language.

A simple internal exercise

Have one person from product, one from engineering, and one from go-to-market sit down together. Ask them to answer one question: why does the customer stay with us after trying the product?

Then keep pushing until the answer becomes technical and specific. If the answer turns into a workflow detail, a control layer, a routing rule, or a validation engine, you are getting close to the real invention.

Strong claims start with a strong map of the workflow

Before drafting anything, a business should map the full lifecycle of one important AI task. Start with the incoming data. Follow how context is gathered. Show how prompts are built.

Show how outputs are scored, checked, changed, stored, or sent into another action. Mark where the system improves quality, reduces risk, or saves time.

That map can be one of the most valuable things a company creates during patent prep. It helps separate what is generic from what is truly special.

This is how businesses avoid weak AI patents

Weak AI patents often happen when teams file too early with language that is too broad and too generic. Or they file too late, after the unique parts are no longer easy to reconstruct.

The answer is not delay. The answer is disciplined capture. Write down what the system is doing today that creates business value now, even if the models change later.

That approach leads to stronger claims because it ties the legal strategy to how the business actually operates.

The goal is not to protect “AI” in general

No business wins by trying to claim all of AI. The smart move is to protect the company’s distinct way of turning AI into a working product.

That means the exact flow that takes messy inputs and turns them into trusted outputs through a controlled sequence of technical steps.

That is why patent claims matter more than the model itself. The model may power the system, but the claim should protect the thing that makes the system worth buying.

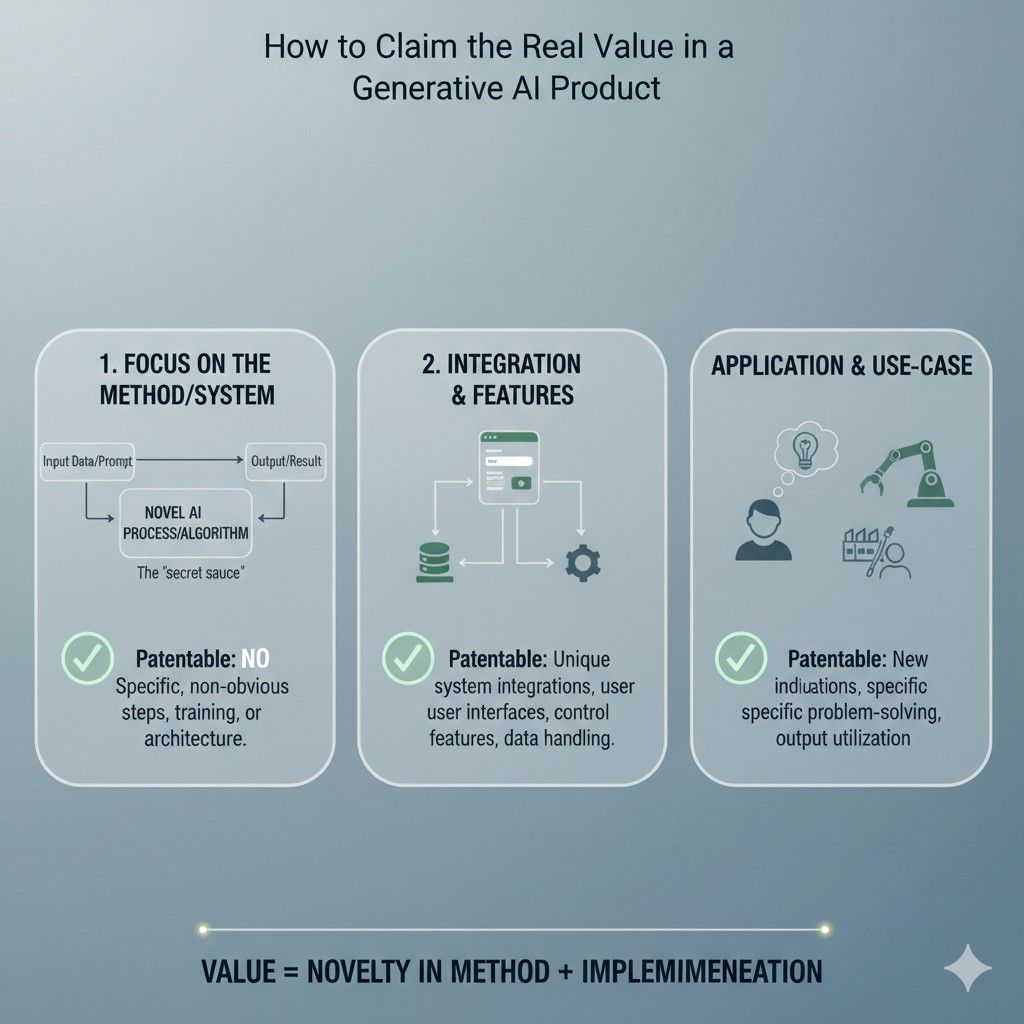

Can You Patent a Prompt, an Output, or a Full AI Workflow?

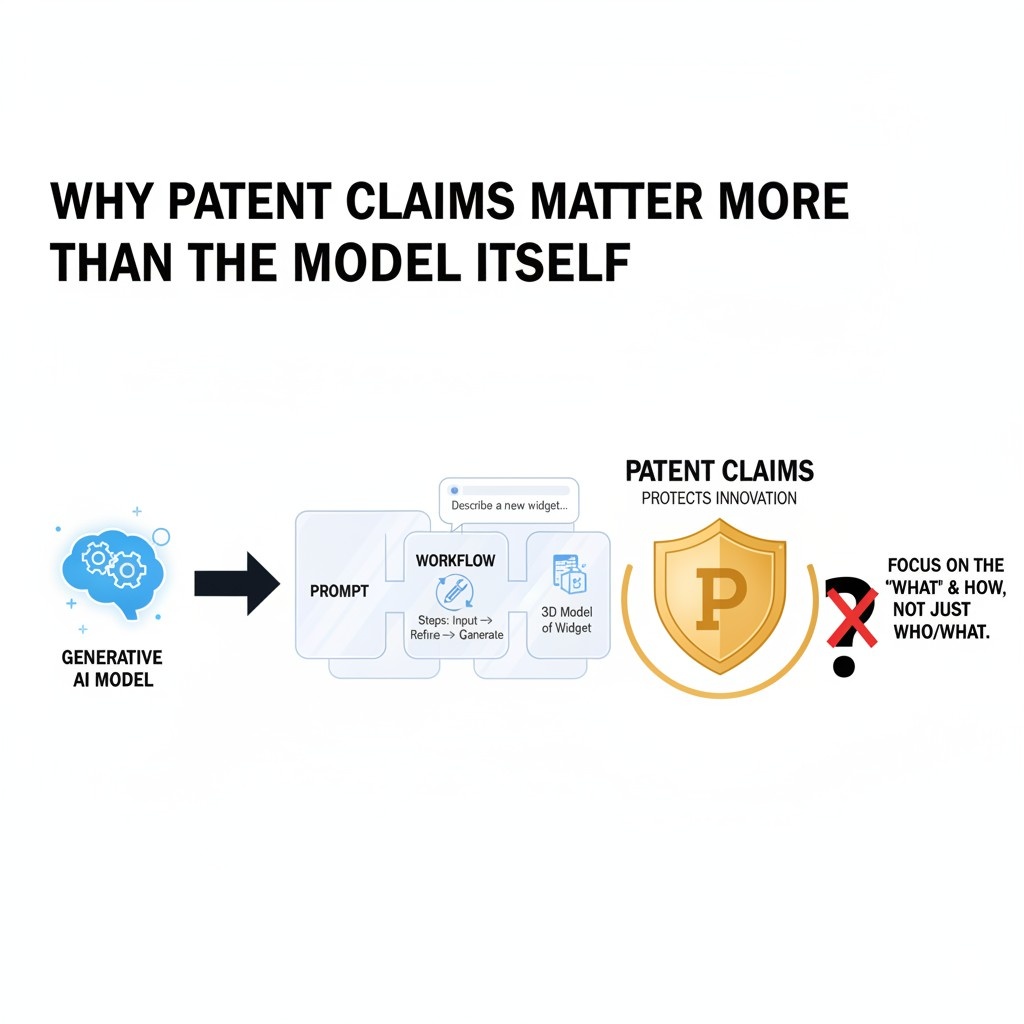

This is one of the biggest questions in generative AI right now, and it matters because many founders are building value in exactly these layers. They are not training a giant foundation model from scratch.

They are building smart systems on top of existing models. That means the protectable value often sits in the prompt design, the output handling, the orchestration logic, or the full path from input to final result.

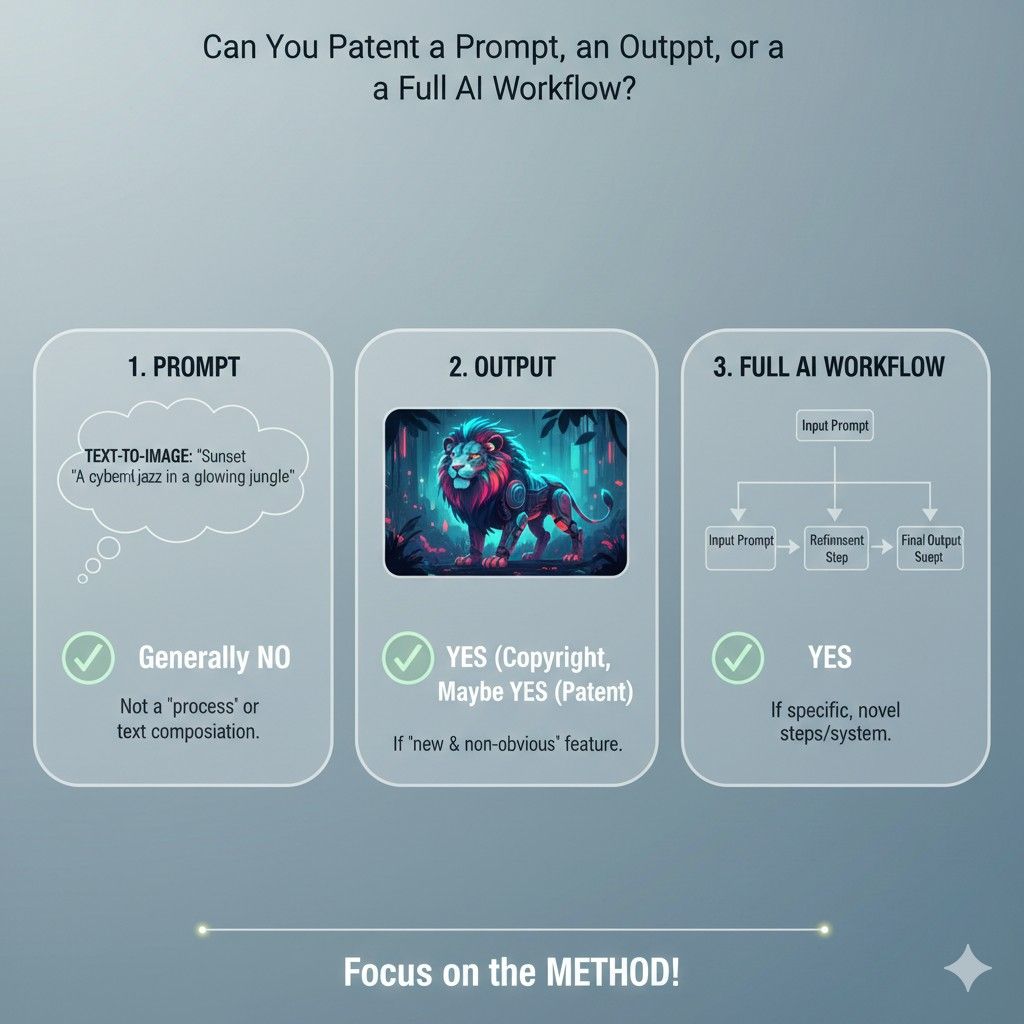

The hard part is that people often ask the wrong version of the question. They ask, “Can I patent a prompt?” as if the prompt sits alone in a vacuum. In real products, it usually does not.

A prompt works because of context, structure, timing, rules, post-processing, feedback loops, and business purpose. That is why the better question is not just whether a prompt, output, or workflow can be patented.

The better question is what part of that system is truly new, useful, and described in a way that protects real business value.

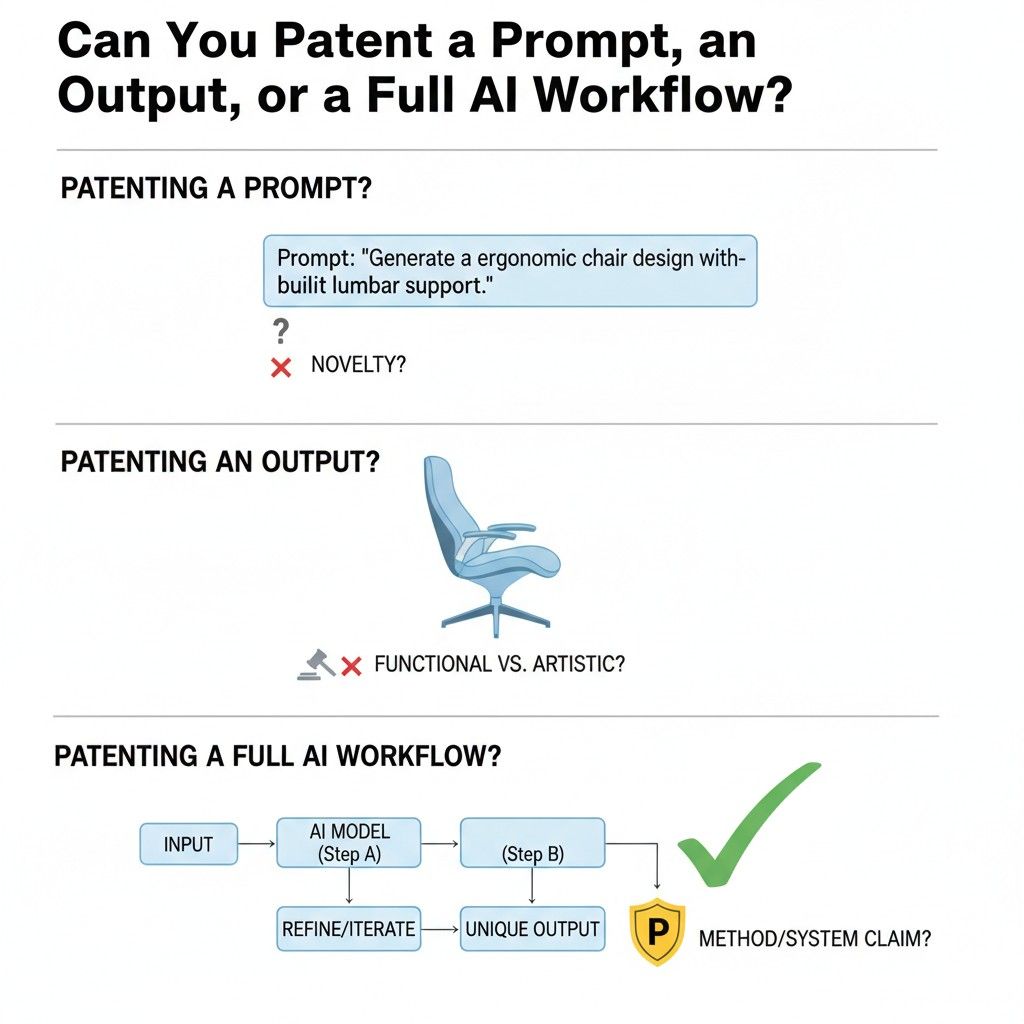

A prompt by itself is usually not the full story

A prompt can be important. In some products, it is very important. A carefully designed prompt may control tone, format, task order, error checking, citation behavior, tool use, or response boundaries.

It can be the difference between a weak result and a product customers trust.

But from a patent strategy view, a single prompt written as plain text is often too thin if it stands alone.

That is because the value rarely comes from the words alone. The value usually comes from how the prompt is built, when it is used, what information is inserted, how the model response is evaluated, and what happens next.

The better frame is prompt architecture

A more useful way to think about this is prompt architecture. That means the structured design behind prompting, not just the text string.

It includes how variables are pulled in, how user state changes the instruction, how system rules are layered, how task-specific examples are selected, and how the prompt shifts based on risk, confidence, or domain.

This matters because businesses do not usually win by having one clever sentence. They win by building a repeatable prompting system that works inside a product.
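One way to picture prompt architecture is as a builder that assembles the prompt from parts instead of storing one fixed string. The sketch below assumes a hypothetical `UserState` with a domain and a risk level; the rules, the example text, and the section labels are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class UserState:
    domain: str      # e.g. "legal" or "support" (hypothetical domains)
    risk_level: str  # "low" or "high"


# Rule layers and examples are invented for this sketch.
BASE_RULES = ["Answer only from the provided context."]
HIGH_RISK_RULES = ["Mark any statement you cannot support as UNVERIFIED."]
EXAMPLES_BY_DOMAIN: Dict[str, List[str]] = {
    "legal": ["Q: Is clause 4 assignable? A: Clause 4 permits assignment with consent."],
}


def build_prompt(state: UserState, task: str, context: str) -> str:
    """Assemble a prompt from layered rules, selected examples, and inserted variables."""
    rules = list(BASE_RULES)
    if state.risk_level == "high":
        rules.extend(HIGH_RISK_RULES)  # the rule layer changes with risk

    sections = ["RULES:\n" + "\n".join(f"- {rule}" for rule in rules)]

    examples = EXAMPLES_BY_DOMAIN.get(state.domain, [])  # examples chosen by domain
    if examples:
        sections.append("EXAMPLES:\n" + "\n".join(examples))

    sections.append(f"CONTEXT:\n{context}")
    sections.append(f"TASK:\n{task}")
    return "\n\n".join(sections)
```

The text of any single prompt matters less here than the logic that decides which rules, examples, and variables go into it.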

Ask what the prompt is doing inside the machine

A good internal question is this: what job is the prompt doing in the larger system? Is it separating user intent into sub-tasks? Is it enforcing a format that makes downstream automation possible?

Is it adapting language based on customer profile? Is it narrowing output so a later rule engine can validate it?

When you ask it this way, the invention starts to look less like text and more like a technical method. That is often a much stronger place to build claims.

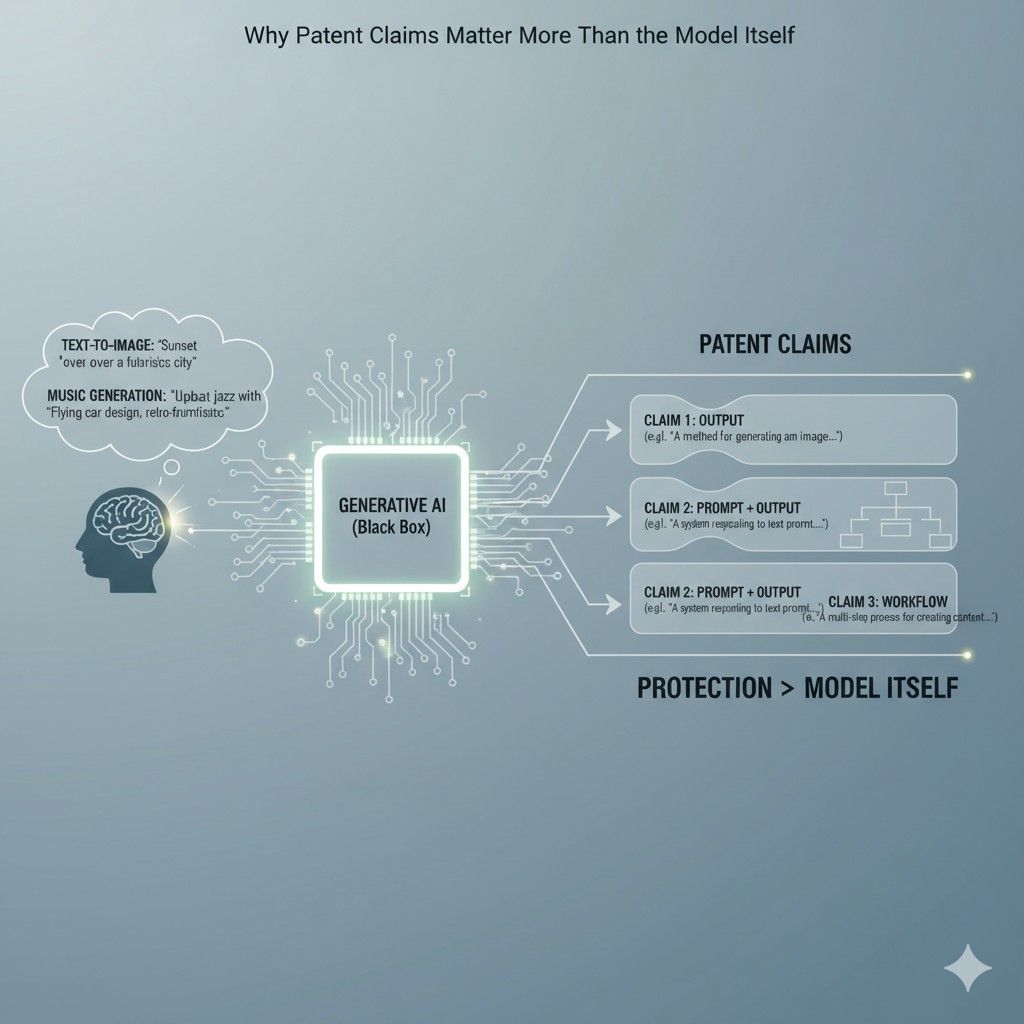

Outputs can matter, but only when tied to a technical process

Many teams also ask whether an AI output can be patented. Framed that way, the question is tricky, because an output is often just the end result.

A summary, draft, recommendation, image description, plan, or code block may be useful, but the stronger patent angle is usually not the existence of the output. It is the system that produces the output in a special way.

That does not make outputs irrelevant. In fact, outputs can be very important in claim design when they reflect a specific transformation or structure created by a technical flow.

The shape of the output may reveal the invention

Sometimes the output is where the system’s unique logic becomes visible. Maybe the output is not just a paragraph of text.

Maybe it is a structured compliance package, a machine-readable action plan, a ranked diagnostic tree, a verified code patch, a clause-by-clause contract rewrite, or a multi-part result with confidence labels and review markers.

In those cases, the output may help tell the patent story because it shows that the system is not merely generating content. It is producing a controlled result shaped for a practical use.

Focus on outputs that drive action

The strongest business case often appears when the output triggers something real. It may start a workflow, update a record, route a case, launch a review, create a downstream query, or feed a decision system.

Once that happens, the output becomes part of an operational chain, not just a piece of generated text.

That is a very important shift. Businesses should pay close attention to outputs that move work forward. Those outputs often sit at the center of value creation.
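As a rough sketch of an output that moves work forward, the example below treats the model's result as a structured object that a dispatcher turns into an action. The action names, the payload shape, and the 0.8 confidence floor are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class StructuredOutput:
    action: str               # e.g. "update_record" (hypothetical action name)
    payload: Dict[str, str]
    confidence: float         # 0.0 to 1.0


log: List[str] = []  # stands in for real side effects such as database writes


def update_record(payload: Dict[str, str]) -> None:
    log.append(f"updated {payload['record_id']}")


def open_review(payload: Dict[str, str]) -> None:
    log.append(f"review opened for {payload['record_id']}")


HANDLERS: Dict[str, Callable[[Dict[str, str]], None]] = {
    "update_record": update_record,
    "open_review": open_review,
}


def dispatch(output: StructuredOutput, min_confidence: float = 0.8) -> str:
    """Run the requested action, or fall back to review if it is unknown or low confidence."""
    action = output.action
    if action not in HANDLERS or output.confidence < min_confidence:
        action = "open_review"
    HANDLERS[action](output.payload)
    return action
```

Once the output carries an action and a payload, it is no longer just generated text. It is an input to the next machine step.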

A tactical way to spot output-based claim value

Take one high-value customer action in your product and trace backward. Look at the exact output that makes that action possible.

Then ask how that output is formed. If it depends on structured prompting, retrieval choices, scoring, filtering, transformation, or threshold-based routing, there may be strong claim material in that path.

This exercise helps founders avoid a common mistake, which is focusing on the visible output instead of the technical steps that make the output reliable.

Full workflows are often the strongest patent target

For most AI businesses, the full workflow is where the best protection lives. That is because the workflow connects all the meaningful pieces.

It shows how data comes in, how context is selected, how prompts are formed, how one or more models are used, how outputs are checked, how exceptions are handled, and how final results are delivered.

That full sequence is often the product’s real secret. It is also where the technical design meets business performance.

Workflows turn general AI into a specific business engine

A general-purpose model can do many things. But a workflow turns that general capability into a purpose-built business system.

It narrows the task, adds controls, improves consistency, and connects the output to a real need. That is what makes the product valuable.

This is why many businesses should spend less time asking whether they can patent AI in the abstract and more time documenting how their workflow solves one hard problem in a way that is not obvious or generic.

The workflow should be described as a sequence with purpose

It is not enough to say the system uses prompts, a model, and output review. That is still too loose. The stronger approach is to describe the sequence clearly. Show the order of operations.

Show the decision points. Show what changes based on input type, customer profile, system confidence, or downstream risk. Show where validation happens and what triggers re-generation, escalation, or approval.

A workflow becomes more valuable as patent material when it is described as an intentional system rather than a bundle of features.

Businesses should document branch points early

One of the most overlooked sources of claim strength is branch logic. This is the point where the system chooses one path over another.

It may use a first prompt for low-risk requests and a second controlled process for high-risk ones. It may call a retrieval layer only for certain document classes.

It may re-run generation when a format check fails. It may send edge cases to a human reviewer.

These branch points often reflect hard-won engineering decisions. They are exactly the kind of details businesses should capture before those choices become so normal that no one writes them down.
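Two of the branch points above, re-running generation when a format check fails and sending stubborn cases to a reviewer, can be sketched in a few lines. The required `summary` field, the attempt limit, and the toy generator are assumptions made up for this example.

```python
import json
from typing import Callable, Tuple


def is_valid(raw: str) -> bool:
    """Format check: output must be a JSON object with a non-empty 'summary' string."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict):
        return False
    summary = data.get("summary")
    return isinstance(summary, str) and bool(summary)


def generate_with_retry(generate: Callable[[int], str], max_attempts: int = 3) -> Tuple[str, str]:
    """Return (status, output), where status is 'accepted' or 'escalated'."""
    last = ""
    for attempt in range(1, max_attempts + 1):
        last = generate(attempt)
        if is_valid(last):
            return "accepted", last
    return "escalated", last  # hand the last attempt to a human reviewer


# Stand-in generator: fails the format check once, then succeeds.
def flaky_generate(attempt: int) -> str:
    return "not json" if attempt == 1 else '{"summary": "Renewal terms changed."}'


status, output = generate_with_retry(flaky_generate)
```

Here the first attempt fails the check and the second is accepted. A generator that never passes would end in the escalated branch after three tries.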

A prompt can be part of a claim without being the whole claim

Businesses do not need to force a false choice. It is not always prompt versus workflow. A prompt structure can absolutely matter. It can be one part of a strong claim when it is tied to a broader technical method.

For example, the claim may involve generating a prompt from a set of classified inputs, inserting rule-specific context, producing a candidate output, validating that output against defined criteria, and taking a follow-up action based on the result.

That kind of framing is usually much stronger than trying to protect a static prompt in isolation.
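The sequence in that example can be sketched as a short pipeline. This is an illustration under stated assumptions, not claim language: the keyword classifier, the policy text, the validation rule, and the action names are all hypothetical, and the model is passed in as a plain callable so no real vendor API is implied.

```python
from typing import Callable, Dict

# Rule-specific context, keyed by request class (invented for this sketch).
RULE_CONTEXT = {
    "refund": "Refunds over 500 dollars require manager approval.",
    "shipping": "Quote delivery windows only from the carrier table.",
}


def classify(text: str) -> str:
    """Toy classifier: a keyword match stands in for a real classification step."""
    return "refund" if "refund" in text.lower() else "shipping"


def build_prompt(text: str, label: str) -> str:
    """Generate the prompt from the classified input plus its rule-specific context."""
    return f"Policy: {RULE_CONTEXT[label]}\nCustomer message: {text}\nDraft a reply."


def validate(output: str, label: str) -> bool:
    """Defined criterion for this sketch: refund replies must mention approval."""
    return label != "refund" or "approval" in output.lower()


def handle(text: str, model: Callable[[str], str]) -> Dict[str, str]:
    """Classify, build the prompt, generate, validate, then choose a follow-up action."""
    label = classify(text)
    candidate = model(build_prompt(text, label))
    action = "send" if validate(candidate, label) else "hold_for_review"
    return {"label": label, "action": action, "output": candidate}
```

The prompt is one step in `handle`, not the whole method. Swapping the model callable changes nothing about the sequence around it.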

The same is true for outputs

An output can also matter as part of a larger system claim. The key is to connect the output to how it is formed and what it causes next.

A company should ask whether the output is merely expressive or whether it has a defined technical role inside the system.

If it triggers later machine actions, enables reliable automation, or reflects a structured conversion of messy input into a usable artifact, it may have much more strategic value.

This is where founders often find the clearest business case for protection.

The real question is where the non-obvious value sits

In practice, businesses should stop chasing labels and start chasing leverage. The point is not to win a debate over whether the invention is a prompt, an output, or a workflow.

The point is to identify where the system creates a result that would not happen from a plain model call used in a basic way.

That is where non-obvious value tends to live. It may sit in prompt generation. It may sit in output structuring. It may sit in a multi-stage orchestration layer. In many cases, it lives across all three.

A practical founder test

Here is a useful way to pressure-test your system. Imagine a smart competitor has access to the same base model tomorrow. What would they still not know from your landing page or product demo?

Whatever remains hidden but essential is often the best place to look for patentable value.

That hidden value is usually not “we use AI.” It is the internal design that makes the AI dependable, controlled, and useful in a business setting.

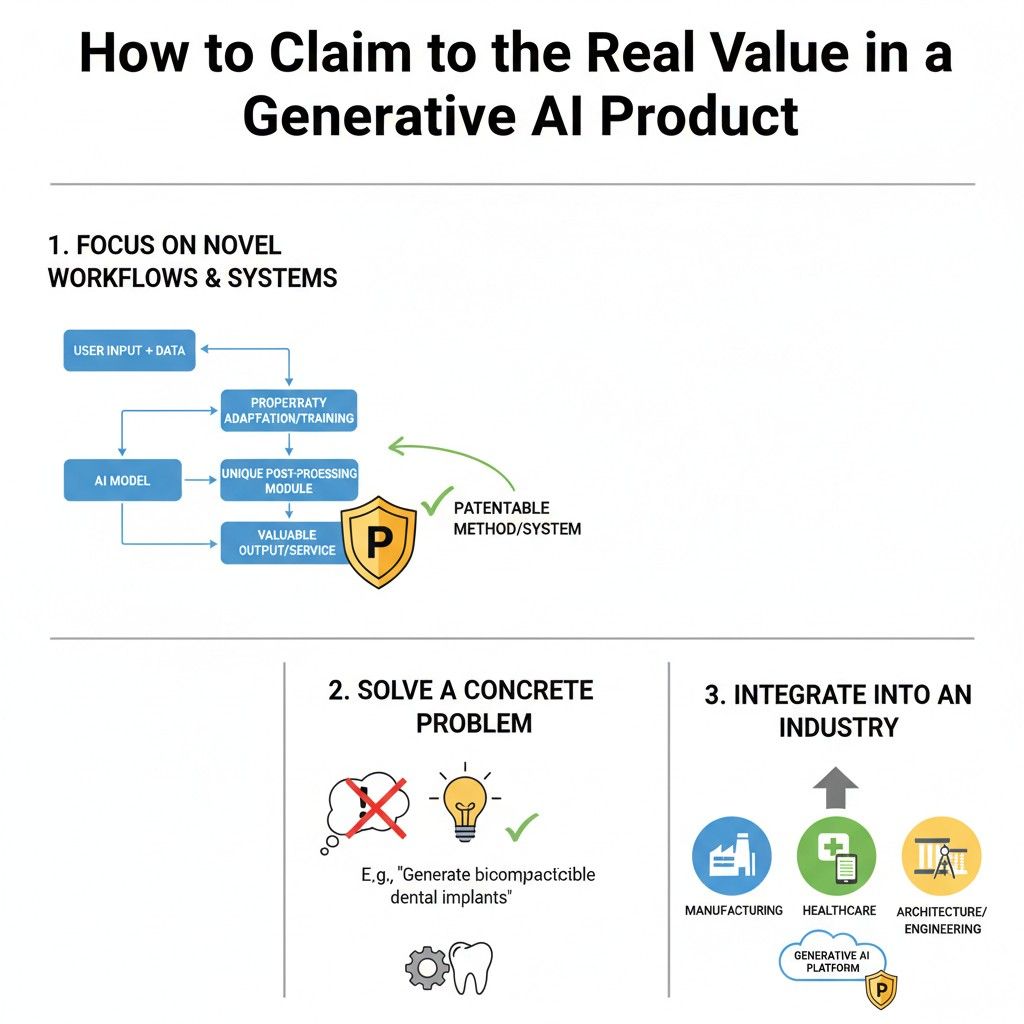

How to Claim the Real Value in a Generative AI Product

Most generative AI products look simple from the outside. A user enters something, the system thinks, and a result appears. But that clean surface can hide a lot of real engineering work.

The product may classify requests, pull context from many sources, build structured prompts, run several model steps, score outputs, reject weak results, trigger review, and then turn the final answer into a business action. That hidden design is usually where the real value lives.

The mistake many teams make is focusing on the most visible part instead of the most important part. They point to the model, the chatbot screen, or the generated answer.

But those things are often the easiest for others to copy at a high level. The deeper value is in the method that makes the product reliable, useful, and hard to replace. That is the part worth claiming.

Start with the business result, not the AI label

A strong claim strategy begins with the outcome the product creates in the real world. That means looking past words like “AI-powered” and asking what the system actually gets done better than older tools or simpler model wrappers.

This matters because patents are much stronger when they connect technical steps to a clear practical result. A business should be able to say, in plain language, what changes because its system exists. Maybe the product cuts review time from hours to minutes.

Maybe it turns messy source material into a clean structured file. Maybe it creates safer outputs by checking for errors before release.

Maybe it helps teams act faster without losing control. The clearer that business result is, the easier it becomes to see what should be protected.

Find the step that customers would truly miss

Not every feature deserves equal attention. Some features are nice to have. Some are expected. Some are just decoration. The real value usually sits in the step that, if removed, would make the product much weaker or much less trusted.

That is the place where businesses should look first. Ask what part of the product makes customers stay. Ask what part reduces pain in a way that is hard to replace.

Ask what would cause complaints, slowdowns, or extra labor if it vanished. Those answers often point to the strongest claim material.

Look for the point where trust is created

In many generative AI systems, the breakthrough is not generation alone. It is the moment where the system becomes safe enough, structured enough, or dependable enough for real use.

That may happen when the product checks outputs against a rule set, compares results across sources, explains why an answer was chosen, or limits model behavior using domain controls.

That trust layer is often worth more than the generation layer. Businesses should study it closely because that is where true product value often becomes visible.

Claim the workflow that creates the result

A generative AI product is rarely a single step. It is usually a chain. Data comes in. Context is selected. Instructions are built. A model is called. The output is tested.

The result is revised, routed, or stored. That end-to-end flow is often what turns general AI into a usable product.

That is why businesses should think in terms of claiming workflows, not just isolated parts. A workflow shows how the product actually works. It gives shape to the invention. It also makes it easier to explain why the system is more than a generic AI tool.

Show how the system moves from messy input to usable output

One of the strongest ways to frame value is to map the transformation. What enters the system, and what comes out that was not possible before in the same way? This is where many AI teams find their best story.

For example, a system may take raw user language, classify the request, pull in account data, generate structured instructions, produce a first answer, score that answer against policy rules, and then turn the approved result into a ready-to-use business artifact.

That full movement is often much more valuable than any single prompt or model call.

Protect the controls around generation

A plain model call is easy to imitate. What is much harder to copy well is the set of controls built around it. Controls are what make outputs more consistent, more useful, and less risky.

This can include many things. It can include how context is selected before generation. It can include how prompt parts are assembled based on user state or task type.

It can include how the system checks formatting, accuracy, or confidence after generation. It can include how the product decides whether to accept, revise, reject, or escalate the result. These controls often carry the real economic value of the product.

The guardrails may be the invention

Many teams think of guardrails as support code. They treat them like helpers attached to the main AI feature. But in a lot of cases, those guardrails are exactly what make the product commercially viable.

If a system prevents risky outputs, catches failures early, or keeps results inside a useful structure, that is not a side detail.

That is often the reason the product can be sold to serious customers. Businesses should not overlook this when thinking about what to claim.

Focus on what is repeatable and not just clever

A one-time trick is not the same as a real product advantage. Businesses should focus on the parts of the system that create value over and over again across many users, tasks, or accounts.

Repeatability matters because it shows that the value is built into the system, not dependent on luck or a perfect prompt typed by one expert.

If the workflow works at scale, under different inputs, and still creates a better outcome, that is a much stronger foundation for a patent strategy.

Document the branching logic inside the product

One of the best sources of claim strength is branching logic. This is where the system changes its path based on conditions.

That can be based on risk, confidence, document type, customer tier, content category, prior model behavior, or downstream use.

Businesses should write this down early. These decisions can feel ordinary to the internal team because they live with them every day. But they often reflect the exact engineering judgment that creates the edge.

A system that routes one class of requests through a high-control path and another through a fast path may be doing something far more valuable than it first appears.

Good claim material often hides in exception handling

A lot of teams describe the happy path and stop there. That leaves out some of the most important value. Exception handling is where real product maturity shows up.

What happens when the model is uncertain? What happens when required fields are missing? What happens when the output fails validation? What happens when the user request touches a restricted topic or a high-risk action?

The answers to those questions often reveal the technical methods that make the system dependable. Those methods are worth serious attention.

Tie technical steps to business impact

A claim should not feel like a pile of technical pieces sitting next to each other. It should tell a clear story about how those steps create a result that matters to the business and the customer.

This is a very useful discipline for founders. Do not just say the system retrieves data, generates text, and applies filters. Explain what that sequence achieves. Maybe it lowers the need for manual review.

Maybe it improves output consistency across teams. Maybe it speeds up turnaround while preserving rule compliance. Maybe it turns open-ended generation into structured work output. That connection makes the value much easier to see.

Build claims around what a competitor would struggle to rebuild

A strong way to pressure-test the product is to imagine a competitor using the same base model. What would still be hard for them to recreate quickly? The answer usually reveals the deepest moat.

It may be the internal workflow that adapts prompts based on user behavior. It may be the scoring layer that ranks multiple generations and picks one based on a domain-specific measure.

It may be the transformation layer that turns free-form model text into a structured object that downstream systems can trust. It may be the review logic that balances automation and human oversight. That is the material businesses should elevate.
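A scoring layer and a transformation layer can work together, as in the sketch below. It ranks several generations by a simple domain measure and converts the best usable one into a structured object. The `Invoice` type, the field labels, and the scoring rule are assumptions chosen only to keep the example small.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Invoice:
    vendor: str
    total_cents: int


def to_invoice(text: str) -> Optional[Invoice]:
    """Transformation layer: pull required fields out of free-form text, or return None."""
    vendor = re.search(r"vendor:\s*(.+)", text, re.IGNORECASE)
    total = re.search(r"total:\s*\$?(\d+)\.(\d{2})", text, re.IGNORECASE)
    if not vendor or not total:
        return None
    cents = int(total.group(1)) * 100 + int(total.group(2))
    return Invoice(vendor=vendor.group(1).strip(), total_cents=cents)


def score(text: str) -> int:
    """Domain-specific measure for this sketch: how many required field labels appear."""
    lowered = text.lower()
    return sum(label in lowered for label in ("vendor:", "total:"))


def pick_best(candidates: List[str]) -> Optional[Invoice]:
    """Rank candidates by score and return the first one that converts cleanly."""
    for text in sorted(candidates, key=score, reverse=True):
        invoice = to_invoice(text)
        if invoice is not None:
            return invoice
    return None
```

Downstream systems only ever see an `Invoice` or nothing at all. They never receive raw model text, which is what lets them trust the result.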

Your moat is often in the orchestration

In many generative AI products, orchestration is the true product. It is the logic that decides what happens, in what order, with what data, under which rules, and toward what end.

It is easy to overlook because users do not see it directly. But that hidden architecture can be far more important than the model name on the stack.

Businesses that understand this early tend to make better patent decisions. They stop chasing broad AI language and start protecting the mechanics that drive customer value.

Wrapping It Up

Generative AI moves fast, but strong patent thinking does not start with speed alone. It starts with clarity. The companies that win here are not just the ones with access to good models. They are the ones that understand what part of their product truly creates value and can explain that value as a real system, not just a cool demo.
