Most founders know they should protect what they are building. The hard part is knowing what part of the system actually matters most. That gets tricky fast when your product lives in two places at once. Some of the work happens on the device. Some happens in the cloud. A camera may clean up images on the edge, then send data to a server for deeper analysis. A factory sensor may make split-second choices locally, while a cloud system trains the model, updates rules, and manages the whole fleet. A health device may process signals on-device for speed and privacy, but still rely on cloud logic for scoring, storage, and long-term learning.

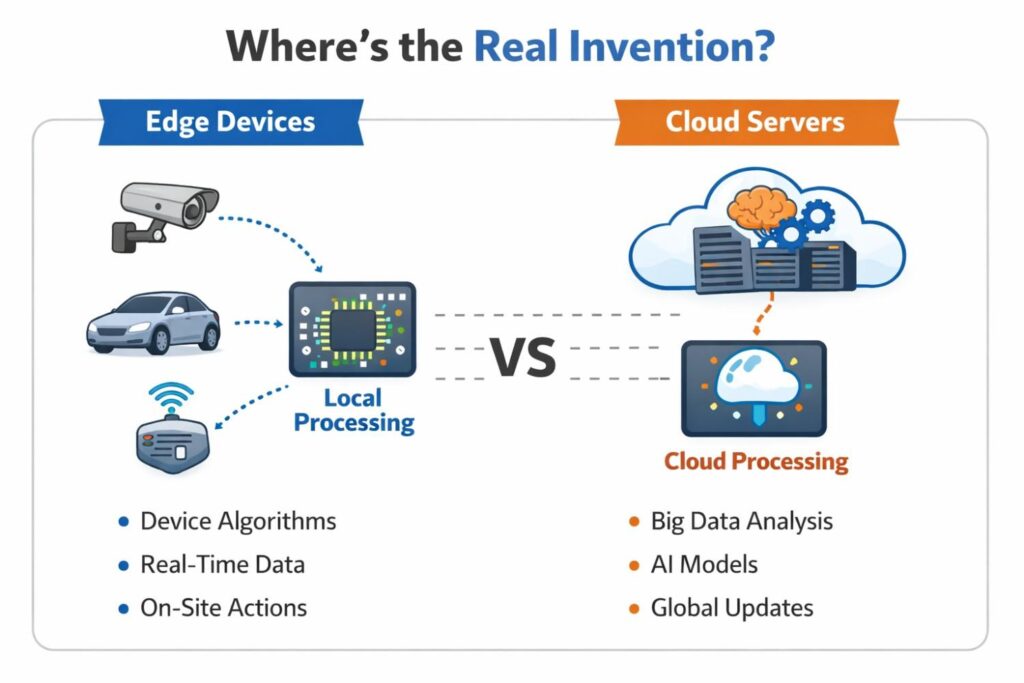

Where the Real Invention Lives in an Edge and Cloud System

In most modern products, the invention does not sit in one clean box. It is spread across the device, the network path, the cloud logic, and the rules that decide how all of them work together.

That is why many businesses miss the strongest part of their patent story. They look at the obvious feature and forget to protect the deeper system design that makes the feature work better than everything else in the market.

When you are trying to protect an edge and cloud system, the right question is not just what your product does. The better question is why your product can do it in a way that is faster, cheaper, safer, more private, more reliable, or more scalable than others.

That is usually where the real invention lives. It often hides in the split of work between the edge and the cloud, in the timing of decisions, in the way data moves, or in the system rules that keep performance high even when conditions change.

The invention is often in the split, not the parts

A lot of teams think about patents in pieces. They think about the device model. Then they think about the backend pipeline. Then they think about the mobile app or dashboard.

That approach feels neat, but it can cause a serious problem. It can make you protect each piece as if it stands alone, while the real value comes from how the pieces cooperate.

A business should take a step back and study the system as one living workflow. Ask what the edge handles first, what the cloud handles later, and what triggers a handoff.

If your product wins because the device filters noise before sending data, that is not just a device feature. If your cloud retrains based on field behavior and sends back tuned rules, that is not just a server feature.

The commercial value may come from that loop. That loop may be the thing that deserves protection.

The strongest claim idea may be hiding in a business constraint

Many inventions are born because a business had a hard limit. Maybe bandwidth was too expensive. Maybe users could not tolerate delay. Maybe privacy rules blocked raw data uploads.

Maybe battery life had to last for weeks. Maybe a factory floor had poor connectivity. These are not side details. These are often the reason the architecture took a special shape.

That matters because patentable value often comes from solving a real-world constraint in a clean and repeatable way. Businesses should document what constraint forced the design choice.

Then they should explain how the system solves it. This creates a stronger story than simply saying the product uses edge computing and cloud computing together.

Many products say that. Far fewer can explain the exact rule set that made the hybrid setup work under tough operating conditions.

The real value may be the operating rule, not the algorithm

Teams often focus too hard on the model itself. They think the special sauce is only the classifier, predictor, or optimization engine.

Sometimes that is true. But in many edge-cloud systems, the more defensible invention is the rule for when the model runs locally, when it escalates to the cloud, and how the system adapts when resources change.

That kind of logic is easy to overlook because it does not always look glamorous. It may feel like infrastructure work.

But from a business view, that infrastructure can be what gives you lower cloud bills, better uptime, stronger privacy, or a smoother user experience.

Those are real advantages. They affect margin, retention, and trust. They also make a product harder to copy if competitors only notice the visible feature and miss the decision framework under it.

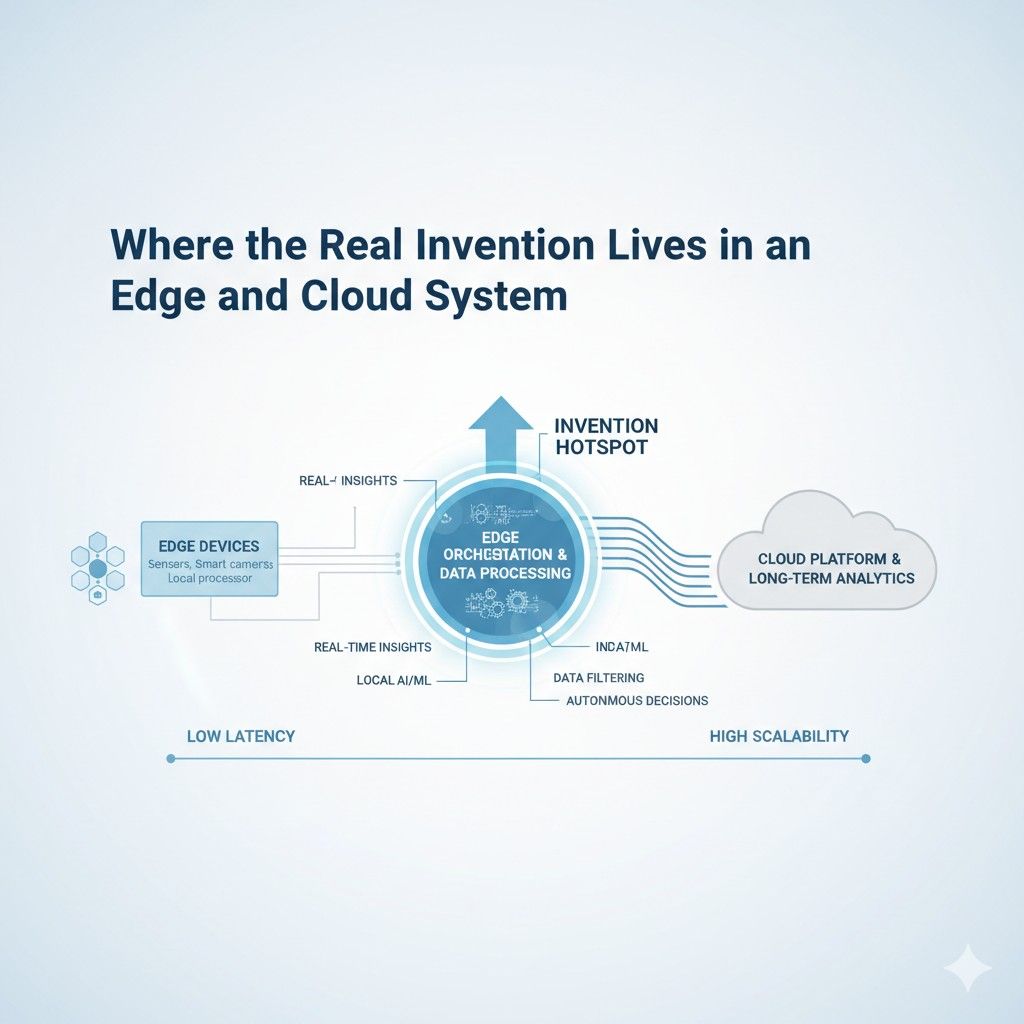

Why system boundaries create protectable value

Edge and cloud systems are full of boundaries. There is a boundary between raw data and processed data.

There is a boundary between local action and remote action. There is a boundary between urgent tasks and deferred tasks. There is a boundary between what gets stored for a second and what gets stored forever.

Each boundary is a chance to find invention. Businesses should look closely at what crosses each boundary, what stays on one side, and why. If your device turns a video stream into event markers before upload, that is a meaningful boundary.

If your system only sends summary signals unless a threshold event happens, that is a meaningful boundary. If your cloud only receives certain feature vectors instead of raw sensor data, that is a meaningful boundary too.

These choices are not just technical. They shape cost, privacy risk, latency, and product reliability. When those choices are central to business performance, they are often worth exploring for claims.

A useful test: what would a copycat need to reproduce?

A smart way to find the true invention is to imagine a copycat. If a competitor wanted to match your product outcome, what would they actually need to reproduce?

Would they only need your edge model? Probably not. Would they only need your cloud dashboard?

Probably not. In many cases, they would need the hidden coordination logic that tells the full system how to behave across different conditions.

That is the layer businesses should pay close attention to. It is often the layer that creates lock-in and differentiation.

If the answer is that a rival would need your update mechanism, your local fallback process, your device-side filtering rule, your cloud arbitration logic, or your edge-to-cloud escalation method, you may have found the real invention zone.

Founders should map the system from decision to decision

One of the most helpful exercises for a business is to stop mapping the system as boxes and arrows and start mapping it as decisions. What decision happens first?

What input triggers it? What result changes what happens next? What gets done locally because speed matters? What gets done remotely because scale matters? What is cached, retried, compressed, delayed, or discarded?

This way of thinking is powerful because patents are often stronger when they capture how a system behaves instead of only naming what components exist.

Many weak disclosures read like product diagrams. Stronger disclosures explain operational flow. They show how the system acts under normal conditions, poor conditions, and edge cases.

They reveal what the product does when bandwidth drops, when compute is limited, when the model confidence falls, or when privacy settings change.

That is the level where businesses can uncover claim language that reflects true commercial value.

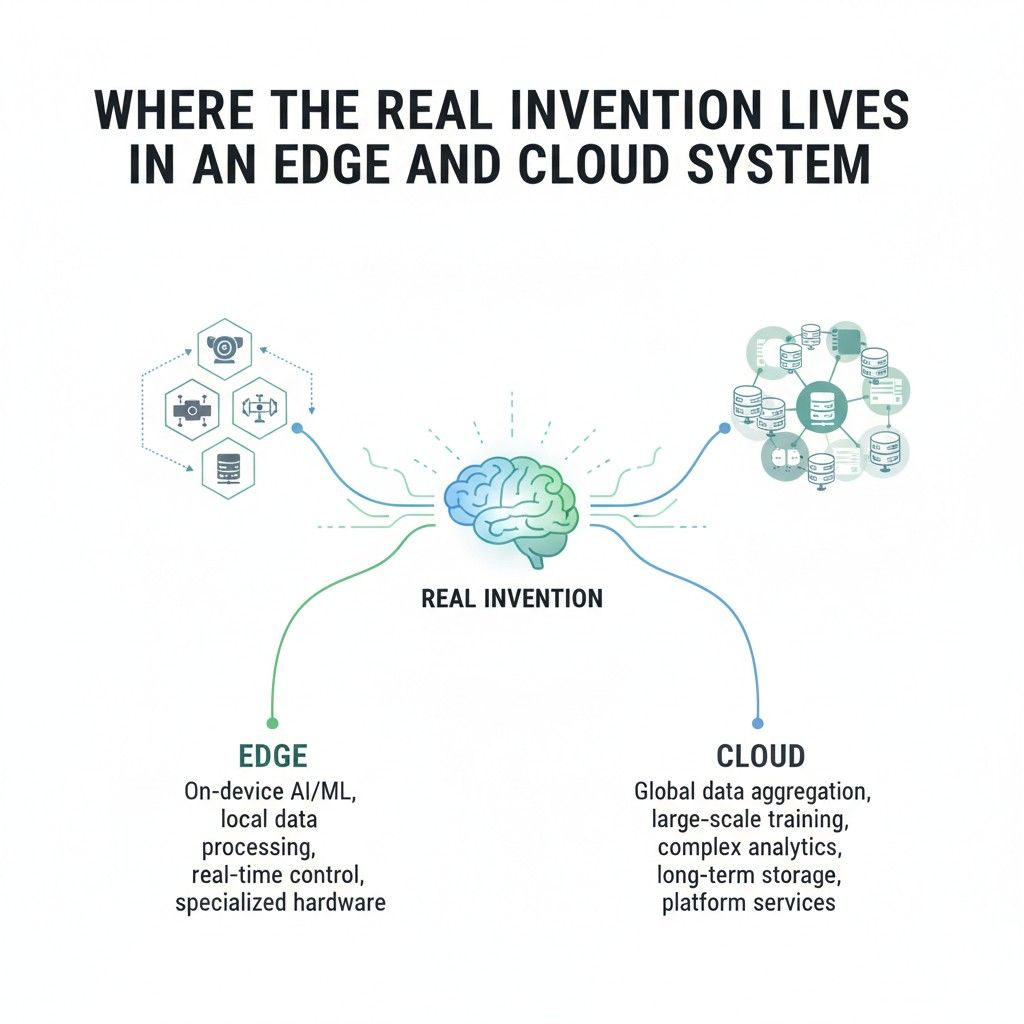

The edge is not always the invention

There is a common mistake in edge-heavy products. Teams assume the edge layer must be the star because edge computing sounds advanced. But sometimes the real invention is not at the device at all.

It may live in the cloud orchestration that manages thousands of devices. It may live in the way updates are tested and rolled out. It may live in the central policy engine that changes local behavior based on usage trends.

It may live in the learning loop that turns distributed device output into better future performance.

This matters for strategy. Businesses should avoid forcing the invention story into the edge just because that is the trendy part.

Good protection follows the actual value path. If the cloud side is where your margin, scalability, and control really come from, that deserves equal or greater attention.

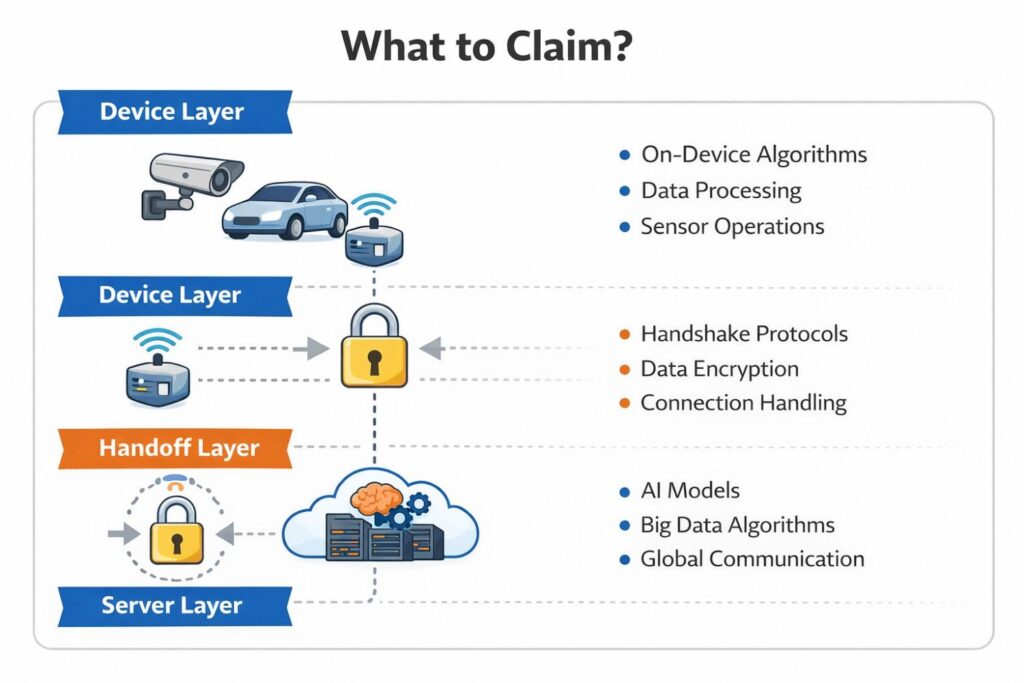

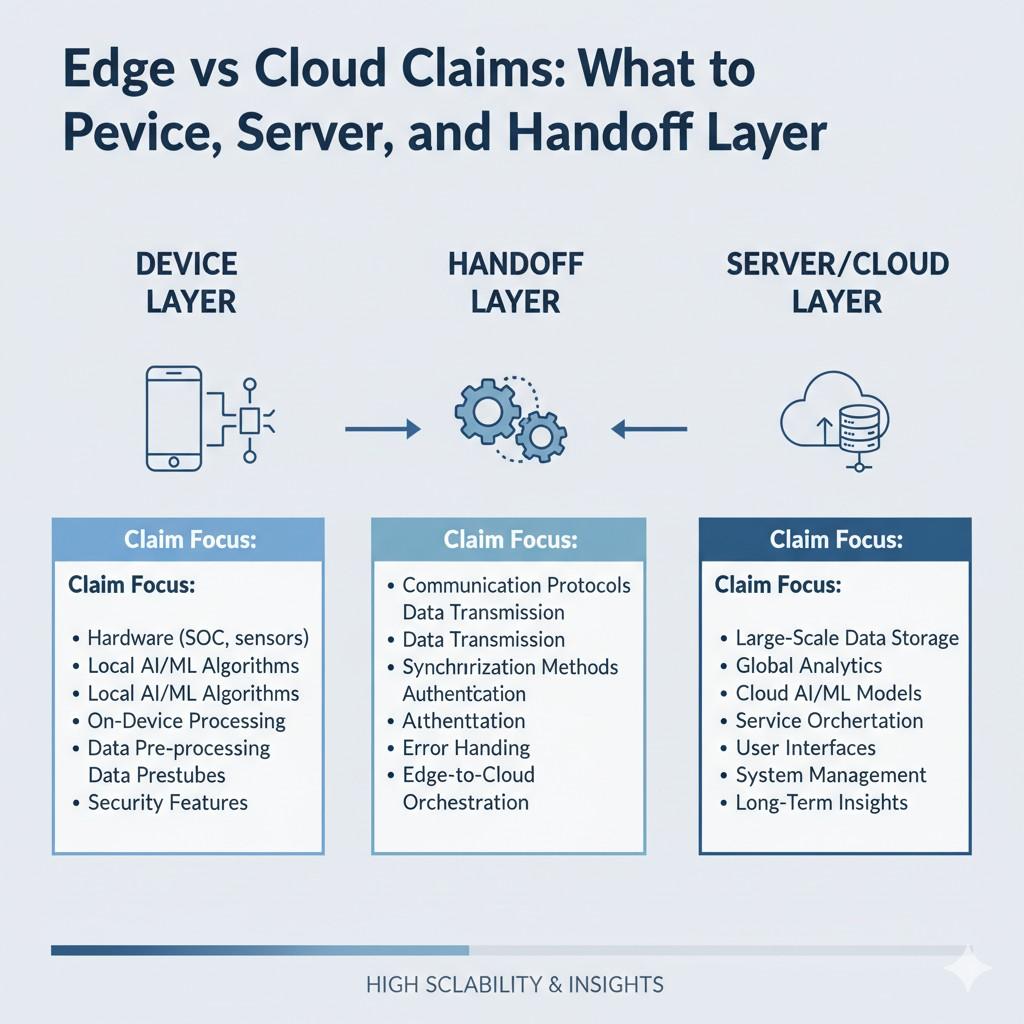

What to Claim at the Device, Server, and Handoff Layer

When a product runs across the edge and the cloud, claim strategy gets harder because the invention is not sitting in one place. A founder may look at the device and think that is the core.

Another person may look at the backend and think that is where the real value sits. In many cases, both views are partly right, but neither is complete on its own.

The better approach is to treat the system like a chain of linked decisions. The device layer, the server layer, and the handoff layer each hold different kinds of value.

If you only claim one layer, you may leave open an easy path for a competitor to copy the core result with a small design change.

The goal is to protect the parts that drive business value while also protecting the connection points that make the whole system work in a special way.

Start with what the user experiences

A strong claim strategy usually begins with a very simple question. What is the real outcome your product delivers in the field?

That outcome may be instant detection, lower delay, better privacy, lower data cost, stronger uptime, or more accurate action in changing conditions. Once that outcome is clear, you can work backward and ask which layer makes that outcome possible.

This matters because claims should not be built around random technical parts just because they sound advanced.

They should be shaped around the parts that create the result customers care about and competitors would want to copy. That is how technical work turns into business leverage.

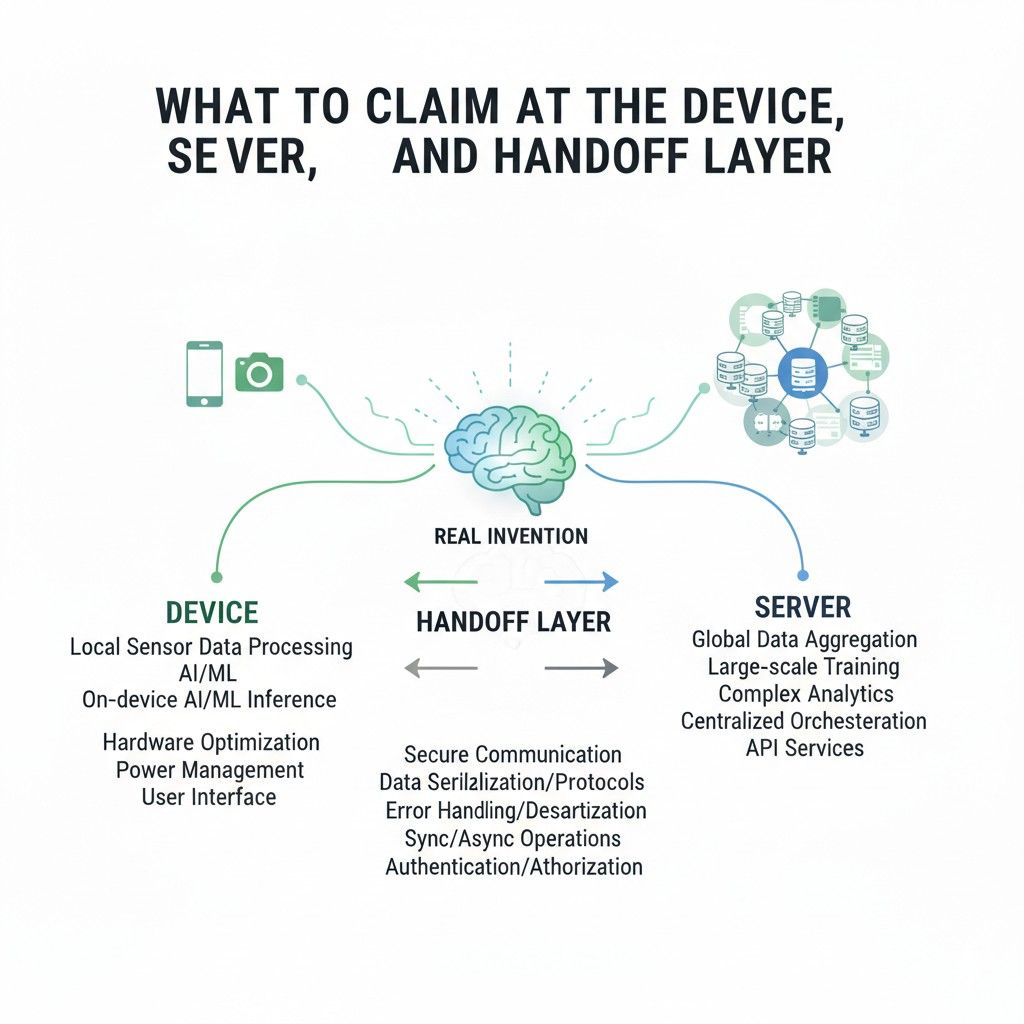

Claiming the device layer

The device layer is where local action happens. It includes what the endpoint, sensor, phone, machine, gateway, wearable, vehicle module, or embedded processor does before the cloud gets involved.

In some products, this is where speed, privacy, and resilience are won.

A lot of teams underclaim the device side because they think local logic is too small or too implementation-specific. That is often a mistake.

The device may be making first-pass decisions that dramatically change performance and cost. It may be shaping the data, reducing noise, selecting what matters, or making immediate actions possible when the network is weak or unavailable.

Claim the local processing that changes the economics

A useful way to think about the device layer is to ask whether the local behavior changes the economics of the whole system.

If on-device processing cuts cloud usage, reduces bandwidth, extends battery life, or lowers storage load, that is not just engineering detail. That is a strategic design choice.

A business should look closely at any local step that removes a large amount of unnecessary data, reduces repeat transmissions, or prevents wasteful cloud calls.

Those choices often matter more over time than teams first realize. They affect margin, scale, and reliability. They may also make the product much harder to replace with a simple copy.

Claim the local decision, not just the local component

Many weak claim sets talk about a device as if it is just sitting there collecting information. That misses the more valuable angle. The more useful thing to claim is often the local decision process.

That means focusing on what the device is deciding, when it decides, based on what signal, and what happens next. The invention may be that the device computes a confidence score and acts locally above a threshold.

It may be that the device converts continuous input into compact events before sending anything. It may be that the device runs one path when resources are healthy and another when power or connectivity drops.

Those kinds of operational rules are often more useful than a plain statement that the device performs processing.

Claim how the device behaves under real-world constraints

The strongest device-side inventions often show up when conditions are bad, not when they are perfect.

Businesses should pay attention to how the device acts when bandwidth drops, when compute is limited, when a battery is low, when the environment is noisy, or when privacy settings block a full upload.

Those fallback or adaptive behaviors can be highly valuable. They are often what makes a product usable in real customer settings.

A device that continues delivering a useful result during poor connectivity may be much more important commercially than a device that performs well only in lab conditions. If that behavior comes from a specific local process, that process deserves careful claim attention.

Claim preprocessing with purpose

Not all preprocessing is worth the same attention. Generic cleanup steps may not carry much weight on their own. But preprocessing that is tightly connected to a business outcome can be important.

For example, if the device transforms raw sensor data into a reduced form that preserves action-relevant information while cutting transfer cost, that is meaningful.

If it selects only a subset of signal windows that meet a trigger pattern tied to later cloud review, that is meaningful too. If it strips sensitive information locally before sending a safer payload upstream, that can matter a great deal.

The key is not to claim preprocessing as a vague idea. The key is to tie it to a specific role in the larger system.

A practical device-claim test

A useful internal test is this: if a rival copied your backend but skipped your device-side rule, would they still get the same business result? If the answer is no, then that local rule may deserve its own protection path.

This simple test helps teams avoid the mistake of treating edge logic like support code when it is actually central to the value story.

Claiming the server layer

The server layer is where scale, coordination, training, orchestration, long-term analysis, and cross-device learning often happen.

Many businesses assume cloud claims are naturally broad because the cloud feels central. That is not always true. Claims that simply say a server receives data and processes it are usually not where the real strength lies.

The more strategic path is to identify what the server is doing that changes how the full system behaves across many devices, many sessions, or many conditions.

Server value often comes from coordination and refinement, not just computation.

Claim the orchestration logic that shapes the fleet

For many businesses, the cloud is valuable because it manages many edge nodes together.

It may compare behavior across devices, identify drift, push updates, assign tasks, or decide how policy changes should roll out. That orchestration can be a major source of defensible value.

A company should look for server-side rules that determine how devices are grouped, how updates are selected, how edge behavior is adjusted, and how the system learns from distributed outcomes.

Those patterns can become very important because they are hard to rebuild from the outside. A competitor might see the product output but still miss the central coordination rules that make the full deployment work at scale.

Claim the learning loop, not just the server action

A single server action may not be the whole invention. The stronger angle may be the full loop in which the server receives field data, updates a model or policy, and sends refined logic back to the device.

That loop can be where improvement happens over time.

For businesses, this matters because repeated improvement is one of the strongest competitive advantages in edge-cloud systems.

If your product gets better from distributed usage in a controlled and efficient way, that system behavior deserves careful attention.

It may support claims directed to update generation, parameter selection, deployment criteria, or the process used to adapt edge behavior based on cloud-side analysis.

Claim data handling choices that support scale or trust

On the server side, data handling is often where value hides. A cloud system may only store event summaries instead of raw data. It may generate aggregate representations for model refinement.

It may separate sensitive content from operational metadata. It may use time-based or confidence-based rules to decide what becomes part of long-term learning.

These are not just backend details.

They can define whether a business can scale affordably, meet customer expectations, or serve regulated markets. When those server-side choices are tightly connected to the product’s advantage, they should not be left buried in technical notes.

Claim the policy engine if that is where control lives

In some products, the real cloud-side invention is not a model or a database flow. It is a policy engine.

That policy engine may decide what can run locally, what must escalate, what gets logged, what gets shared, what gets cached, and what changes under different conditions.

That kind of logic can be highly strategic because it gives the company control over cost, privacy, performance, and user experience from a central point.

If your business depends on cloud-issued policies that shape device behavior in a specific way, that is worth examining closely.

A practical server-claim test

Ask this question internally: if a competitor copied the device behavior but did not copy your server-side coordination logic, would they scale the same way, improve the same way, or manage risk the same way?

If the answer is no, then your server layer may hold much more claim value than it first appears.

Claiming the handoff layer

The handoff layer is where many of the strongest claims live because this is where the edge and cloud stop being separate pieces and start acting like one system.

The handoff layer includes what is sent, when it is sent, why it is sent, how it is formatted, what triggers the send, and what happens after it arrives.

This is the layer teams most often overlook. They claim the edge model. They claim the cloud analysis.

But they skip the transfer logic, even though that logic may be the very reason the system is practical, fast, cheap, and hard to copy.

Claim what crosses the boundary

One of the first things to study is the payload itself. Does the system send raw data, selected features, compressed summaries, event markers, confidence values, model deltas, or only exception cases?

The answer matters because what crosses the boundary often shapes the whole economics of the system.

A company should not just say that data is transmitted. It should look at whether the specific content of the transmission is tied to speed, privacy, cost, or reliability.

A handoff that sends a compact, purpose-built representation instead of the full local state may be doing much more work than it seems.

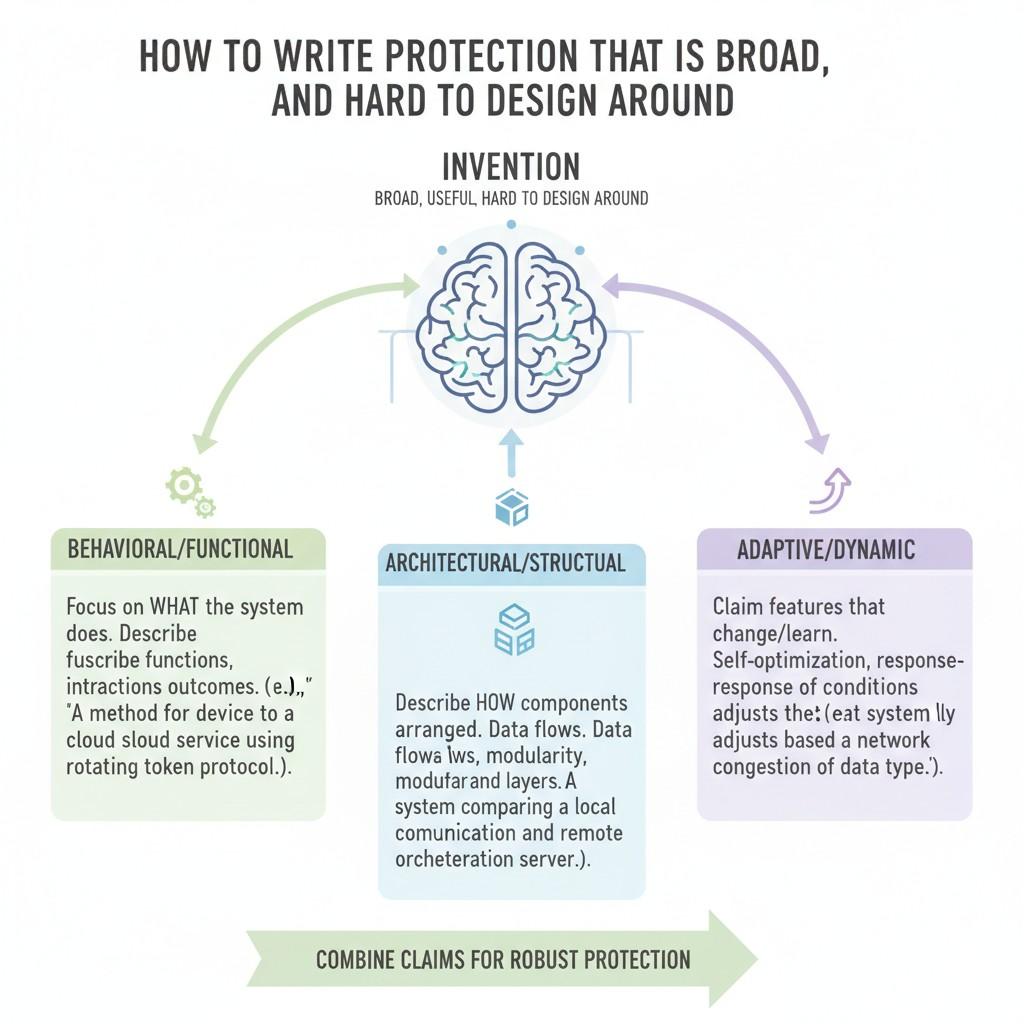

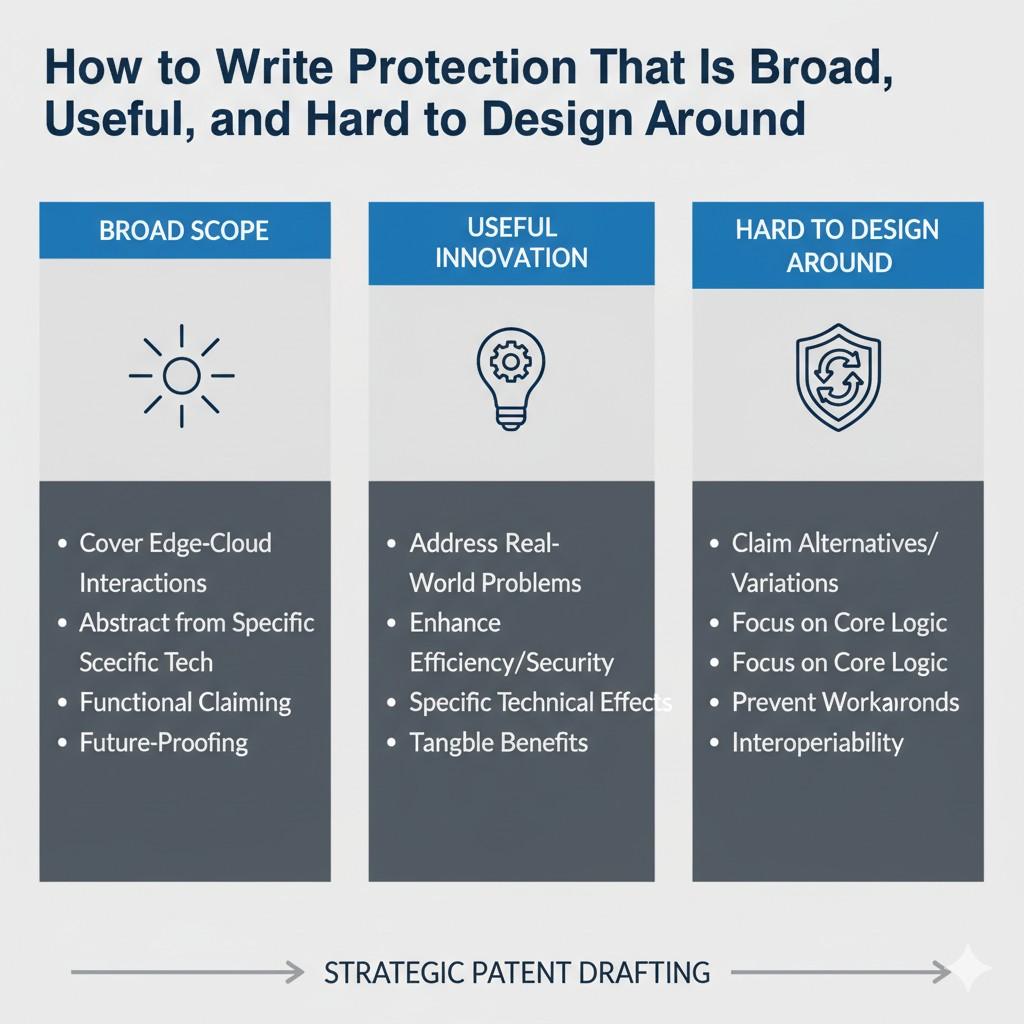

How to Write Protection That Is Broad, Useful, and Hard to Design Around

The hardest part of patent strategy is not spotting that your system is valuable. The hardest part is turning that value into protection that still matters after the market shifts, the product evolves, and competitors start looking for workarounds.

That is where many businesses lose ground. They describe what they built too narrowly, tie the language too closely to one version of the product, or focus so much on one implementation that a rival can step around the claim with small changes.

When you are dealing with edge and cloud systems, this problem gets even bigger. The architecture is flexible by nature. Work can move from the device to the server. A handoff can happen earlier or later. A local rule can become a cloud rule.

A model can be replaced with a policy engine. A direct transfer can become a staged transfer. If protection is written too tightly, a competitor may preserve the same business value while changing just enough of the technical path to avoid the claim.

That is why strong protection needs to do three things at once. It needs to be broad enough to cover meaningful variations. It needs to be useful enough to map to how competitors actually build.

And it needs to be framed in a way that makes design-arounds harder, not easier. The goal is not to write vague language. The goal is to capture the true operating idea in a way that survives product changes and market copycats.

Start with the business advantage, not the product description

A lot of weak protection begins with a simple mistake. The team starts by describing the product as it exists today rather than the advantage the system creates.

That sounds harmless, but it can shrink the whole strategy from the start. If the write-up follows a current release too closely, it may miss the deeper idea that would still matter even if the code, hardware, or deployment pattern changes next quarter.

A better move is to ask what the system really achieves in a durable sense. Maybe it reduces cloud load without hurting quality.

Maybe it keeps private data local while still enabling central learning. Maybe it makes fast local decisions but reserves heavy analysis for uncertain cases.

Maybe it keeps service quality stable even when networks are unreliable. Those are business-facing outcomes. They help point to the real operating principle behind the current implementation.

When the protection starts from that principle, it becomes easier to write claims that map to future versions of the product and to likely competitor versions too.

That is how a company protects the value of the invention rather than just the shape of one release.

Write around the system rule that causes the advantage

Once the business advantage is clear, the next question is what system rule creates it. In strong patent work, the real target is often a decision rule, routing rule, transformation rule, or coordination rule rather than a named component.

This matters because components change quickly. A gateway may become a module. A server may become a distributed service. A local engine may move into firmware.

A classifier may become a ranking model or a rule set. But the system rule may stay the same.

The product may still decide locally under one condition, escalate remotely under another, and feed back revised behavior after central analysis. That rule is often the more stable and useful target.

A business should look for the mechanism that makes the edge-cloud split valuable.

It may be a rule about when data is compressed, when only exceptions are sent, when uncertainty triggers cloud review, when updates are issued, or how the system adapts to resource conditions. If that rule drives the product’s advantage, the protection should be shaped around it.

Avoid locking the claim to one technical label

One very common mistake is using labels that are too specific. Teams often write in the vocabulary of the current build.

They refer to a certain sensor type, one model class, one networking method, one storage structure, or one update path. That language may feel precise, but it can quietly narrow the claim in ways that help competitors.

Broad and useful protection usually comes from describing what a part does in the system rather than naming it too tightly.

The aim is not to be fuzzy. The aim is to avoid tying the invention to a term that a rival can replace while keeping the same core behavior.

If your value comes from a local process generating a reduced representation for remote review, that may matter more than whether the reduced representation is called a feature vector, event packet, or summary object in the current codebase.

If your value comes from cloud-issued behavior updates, that may matter more than whether the update is called a policy, threshold set, parameter group, or instruction bundle.

Strong protection often leaves room for these variations while still holding onto the real operating idea.

Capture function, timing, and condition together

Claims become much more useful when they do not just say what happens, but also when it happens and under what condition.

This is especially important in edge-cloud systems because timing and triggers often define the invention more than the raw operation itself.

A device that processes data locally is not very informative by itself.

But a device that performs a first-stage evaluation locally in response to a detected event, and only sends a reduced representation when a confidence value falls below a threshold, is describing a much more meaningful system behavior.

That kind of framing is harder to design around because the value often sits in the relationship between the step, the timing, and the condition.

Businesses should train themselves to describe inventions in this more operational way.

Ask what happened, what caused it, what information shaped the choice, and what happened next. Those links often reveal the claim language that carries the most strategic value.

Why operational detail matters more than buzzwords

Many technical teams naturally fall back on broad labels like edge inference, cloud processing, adaptive routing, or intelligent orchestration.

Those phrases may be useful for product marketing, but they are not enough on their own for strong protection. What matters is the actual operational structure.

When teams explain the trigger, the path selection, the fallback, the data form, and the result, they make it easier to protect the idea at the level where competitors will actually copy it. That is the level that matters most.

Protect the full loop, not only one moment

A lot of inventions are described as isolated events even though their value comes from a repeated loop.

That is a major missed opportunity. In many edge-cloud systems, the product gets stronger because local action, remote review, and return updates work together over time.

A business should look closely at whether the real invention is a cycle rather than a single step. The edge may detect and filter. The cloud may analyze across devices.

The cloud may then send back a refined threshold or policy. The edge may use that new behavior on the next pass. That closed loop can be far more valuable than any one stage by itself.

Protection that reflects the loop can be much harder to design around because a competitor would need to change more than one isolated element.

They would need to change the structure of the system improvement process itself. That raises the cost of imitation.

Include narrower fallbacks around the broad core

Broad protection is important, but broad alone is not enough. A smart strategy usually pairs a broader framing of the core invention with narrower versions that capture especially useful implementations.

This creates a stronger fence line. If one path is challenged or avoided, other paths may still matter.

The practical lesson for businesses is simple. Do not stop at the highest-level description. Also preserve the more concrete versions that are commercially meaningful.

If the broad idea is selective remote escalation, the narrower forms may cover escalation based on confidence, latency sensitivity, privacy score, battery state, or event type.

If the broad idea is local generation of a reduced transmission form, the narrower forms may cover specific ways that reduced form is generated or used.

This layered approach makes protection more useful because it mirrors how products are built in reality. Systems have a general logic and then specific modes. Good patent strategy captures both.

Write with competitor movement in mind

A strong claim is not just a description of your invention. It is also a forecast of how competitors may try to copy the result while avoiding the exact structure of your current product.

That means good writing requires a little strategic imagination.

A business should ask where a rival is most likely to move the line. Would they move a local classification step into the cloud? Would they shift a cloud threshold rule onto the device?

Would they replace continuous streaming with event-triggered sync? Would they use summaries instead of raw uploads? Would they split one action into two smaller ones to argue they are different?

These are not legal side notes. They are central to writing useful protection. When teams think in advance about those moves, they are more likely to describe the invention at the right level.

They stop writing claims that can be avoided by moving one box in an architecture diagram.

Protect the boundary shifts, because that is where redesign happens

In edge-cloud systems, one of the easiest ways to work around narrow protection is to shift where a step happens.

A competitor may keep the same outcome while changing whether the step happens on-device, at a gateway, in a near-edge service, or in a central backend.

That is why protection needs to look beyond the exact location of the step when the real value is in the role the step plays.

This does not mean every claim should ignore architecture. It means the strategy should account for role-preserving shifts.

If the valuable idea is selective escalation of uncertain cases for further analysis, that concept may matter even if the uncertain-case check is done in a different compute layer.

If the valuable idea is local transformation into a privacy-preserving representation before wider processing, the protection should think carefully about how to capture that even if the transformation point moves slightly.

Businesses that understand this write stronger protection because they are not trapped by the layout of the first version of the system.

Use the language of outcomes and relationships

Claims become more durable when they emphasize the relationships between system parts and the outcomes produced.

A lot of narrow writing happens because teams describe isolated mechanics without saying how those mechanics interact to create value.

A stronger approach is to connect the actions. Explain that one process generates a reduced form that enables a second process to perform remote evaluation with lower transmission cost.

Explain that a local rule determines whether a remote resource is engaged. Explain that a cloud-generated update changes later local operation. Explain that a fallback path preserves a defined level of service when the normal handoff path is unavailable.

Those relationships often make the invention clearer and more defensible. They show that the system is not just a pile of parts. It is a coordinated method for producing a useful result.

Relationship-based writing often travels better across product changes

This is especially helpful for startups because products move fast. The more the protection is built around relationships and system roles, the more likely it is to stay relevant as implementation details evolve.

That does not solve everything, but it can greatly improve the shelf life of the work.

Do not confuse broad with vague

Many teams worry that if they avoid narrow labels, the protection will become soft and empty. That is a fair concern, but the answer is not to over-specify everything. The answer is to be broad in the right way.

Useful breadth comes from clearly describing the operating idea without boxing it into unnecessary details. Vague writing, by contrast, skips the real structure and hides behind general terms.

Broad and clear is strong. Broad and hand-wavy is weak.

A good test is whether someone reading the description can understand how the system behaves and why it matters, even if the wording leaves room for different implementations.

If the answer is yes, that is usually a better place to be than a write-up that is packed with product-specific labels but misses the deeper rule.

Anchor the write-up in measurable business impact

Protection becomes more strategic when it connects to outcomes the business actually cares about.

That may include lower compute spend, lower data transfer cost, lower failure rates, faster response time, stronger privacy posture, or better deployment reliability. These outcomes help teams decide what is worth emphasizing and what is not.

A business should identify where the invention changes economics or customer value in a measurable way. Then the technical story should reflect that.

This does not mean stuffing metrics into every sentence. It means making sure the write-up is centered on the design choices that move those real-world results.

That approach helps in two ways. It improves internal judgment about what deserves protection. It also makes the patent effort more aligned with strategy, which is where the highest value usually comes from.

Wrapping It Up

When you build across the edge and the cloud, the patent question is not just what your product does. The real question is what part of the system gives you your edge in the market and keeps others from catching up too easily. That is the part worth protecting with care. A lot of companies describe the visible feature and stop there. That is rarely enough. In edge-cloud systems, the deeper value often lives in how work is divided, how decisions move across the system, and how the whole setup keeps delivering results under real-world limits.

Leave a Reply