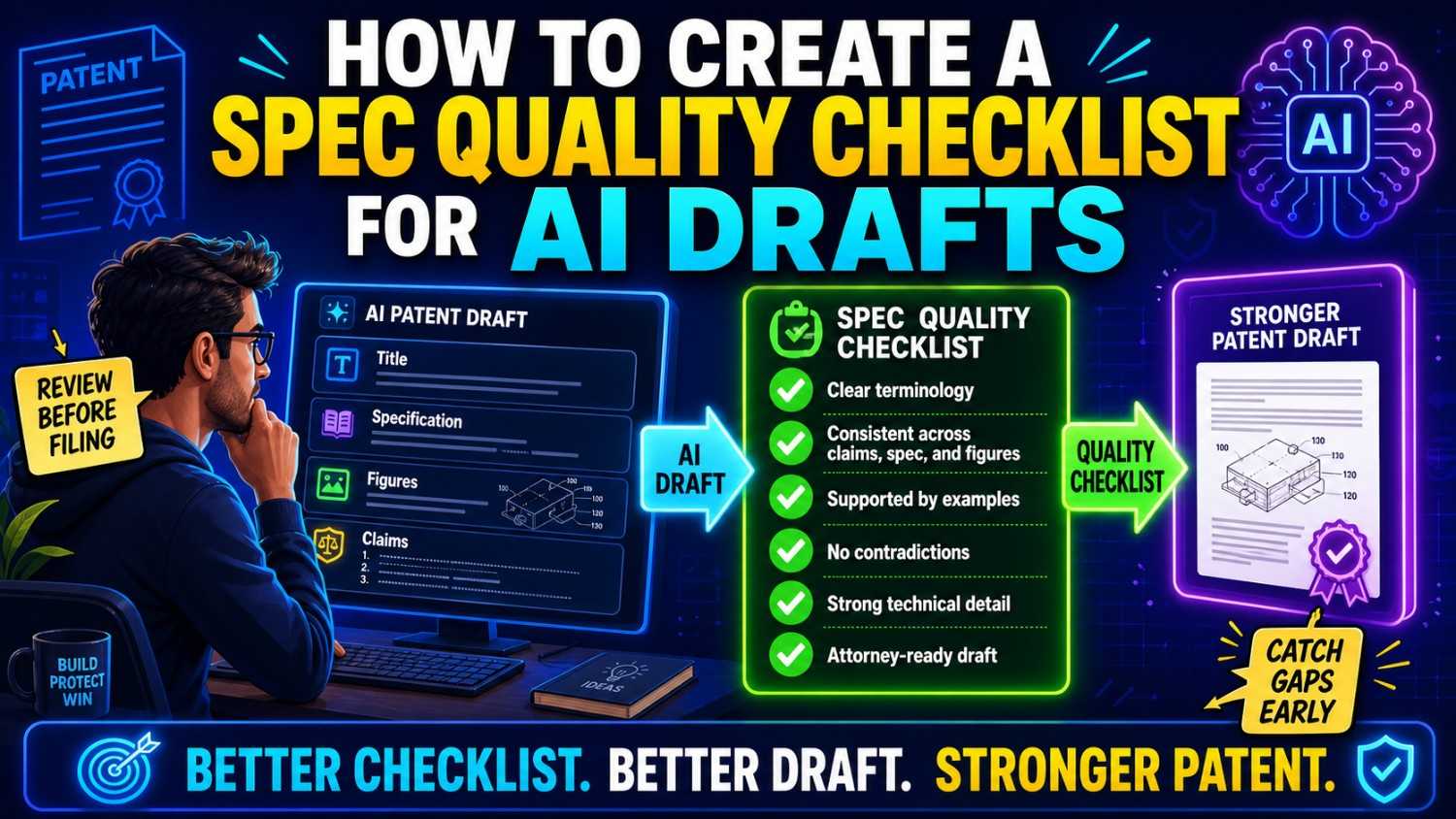

AI can help you draft a patent spec faster. But a fast spec is not always a strong spec.

A strong spec should be clear, true, complete, and easy to connect to the claims and figures. This guide shows how to build a simple quality checklist so your team can review AI-generated patent drafts before small problems become costly ones.

PowerPatent helps startups turn code, models, and technical ideas into stronger patent filings with smart software and real patent attorney oversight. You can see how it works here: https://powerpatent.com/how-it-works

Why AI patent specs need a checklist

AI can write a lot of text quickly.

That is useful.

It is also risky.

A patent spec is not just a long description. It is the part of the patent application that explains the invention. It gives the details. It supports the claims. It helps future readers understand what was built, how it works, and what versions may be possible.

If the spec is weak, the whole filing can suffer.

An AI-generated spec may look polished but still have hidden problems. It may use the wrong names. It may add features that do not exist. It may leave out the real invention. It may describe the product too narrowly. It may include broad language with no support. It may say one thing in the summary and another thing in the detailed section.

These issues are easy to miss because the writing often sounds confident.

That is why a checklist matters.

A checklist gives your team a repeatable way to review the draft. It keeps the review from becoming a random read-through. It helps founders, engineers, and patent attorneys focus on the parts that matter.

Startups need this because time is tight. You do not want to spend days arguing over every sentence. You want a clear way to spot the biggest risks fast.

A good spec quality checklist helps you answer one key question:

Is this draft strong enough to support a real patent filing?

The answer should come from structure, not guesswork.

The checklist should protect the invention story

Before building the checklist, understand the goal.

The goal is not to make the spec sound more legal.

The goal is to make the invention clear and well supported.

A good patent spec tells one strong story. It says what problem the invention solves. It explains the technical solution. It shows the key parts. It describes how the parts work together. It gives examples. It leaves room for useful versions without becoming vague.

AI can help draft that story, but it can also drift.

It may turn your invention into a generic product description. It may focus on the wrong feature. It may give too much attention to a dashboard and too little attention to the model pipeline. It may write about a cloud server even when the core invention runs on device. It may treat an optional feature like a required one.

Your checklist should stop that drift.

Every item on the checklist should help protect the invention story.

Ask yourself:

Does the draft describe the real invention?

Does it support the claims we want?

Does it show how the invention works?

Does it avoid fake details?

Does it leave room for future versions?

Does it stay clear enough for a founder, engineer, attorney, examiner, investor, or future buyer to understand?

That is the mindset.

Start with a one-page invention source of truth

A spec quality checklist works best when you have something to check against.

That “something” should be a one-page invention source of truth.

This is not a legal document. It is a short, plain-language summary of the invention.

It should say what the problem is, what the invention does, what parts are involved, what data or signals move through the system, what output is created, what action happens, what is new, what is optional, and what should not be included.

For example, say your startup built an AI tool that predicts failure in factory machines.

Your source of truth might say:

The problem is that current systems often detect machine failure too late.

The invention uses machine sensor data and a trained model to create a future failure risk score.

The score is used to change a maintenance schedule before the machine fails.

The system may run on a local device, edge server, or cloud server.

The system may use vibration data, temperature data, motor current data, or other machine data.

The system does not require GPS.

The dashboard is optional.

The key idea is using the predicted future failure risk to change the maintenance schedule before failure.

This page becomes the anchor.

When the AI draft says the system always runs in the cloud, you can check the source of truth and see that cloud is only one option. When the draft adds GPS, you can remove it. When the draft spends five pages on a dashboard but barely explains the risk score, you know the focus is wrong.

Without a source of truth, your checklist will be weaker.

You may notice grammar issues but miss invention issues.

The source of truth lets you check meaning.
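If your team keeps the source of truth in a structured form, some of these checks can be partly automated. The sketch below is only an illustration: the `SourceOfTruth` layout and the `find_excluded_mentions` helper are assumed names, not part of any real tool, and the matching is a plain substring search that a human should still review.

```python
from dataclasses import dataclass, field


@dataclass
class SourceOfTruth:
    """One-page invention summary in structured form (hypothetical layout)."""
    problem: str
    core_idea: str
    required: list[str] = field(default_factory=list)
    optional: list[str] = field(default_factory=list)
    excluded: list[str] = field(default_factory=list)


def find_excluded_mentions(draft: str, truth: SourceOfTruth) -> list[str]:
    """Return excluded items that the draft mentions anyway (substring match)."""
    lowered = draft.lower()
    return [item for item in truth.excluded if item.lower() in lowered]


# Values taken from the factory-machine example above.
truth = SourceOfTruth(
    problem="Current systems often detect machine failure too late.",
    core_idea=(
        "Use a predicted future failure risk score to change the "
        "maintenance schedule before failure."
    ),
    required=["sensor data", "trained model", "failure risk score"],
    optional=["dashboard", "local device", "edge server", "cloud server"],
    excluded=["GPS"],
)

draft = "The system receives GPS coordinates and sensor data from the machine."
print(find_excluded_mentions(draft, truth))  # ['GPS']
```

A match does not prove the draft is wrong. It only points the reviewer to a sentence worth reading against the source of truth.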

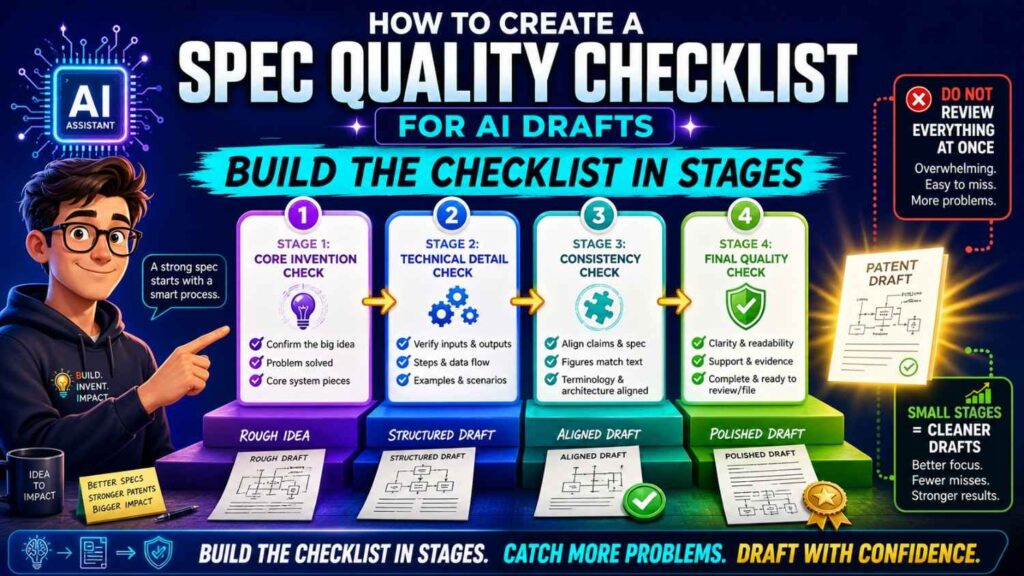

Build the checklist in stages

A spec quality checklist should not be one giant wall of tasks.

That makes review harder.

Instead, group the checklist into stages.

Start with invention fit. Then check, in order: technical truth, support for claims, consistency, examples and alternatives, figures, risky language, and final filing readiness.

This staged approach helps because each pass has one job.

A founder can focus on business fit.

An engineer can focus on technical truth.

A patent attorney can focus on claim support and legal quality.

AI can help run mechanical checks, such as finding term drift or missing figure references.

The key is to avoid asking one person to catch everything at once.

Patent specs are long. AI drafts can be even longer because they may include filler. A staged checklist keeps review focused.
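One simple way to track the stages is a short ordered list with one reviewer per stage. The sketch below is a minimal example, not a required format. The stage names come from the list above, but the reviewer assigned to each stage is an assumption that your team should adjust.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    reviewer: str  # assumed assignment; change to fit your team
    done: bool = False


STAGES = [
    Stage("invention fit", "founder"),
    Stage("technical truth", "engineer"),
    Stage("support for claims", "patent attorney"),
    Stage("consistency", "AI-assisted check"),
    Stage("examples and alternatives", "engineer"),
    Stage("figures", "AI-assisted check"),
    Stage("risky language", "patent attorney"),
    Stage("final filing readiness", "patent attorney"),
]


def next_stage(stages):
    """Return the first stage that is not done, or None if all are done."""
    for stage in stages:
        if not stage.done:
            return stage
    return None


current = next_stage(STAGES)
print(f"Next pass: {current.name} (reviewer: {current.reviewer})")
```

Keeping the order fixed means no one starts polishing wording before the draft has passed the invention fit pass.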

Checklist item 1: Does the draft match the real invention?

This is the first and most important check.

Before looking at style, grammar, or formatting, ask whether the spec describes the real invention.

Read the title, abstract, summary, and first detailed section.

Then ask:

Is this what we built?

Is this what we want to protect?

Is the core technical idea clear?

Does the draft focus on the real moat?

Does the draft spend too much time on side features?

Does the draft describe a generic version instead of our specific improvement?

This check should be done by a founder or inventor.

Only the people close to the product can say whether the draft has drifted from the invention.

For example, your real invention may be a new way to reduce model update size on edge devices. But the AI draft may focus on user dashboards, model training, and cloud analytics. Those may be related, but they may not be the moat.

A good checklist should force this question early:

What is the one thing this patent should protect?

If the draft cannot answer that clearly, the spec is not ready.

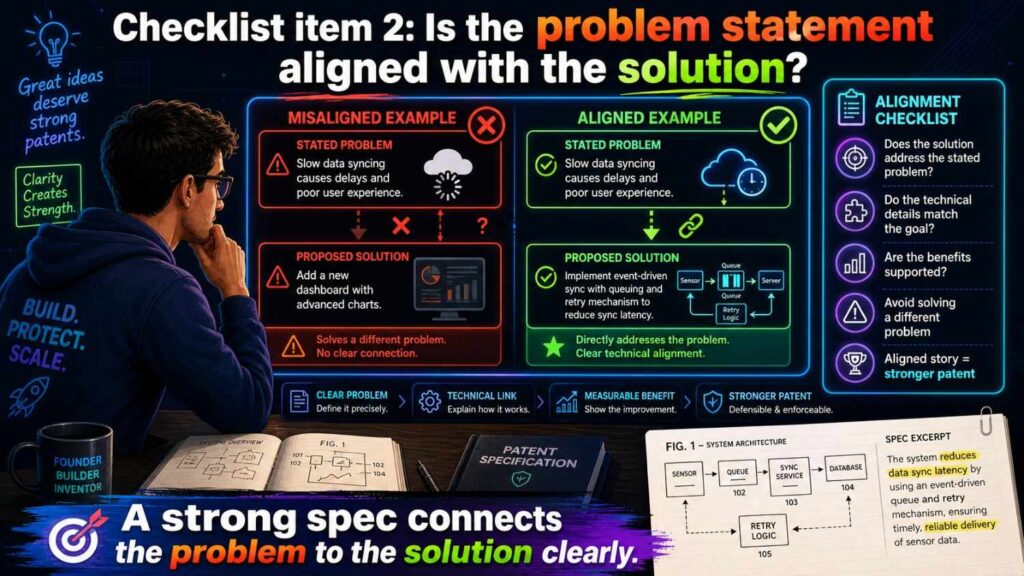

Checklist item 2: Is the problem statement aligned with the solution?

A strong spec should set up the problem and then explain the solution.

AI often writes broad problem statements that sound official but do not fit.

For example, your invention may solve slow response times in edge robotics. But the AI background may talk about poor user interfaces, low customer engagement, or generic data management.

That mismatch weakens the draft.

The problem does not need to be long. It needs to be true.

If the invention reduces delay, the spec should explain why delay matters.

If the invention improves model updates, the spec should explain why updates are hard.

If the invention improves sensor fusion, the spec should explain the limits of using one sensor alone.

If the invention protects privacy, the spec should explain what data movement creates risk.

This does not mean the spec should attack all prior systems or make sweeping statements about the market. It should stay careful. But it should give the reader enough context to understand why the invention matters.

The checklist question is simple:

Does the problem point directly to the solution described in the spec?

If not, rewrite the problem section.

Checklist item 3: Does the spec explain the technical solution in plain words?

AI can write formal text that hides the actual invention.

A good spec should include a clear plain-language explanation.

It does not need to sound casual. It should be simple and direct.

For example:

“The system receives sensor data from a machine, creates a failure risk score using a trained model, and changes a maintenance schedule based on the score.”

That is easy to understand.

Compare that with:

“The system implements an intelligent processing framework configured to perform dynamic operational optimization based on multidimensional information streams.”

That sounds fancy, but it says less.

A strong patent spec can include formal language, but the core solution should still be clear.

Your checklist should ask:

Can a technical founder explain the invention after reading the summary?

Can an engineer identify the inputs, process, outputs, and action?

Can a patent attorney see what the claims should focus on?

If not, the spec may need a clearer overview.

Checklist item 4: Are the key terms defined and used consistently?

Term consistency is one of the biggest spec quality issues in AI drafts.

AI may call the same thing by many names.

A “risk model” may become a “prediction engine,” “AI model,” “classification unit,” “analytics module,” and “scoring service.”

A “failure risk score” may become a “risk value,” “health score,” “confidence score,” “machine score,” and “alert level.”

This creates confusion.

A strong spec should use one main name for each key part.

If the spec uses more than one name, it should explain the relationship.

For example:

“In some examples, the failure risk score may also be referred to as a risk value.”

But too many alternate names can still make the draft harder to read.

Build a term table before review.

It can be simple.

Risk model means the model that creates the failure risk score.

Failure risk score means a value that shows predicted risk of future machine failure.

Maintenance scheduler means the component that changes planned maintenance based on the score.

Sensor data means data from one or more machine sensors.

Then search the draft for other names.

If the draft uses “health score” when it means “failure risk score,” decide whether to change it or explain it.

This check is especially important for claims. If the claims use “failure risk score,” the spec should support that exact term.
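The search for other names is a mechanical task, so it is a good fit for a small script. The sketch below assumes a hand-built term table and a draft held as plain text. The `TERM_TABLE` contents are taken from the examples above, and the helper name `find_term_drift` is hypothetical.

```python
import re

# Preferred term -> alternate names that may appear in an AI draft.
# The list is partial; extend it as you find new variants.
TERM_TABLE = {
    "risk model": ["prediction engine", "classification unit",
                   "analytics module", "scoring service"],
    "failure risk score": ["risk value", "health score",
                           "machine score", "alert level"],
}


def find_term_drift(draft, term_table):
    """Map each preferred term to the alternate names found in the draft."""
    drift = {}
    for preferred, alternates in term_table.items():
        hits = {}
        for alt in alternates:
            pattern = r"\b" + re.escape(alt) + r"\b"
            count = len(re.findall(pattern, draft, flags=re.IGNORECASE))
            if count:
                hits[alt] = count
        if hits:
            drift[preferred] = hits
    return drift


draft = (
    "The risk model generates a failure risk score. "
    "The prediction engine then sends the health score to the scheduler. "
    "If the health score is high, maintenance is moved earlier."
)

print(find_term_drift(draft, TERM_TABLE))
# {'risk model': {'prediction engine': 1}, 'failure risk score': {'health score': 2}}
```

The script only finds the alternate names. A person still decides whether each one should be changed to the main term or explained as an alternative.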

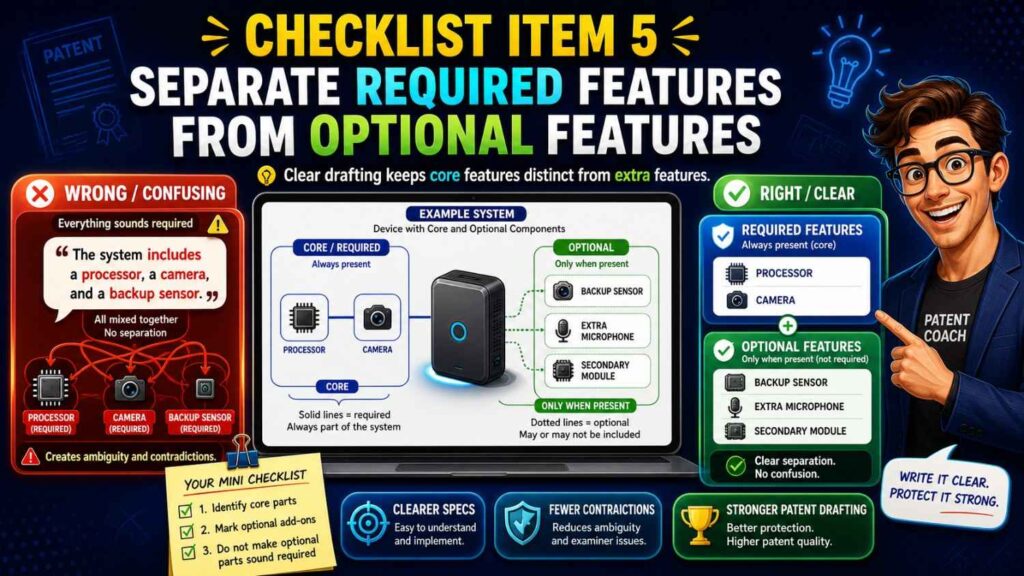

Checklist item 5: Are required features separated from optional features?

AI drafts often blur what is required and what is optional.

This can hurt patent scope.

For example, the invention may use a trained model and a risk score. It may optionally include a dashboard. But the AI spec may describe the dashboard as if every version needs it.

That can narrow the invention.

A good spec makes the difference clear.

Required features are part of the core invention.

Optional features are examples, add-ons, or versions.

Your checklist should ask:

What must be present for the invention to work?

What may be present in some versions?

Does the draft treat optional features as required?

Does the draft use “must,” “always,” or “required” when “may” would be more accurate?

Does an example accidentally become the whole invention?

This is one of the most important startup checks because products change.

Your current product may have a mobile app, but your future product may use an API. Your current version may use camera data, but future versions may use radar or vibration data. Your current model may run in the cloud, but future versions may run on edge devices.

Do not let one product version trap the patent unless that version is truly the invention.

Checklist item 6: Does the spec avoid false limits?

False limits are details that make the invention narrower than it should be.

AI can add false limits by using strong wording.

For example:

“The system always receives data from a camera.”

“The model must be a neural network.”

“The user must approve the action.”

“The server is required.”

“The dashboard displays every output.”

These statements may be correct in some cases. But if they are not truly required, they should not be written that way.

A better approach is often:

“In some examples, the system receives image data from a camera.”

“In some examples, the model is a neural network.”

“In some examples, a user approves the action.”

“In some examples, the system includes a server.”

This keeps examples as examples.

The checklist should include a “false limit” pass.

Search for words like always, must, required, only, necessary, essential, and every.

When you find them, ask:

Is this really true for all versions?

If not, change the wording.

This is a small check with a big impact.
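The word search can be run as a quick script before the human pass. This is a minimal sketch that assumes the draft is available as plain text. The word list is the one given above, and `flag_false_limits` is a hypothetical helper name.

```python
import re

LIMIT_WORDS = ["always", "must", "required", "only",
               "necessary", "essential", "every"]
PATTERN = re.compile(r"\b(" + "|".join(LIMIT_WORDS) + r")\b", re.IGNORECASE)


def flag_false_limits(draft):
    """Return (line_number, word, line_text) for each limiting word found."""
    flags = []
    for number, line in enumerate(draft.splitlines(), start=1):
        for match in PATTERN.finditer(line):
            flags.append((number, match.group(1).lower(), line.strip()))
    return flags


draft = """The system always receives data from a camera.
In some examples, the model is a neural network.
The server is required."""

for number, word, text in flag_false_limits(draft):
    print(f"line {number}: '{word}' -> {text}")
```

Here the script flags lines 1 and 3 and skips line 2. It cannot tell whether a flagged word is a false limit. It only builds the list that the reviewer walks through with the question "Is this really true for all versions?"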

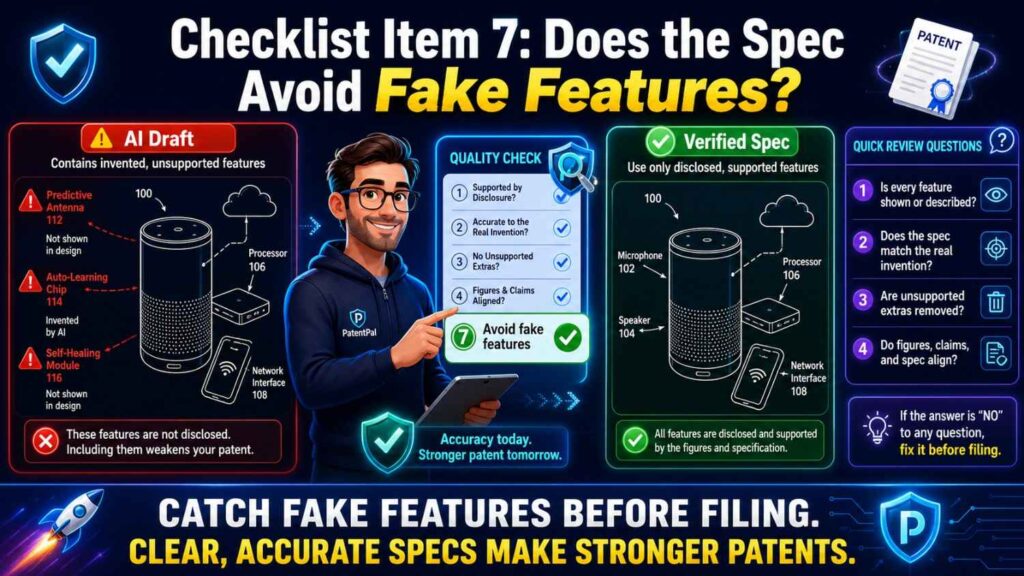

Checklist item 7: Does the spec avoid fake features?

AI may invent features.

That is one of the clearest risks of AI patent drafting.

A spec may add a cloud server, user account system, payment engine, location tracker, admin panel, feedback module, training database, or mobile app even when those are not part of the invention.

Sometimes fake features are obvious.

Sometimes they blend in.

The checklist should ask:

Did the AI add any part that was not in the invention notes?

Is each added part real, optional, or wrong?

Could any added part create confusion?

Could any added part narrow the patent?

Could any added part reveal a strategy we do not want to disclose?

Fake features should be removed unless they are useful alternatives that the team approves.

Do not leave them in just because they sound normal.

A patent spec should not describe a made-up product.

It should describe the real invention and planned useful versions.
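Once a reviewer has listed the components the draft describes, comparing that list to the invention notes is a simple set operation. The sketch below assumes both lists are written by hand. The component names are illustrative, and `classify_components` is a hypothetical helper.

```python
# From the invention notes (illustrative values).
approved_required = {"sensor", "risk model", "maintenance scheduler"}
approved_optional = {"dashboard", "cloud server"}

# Components a reviewer found while reading the AI draft.
draft_components = {
    "sensor", "risk model", "maintenance scheduler",
    "dashboard", "payment engine", "location tracker",
}


def classify_components(found, required, optional):
    """Sort draft components into approved, unapproved, and missing groups."""
    return {
        "required": sorted(found & required),
        "optional": sorted(found & optional),
        "unapproved": sorted(found - required - optional),
        "missing": sorted(required - found),
    }


report = classify_components(draft_components, approved_required, approved_optional)
print(report["unapproved"])  # ['location tracker', 'payment engine']
print(report["missing"])     # []
```

Everything in the "unapproved" group needs a decision from the team: keep it as an approved alternative, or remove it.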

Checklist item 8: Does the spec support the claims?

The spec must support the claims.

This is one of the most important quality checks.

Take each claim element and find where the spec explains it.

If the claim says “receiving sensor data,” the spec should explain sensor data.

If the claim says “applying a risk model,” the spec should explain the risk model.

If the claim says “generating a failure risk score,” the spec should explain the score.

If the claim says “changing a maintenance schedule,” the spec should explain how the schedule may change.

This is called claim support mapping.

You do not need a fancy tool to start. You can copy the claim into a table and add a spec location next to each element.

If a claim element has no support, the spec may need more detail.

If the spec uses a different term, align it.

If the spec describes only one narrow example but the claim is broad, add more support, provided the broader scope is technically accurate.

This step is where AI drafts often need human review.

AI may write claims that sound good but do not match the spec. Or it may write a spec that explains features not claimed at all.

The claims and spec should work together.
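A claim support table can start as a plain mapping from each claim element to the places in the spec that explain it. The sketch below is one possible layout, not a standard format. The paragraph and figure locations are made-up placeholders, and `unsupported_elements` is a hypothetical helper.

```python
claim_elements = [
    "receiving sensor data",
    "applying a risk model",
    "generating a failure risk score",
    "changing a maintenance schedule",
]

# Claim element -> spec locations. The locations here are placeholders.
support_map = {
    "receiving sensor data": ["para. 31", "FIG. 2"],
    "applying a risk model": ["para. 38"],
    "generating a failure risk score": ["para. 40", "FIG. 3"],
    "changing a maintenance schedule": [],
}


def unsupported_elements(elements, support):
    """Return claim elements that have no recorded spec location."""
    return [element for element in elements if not support.get(element)]


for element in claim_elements:
    locations = ", ".join(support_map.get(element, [])) or "NO SUPPORT FOUND"
    print(f"{element:35} {locations}")

print(unsupported_elements(claim_elements, support_map))
# ['changing a maintenance schedule']
```

An empty row is the signal to add detail to the spec or to rethink the claim element. A patent attorney should make that call.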

Checklist item 9: Does the spec explain the main flow from start to finish?

Every patent spec should include a clear flow.

For software and AI inventions, that often means data flow.

For hardware inventions, it may mean signal flow, movement, assembly, or control flow.

For biotech or lab inventions, it may mean sample handling, measurement, analysis, and output.

The reader should understand what happens first, what happens next, and what result is produced.

AI drafts can skip steps or mix the order.

For an AI system, the spec should often explain:

What data is received.

How the data is prepared.

What model or logic is applied.

What output is created.

What action is taken.

What optional feedback or update may happen.

That flow should be easy to follow.

If the draft jumps from input to output without explaining the process, it may be too thin. If it describes many processes with no clear order, it may be too messy.

The checklist should ask:

Can we trace the invention from input to final action?

Does the flow match the figures?

Does the flow match the claims?

Does any output appear before it is created?

Does any action depend on missing data?

Flow errors are common in AI drafts because the model may generate sections separately.

A flow pass helps catch them.
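One way to run a flow pass is to write down each step with what it needs and what it creates, then walk the steps in the order the draft gives them. The sketch below is a simplified illustration. The `Step` structure and the step names are assumptions based on the maintenance example used in this guide.

```python
from typing import NamedTuple


class Step(NamedTuple):
    name: str
    needs: set    # items the step uses
    creates: set  # items the step produces


def find_flow_errors(steps):
    """Report any step that uses an item before an earlier step creates it."""
    available = set()
    errors = []
    for step in steps:
        for item in sorted(step.needs - available):
            errors.append(f"'{step.name}' uses '{item}' before it is created")
        available |= step.creates
    return errors


# Step order as written in a hypothetical AI draft.
draft_flow = [
    Step("receive sensor data", set(), {"sensor data"}),
    Step("prepare the data", {"sensor data"}, {"prepared data"}),
    Step("change maintenance schedule", {"failure risk score"}, {"updated schedule"}),
    Step("apply risk model", {"prepared data"}, {"failure risk score"}),
]

for error in find_flow_errors(draft_flow):
    print(error)
# 'change maintenance schedule' uses 'failure risk score' before it is created
```

The same walk also catches an action that depends on data no step ever creates, because that item never becomes available.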

Checklist item 10: Are AI model inputs and outputs clear?

For AI-related inventions, the model should not be a black box.

The spec should explain what the model receives and what it creates.

It does not need to expose every secret. But it should give enough detail to support the invention.

For example, instead of saying:

“The AI model analyzes the data.”

A stronger spec may say:

“The risk model receives vibration data and operating state data and creates a failure risk score for a future time window.”

That sentence gives inputs, output, and purpose.

Your checklist should ask:

What data goes into the model?

What does the model output?

Is the output a score, label, ranking, command, alert, prediction, or recommendation?

How is the output used?

Does the same output have the same name throughout the spec?

Does the draft confuse risk score, confidence score, and prediction label?

This matters because many AI inventions live in the details of input, output, and use.

If those are unclear, the spec may be weak.

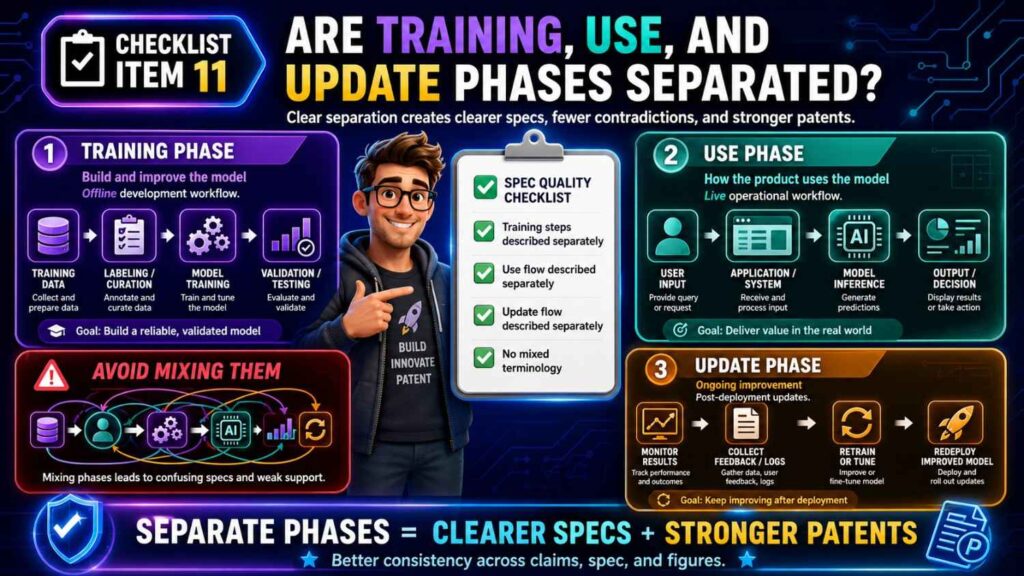

Checklist item 11: Are training, use, and update phases separated?

AI specs often mix up model training and model use.

Training means creating or improving a model.

Use means applying the model to new data.

Update means changing the model after deployment or over time.

These are different.

An AI draft may say the model is trained using live data, then later say the model is already trained before live use. It may say the model is fixed, then later say user feedback updates it in real time.

These can all be valid in different versions, but the spec must explain that.

The checklist should ask:

Does the invention include model training?

Does it include model use?

Does it include model updates?

Are those phases clearly separated?

Does the spec say where training happens?

Does the spec say where the model runs during use?

Does the spec explain whether feedback changes the model?

If the invention is only about using a trained model, do not let the spec imply training is required.

If the invention is about a new training method, do not let the spec hide that method in a short sentence.

For AI patents, this check is essential.

Checklist item 12: Does the spec describe enough examples?

Examples help support the invention.

They show how the invention can work in real situations.

AI drafts may provide examples, but they may be generic or poorly chosen.

A good spec should include examples that support the claim scope and business goal.

If the claim covers different sensors, the spec may describe several sensor examples.

If the claim covers local and server processing, the spec may explain both.

If the claim covers alerts and control actions, the spec may describe each.

This does not mean the spec should include endless lists.

It means the examples should be useful.

Your checklist should ask:

Do the examples support the broad terms in the claims?

Do the examples show the core invention clearly?

Are the examples technically true?

Are there examples for the most valuable use cases?

Do any examples create false limits?

Do examples describe optional features as optional?

Examples should expand and clarify the invention, not distract from it.

Checklist item 13: Does the spec cover future versions without guessing wildly?

Startups evolve.

A good patent spec should often support future versions of the invention.

But it should not include wild guesses that do not fit.

This balance matters.

For example, if your invention can run on a robot today and a vehicle tomorrow, the spec may describe both if the technical idea applies. But if AI adds unrelated uses like retail checkout, social media ranking, or medical diagnosis with no real connection, that may create noise.

The checklist should ask:

What future versions are likely and valuable?

Does the spec support them?

Are future versions framed as examples?

Are future versions technically plausible?

Do future versions stay tied to the core invention?

This helps protect the startup as it grows.

A patent filing should not be trapped in today’s product if the invention is broader. But it should also not become so broad that it loses focus.

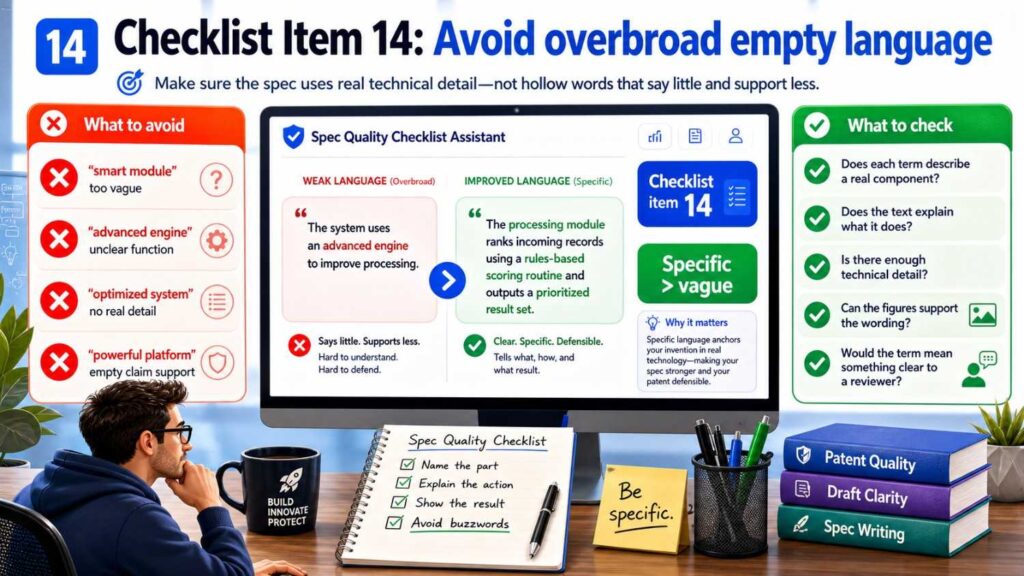

Checklist item 14: Does the spec avoid overbroad empty language?

AI often uses empty broad phrases.

For example:

“The system may be used with any data, in any environment, for any purpose.”

This sounds broad, but it does not add much.

Strong breadth comes from meaningful support.

Instead of saying “any data,” explain useful data categories.

Instead of saying “any device,” explain device types that matter.

Instead of saying “any action,” explain actions tied to the invention.

For example:

“The sensor data may include vibration data, temperature data, motor current data, pressure data, acoustic data, or other machine operation data.”

That is much stronger than:

“The system may use any data.”

The checklist should ask:

Does the broad language have real examples?

Does the spec explain why the broad category fits the invention?

Are broad terms supported by technical detail?

If not, the broad language may be weak filler.

Checklist item 15: Does the spec avoid unsupported promises?

AI may make sweeping statements about results.

It may say the invention guarantees accuracy, prevents all failures, eliminates risk, or ensures safety.

That kind of language can be risky.

Most inventions improve something. They do not guarantee perfection.

A strong spec should use measured language.

It may say the invention “may improve,” “may reduce,” “may help,” or “may increase” when that is accurate.

More important, it should tie benefits to technical features.

For example:

“By generating the failure risk score before a machine reaches a failure state, the system may allow maintenance to be scheduled earlier.”

That is credible.

The checklist should ask:

Does the spec overpromise?

Are benefits tied to mechanisms?

Are words like “always,” “guarantees,” “eliminates,” or “prevents all” used too strongly?

Would an engineer agree with the result language?

Would a customer-facing team be comfortable with the statement?

A patent spec should be strong, not hype-driven.

Checklist item 16: Does the spec match the figures?

Figures and spec should tell the same story.

AI drafts often create mismatches.

A figure may show a cloud server while the spec says processing happens on the device. A figure may show five steps while the spec describes six. A figure may label a block “prediction engine” while the spec calls it “risk model.”

Your checklist should include a figure walk-through.

Start with Figure 1.

Read the figure description.

Look at each label.

Check whether each label appears in the spec.

Check whether each reference number points to the same element in the figure and in the text.

Check whether the flow arrows match the text.

Then move to the next figure.

Ask:

Does every figure support the invention?

Does every figure have a clear description?

Does every key reference number appear in the spec?

Do figure labels match spec terms?

Does the method figure match the claimed step order?

Do optional parts look optional?

Figures are often where hidden contradictions become visible.

Do not skip this check.
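Part of the figure walk-through can be scripted if you list each reference number and its label by hand. The sketch below is a rough aid under that assumption. The reference numbers, labels, and the `check_figure_labels` helper are all illustrative, and the check expects the spec to write the label directly before the number, as in "risk model 110".

```python
import re

# Reference number -> label shown in the figure (illustrative values).
figure_labels = {
    102: "sensor",
    110: "risk model",
    120: "maintenance scheduler",
    130: "dashboard",
}

spec_text = (
    "The sensor 102 sends sensor data to the prediction engine 110. "
    "The maintenance scheduler 120 changes the maintenance schedule."
)


def check_figure_labels(labels, text):
    """Flag numbers missing from the spec or used with a different name."""
    problems = []
    lowered = text.lower()
    for number, name in sorted(labels.items()):
        if not re.search(rf"\b{number}\b", lowered):
            problems.append(f"{number} ({name}): not mentioned in spec")
        elif not re.search(rf"\b{re.escape(name.lower())} {number}\b", lowered):
            problems.append(f"{number}: spec does not call it '{name}'")
    return problems


for problem in check_figure_labels(figure_labels, spec_text):
    print(problem)
# 110: spec does not call it 'risk model'
# 130 (dashboard): not mentioned in spec
```

A script like this will not check arrows or step order. Those still need a person looking at the figure next to the text.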

Checklist item 17: Does the spec support all figure elements?

The spec should explain what appears in the figures.

If a figure shows a “model update engine,” the spec should explain it.

If a figure shows a “feature extractor,” the spec should describe what it does.

If a figure shows arrows between components, the spec should explain what moves along those arrows if it matters.

Unexplained figure elements create confusion.

The checklist should ask:

Does every important block in each figure have text support?

Does every reference number have the right name?

Does the spec explain how the parts work together?

Are any figure elements outdated?

Were any figure elements invented by the AI?

If a figure element does not matter, consider removing it.

A figure should help the reader understand the invention. It should not add clutter.

Checklist item 18: Does the spec support every important claim term?

This check is similar to claim support, but it focuses on terms.

Claims often use terms that carry weight.

“Risk score.”

“Control signal.”

“Training data set.”

“Feature vector.”

“Route segment.”

“Model update package.”

“Confidence value.”

“Anomaly state.”

Each important term should be explained in the spec.

If the claim uses “model update package,” the spec should say what that package may include and how it is used.

If the claim uses “route segment,” the spec should explain what a route segment is.

If the claim uses “control signal,” the spec should show what creates it and what it changes.

The checklist should ask:

Does each claim term have a clear meaning in the spec?

Is the same term used consistently?

Does the spec use a different term for the same idea?

Could a reader misunderstand the term?

If a term is important enough to claim, it is important enough to support.

Checklist item 19: Does the spec include enough implementation detail?

A patent spec should not be a vague idea.

It should explain how the invention can be made or used.

AI drafts sometimes stay at a high level.

For example:

“The platform uses AI to optimize energy use.”

That is too thin by itself.

A stronger spec explains what data is used, what is optimized, how the model creates an output, and what system action occurs.

The checklist should ask:

Does the spec explain the parts involved?

Does it explain how data, signals, or materials move through the system?

Does it explain what the model or controller does?

Does it explain at least one way to carry out the invention?

Does it give enough detail for a skilled reader to understand the technical process?

The right amount of detail depends on the invention.

A patent attorney can help decide whether more support is needed.

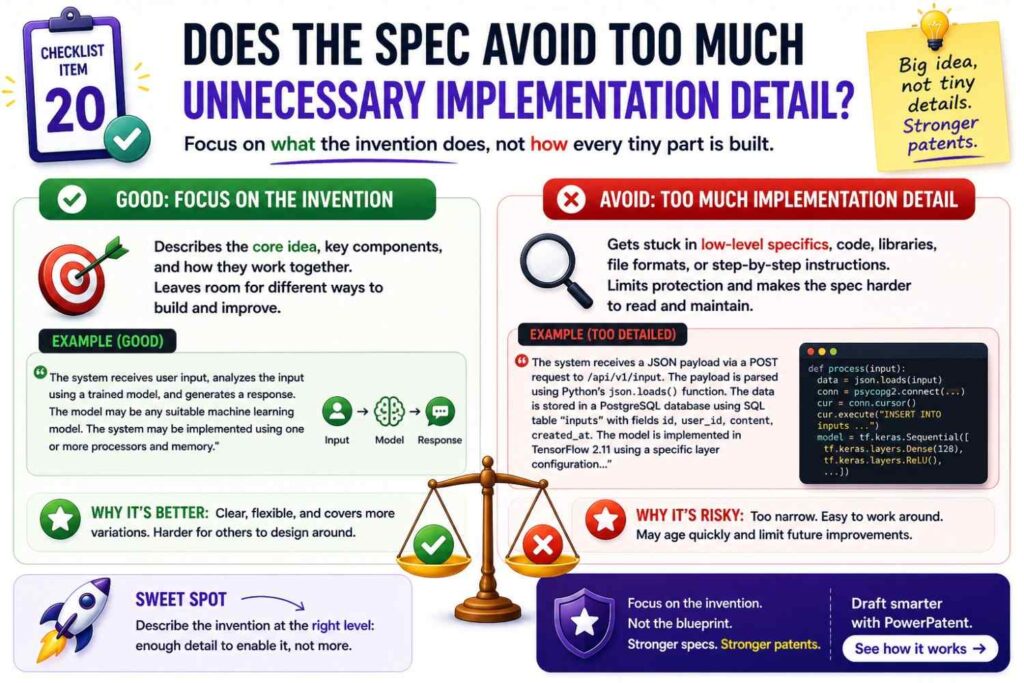

Checklist item 20: Does the spec avoid too much unnecessary implementation detail?

Too little detail is a problem.

Too much irrelevant detail can also be a problem.

AI may include long descriptions of generic processors, networks, displays, storage systems, and user interfaces. Some of this may be useful. But too much can bury the invention.

The checklist should ask:

Does this detail support the invention?

Does it support the claims?

Does it help explain an example?

Does it create a false limit?

Does it reveal something unnecessary?

Does it distract from the core idea?

A good spec is detailed where it matters.

It does not need to be bloated everywhere.

Patent attorneys often include some standard computing language for a reason. But AI may add filler without strategy.

Review it.

Checklist item 21: Does the spec describe the invention at more than one level?

A strong spec often describes the invention at multiple levels.

It gives a high-level overview.

It explains system parts.

It describes method steps.

It gives examples.

It may explain alternatives.

This layered structure helps the reader.

AI drafts may jump straight into detail or stay too broad.

Your checklist should ask:

Does the spec include a simple overview?

Does it describe the main system or device?

Does it describe the main method or process?

Does it explain examples?

Does it explain variations?

Does the detail connect back to the overview?

This structure makes the spec easier to read and stronger as support.

Checklist item 22: Does the spec include useful alternatives?

Alternatives help protect against design-arounds.

For example, if your invention can use different sensors, deployment setups, model types, thresholds, or actions, the spec should say so.

But the alternatives should be real.

Your checklist should ask:

What could a competitor change while still copying the idea?

Can the spec cover that variation?

Does the spec describe different data sources?

Does the spec describe different model types when appropriate?

Does the spec describe different deployment locations?

Does the spec describe different outputs or actions?

Are the alternatives tied to the core invention?

This is a business-focused check.

It helps make sure the spec protects more than a single product screen or narrow implementation.

Checklist item 23: Does the spec avoid locking to one vendor or tool?

Startups often use cloud providers, databases, AI frameworks, open-source libraries, or third-party APIs.

AI may include those names if they appear in notes.

Be careful.

If the invention does not depend on a specific vendor, the spec should not require it.

For example, if your system uses a vector database today but could use other storage, do not make one database required.

If your system runs on one cloud today but could run elsewhere, do not lock the patent to that cloud.

If your model uses one framework today, avoid making that framework part of the invention unless it truly matters.

The checklist should ask:

Does the spec name any third-party product?

Is that product required for the invention?

Could a broader term be used?

Does naming the vendor narrow the patent?

The patent should protect your invention, not the vendor stack around it.

Checklist item 24: Does the spec handle data carefully?

Data is often central to AI and software inventions.

The spec should be clear about what data is used, where it comes from, how it is processed, and what happens to it.

AI drafts may add data sources that are not real.

They may also describe sensitive data in ways that are not needed.

Your checklist should ask:

What data does the invention require?

What data is optional?

Does the spec add data sources we do not use?

Does the spec say personal data is used when it is not?

Does the spec explain derived values, features, summaries, scores, or labels?

Does the spec describe storage and transmission accurately?

Does the spec avoid exposing private customer or business details?

Data language should be precise.

For AI inventions, unclear data language can weaken the whole spec.

Checklist item 25: Does the spec handle privacy and security accurately?

AI may add privacy and security statements because they sound good.

But they must match the design.

If the spec says data never leaves the device, that must be true.

If the spec says data is anonymized, the draft should explain how or when that happens.

If the spec says data is encrypted, make sure that matches the system.

Privacy and security statements should not be marketing claims.

They should be technical statements.

The checklist should ask:

Does the invention include privacy or security features?

Are those features technically explained?

Does the spec avoid absolute claims unless true?

Does the spec match the actual product design?

Does the spec avoid promising more than the system does?

If privacy is a key advantage, the spec should describe the mechanism clearly.

If it is not central, avoid adding broad privacy language that may create risk.

Checklist item 26: Does the spec handle human review clearly?

Many AI systems include human review.

Some systems only recommend actions.

Some systems act automatically.

Some do both.

The spec should be clear.

For example, an AI maintenance tool may send a recommendation to a technician. Another version may automatically change a schedule. Another may stop a machine if risk is high.

Those are different modes.

The checklist should ask:

Does the system recommend, alert, control, or decide?

Is human approval required?

Is human approval optional?

Does the spec describe automatic and manual modes clearly?

Do the claims match the intended mode?

This matters because the role of a human can change the invention.

Do not let AI blur it.

Checklist item 27: Does the spec handle thresholds and scores consistently?

AI drafts often use scores and thresholds.

A risk score may trigger an alert. A confidence score may decide whether to take action. A similarity score may select a match.

The spec should keep these meanings clear.

If a high risk score means high risk, it should mean that throughout the spec.

If a threshold is crossed when the score is greater than a value, do not later say the action happens when the score is below the value unless that is a different score or example.

The checklist should ask:

What does each score mean?

Does a higher score mean more or less of something?

What threshold triggers action?

Are thresholds examples or required values?

Are numbers consistent?

Does the spec confuse confidence, risk, probability, and rank?

Scores can look simple, but they often carry the invention.

Review them carefully.
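
A rough scan can help find places where the same score is compared in opposite directions. The sketch below is an assumption about how a team might do this. The phrase lists and the function names are hypothetical, and the sentence splitting is naive. A flagged conflict may be a valid second example, so a person still needs to read both sentences.

```python
import re

# Hypothetical phrase lists; extend them to match the wording in your draft.
ABOVE = re.compile(r"\b(greater than|above|exceeds|higher than)\b", re.IGNORECASE)
BELOW = re.compile(r"\b(less than|below|lower than)\b", re.IGNORECASE)

def score_directions(spec_text, score_name):
    """Group sentences that compare the named score, by comparison direction."""
    found = {"above": [], "below": []}
    # Naive sentence split: end punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.?!])\s+", spec_text):
        if score_name.lower() not in sentence.lower():
            continue
        if ABOVE.search(sentence):
            found["above"].append(sentence.strip())
        if BELOW.search(sentence):
            found["below"].append(sentence.strip())
    return found

def has_direction_conflict(found):
    """True when the same score is compared in both directions somewhere."""
    return bool(found["above"]) and bool(found["below"])

sample = (
    "An alert is sent when the risk score is greater than a threshold. "
    "A confidence score may be stored with the result. "
    "In some examples, the alert is sent when the risk score is below the threshold."
)

found = score_directions(sample, "risk score")
if has_direction_conflict(found):
    print("Review these sentences together:")
    for sentence in found["above"] + found["below"]:
        print(" -", sentence)
```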

Checklist item 28: Does the spec use numbers safely?

AI may add exact numbers.

For example:

“The threshold is 80%.”

“The device samples data every 10 milliseconds.”

“The model has 12 layers.”

“The alert is sent within 5 seconds.”

If those numbers are real and useful, fine.

If they are guesses, they may create risk.

The checklist should ask:

Where did each number come from?

Is the number required?

Is it just an example?

Should it be a range?

Does the number match the engineering reality?

Does another section use a different number?

In many cases, numbers should be framed as examples.

For example:

“In some examples, the threshold may be about 80%.”

Or:

“In some examples, the sampling rate may be selected based on machine type.”

Use exact numbers with care.
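
To answer "where did each number come from?", it helps to list every number first. The sketch below is one possible approach, not a complete tool. It only catches numbers followed by a unit from a small, assumed list, and it reports units that appear with more than one value so a reviewer can compare them.

```python
import re

# Assumed unit list for illustration; add the units your invention uses.
UNITS = r"%|milliseconds?|seconds?|minutes?|hours?|layers?"
NUMBER_WITH_UNIT = re.compile(r"\b(\d+(?:\.\d+)?)\s*(" + UNITS + r")", re.IGNORECASE)

def list_numbers(spec_text):
    """Return (line_number, value, unit) for each number with a known unit."""
    rows = []
    for line_number, line in enumerate(spec_text.splitlines(), start=1):
        for value, unit in NUMBER_WITH_UNIT.findall(line):
            rows.append((line_number, value, unit.lower()))
    return rows

def units_with_multiple_values(rows):
    """Return units that appear with more than one distinct value."""
    values_by_unit = {}
    for _, value, unit in rows:
        values_by_unit.setdefault(unit, set()).add(value)
    return sorted(unit for unit, values in values_by_unit.items() if len(values) > 1)

sample = """The threshold is 80%.
The device samples data every 10 milliseconds.
In another example, the threshold is 75%."""

rows = list_numbers(sample)
for line_number, value, unit in rows:
    print(f"line {line_number}: {value} {unit}")
print("Units with more than one value:", units_with_multiple_values(rows))
```

Two different values for the same unit are not always a mistake. They may be two examples. The list simply tells the reviewer where to look.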

Checklist item 29: Does the spec avoid product-only thinking?

A patent should protect the invention, not just the current product interface.

AI drafts may focus on the product because the input notes come from product docs.

That can make the spec too narrow.

For example, a product may show a risk score in a web dashboard. But the invention may be the way the score is created and used to change machine behavior.

The checklist should ask:

Is this detail part of the invention or just part of the current product?

Does the spec over-focus on screens, buttons, tabs, or workflows?

Could the invention work through an API, controller, report, or automated action?

Does the spec protect the technical idea behind the product?

Product details can be useful examples. But they should not swallow the invention.

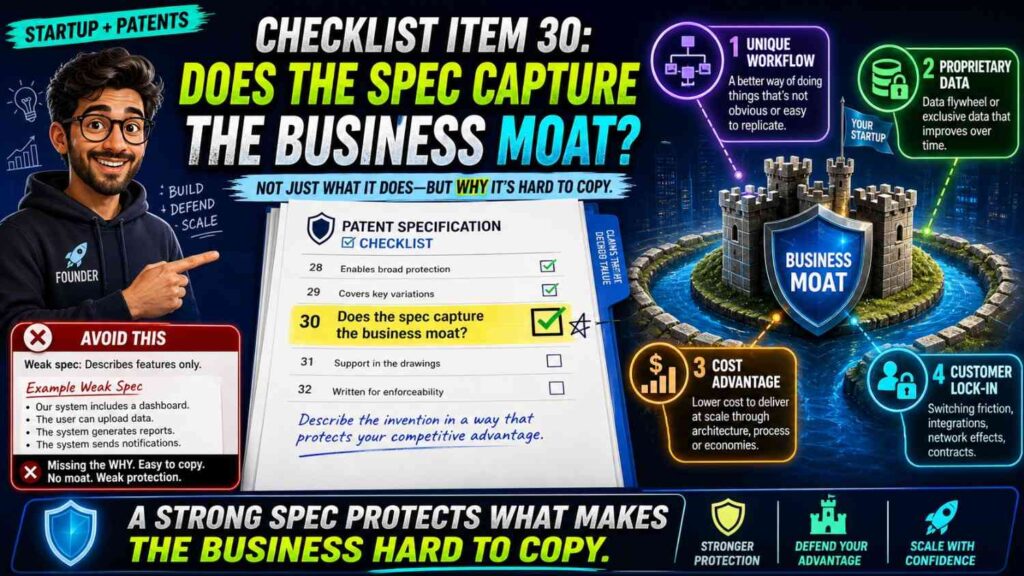

Checklist item 30: Does the spec capture the business moat?

A good spec should protect what matters to the business.

It should not just describe what is easy to write.

The checklist should ask:

What would a competitor copy?

What technical feature makes the product better?

What part was hard to build?

What supports pricing, speed, accuracy, safety, trust, or scale?

Does the spec focus on that?

If the real moat is a model update method, the spec should not spend most of its energy on a generic user dashboard.

If the real moat is a robotic control loop, the spec should not read like a generic sensor system.

This check should involve a founder.

Patent quality is not only legal quality. It is also business fit.

PowerPatent helps startups connect patent drafting to the real technical moat so the filing can support the company’s growth, fundraising, and long-term strategy. See how it works here: https://powerpatent.com/how-it-works

Checklist item 31: Does the spec include fallback positions?

A fallback position is a more specific version of the invention that may still be valuable.

Broad claims may face pushback later. A good spec gives your attorney room to adjust.

For example, if the broad invention is “creating a risk score from machine data,” fallback positions may include using vibration data, using a future time window, using an edge device, changing a maintenance schedule, or combining sensor data with operating state data.

These details should be in the spec if they are useful and true.

The checklist should ask:

What narrower versions still matter?

Does the spec describe them?

Are fallback features tied to business value?

Are they shown in examples?

Do the figures support them where helpful?

Fallback positions are like safety rails.

They can help later if the broadest path is challenged.

Checklist item 32: Does the spec avoid mixing multiple inventions without structure?

Startups often have many inventions inside one product.

AI may mix them together.

For example, a product may include a new data collection method, a new model training process, a new edge deployment system, and a new dashboard workflow.

Those may be related. They may also be separate inventions.

A spec can sometimes cover more than one inventive concept, but it should be organized.

The checklist should ask:

Is the draft trying to cover too many inventions at once?

Is there one clear main idea?

Are separate ideas clearly separated?

Should some ideas be saved for another filing?

Does the claim strategy match the spec?

This is a strategic issue.

A patent attorney can help decide whether to split filings or keep related concepts together.

The key is to avoid a spec that feels like a pile of features.

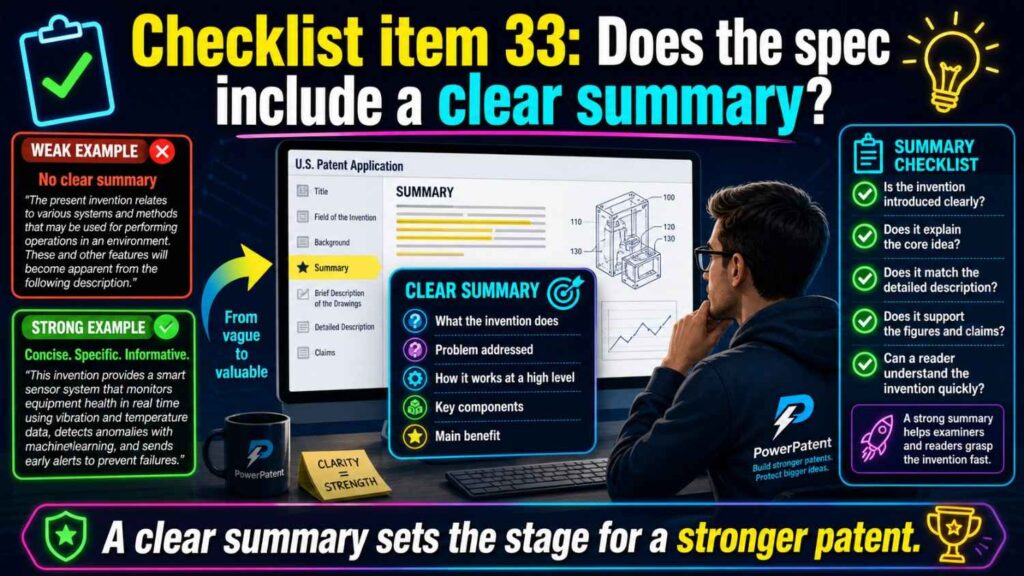

Checklist item 33: Does the spec include a clear summary?

The summary should give a clean overview of the invention.

It should not introduce unsupported features.

It should not overpromise.

It should not be so broad that it says nothing.

Your checklist should ask:

Does the summary match the claims?

Does it use the same key terms?

Does it describe the main technical flow?

Does it leave out fake features and avoid treating optional features as required?

Does it stay aligned with the source of truth?

The summary may be short, but it sets the tone.

A confused summary is often a sign of a confused draft.

Checklist item 34: Does the spec include a useful detailed description?

The detailed description should do the heavy lifting.

It should explain the invention in enough detail to support the claims.

AI may write a detailed description that is long but shallow.

Length alone is not quality.

The checklist should ask:

Does the detailed description explain each main component?

Does it explain each main step?

Does it describe how parts interact?

Does it describe examples and alternatives?

Does it explain figures clearly?

Does it support the claim terms?

Does it avoid repeating the same vague sentence in different ways?

A detailed section should feel useful.

If it feels like filler, it needs work.

Checklist item 35: Does the spec include good figure descriptions?

Figure descriptions should help the reader understand the drawings.

They should not merely repeat labels.

For example, a weak description may say only:

“Figure 1 shows a system.”

A better description explains what the system includes and what flow the figure shows.

The checklist should ask:

Does each figure description explain what matters?

Does it match the drawing?

Does it use the same terms as the claims and spec?

Does it explain data or signal flow where needed?

Does it clarify optional components?

Good figure descriptions make the spec easier to follow.

Checklist item 36: Does the spec avoid stale text from old drafts?

AI drafts may use old material.

Old text can create contradictions.

A previous product version may have used manual review. The new version may be automatic. A previous architecture may have used cloud processing. The new one may run on edge devices. A previous model may have required labeled data. The new one may not.

The checklist should ask:

Does the spec include outdated features?

Are old names still present?

Does the draft mix old and new architecture?

Do figures show an old version?

Do examples describe abandoned product behavior?

Version drift is common in startups.

Review the spec against the current invention source of truth and planned future versions.

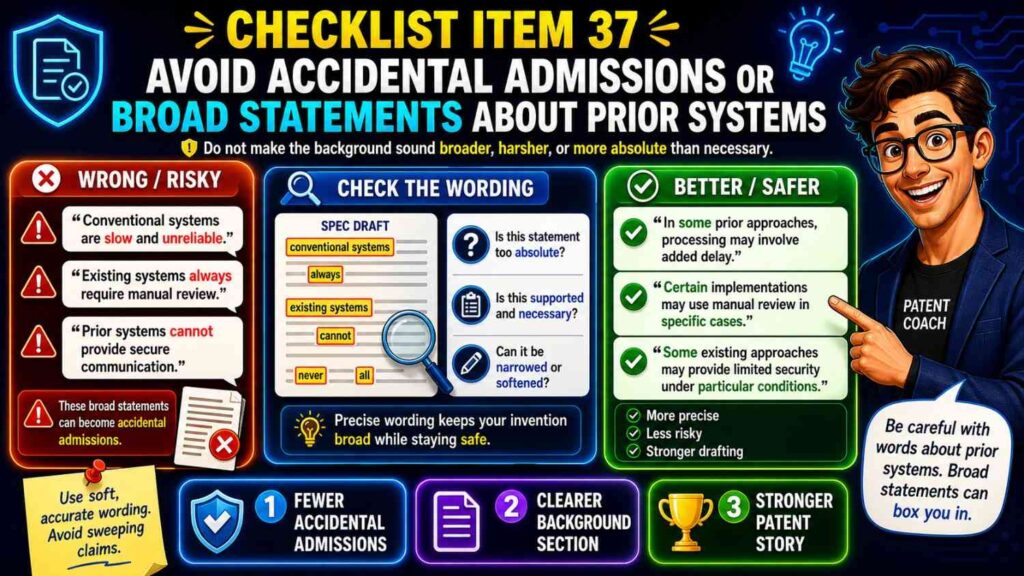

Checklist item 37: Does the spec avoid accidental admissions or broad statements about prior systems?

AI may write background sections that say too much.

For example:

“All existing systems fail to predict machine faults.”

Or:

“Prior systems cannot process data locally.”

Such statements may be too broad or wrong.

A safer background is more measured.

It may say some systems have certain limits or that there is a need for improved approaches.

The checklist should ask:

Does the background make unsupported claims about all prior systems?

Does it overstate what others cannot do?

Does it create unnecessary risk?

Does it focus on the problem without being careless?

This is an area where attorney review is important.

Checklist item 38: Does the spec stay formal without becoming unreadable?

Simple words and a formal tone can go together. That balance matters in patent specs.

A spec should be professional.

But professional does not mean hard to read.

AI may write long, dense sentences.

The checklist should ask:

Can each sentence be understood?

Are sentences too long?

Are terms used clearly?

Does the writing explain rather than hide?

Does the draft repeat phrases without adding meaning?

Simple language can improve patent quality because it helps everyone review the draft.

Clear writing is not weak writing.
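
The "are sentences too long?" question is easy to pre-screen. The sketch below flags sentences over a word limit. The limit of 30 words is an assumed default, not a rule, and the sentence split is naive, so abbreviations such as "FIG." may split a sentence early.

```python
import re

def long_sentences(text, max_words=30):
    """Return (word_count, sentence) for each sentence over the word limit."""
    sentences = re.split(r"(?<=[.?!])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        word_count = len(sentence.split())
        if word_count > max_words:
            flagged.append((word_count, sentence))
    return flagged

sample = (
    "The system receives data. "
    "The system, which may in some embodiments be configured to receive, "
    "process, store, and transmit data, generates a score."
)

# A lower limit is used here only so the short sample triggers a flag.
for word_count, sentence in long_sentences(sample, max_words=15):
    print(f"{word_count} words: {sentence}")
```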

Checklist item 39: Does the spec avoid repeated phrases and copied sections?

AI often repeats.

It may describe the same system in nearly the same words across multiple sections.

Some repetition is normal in patent drafting. But pointless repetition can make review harder and may introduce small differences.

The checklist should ask:

Does each repeated section add something useful?

Do repeated descriptions stay consistent?

Are there near-duplicate paragraphs that could be merged?

Did AI repeat boilerplate too much?

Does repetition create term drift?

Clean repetition is fine.

Messy repetition is not.
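
Near-duplicate paragraphs can be found with a text similarity ratio. The sketch below uses `difflib.SequenceMatcher` from the Python standard library. The cutoff of 0.85 is an assumption chosen for illustration, and you may need to tune it for your drafts.

```python
from difflib import SequenceMatcher
from itertools import combinations

def near_duplicates(paragraphs, min_ratio=0.85):
    """Return (index_a, index_b, ratio) for paragraph pairs that look alike."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(paragraphs), 2):
        ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if ratio >= min_ratio:
            pairs.append((i, j, round(ratio, 2)))
    return pairs

paragraphs = [
    "The scoring module generates a risk score based on the sensor data.",
    "The control module changes a maintenance schedule.",
    "The scoring module generates a risk value based on the sensor data.",
]

for i, j, ratio in near_duplicates(paragraphs):
    print(f"paragraphs {i} and {j} are similar (ratio {ratio})")
```

In this sample, the first and third paragraphs differ by one word: "score" became "value." That is the kind of small difference that creates term drift.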

Checklist item 40: Does the spec match the expected filing type?

A provisional application may have different drafting goals than a non-provisional application, but both need quality.

A provisional can be faster and more flexible, but it should still support the invention.

A non-provisional needs more formal structure, claims, and careful drafting.

The checklist should ask:

What type of filing is this?

Does the spec include enough support for future claims?

Are figures included where helpful?

Are examples clear?

Does the draft avoid being just a rough idea note?

Do not assume “provisional” means “low quality.”

A weak early filing may not support later protection.

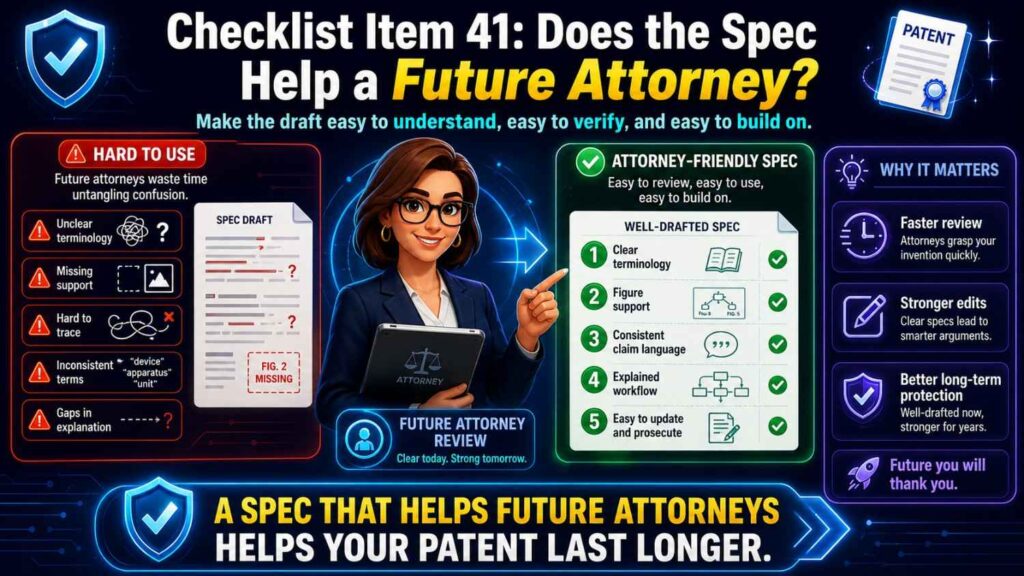

Checklist item 41: Does the spec help a future attorney?

A good AI draft should not only look complete.

It should make attorney review easier.

That means the invention should be clear. Terms should be consistent. The source of truth should be available. Technical choices should be explained. Optional features should be marked.

The checklist should ask:

Would a patent attorney understand the invention quickly?

Are open questions marked?

Are uncertain details flagged instead of guessed?

Are assumptions listed?

Are claim support points easy to find?

This can reduce back-and-forth and improve quality.

PowerPatent is designed to make this process smoother by combining structured invention capture, AI-supported drafting, and real attorney review. See how it works here: https://powerpatent.com/how-it-works

Checklist item 42: Does the spec help future prosecution?

Patent prosecution is the process after filing, when the patent office reviews the application.

A strong spec can help during that process.

It can provide fallback support. It can explain variations. It can support claim changes. It can clarify terms.

Your checklist should ask:

Does the spec include useful alternative versions?

Does it support narrower claim paths?

Does it describe technical benefits?

Does it explain important terms?

Does it avoid unnecessary limits?

This is a place where attorney input is valuable because future prosecution strategy is not always obvious at drafting time.

Checklist item 43: Does the spec help future business use?

A patent may later matter in fundraising, licensing, partnerships, due diligence, or acquisition.

A messy spec can make the invention hard to understand.

A clear spec can help tell the technical moat story.

The checklist should ask:

Could a future investor understand what this patent is about?

Could a buyer see how it relates to the product?

Could the team explain why this filing matters?

Does the spec protect a business-critical area?

Patent quality is not only about filing. It is about long-term value.

Checklist item 44: Does the spec avoid sensitive disclosures that are not needed?

Because patent applications may be published, the team should be careful about what goes into the spec.

AI may include more detail than needed if it is given raw notes.

The checklist should ask:

Does the spec include customer names?

Does it include private performance numbers?

Does it include secrets not needed for support?

Does it include exact code paths, internal tool names, or private datasets?

Does it reveal trade secrets that may be better kept out?

This does not mean the spec should be vague. It means the team should be intentional.

Patent protection requires disclosure. But not every internal detail belongs in the filing.

Work with counsel on this balance.

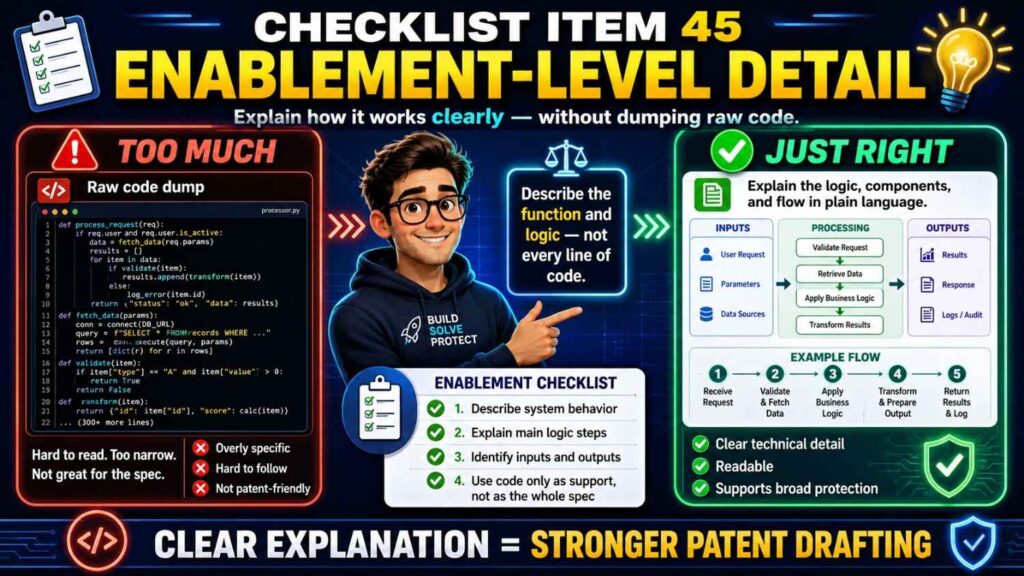

Checklist item 45: Does the spec describe enablement-level detail without dumping code?

For software and AI inventions, founders sometimes wonder if the spec needs code.

Usually, a patent spec explains the method and system rather than dumping raw code.

The checklist should ask:

Does the spec explain the logic clearly?

Does it describe the data flow?

Does it describe the model input and output?

Does it describe the action taken?

Does it avoid raw code unless there is a specific reason to include it?

AI can help turn engineering notes into clear process descriptions.

That is often more useful than pasting code into a draft.

Checklist item 46: Does the spec explain why the invention is technically useful?

The spec should help a reader see the technical value.

It does not need to sound like marketing.

It should tie the value to the mechanism.

For example:

“By using a risk score for a future time window, the system may schedule maintenance before a machine reaches a failure state.”

That explains value.

The checklist should ask:

What technical problem is reduced?

What technical result may improve?

What feature creates that result?

Does the spec connect them?

This is useful for patent strength and business clarity.

Checklist item 47: Does the spec avoid making the invention sound like only a business idea?

For software startups, this matters.

The spec should focus on technical steps, systems, data, models, signals, and outputs.

If the draft only says the invention improves business results, it may be too abstract.

For example, “increasing sales with AI” is not enough.

A stronger spec explains how the system receives data, creates a score or prediction, selects an action, and changes a technical process.

The checklist should ask:

Does the spec explain a technical method?

Does it describe system parts and interactions?

Does it avoid relying only on business benefits?

Does it show how the computer, device, model, or controller works?

A patent should protect a technical solution, not just a goal.

Checklist item 48: Does the spec make design-around harder?

A good spec should help the claims cover likely design-arounds.

A competitor may try to change one part while copying the core idea.

The checklist should ask:

Could a competitor use another sensor?

Could they move processing from cloud to edge?

Could they use a different model type?

Could they replace an alert with a control signal?

Could they use a different data format?

Could they change the user interface?

Does the spec describe these variations where appropriate?

This is not about claiming everything in the universe.

It is about thinking like a competitor before filing.

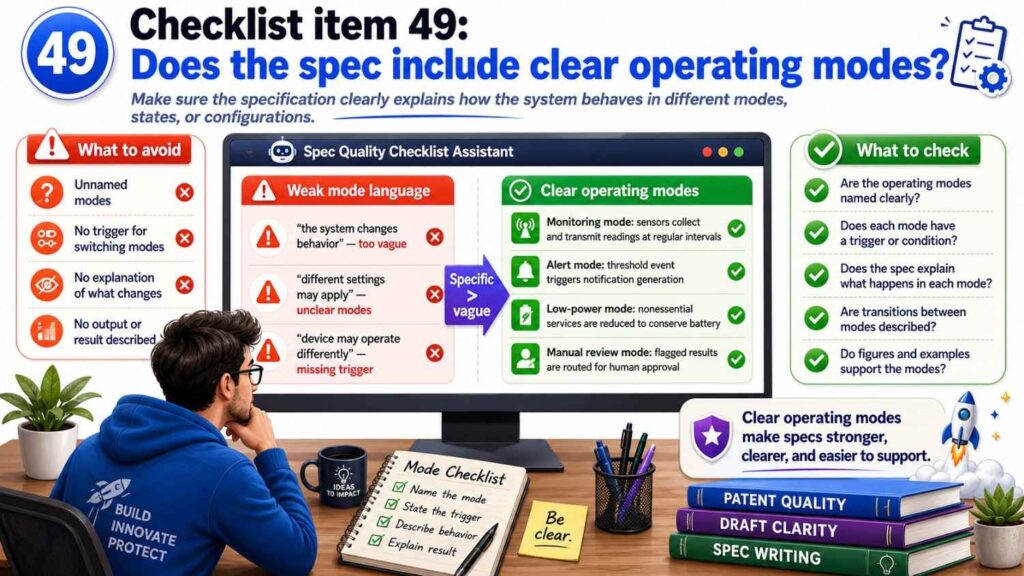

Checklist item 49: Does the spec include clear operating modes?

Many inventions have modes.

A normal mode.

A training mode.

A fallback mode.

A manual mode.

An automatic mode.

An offline mode.

A calibration mode.

An update mode.

AI drafts may mention these without structure.

The checklist should ask:

Are modes clearly named?

Does each mode have a purpose?

Are steps in each mode clear?

Does the spec avoid mixing modes?

Are optional modes framed as optional?

Modes can make a spec richer and more useful, but only if they are clear.

Checklist item 50: Does the spec explain edge cases or fallback behavior?

Some inventions are stronger when they handle edge cases.

For example, what happens if sensor data is missing? What happens if the model confidence is low? What happens if network access is lost? What happens if a safety threshold is crossed?

Not every invention needs every edge case. But useful fallback behavior should be described.

The checklist should ask:

Are important failure cases handled?

Does fallback behavior support the invention?

Does the spec describe safety or reliability features where relevant?

Does the fallback create a useful claim angle?

For deep tech products, fallback behavior can be part of the invention.

Do not let AI skip it if it matters.

Checklist item 51: Does the spec explain where processing happens?

This is very important for modern AI systems.

Processing may happen on a device, edge server, cloud server, robot, vehicle, gateway, phone, browser, or distributed system.

The location can affect privacy, latency, power, cost, and scope.

The checklist should ask:

Where does each main step happen?

Where does the model run?

Where is data stored?

Where are updates created?

Where are control signals sent from?

Does the spec support alternative locations?

Does the spec avoid cloud-only assumptions?

This is one of the most common AI drafting issues.

Make deployment clear.

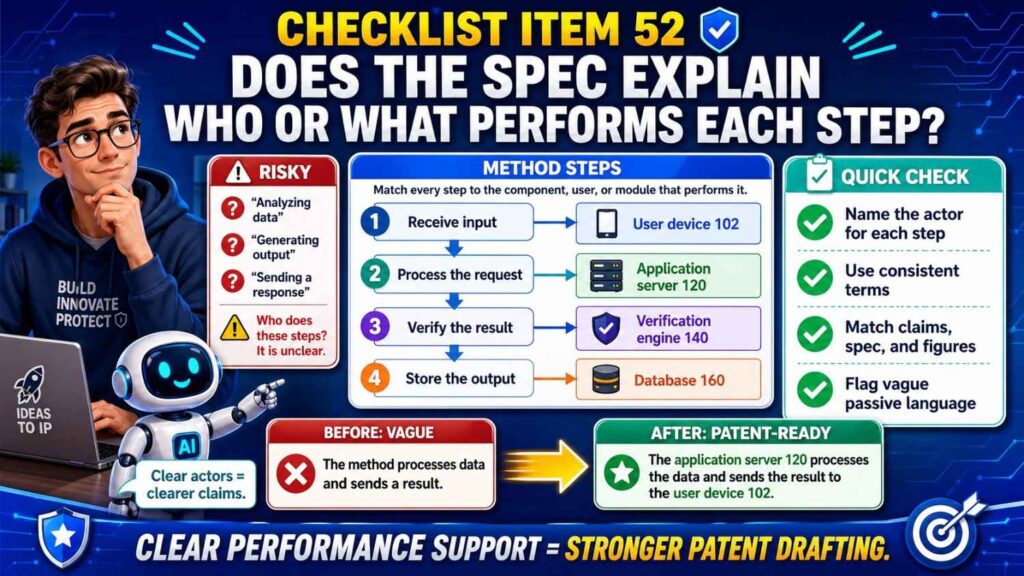

Checklist item 52: Does the spec explain who or what performs each step?

Actor confusion creates weak drafts.

The spec should make clear whether a step is performed by a processor, server, device, controller, model, user, robot, or system.

The checklist should ask:

Who receives the data?

Who processes it?

Who creates the output?

Who makes the decision?

Who takes action?

Does the actor match the claims?

Does the actor match the figures?

If the spec says the “system” performs everything, that may be okay in broad sections. But detailed sections should often identify the component that performs key steps.

Checklist item 53: Does the spec avoid inconsistent singular and plural language?

A draft may say “a sensor” in one place and “sensors” in another.

This may be fine if the invention supports one or more sensors.

But the spec should make that clear.

The checklist should ask:

Does the invention require one item or many?

Does the spec say “one or more” where helpful?

Does the figure show multiple parts while the text implies one required part?

Does the claim language match the intended scope?

This applies to models, processors, servers, devices, users, databases, scores, signals, and modules.

Small grammar choices can affect meaning.

Checklist item 54: Does the spec handle modules carefully?

AI drafts often use modules.

A scoring module.

A training module.

A control module.

A display module.

A feedback module.

Modules can be useful. But too many modules can make the spec feel artificial.

The checklist should ask:

Is each module real or just a drafting label?

Does the spec explain what each module does?

Are modules separate parts or logical functions?

Do module names match figures and claims?

Could modules be combined or distributed?

If modules are used, define them clearly.

Do not let AI create a maze of fake modules.

Checklist item 55: Does the spec support system, method, and device views?

Many patent applications include different claim types.

The spec should support them.

If the patent may include system claims, the spec should describe the system components.

If it may include method claims, the spec should describe steps.

If it may include device claims, the spec should describe structure.

If it may include software-related claims, the spec should describe instructions, memory, and processing where appropriate.

The checklist should ask:

Does the spec support each planned claim type?

Does it describe both components and actions?

Does it explain how the system performs the method?

This helps the attorney create a stronger claim set.

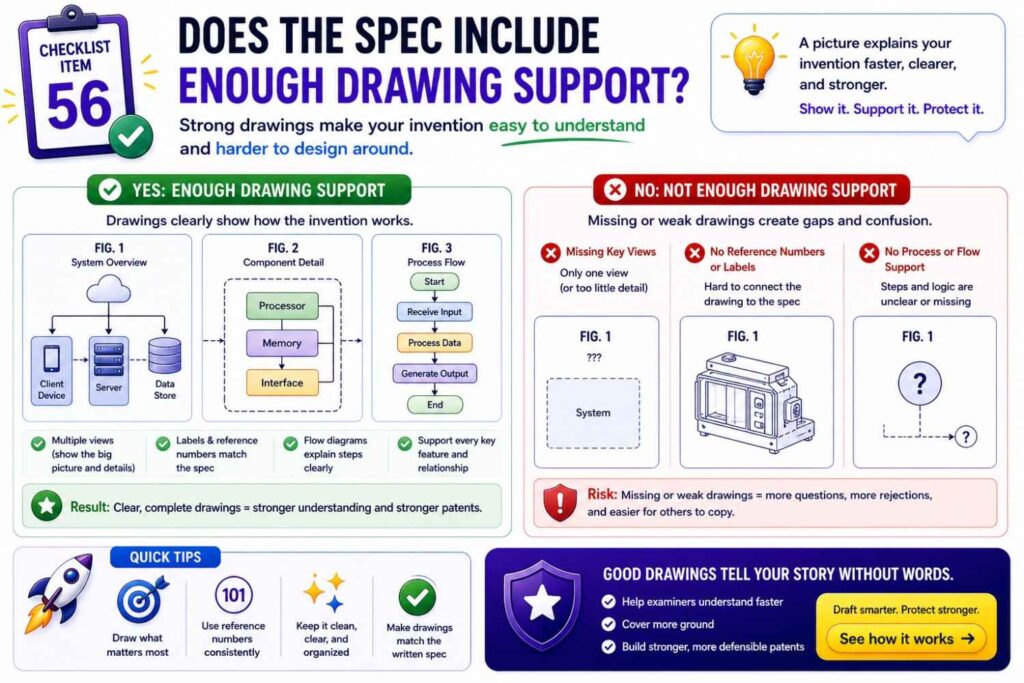

Checklist item 56: Does the spec include enough drawing support?

Sometimes AI-generated specs have text but weak figures.

Figures can be very important.

They help show system architecture, method flow, data flow, device structure, model pipeline, and user interaction.

The checklist should ask:

What figures are needed to explain the invention?

Does the spec refer to those figures?

Do figures show the main claim elements?

Are method steps shown where useful?

Are system components shown where useful?

Are model phases shown where useful?

A good figure set can make the spec clearer and easier to review.
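
A quick cross-check can show whether the text refers to every planned figure. The sketch below assumes figures are cited as "FIG. 1" or "Figure 1" and that the team has a list of planned figure numbers. It does not handle ranges such as "Figures 1 and 2," so treat the result as a first pass.

```python
import re

# Matches "FIG. 1" or "Figure 1" (any letter case) and captures the number.
FIGURE_REF = re.compile(r"\b(?:FIG\.|Figure)\s*(\d+)", re.IGNORECASE)

def figure_reference_report(spec_text, planned_figures):
    """Compare figure numbers cited in the text with the planned figure set."""
    referenced = {int(number) for number in FIGURE_REF.findall(spec_text)}
    planned = set(planned_figures)
    return {
        "never_referenced": sorted(planned - referenced),
        "not_in_figure_set": sorted(referenced - planned),
    }

sample = (
    "FIG. 1 shows a system. Figure 2 shows a method. "
    "As shown in FIG. 4, the controller sends a signal."
)

report = figure_reference_report(sample, planned_figures=[1, 2, 3])
print("Planned but never referenced:", report["never_referenced"])
print("Referenced but not planned:", report["not_in_figure_set"])
```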

Checklist item 57: Does the spec avoid drawing-based false limits?

Figures often show one example.

The spec should say that when appropriate.

If the figure shows one sensor, but the invention can use several sensors, the spec should explain that.

If the figure shows a robot, but the invention can apply to other machines, the spec should say so.

The checklist should ask:

Does the text frame figures as examples?

Does the spec avoid saying the invention is limited to the shown arrangement?

Are alternative arrangements described?

Figures should support the invention, not trap it.

Checklist item 58: Does the spec make the abstract easy to align?

The abstract should be short and accurate.

AI may write an abstract that introduces new features or uses different terms.

The checklist should ask:

Does the abstract match the spec?

Does it match the claims?

Does it use the same key terms?

Does it avoid unsupported features?

Does it avoid overpromising?

Review the abstract last.

It should be a clean snapshot of the invention.

Checklist item 59: Does the spec include clear definitions without over-defining?

Definitions can help.

But over-defining can narrow or complicate the spec.

The checklist should ask:

Which terms need definition?

Are definitions broad enough?

Do definitions match the intended scope?

Do definitions create unwanted limits?

Are common terms being defined unnecessarily?

For example, defining “sensor data” with examples may be helpful. But defining it as only image data may be too narrow if other sensors are possible.

Use definitions with care.

Checklist item 60: Does the spec avoid contradictions across sections?

A final contradiction pass is important.

AI drafts often contain small conflicts.

The summary may say one thing.

The detailed description may say another.

The figure text may say a third.

The examples may add a fourth.

The checklist should ask:

Does the system run locally, remotely, or both?

Is the model trained before use, during use, or both?

Is a feature required or optional?

Does the score mean the same thing everywhere?

Does the same actor perform the same step?

Do figures and text match?

Search for the main terms and read all uses.

Contradictions often hide far apart.
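
"Search for the main terms and read all uses" can be done with a small keyword-in-context listing. The sketch below prints each use of a term with some surrounding text so far-apart statements can be read side by side. The function name and the 40-character window are assumptions for illustration.

```python
import re

def term_uses(spec_text, term, context_chars=40):
    """Return (position, snippet) for each use of the term, with nearby text."""
    uses = []
    for match in re.finditer(re.escape(term), spec_text, flags=re.IGNORECASE):
        start = max(0, match.start() - context_chars)
        end = min(len(spec_text), match.end() + context_chars)
        # Collapse line breaks and extra spaces so each snippet prints on one line.
        snippet = " ".join(spec_text[start:end].split())
        uses.append((match.start(), snippet))
    return uses

sample = (
    "Summary: the risk score is computed on the device.\n"
    "Figure text: the sensor sends data to the controller.\n"
    "Detailed description: the Risk Score is computed by a cloud server."
)

for position, snippet in term_uses(sample, "risk score"):
    print(f"at {position}: ...{snippet}...")
```

Here the two snippets disagree about where the score is computed. The script does not know that. It only puts the two statements next to each other so a reviewer can see it.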

Checklist item 61: Does the spec answer “what is new?”

A patent spec should make the invention clear, even if it does not argue like a marketing page.

The checklist should ask:

Can we identify what is different from common approaches?

Does the spec show the technical improvement?

Does it explain the special step, structure, flow, or combination?

Does it avoid sounding like a generic system?

If the draft could describe any startup in the space, it is not specific enough.

The spec should carry the fingerprint of your invention.

Checklist item 62: Does the spec reflect inventor input?

AI should not replace inventor knowledge.

The checklist should ask:

Did an inventor review the draft?

Did the draft capture what the inventor believes is new?

Were wrong assumptions corrected?

Were missing technical details added?

Were optional versions confirmed?

Inventor review is one of the best ways to catch AI errors.

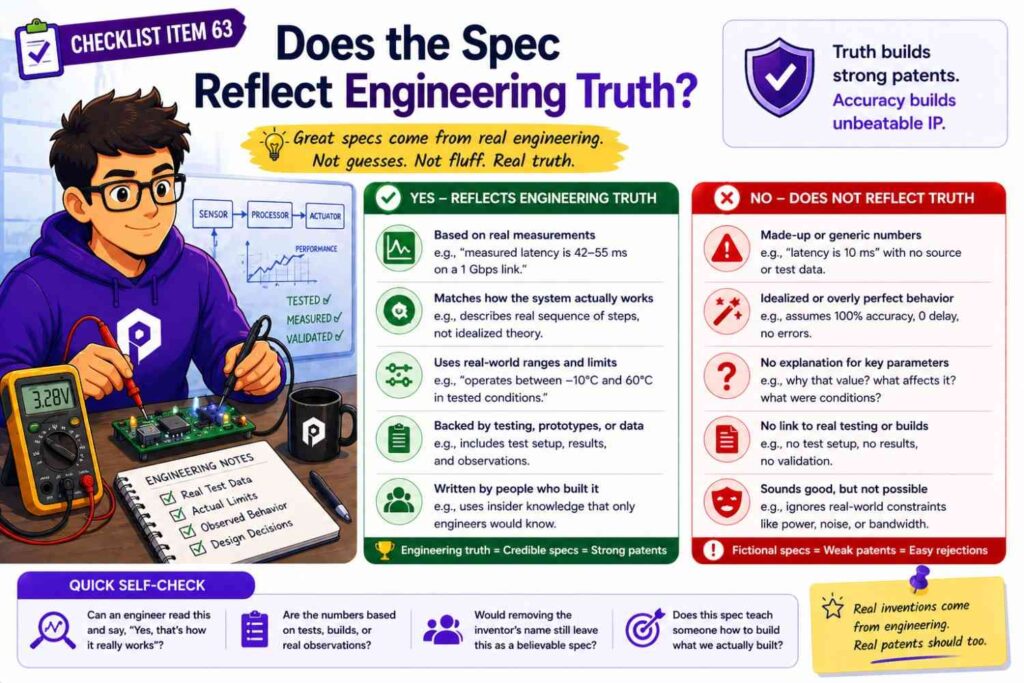

Checklist item 63: Does the spec reflect engineering truth?

A founder may know the business story. An engineer knows the technical details.

Both matter.

The checklist should ask:

Did an engineer confirm the architecture?

Did an engineer confirm data flow?

Did an engineer confirm model behavior?

Did an engineer confirm hardware structure?

Did an engineer flag fake or outdated parts?

A patent draft should not describe a system the engineering team would not recognize.

Checklist item 64: Does the spec reflect attorney judgment?

After founder and engineer review, attorney review matters.

The checklist should ask:

Has a patent attorney reviewed claim support?

Has the attorney checked for scope issues?

Has the attorney reviewed risky wording?

Has the attorney checked whether examples support the claims?

Has the attorney considered filing strategy?

AI can help draft. But attorney judgment helps protect.

That is why PowerPatent combines software with real patent attorney oversight. Founders get speed without being left alone with a risky AI draft. See how it works here: https://powerpatent.com/how-it-works

Checklist item 65: Does the spec support the filing deadline?

Sometimes a startup needs to file quickly.

The checklist should help prioritize.

If time is short, focus first on the highest-risk items.

Does the draft capture the real invention?

Does it support the main claims?

Does it include useful figures?

Does it avoid fake details?

Does it avoid false limits?

Does it describe the main flow?

Does it include enough examples?

Does attorney review confirm filing quality?

A checklist should not become a reason to freeze.

It should help the team move faster with more confidence.

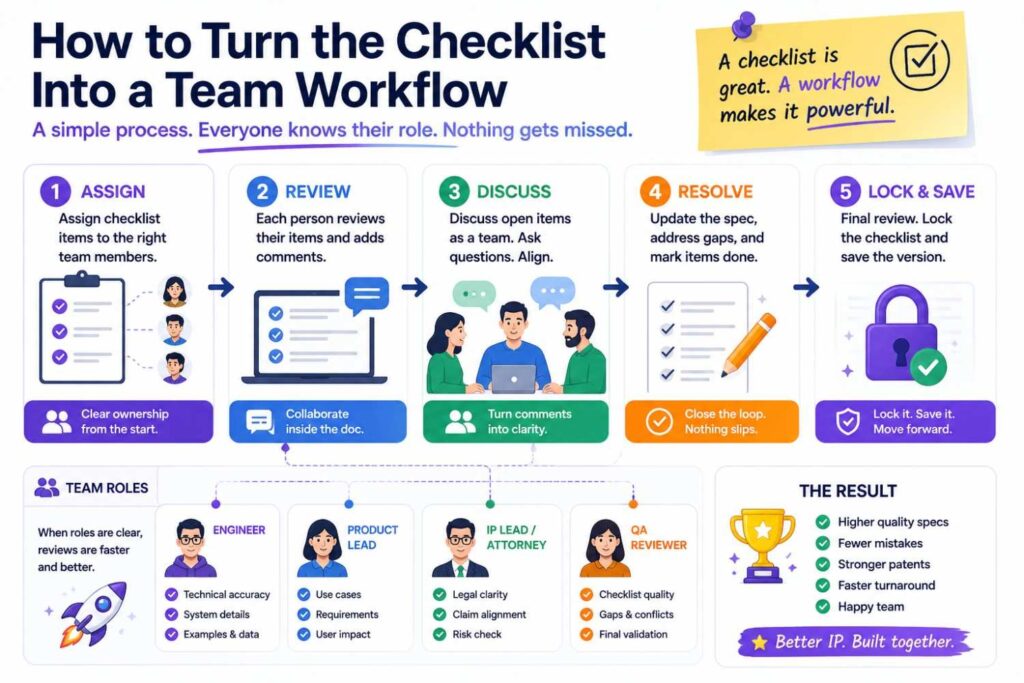

How to turn the checklist into a team workflow

A checklist only works if people use it.

Make it part of the drafting process.

After AI creates the first draft, do not send it straight to filing.

Run the founder review first.

Then run the engineering review.

Then run the claim and spec support review.

Then run the figure review.

Then run attorney review.

Each person should have a focused job.

The founder checks business fit and moat.

The engineer checks technical truth.

The patent attorney checks patent strength.

AI can help with search, summaries, support maps, and consistency scans.

This workflow reduces wasted time because each reviewer focuses on what they know best.

It also improves trust.

The founder does not have to become a patent attorney. The attorney does not have to guess the invention from thin notes. The engineer does not have to rewrite legal text.

Everyone contributes where they are strongest.

A simple founder review pass

The founder review pass should focus on the big picture.

Read the title, abstract, summary, first claim, and main figure.

Then answer:

Does this protect the thing that matters?

Does it match the product and roadmap?

Does it focus on the moat?

Does it keep side features from becoming the invention?

Does it avoid saying things we do not want to say?

Does it feel like a filing that supports our business?

This pass should be fast but serious.

A founder may catch a strategic issue that no one else sees.

For example, the draft may protect an internal workflow, but the real value is the external platform. Or it may focus on the first customer use case, while the company is moving toward a larger platform.

Patent drafts should support where the company is going, not only where it has been.

A simple engineer review pass

The engineer review pass should focus on truth.

Read the technical sections and figures.

Then answer:

Does this system work as described?

Are the parts real?

Are the steps in the right order?

Are the model inputs and outputs correct?

Are data sources correct?

Are deployment locations correct?

Are optional features marked correctly?

Are there old details from past versions?

Did AI invent anything?

Engineers should not worry about making the language sound legal.

They should mark issues plainly.

For example:

“This model does not train on live data.”

“The cloud server is optional.”

“We do not use GPS.”

“The output is a score, not a command.”

“The dashboard is not required.”

These comments are very valuable.

A simple attorney review pass

The attorney review pass should focus on protection.

The attorney should review whether the spec supports the desired claims, whether the claims are aimed at the right invention, whether the draft has unwanted limits, whether the examples are useful, whether the figures support the claims, and whether the filing strategy makes sense.

This review can also decide whether the spec should be broader, narrower, split into multiple filings, or supported with more examples.

AI can speed up the preparation, but attorney review is where judgment enters.

That is the right division of labor.

How AI can help run the checklist

AI can help with many checklist tasks.

It can summarize the draft.

It can list key terms.

It can find inconsistent names.

It can map claim elements to spec sections.

It can flag strong words like “always” and “must.”

It can compare figure labels to text.

It can list assumed features.

It can identify where the model input and output are described.

It can create a plain-language version of the claims.

These uses are helpful.

But AI should not be the final reviewer.

Use it like a sharp assistant.

Let it surface issues. Then have humans decide what matters.
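
Some of these scans do not even need a model. As a minimal sketch, the script below flags strong words in a draft. The words "always" and "must" come from the list above. The other words in `STRONG_WORDS` are assumptions added for illustration. A flag is not an error. It is a prompt for a human to decide whether the word is true and intended.

```python
import re

# "always" and "must" are from the checklist; the rest are assumed additions.
STRONG_WORDS = ["always", "never", "must", "only", "all"]

def flag_strong_words(spec_text, words=STRONG_WORDS):
    """Return (line_number, word, line) for each strong word found."""
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(word) for word in words) + r")\b",
        re.IGNORECASE,
    )
    flags = []
    for line_number, line in enumerate(spec_text.splitlines(), start=1):
        for match in pattern.finditer(line):
            flags.append((line_number, match.group(1).lower(), line.strip()))
    return flags

sample = """The data always remains on the device.
In some examples, the score may be sent to a server.
The model must be retrained every day."""

for line_number, word, line in flag_strong_words(sample):
    print(f"line {line_number}: '{word}' -> {line}")
```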

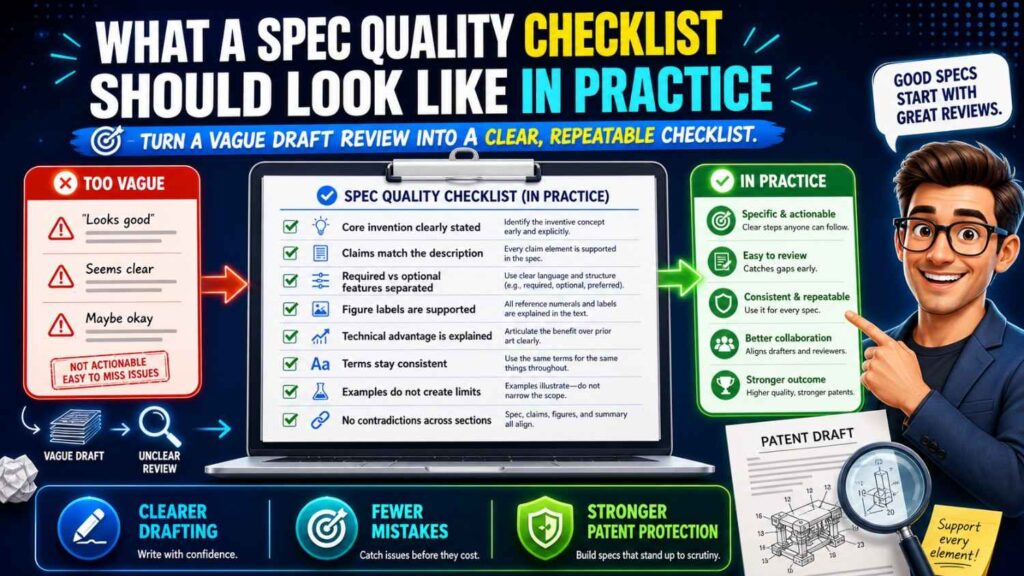

What a spec quality checklist should look like in practice

The checklist should be simple enough to use.

If it is too long, no one will finish it.

You can keep a master checklist and a shorter working checklist.

The working checklist may include the most important questions:

Does the draft match the invention source of truth?

Does it support the claims?

Are key terms consistent?

Are required and optional features separated?

Are fake AI-added features removed?

Does the main flow make sense?

Are model inputs and outputs clear?

Are training and use phases separated?

Do the figures match the spec?

Are examples useful and true?

Are broad terms supported?

Are risky words checked?

Did founder, engineer, and attorney review happen?

That short version may catch most serious problems.

The longer version can be used for important filings or final review.
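
If your team wants to track the working checklist in a script instead of a document, one possible shape is sketched below. The class name, field names, and sample note are assumptions for illustration. Only the questions come from the list above.

```python
from dataclasses import dataclass
from typing import Optional

# The working checklist questions from this guide.
WORKING_QUESTIONS = [
    "Does the draft match the invention source of truth?",
    "Does it support the claims?",
    "Are key terms consistent?",
    "Are required and optional features separated?",
    "Are fake AI-added features removed?",
    "Does the main flow make sense?",
    "Are model inputs and outputs clear?",
    "Are training and use phases separated?",
    "Do the figures match the spec?",
    "Are examples useful and true?",
    "Are broad terms supported?",
    "Are risky words checked?",
    "Did founder, engineer, and attorney review happen?",
]


@dataclass
class ChecklistItem:
    """One checklist question with a review result (hypothetical structure)."""
    question: str
    passed: Optional[bool] = None  # None means not reviewed yet
    note: str = ""


def new_checklist():
    """Create a fresh, unreviewed checklist for one draft."""
    return [ChecklistItem(q) for q in WORKING_QUESTIONS]


def open_items(checklist):
    """Return items that failed or have not been reviewed yet."""
    return [item for item in checklist if item.passed is not True]


checklist = new_checklist()
checklist[0].passed = True
checklist[4].passed = False
checklist[4].note = "Draft mentions GPS. We do not use GPS."

for item in open_items(checklist):
    status = "FAIL" if item.passed is False else "TODO"
    print(f"[{status}] {item.question} {item.note}".rstrip())
```

Keeping "not reviewed" separate from "failed" makes it harder for a skipped question to look like a pass.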

Example checklist for an AI software patent

Suppose your startup built a tool that routes customer support tickets to the right engineer.

The source of truth says the invention receives a ticket, creates a ticket embedding, compares it to engineer skill profiles, and assigns or recommends an engineer.

The checklist would ask whether the spec explains tickets, embeddings, skill profiles, comparison, assignment, and recommendation.

It would check whether the draft uses “engineer profile,” “skill profile,” and “user profile” consistently, or whether the terms drift so that a reader cannot tell if they mean the same thing.

It would check whether the system assigns tickets automatically or recommends a person for approval.

It would check whether the model is trained, updated, or only used.

It would check whether the figure shows the ticket input, embedding step, profile comparison, and output.

It would check whether the claims focus on skill-based routing instead of a generic dashboard.

It would check whether optional features like a manager approval screen are described as optional.

This review would catch many issues before filing.
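
The term check in this example is easy to rough out in code. The sketch below counts how often each profile term appears in a draft. The function name and the sample draft are assumptions for illustration, and the plural handling is deliberately simple.

```python
import re
from collections import Counter


def count_term_variants(text, variants):
    """Count occurrences of each term variant, ignoring case and a trailing 's'."""
    lowered = text.lower()
    counts = Counter()
    for variant in variants:
        pattern = r"\b" + re.escape(variant.lower()) + r"s?\b"
        counts[variant] = len(re.findall(pattern, lowered))
    return counts


# Made-up draft text for the ticket routing example.
draft = (
    "The system compares the ticket embedding to each skill profile. "
    "The engineer profile with the closest match is selected. "
    "Skill profiles may be updated when a user profile changes."
)

variants = ["skill profile", "engineer profile", "user profile"]
counts = count_term_variants(draft, variants)

for term, n in counts.items():
    print(f"{term}: {n}")

used = [term for term, n in counts.items() if n > 0]
if len(used) > 1:
    print("Review: more than one profile term is in use. Confirm each is intended.")
```

A count cannot tell you which term is correct. It only shows where the draft uses more than one name, so a reviewer can decide whether those names refer to different things.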

Example checklist for an AI robotics patent

Suppose your startup built a robot that avoids unsafe zones by predicting route risk.

The checklist would ask whether the spec explains sensor data, map data, route segments, risk model, route risk score, and path change.

It would check whether the robot makes the decision locally or receives help from an edge server.

It would check whether GPS is required or optional.

It would check whether the figures show the robot, map, sensors, model, score, and path update.

It would check whether the score direction is clear, such as whether a higher score means more risk or less.

It would check whether user approval is required.

It would check whether the spec covers slowing down, rerouting, stopping, or sending an alert if those are useful actions.

The goal is to make the robot invention clear and protectable, not just say “AI robot navigation.”
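
A rough way to run the spec and figure checks together is sketched below. It looks for each key element in both the spec text and the figure labels. The sample text, the figure labels with reference numbers, and the function name are all assumptions for illustration. Simple substring matching like this will miss synonyms, so treat the output as a starting point.

```python
def coverage_report(elements, spec_text, figure_labels):
    """For each element, record whether it appears in the spec and in the figures."""
    spec = spec_text.lower()
    labels = [label.lower() for label in figure_labels]
    report = {}
    for element in elements:
        key = element.lower()
        report[element] = {
            "in_spec": key in spec,
            "in_figures": any(key in label for label in labels),
        }
    return report


# Key elements from the robotics example in this guide.
elements = [
    "sensor data",
    "map data",
    "route segment",
    "risk model",
    "route risk score",
    "path change",
]

# Made-up spec text and figure labels.
spec_text = (
    "The robot receives sensor data and map data. "
    "A risk model assigns a route risk score to each route segment."
)
figure_labels = [
    "102 sensor data",
    "104 map data",
    "106 risk model",
    "108 route risk score",
    "110 path change",
]

report = coverage_report(elements, spec_text, figure_labels)
gaps = [
    name for name, found in report.items()
    if not (found["in_spec"] and found["in_figures"])
]

for name in gaps:
    print(f"Check '{name}': {report[name]}")
```

In this made-up case, the route segment is missing from the figures and the path change is missing from the spec text. Those are the spots a reviewer would look at first.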

Example checklist for an AI health tool

Suppose your startup built a tool that detects changes in wearable data and sends alerts.

The checklist would be extra careful with language.

It would ask whether the spec says “alert” instead of “diagnosis” if diagnosis is not part of the product.

It would check whether the system supports a user, clinician, or care team.

It would check whether raw data stays on device or is sent to a server.

It would check whether the model output is a risk indicator, change pattern, or alert level.

It would check whether the figures show the data source, model, output, and alert path.

It would check whether the draft overpromises medical results.

This kind of review helps keep the spec accurate and aligned with the product.

Example checklist for an edge AI patent

Suppose your startup built a way to reduce model update size for edge devices.

The checklist would ask whether the spec explains the device state, model update need, update selection, compression or packaging, transfer, and edge update.

It would check whether the cloud is required or optional.

It would check whether the update package is clearly defined.

It would check whether the system sends full models or partial changes.

It would check whether the spec explains why update size is reduced.

It would check whether figures show the update flow.

It would check whether fallback positions include device type, update trigger, package format, and network condition.

This makes the spec much stronger than a generic “AI update system” draft.

How often should you update the checklist?

Your checklist should evolve.

After each filing, ask what issues came up.

Did AI add fake details?

Did engineers find outdated architecture in the draft?

Did attorneys find unsupported claim terms?

Did figures fail to match the spec?

Did the draft overuse broad language?

Add those lessons to the checklist.

Over time, your team will build a better process.

This is how startups get faster without getting sloppy.

The first checklist may be simple. That is fine.

The key is to use it and improve it.

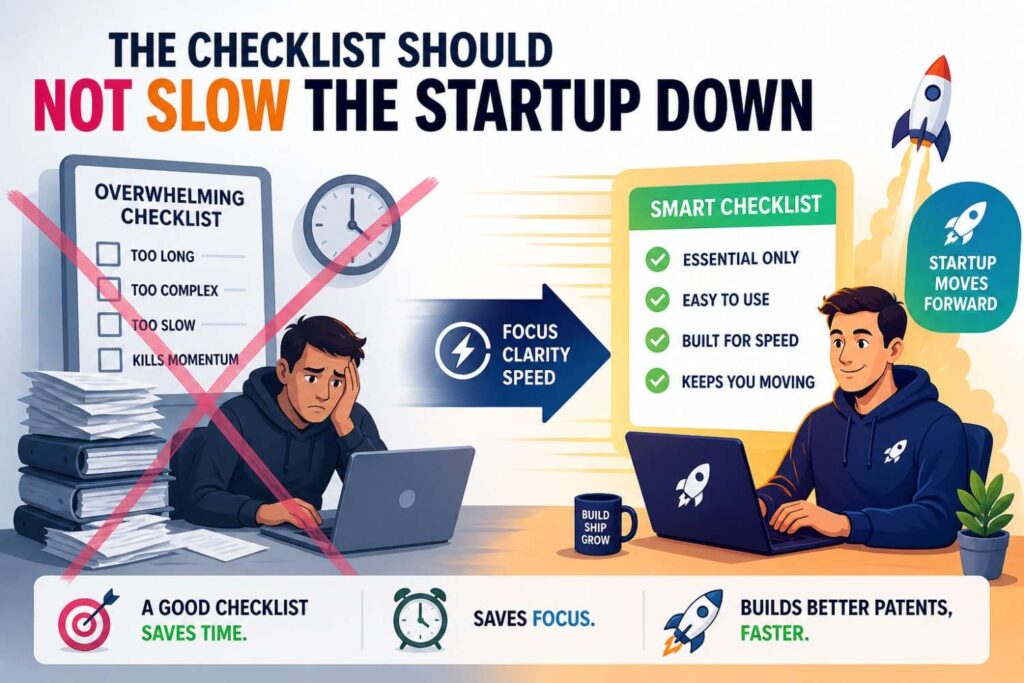

The checklist should not slow the startup down

Some founders worry that checklists create process drag.

A good checklist does the opposite.

It saves time.

It prevents long back-and-forth.

It helps reviewers know what to look for.

It catches problems before filing.

It gives attorneys better inputs.

It lets AI help without taking over.

The wrong way is to read a 40-page AI draft with no plan and hope the issues stand out.

The better way is to run focused checks.

That is faster and safer.

The PowerPatent approach

PowerPatent helps startups use AI for patent work without relying on AI alone.

The platform is designed to help founders capture inventions, organize technical details, draft faster, and work with real patent attorneys.

That combination matters.

AI brings speed.

Structured workflows bring clarity.

Attorney oversight brings judgment.

Together, they help startups avoid the common traps of AI patent drafting: fake details, narrow drafts, unsupported claims, inconsistent terms, weak examples, and filings that miss the real moat.

If your team is building something technical and wants to protect it without getting stuck in old-school patent delays, PowerPatent can help.

See how it works here: https://powerpatent.com/how-it-works

Final thoughts

AI can make patent drafting much faster.

But speed is not enough.

A patent spec must be clear, true, complete, and aligned with the claims and figures. It must describe the real invention, not a generic version. It must support the claim strategy. It must avoid fake details and false limits. It must give enough examples and alternatives to protect the business as the startup grows.

A spec quality checklist helps make that happen.

Start with a plain invention source of truth. Check the draft against it. Review term consistency. Separate required and optional features. Confirm technical flow. Check model inputs and outputs. Match the spec to the figures. Map the claims to the spec. Remove risky language. Bring in founder, engineer, and attorney review.

That is how startups can use AI drafting with more confidence.

You do not need to choose between speed and quality.

With the right checklist, you can have both.

PowerPatent helps founders turn real technical work into stronger patent filings with smart software and real attorney oversight. Learn how it works here: https://powerpatent.com/how-it-works
